Under review as a conference paper at ICLR 2019

(a) (b)

Figure 1: (a)

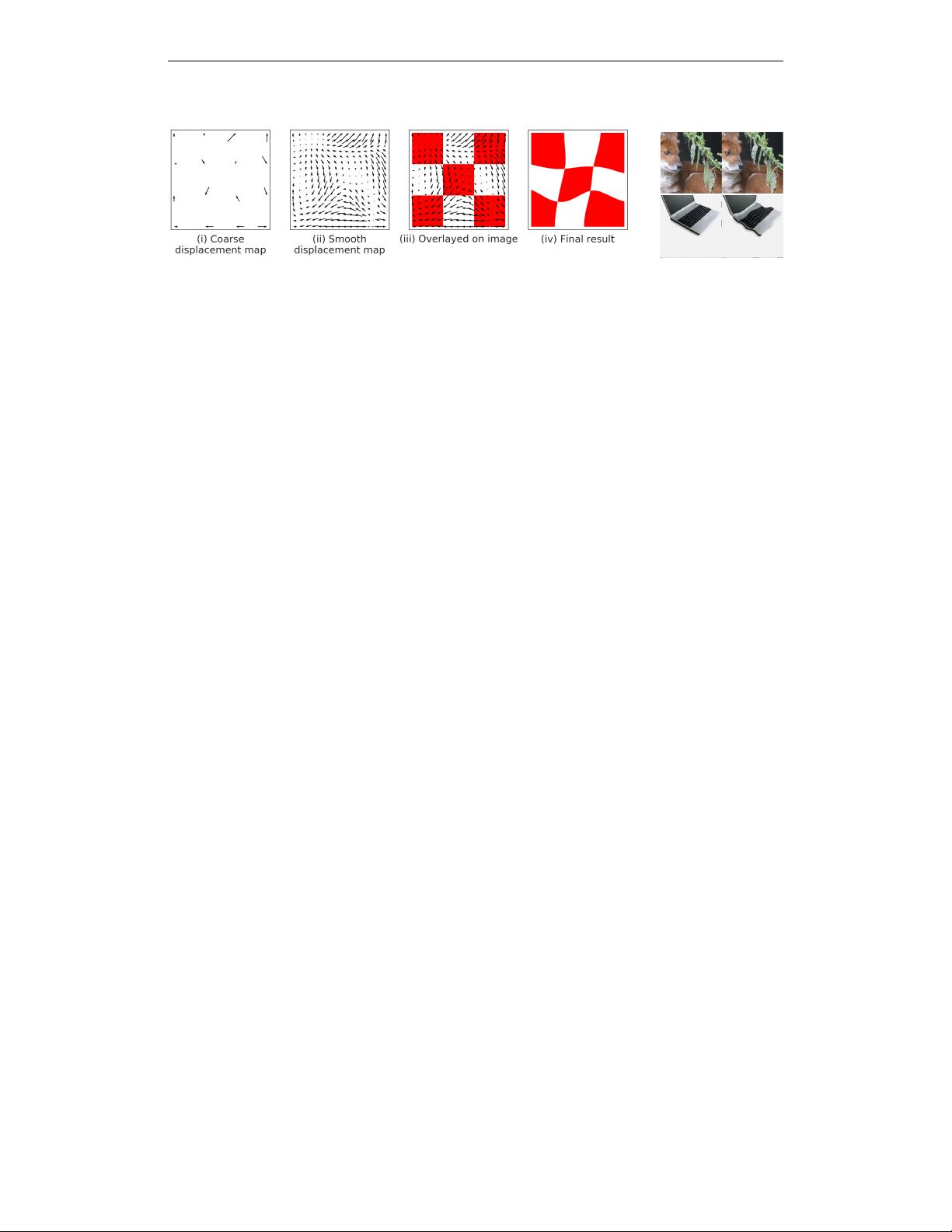

Generating deformed images

: To randomly deform an image we: (i) Start with a fixed

evenly spaced grid of control points (here 4x4 control points) and then choose a random source for

each control point within a neighborhood of the point; (ii) we then smooth the resulting vector field

using thin plate interpolation; (iii) vector field overlayed on original image: the value in the final

result at the tip of an arrow is computed using bilinear interpolation of values in a neighbourhood

around the tail of the arrow in the original image; (iv) the final result. (b)

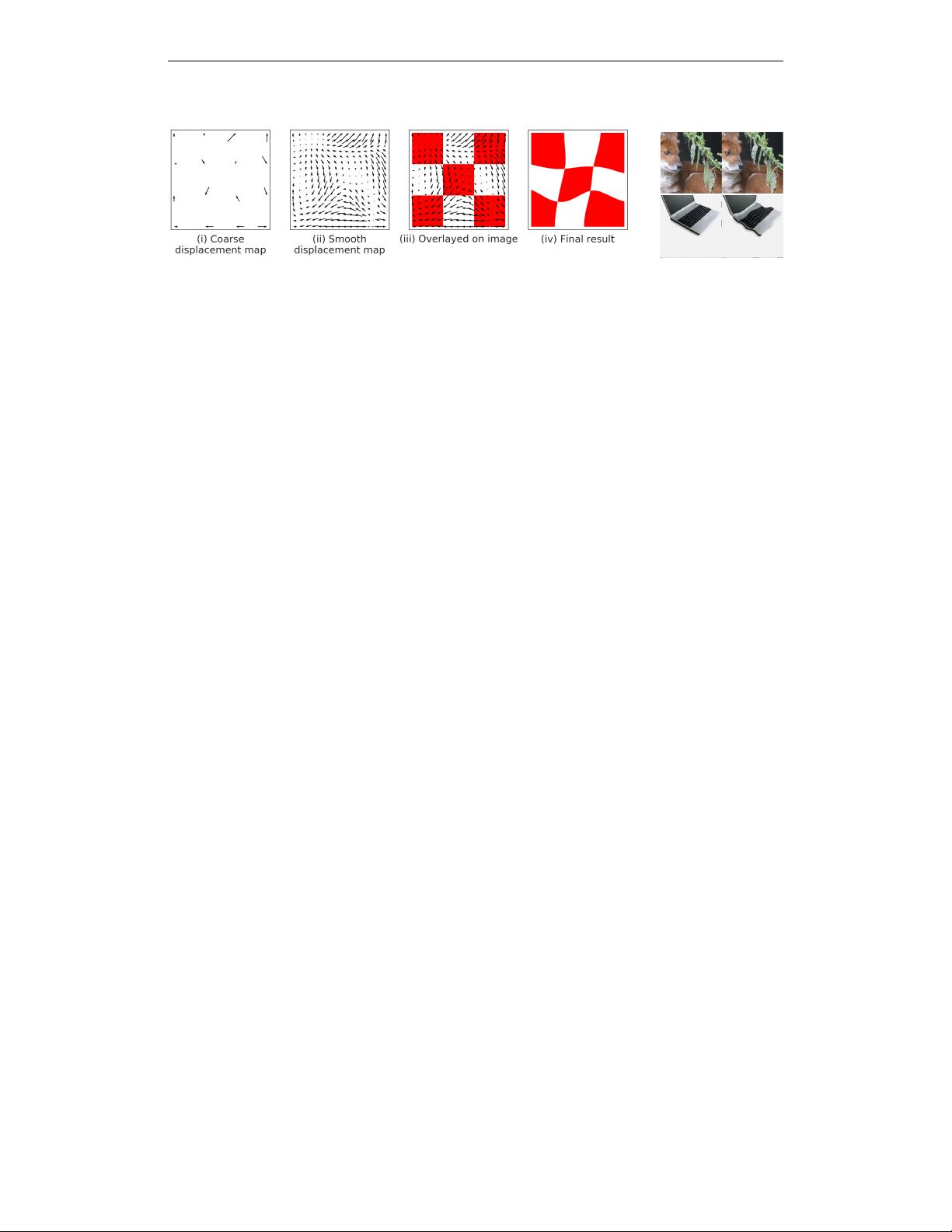

Examples of deformed

ImageNet images.

left: original images, right: deformed images. While the images have changed

significantly, for example under the

L

2

metric, they would likely be given the same label by a human.
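The four steps in the caption of Figure 1(a) can be sketched in NumPy as below. This is a minimal illustration under our own assumptions, not the authors' code: the square sampling neighborhood, the `radius` parameter, the clamping at image borders, single-channel images, and the helper names `tps_fit`, `bilinear_sample` and `random_deform` are all hypothetical. The thin-plate spline uses the standard kernel $U(r) = r^2 \log r$ plus an affine term.

```python
import numpy as np


def tps_fit(ctrl, values):
    """Fit a 2-D thin-plate spline through (ctrl[i], values[i]).

    ctrl: (n, 2) control-point coordinates; values: (n, d) targets.
    Returns a function mapping (m, 2) query points to (m, d) values.
    """
    n = ctrl.shape[0]

    def kernel(r):
        # Thin-plate kernel U(r) = r^2 log r, with U(0) = 0 by continuity.
        with np.errstate(divide="ignore", invalid="ignore"):
            u = r ** 2 * np.log(r)
        return np.where(r > 0, u, 0.0)

    # Solve [[K, P], [P^T, 0]] [w; a] = [values; 0], affine part P = [1, y, x].
    P = np.hstack([np.ones((n, 1)), ctrl])
    A = np.zeros((n + 3, n + 3))
    A[:n, :n] = kernel(np.linalg.norm(ctrl[:, None] - ctrl[None], axis=-1))
    A[:n, n:] = P
    A[n:, :n] = P.T
    rhs = np.vstack([values, np.zeros((3, values.shape[1]))])
    coef = np.linalg.solve(A, rhs)
    w, a = coef[:n], coef[n:]

    def evaluate(pts):
        r = np.linalg.norm(pts[:, None] - ctrl[None], axis=-1)
        return kernel(r) @ w + np.hstack([np.ones((len(pts), 1)), pts]) @ a

    return evaluate


def bilinear_sample(img, ys, xs):
    """Sample a (H, W) image at real-valued (ys, xs), clamping to the border."""
    H, W = img.shape
    ys = np.clip(ys, 0, H - 1)
    xs = np.clip(xs, 0, W - 1)
    y0, x0 = np.floor(ys).astype(int), np.floor(xs).astype(int)
    y1, x1 = np.minimum(y0 + 1, H - 1), np.minimum(x0 + 1, W - 1)
    wy, wx = ys - y0, xs - x0
    top = (1 - wx) * img[y0, x0] + wx * img[y0, x1]
    bottom = (1 - wx) * img[y1, x0] + wx * img[y1, x1]
    return (1 - wy) * top + wy * bottom


def random_deform(img, grid=4, radius=2.0, rng=None):
    """Hypothetical random deformation following steps (i)-(iv) of Figure 1(a)."""
    rng = np.random.default_rng(rng)
    H, W = img.shape
    # (i) Evenly spaced grid of control points (row, col) ...
    gy, gx = np.meshgrid(np.linspace(0, H - 1, grid),
                         np.linspace(0, W - 1, grid), indexing="ij")
    ctrl = np.stack([gy.ravel(), gx.ravel()], axis=1)
    # ... each with a random source offset in a square neighborhood (assumption).
    offsets = rng.uniform(-radius, radius, size=ctrl.shape)
    # (ii) Smooth the sparse offsets into a dense field with a thin-plate spline.
    field = tps_fit(ctrl, offsets)
    yy, xx = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    pix = np.stack([yy.ravel(), xx.ravel()], axis=1).astype(float)
    src = pix + field(pix)
    # (iii) Each output pixel (arrow tip) takes the bilinearly interpolated
    # value at its source location (arrow tail) in the original image.
    out = bilinear_sample(img, src[:, 0], src[:, 1])
    # (iv) The final deformed image.
    return out.reshape(H, W)
```

Because the spline coefficients are linear in the control-point offsets, scaling `radius` (with the same random draws) scales the whole displacement field, and hence its gradient, proportionally, which is one simple way to modulate deformation strength.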

Empirical investigations. Previous empirical investigations of these phenomena in CNNs include the work of Lenc & Vedaldi (2015), which focused on a more limited set of invariances such as global affine transformations. More recently, Fawzi & Frossard (2015) and Kanbak et al. (2017) studied the robustness of networks to adversarial geometric transformations. In particular, these studies looked at the worst-case sensitivity of the output to such transformations and found that CNNs can indeed be quite sensitive to particular geometric transformations (a phenomenon that can be mitigated by augmenting the training sets). However, this line of work does not address how deformation sensitivity is achieved in general in the first place, nor how it changes over the course of training. In addition, these investigations have been restricted to a limited class of deformations, a limitation we seek to remedy here.

2 METHODS

2.1 DEFORMATION SENSITIVITY

In order to study how CNN representations are affected by image deformations, we first need a controllable source of deformation. Here, we choose a flexible class of local deformations of image coordinates, i.e., maps $\tau: \mathbb{R}^2 \to \mathbb{R}^2$ such that $\|\nabla \tau\|_\infty < C$ for some $C$, similar to Mallat (2012).
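As a concrete reading of the constraint $\|\nabla \tau\|_\infty < C$, the sketch below estimates its left-hand side for a displacement field sampled on a pixel grid. Two choices are our assumptions rather than the paper's: we follow Mallat (2012) in treating τ as a displacement field (so the deformed image is $x \mapsto f(x - \tau(x))$), and we measure each Jacobian with the spectral norm before taking the supremum over pixels. The helper name `grad_sup_norm` is hypothetical.

```python
import numpy as np


def grad_sup_norm(tau, spacing=1.0):
    """Estimate sup_x ||grad tau(x)||_2 for a displacement field on a regular grid.

    tau: (H, W, 2) array; tau[i, j] is the displacement at pixel (i, j).
    Jacobian entries are central differences (one-sided at the borders).
    """
    rows = []
    for k in range(2):
        # np.gradient returns [d tau_k / d axis0, d tau_k / d axis1].
        d_dy, d_dx = np.gradient(tau[..., k], spacing)
        rows.append(np.stack([d_dy, d_dx], axis=-1))
    jac = np.stack(rows, axis=-2)  # (H, W, 2, 2): jac[..., k, :] = grad of tau_k
    # Largest singular value of each 2x2 Jacobian, then the max over all pixels.
    return float(np.linalg.norm(jac, ord=2, axis=(-2, -1)).max())
```

For the linear field $\tau(x) = 0.1\,x$ the estimate is 0.1 up to rounding, a constant field (a pure translation) gives 0, and because the estimate is linear in τ, multiplying a field by α multiplies the estimate by $|\alpha|$.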

We choose this class for several reasons. First, it subsumes or approximates many of the canonical forms of image deformation we would want to be robust to, including:

• Pose: small shifts in pose or location of subparts

• Affine transformations: translation, scaling, rotation or shear

• Thin-plate spline transforms

• Optical flow (Roth & Black, 2007; Rosenbaum et al., 2013)
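For the affine case in the list above, the bound can be computed in closed form. Assuming the displacement-field reading of τ (our assumption, following Mallat, 2012), an affine map $x \mapsto Ax + b$ has displacement $\tau(x) = (A - I)x + b$ with the constant Jacobian $A - I$, so $\|\nabla \tau\|_\infty = \|A - I\|_2$, where the spectral norm is again our choice. The sketch below, with hypothetical helper names, evaluates this for a few transformations.

```python
import numpy as np


def affine_grad_norm(A):
    """||grad tau||_inf for the affine deformation x -> A x + b.

    The displacement tau(x) = (A - I) x + b has the constant Jacobian A - I,
    so the supremum over x is just its spectral norm; b plays no role.
    """
    A = np.asarray(A, dtype=float)
    return float(np.linalg.norm(A - np.eye(2), ord=2))


def rotation(theta):
    """2x2 rotation matrix by angle theta (radians)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])


for name, A in [
    ("translation", np.eye(2)),
    ("scaling by 1.2", 1.2 * np.eye(2)),
    ("shear k = 0.5", [[1.0, 0.5], [0.0, 1.0]]),
    ("rotation by 0.3 rad", rotation(0.3)),
]:
    print(f"{name}: {affine_grad_norm(A):.4f}")
```

Under this reading, translations contribute nothing to the bound, isotropic scaling by $s$ gives $|s - 1|$, a shear with factor $k$ gives $|k|$, and a rotation by θ gives $2|\sin(\theta/2)|$; hence, for $C \le 2$, the constraint admits rotations with $|\theta| < 2\arcsin(C/2)$.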

We show examples of several of these in Section 2 of the supplementary material.

This class also allows us to modulate the strength of image deformations, which we deploy to investigate how task demands are met by CNNs. Furthermore, this class of deformations approximates most of the commonly used methods of data augmentation for object recognition (Simard et al., 2003; Wong et al., 2016; Cireşan et al., 2010).

While it is in principle possible to explore finer-grained distributions of deformation (e.g., choosing adversarial deformations to maximally shift the representation), we believe our approach offers good coverage of the space and a reasonable first-order approximation to the class of natural deformations. We leave the study of richer transformations, such as those requiring a renderer to produce or those chosen adversarially (Fawzi & Frossard, 2015; Kanbak et al., 2017), as future work.
