2.2. Knowledge Distillation

Knowledge Distillation (KD) which transfers knowledge from

the TM to the SM is one of the effective methods to train light-

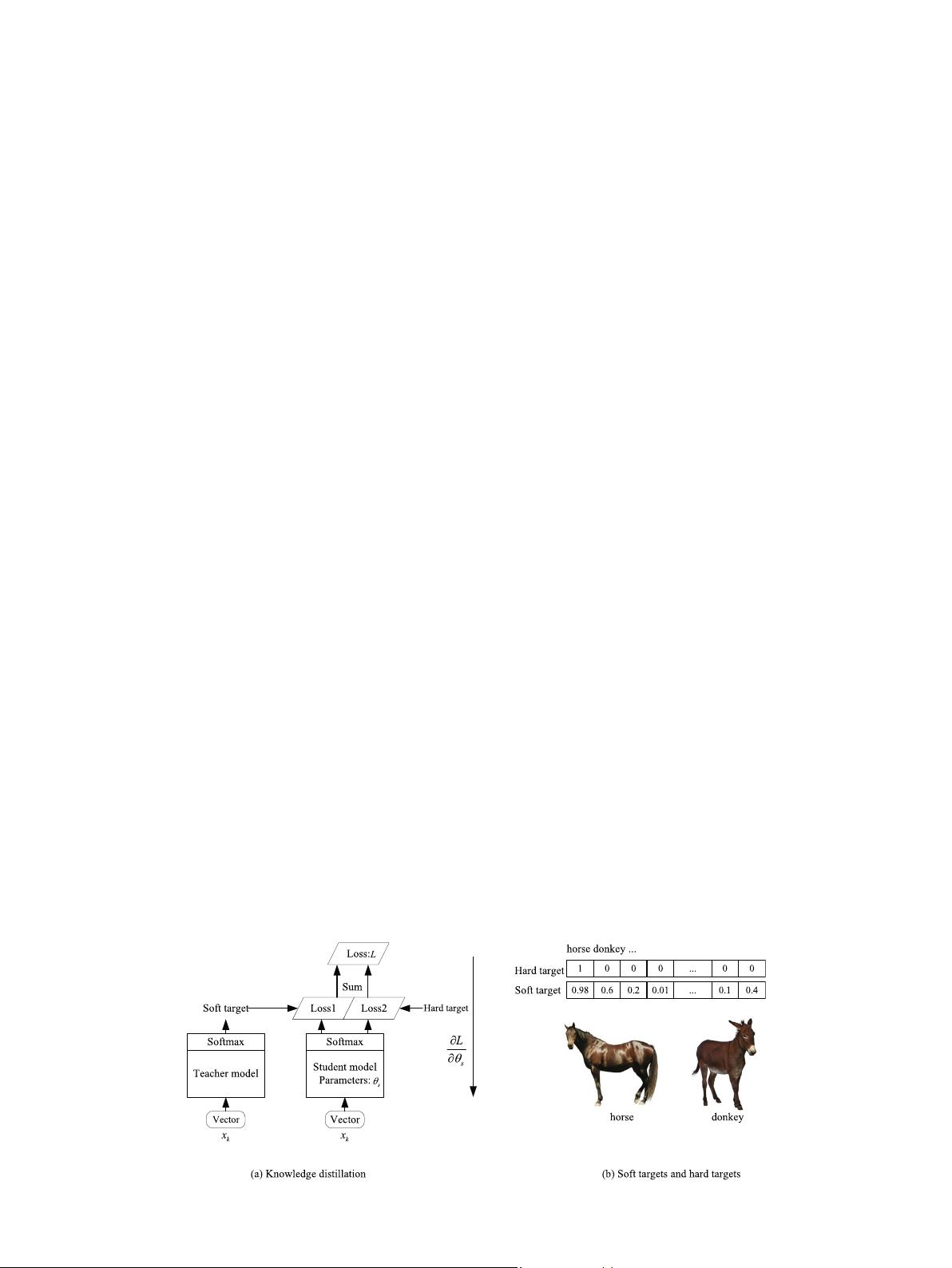

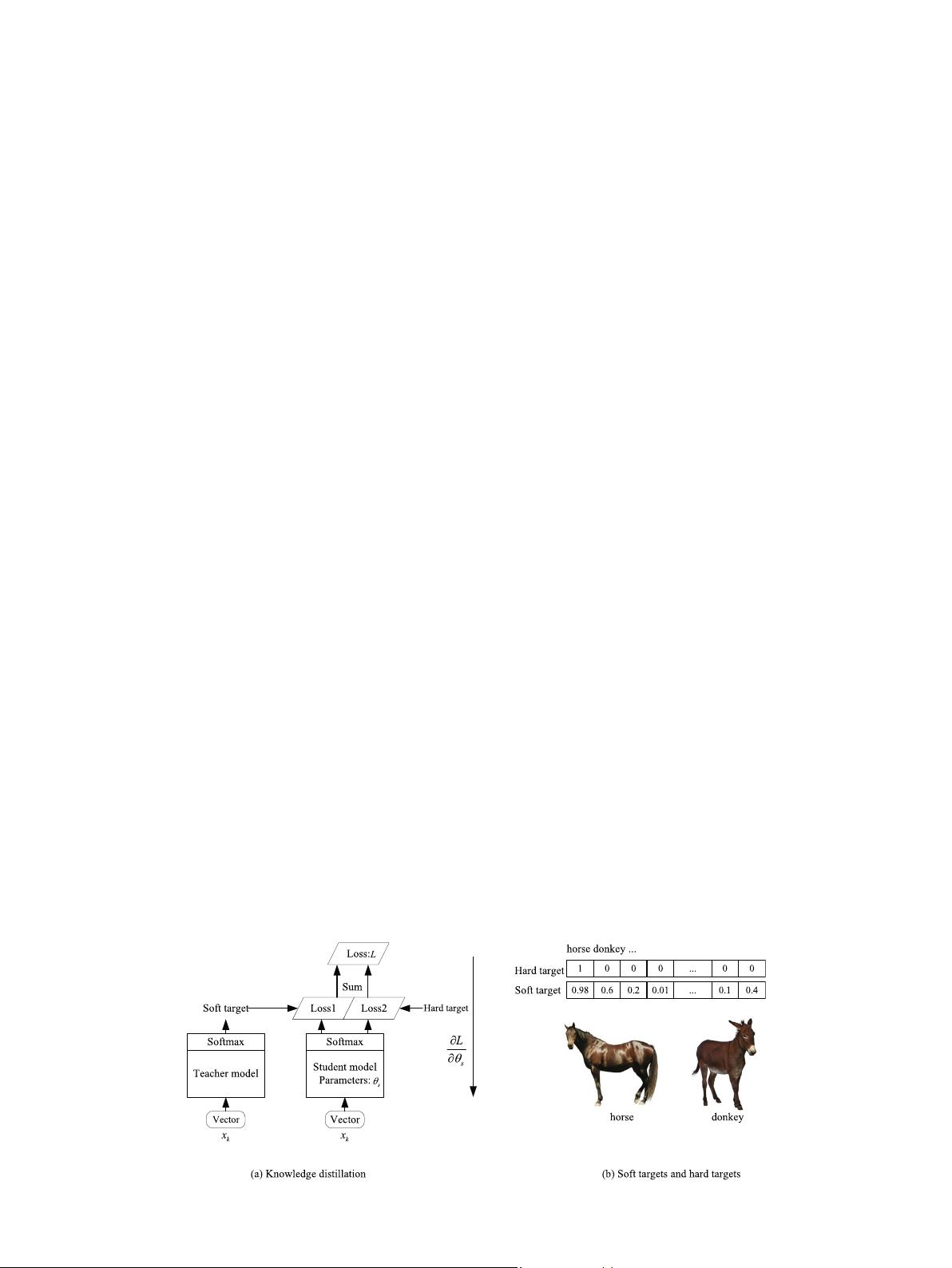

weight CNN models. As shown in Fig. 1(a), the outputs of the

trained TM are utilized as ‘soft targets’ and the true labels of sam-

ples are employed as ‘hard targets’ for the training of the SM. The

soft targets have high entropy, and they provide much more infor-

mation and much less variance in the gradient between training

samples than hard targets, so the SM can be trained on much less

data than the TM, and it can achieve higher performance than the

SM trained on hard targets [24]. For the example shown in Fig. 1

(b), the inter-class similarity is ignored by the hard target, and

the same distance between classes is considered. Compared with

the hard target, the inter-class similarity learned by the TM is

added into the soft target, such as the similarity between horse

and donkey, so the soft target provides much more information

than the hard target.
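The soft/hard target distinction can be made concrete with a small Python sketch of the kind of joint loss used by Hinton et al. [24]: a temperature-scaled KL divergence between the TM and SM output distributions plus cross-entropy with the true label. The temperature, the weight `alpha`, the class names, and the logits are illustrative assumptions, not values taken from this paper.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax with a temperature; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(p):
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions with q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def kd_loss(student_logits, teacher_logits, label, temperature=4.0, alpha=0.9):
    """Soft-target KL term plus hard-target cross-entropy (illustrative hyperparameters)."""
    p_teacher = softmax(teacher_logits, temperature)       # soft targets from the TM
    p_student_soft = softmax(student_logits, temperature)
    soft_term = kl_divergence(p_teacher, p_student_soft) * temperature ** 2
    p_student = softmax(student_logits)                     # T = 1 for the hard target
    hard_term = -math.log(p_student[label])                 # cross-entropy with one-hot label
    return alpha * soft_term + (1 - alpha) * hard_term

classes = ["horse", "donkey", "car"]
teacher_logits = [6.0, 4.0, -2.0]   # hypothetical TM logits for a horse image
hard_target = [1.0, 0.0, 0.0]       # one-hot: "donkey" and "car" are equally wrong
soft_target = softmax(teacher_logits, temperature=4.0)

print([round(p, 3) for p in soft_target])
print(round(entropy(soft_target), 3))
print(round(kd_loss([3.0, 1.0, 0.0], teacher_logits, label=0), 4))
```

In the soft target, "donkey" receives far more probability than "car", which is exactly the inter-class similarity that the one-hot hard target discards.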

Exploiting knowledge transfer to train a lightweight model was first proposed in [39], where a trained TM is used to label a large unlabeled data set, and the newly labeled data set is then used to train an SM. However, this work is limited to shallow models. The idea of KD was advanced in [40] to compress a deep and wide TM into a shallower SM, where the SM mimics the knowledge learned by the TM. KD has recently attracted considerable attention. For example, Hinton et al. [24] designed a joint loss function between the TM and the SM to guide SM training. In [25], Romero et al. mapped hidden layers in the SM to the predictions of hidden layers in the TM and defined a loss function to train an efficient SM. Zagoruyko et al. [26] employed attention information from the convolutional layers of the TM to improve SM performance. In [27], Zhou et al. used parameter sharing and a new loss function to train the SM and the TM simultaneously. In addition, some researchers [41,42] used adversarial networks to replace manually designed losses such as the L1/L2 loss and the KL divergence.
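As one illustration of the attention information used in [26], the Python sketch below builds a spatial attention map by summing squared activations over channels, L2-normalizes it, and measures the L2 distance between the TM and SM maps. It is a minimal sketch assuming a single layer pair with matching spatial sizes, p = 2, and no loss weighting; the helper names are hypothetical, and the full method in [26] may differ in detail.

```python
import math

def attention_map(activations):
    """Spatial attention map from a C x H x W activation tensor given as nested lists.

    Sums squared activations over channels, flattens, and L2-normalizes, so the
    map no longer depends on the channel count or on the overall activation scale.
    """
    channels = len(activations)
    height = len(activations[0])
    width = len(activations[0][0])
    flat = [sum(activations[c][h][w] ** 2 for c in range(channels))
            for h in range(height) for w in range(width)]
    norm = math.sqrt(sum(v * v for v in flat))
    return [v / norm for v in flat] if norm > 0 else flat

def attention_transfer_loss(student_acts, teacher_acts):
    """L2 distance between normalized attention maps (same H x W assumed)."""
    qs = attention_map(student_acts)
    qt = attention_map(teacher_acts)
    return math.sqrt(sum((s - t) ** 2 for s, t in zip(qs, qt)))

# Hypothetical 2 x 2 feature maps: the TM layer has 4 channels, the SM layer has 2.
teacher = [[[1.0, 2.0], [0.0, 1.0]] for _ in range(4)]
student_same_focus = [[[3 * v for v in row] for row in ch] for ch in teacher[:2]]
student_other_focus = [[[0.0, 0.0], [0.0, 5.0]]]

print(attention_transfer_loss(student_same_focus, teacher))
print(attention_transfer_loss(student_other_focus, teacher))
```

Because the maps are normalized, an SM layer with fewer channels and a different activation scale can still match the TM exactly when it attends to the same spatial positions.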

Previous KD methods can be divided into two groups. In the first group, a TM is first trained on hard targets, and then a corresponding SM is trained on both hard targets and soft targets from the TM, as in [24–26]. The second group is represented by [27], where Zhou et al. assumed that not only the final outputs of the TM but also its learning process are beneficial for training the SM. Therefore, they trained the SM and the TM simultaneously so that the learning process of the TM guides the learning of the SM. Nevertheless, the optimization of the SM at each iteration is then mainly influenced by the TM, which restricts the independence of the SM and may lead the TM to guide the SM in the wrong direction. This resembles a student A with strong learning ability guiding a student B with weak learning ability, even though the knowledge passed on by student A may not be correct. In addition, within an epoch the TM is not always optimized in the direction of the final goal; for example, the loss of the TM may increase and its accuracy may decrease during a training epoch.

3. PML

In this section, the PML is described in detail. First, inspired by human learning behavior, we present the PML framework for training lightweight CNN models and design a landmark loss for it. Then, the landmark loss is described in detail. Finally, an explicit algorithm is given for implementing the PML.

3.1. PML framework

In order to learn higher mathematics, students must first learn elementary and middle school mathematics. In other words, not only the acquired knowledge but also the process by which it is accumulated is important for human learning. Studies of human learning [28] also show that the learning process of a teacher is significant for the learning of students. Inspired by this, we propose the PML for better training of lightweight CNN models; it exploits both the knowledge learned by the TM and the learning process of the TM to guide the SM training process. In the PML, the learning processes of both the TM and the SM are divided into multiple learning stages. In each learning stage, the SM is trained to approximate the TM at the corresponding learning stage, which distinguishes the PML from all previous knowledge distillation methods.

As illustrated in Fig. 2, the SM is a lightweight CNN model for image recognition, and all layers of the SM are divided into $b+1$ groups: $b$ groups of convolutional layers and one group of FC layers. First, $b+1$ groups are used to construct a TM with higher recognition accuracy, where the $j$th ($1 \le j \le b+1$) group in the TM has a structure similar to the $j$th group in the SM but has more layers and more parameters.
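The grouping constraints can be written down as a small Python check. The `Group` record, the helper name `satisfies_pml_layout`, and all layer and parameter counts are hypothetical; the sketch only encodes what the text states: the SM has $b$ convolutional groups plus one FC group, the TM has the same $b+1$ groups, and the $j$th TM group matches the type of the $j$th SM group while having more layers and more parameters.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Group:
    kind: str        # "conv" for the b convolutional groups, "fc" for the last group
    num_layers: int
    num_params: int

def satisfies_pml_layout(student: List[Group], teacher: List[Group]) -> bool:
    """Check the SM/TM structural constraints described in the text."""
    b = len(student) - 1
    if b < 1 or len(teacher) != b + 1:
        return False
    # SM: b convolutional groups followed by one FC group.
    if any(g.kind != "conv" for g in student[:b]) or student[b].kind != "fc":
        return False
    for s, t in zip(student, teacher):
        # The j-th TM group mirrors the j-th SM group but is deeper and larger.
        if t.kind != s.kind or t.num_layers <= s.num_layers or t.num_params <= s.num_params:
            return False
    return True

# Hypothetical b = 3 example; the counts are made up for illustration.
student = [Group("conv", 2, 10_000), Group("conv", 2, 40_000),
           Group("conv", 2, 150_000), Group("fc", 1, 5_000)]
teacher = [Group("conv", 4, 40_000), Group("conv", 4, 160_000),
           Group("conv", 4, 600_000), Group("fc", 2, 20_000)]
print(satisfies_pml_layout(student, teacher))
```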

Then, the learning process of the TM with $E$ iterations is divided into $c$ learning stages, where $E = \sum_{i=1}^{c} E_i$ and $E_i$ ($1 \le i \le c$) denotes the number of iterations in the $i$th learning stage. The last state of the TM in the $i$th learning stage is defined as a landmark $M_i^t = [f_1^t(x_k), \ldots, f_b^t(x_k), z(x_k), q(x_k)]$, where $f_j^t(x_k)$ is the output of the $j$th group and $z(x_k)$ is the output of the $(b+1)$th group in the TM.
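The stage division can be illustrated with a short Python sketch that records a landmark at the last iteration of each stage, i.e. at iterations $E_1, E_1 + E_2, \ldots, E$. The near-equal split in `split_stages` is only one hypothetical choice of the $E_i$ (the text does not fix them here), and the toy snapshot merely stands in for the group outputs that make up $M_i^t$.

```python
from itertools import accumulate

def split_stages(total_iters, num_stages):
    """Hypothetical choice of E_1..E_c: near-equal stage lengths that sum to E."""
    base, rem = divmod(total_iters, num_stages)
    return [base + (1 if i < rem else 0) for i in range(num_stages)]

def landmark_iterations(stage_lengths):
    """1-based iteration at which stage i ends, i.e. where landmark M_i^t is taken."""
    if any(e <= 0 for e in stage_lengths):
        raise ValueError("each stage needs at least one iteration")
    return list(accumulate(stage_lengths))

def collect_landmarks(train_step, snapshot, stage_lengths):
    """Run E = sum(E_i) TM iterations and snapshot the TM at the end of each stage."""
    ends = set(landmark_iterations(stage_lengths))
    landmarks = []
    for it in range(1, sum(stage_lengths) + 1):
        train_step(it)
        if it in ends:
            landmarks.append(snapshot())
    return landmarks

# Toy usage: the "TM state" is just the number of updates applied so far.
state = {"updates": 0}

def toy_step(it):
    state["updates"] += 1

def toy_snapshot():
    # In the PML this would hold [f_1^t(x_k), ..., f_b^t(x_k), z(x_k), q(x_k)] per sample.
    return state["updates"]

stages = split_stages(10, 3)
print(stages, landmark_iterations(stages))
print(collect_landmarks(toy_step, toy_snapshot, stages))
```

With $E = 10$ and $c = 3$, this hypothetical split gives stages of 4, 3, and 3 iterations, so landmarks are taken after iterations 4, 7, and 10.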

Fig. 1. Knowledge distillation. (a) The framework of knowledge distillation. (b) Soft targets and hard targets.

H. Ma, S. Yang, D. Feng et al. Neurocomputing 456 (2021) 220–231
