"强化学习数学基础:贝尔曼方程到actor-critic方法"

需积分: 0 173 浏览量

更新于2024-01-03

1

收藏 1.68MB PDF 举报

本书《强化学习的数学基础》的作者赵世钰是西湖大学工学院智能无人系统实验室主任。他撰写这份资料的目的是为了弥补现有教材的不足。

书中共分为10章,内容涵盖了强化学习的数学基础。以下是各章节的简要介绍:

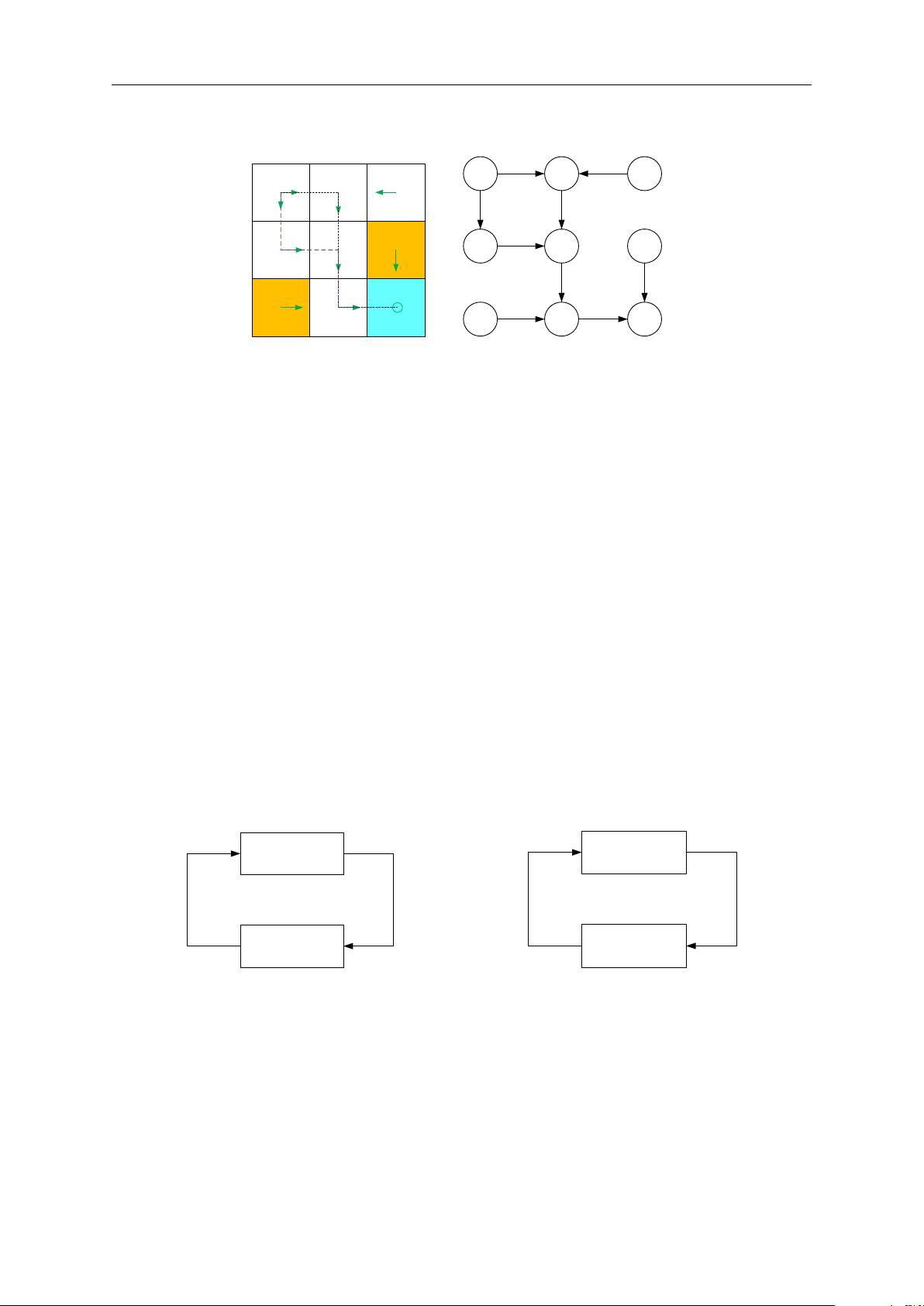

第2章:贝尔曼方程,是分析状态值的基本工具。贝尔曼方程描述了如何通过当前状态的值函数和下一状态的值函数来计算当前状态的值函数。

第3章:贝尔曼最优方程,是一个特殊的贝尔曼方程。贝尔曼最优方程描述了在最优策略下的最优状态值函数和最优动作值函数之间的关系。

第4章:值迭代算法,是一种求解贝尔曼最优方程的算法。值迭代算法通过反复迭代更新状态值函数来逼近最优状态值函数。

第5章:蒙特卡罗学习,是第4章策略迭代算法的扩展。蒙特卡罗学习是一种基于采样的学习方法,通过模拟多个完整的轨迹来估计状态值函数或动作值函数。

第6章:随机逼近的基础知识。本章介绍了随机逼近的基本概念和方法,包括线性回归、多项式逼近和神经网络逼近。

第7章:时差学习,第6章是本章的基础。时差学习是一种基于差分逼近的学习方法,通过逐步更新状态值函数来逼近最优值函数。

第8章:扩展了表格时间对价值函数逼近情况的差分学习方法。本章介绍了一些扩展了表格时间对价值函数逼近情况的差分学习方法,包括线性函数逼近、基于样本的学习和基于模型的学习。

第9章:策略迭代。策略迭代是一种通过交替进行策略评估和策略改进来求解最优策略的方法。

第10章:actor-critic 方法。actor-critic 方法是一种结合了值函数逼近和策略改进的方法,通过同时学习策略和值函数来实现更好的性能。

这本书的目标读者是对强化学习感兴趣的学生和研究人员。通过学习这本书,读者将能够掌握强化学习的数学基础,了解贝尔曼方程、值迭代算法、蒙特卡罗学习等关键概念和方法,并能够应用到实际问题中。这将为他们在强化学习领域的研究和应用提供坚实的数学基础。

2010-10-22 上传

2020-01-15 上传

2011-12-02 上传

2010-06-23 上传

2021-05-22 上传

ShowMeAI

- 粉丝: 6282

- 资源: 42

最新资源

- JHU荣誉单变量微积分课程教案介绍

- Naruto爱好者必备CLI测试应用

- Android应用显示Ignaz-Taschner-Gymnasium取消课程概览

- ASP学生信息档案管理系统毕业设计及完整源码

- Java商城源码解析:酒店管理系统快速开发指南

- 构建可解析文本框:.NET 3.5中实现文本解析与验证

- Java语言打造任天堂红白机模拟器—nes4j解析

- 基于Hadoop和Hive的网络流量分析工具介绍

- Unity实现帝国象棋:从游戏到复刻

- WordPress文档嵌入插件:无需浏览器插件即可上传和显示文档

- Android开源项目精选:优秀项目篇

- 黑色设计商务酷站模板 - 网站构建新选择

- Rollup插件去除JS文件横幅:横扫许可证头

- AngularDart中Hammock服务的使用与REST API集成

- 开源AVR编程器:高效、低成本的微控制器编程解决方案

- Anya Keller 图片组合的开发部署记录