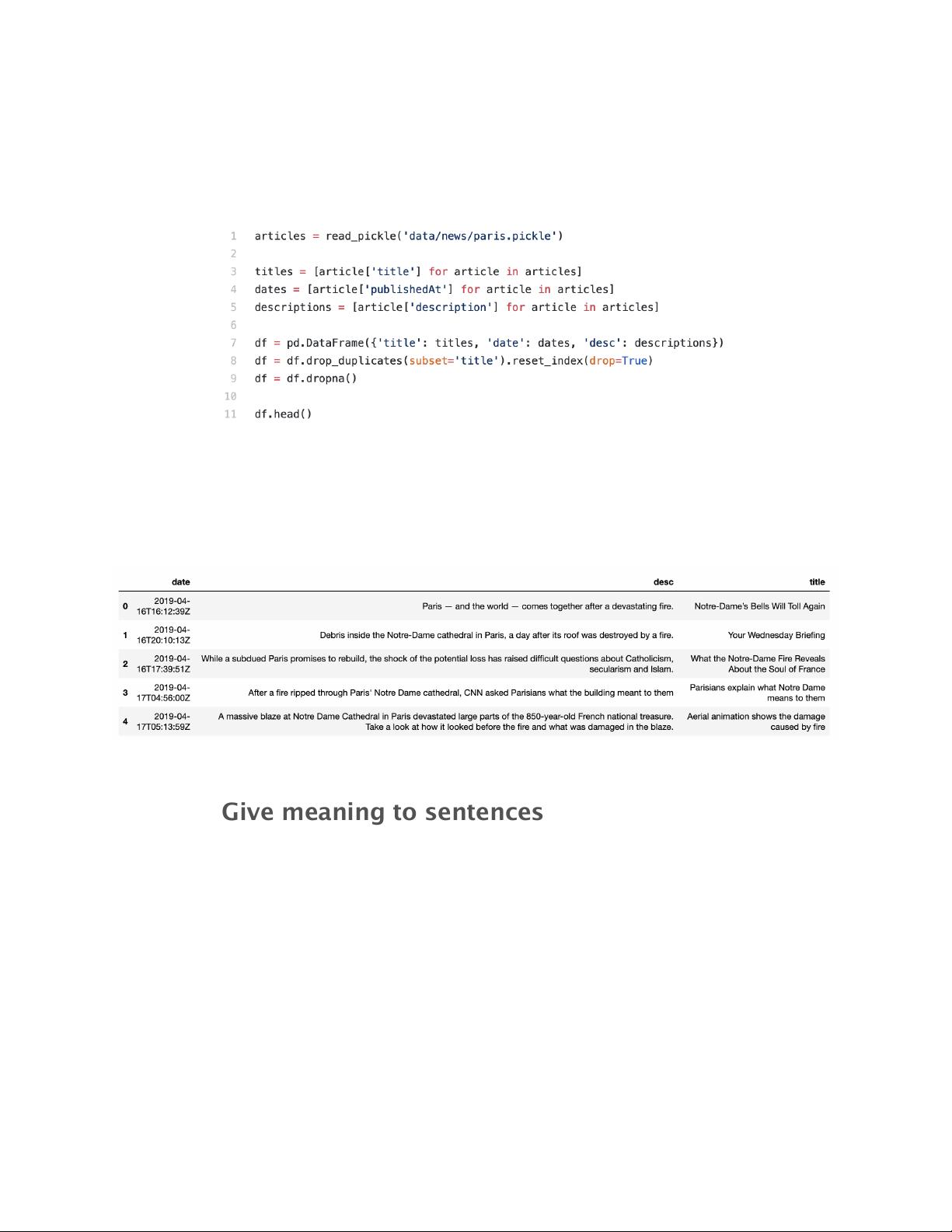

This last function returns a list of approximately 2,000 articles for a given query. Our goal is to extract those articles’ events, so to simplify the process I’m keeping only their titles (in theory, a title should already capture the core message behind the news).

That leaves us with a data frame like the one below, including dates, descriptions, and titles.
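If you want to reproduce a frame like that, a minimal pandas sketch follows. It assumes each article is a dict with `publishedAt`, `description`, and `title` keys (a NewsAPI-style layout; adjust the names to whatever your fetching function actually returns). The sample records are made up purely for illustration. Rows without a title, and repeated titles, are dropped because the titles are the only text we keep for event extraction.

```python
import pandas as pd

# Made-up sample records; in practice this list comes from the fetching function.
# The keys ("publishedAt", "description", "title") are an assumption.
articles = [
    {"publishedAt": "2020-03-01T10:00:00Z", "description": "Rates go up.", "title": "Central bank raises interest rates"},
    {"publishedAt": "2020-03-02T08:30:00Z", "description": "Flights halted.", "title": "Storm forces airport closures"},
    {"publishedAt": "2020-03-02T09:00:00Z", "description": "Syndicated copy.", "title": "Storm forces airport closures"},
    {"publishedAt": "2020-03-03T12:00:00Z", "description": "No headline.", "title": None},
]


def articles_to_frame(articles):
    """Build a data frame with dates, descriptions and titles."""
    # Keep only the three fields we care about (missing keys become NaN)
    df = pd.DataFrame(articles, columns=["publishedAt", "description", "title"])
    df = df.rename(columns={"publishedAt": "date"})
    df["date"] = pd.to_datetime(df["date"])
    # Titles carry the event, so drop rows without one and repeated headlines
    df = df.dropna(subset=["title"]).drop_duplicates(subset=["title"])
    return df.reset_index(drop=True)


df = articles_to_frame(articles)
titles = df["title"].tolist()
print(df)
```

Dropping duplicate titles matters with news APIs, where the same wire story is often syndicated by several outlets and would otherwise be counted as separate events.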

Give meaning to sentences

Now that our titles are ready, we need to represent them in a way our algorithms can understand. Note that I’m skipping a whole pre-processing stage here, simply because it isn’t the focus of this article. But if you are just starting with NLP, make sure to include those basic pre-processing steps before applying the models; here is a nice tutorial.
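As a rough idea of what those basic steps look like, here is a minimal pure-Python sketch: lowercasing, replacing punctuation with spaces, splitting on whitespace, and removing stop words. The tiny `STOP_WORDS` set and the `preprocess` function are hypothetical, chosen for illustration only; a real pipeline would use a fuller stop-word list (spaCy tokens, for instance, expose `is_stop` and `is_punct` attributes).

```python
import string
from typing import List

# Tiny illustrative stop-word list (an assumption, not a standard list)
STOP_WORDS = {"a", "an", "the", "of", "in", "on", "to", "and", "as", "for", "is", "are"}

# Translation table that maps every ASCII punctuation character to a space
_PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def preprocess(title: str) -> List[str]:
    """Lowercase a title, strip punctuation, tokenize and drop stop words."""
    text = title.lower().translate(_PUNCT_TO_SPACE)
    tokens = text.split()
    return [tok for tok in tokens if tok not in STOP_WORDS]


print(preprocess("The Fed cuts rates in a surprise move"))
# ['fed', 'cuts', 'rates', 'surprise', 'move']
```

Whether to lowercase or drop stop words depends on the model downstream: pre-trained embeddings are often applied to the raw text, so treat these steps as options to evaluate rather than fixed requirements.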

To give meaning to individual words and, consequently, to whole sentences, we’ll use spaCy’s pre-trained word embedding models. More specifically, we’ll use spaCy’s large model (en_core_web_lg), which has pre-trained word vectors for 685k English words. Alternatively, you