local source deduplication if (4) is satisfied when network

bandwidth is very low, and also outperform global source

deduplication since (5) is always satisfied due to high cloud

latency

$$
BWS_{LG} = T_L + T_G \frac{P_L}{L} + \frac{C}{B} \cdot \frac{P_G}{L} \tag{3}
$$

$$
\frac{P_G}{P_L} + \frac{B\,T_G}{C} < 1 \tag{4}
$$

$$
\frac{P_L}{L} + \frac{T_L}{T_G} < 1. \tag{5}
$$
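As a rough numerical check of (3), (4), and (5), the sketch below evaluates the per-chunk backup window for the three schemes. It assumes the local-only and global-only windows have the forms $T_L + (C/B)(P_L/L)$ and $T_G + (C/B)(P_G/L)$, which is what conditions (4) and (5) imply when each is compared against (3); the function names and all numeric values are hypothetical and chosen only for illustration.

```python
def bws_local(T_L, C, B, P_L, L):
    """Assumed per-chunk backup window for local-only source deduplication."""
    return T_L + (C / B) * (P_L / L)


def bws_global(T_G, C, B, P_G, L):
    """Assumed per-chunk backup window for global-only source deduplication."""
    return T_G + (C / B) * (P_G / L)


def bws_local_global(T_L, T_G, C, B, P_L, P_G, L):
    """Per-chunk backup window for local-global deduplication, as in (3)."""
    return T_L + T_G * (P_L / L) + (C / B) * (P_G / L)


def condition_4(T_G, C, B, P_L, P_G):
    """Condition (4): local-global beats local-only."""
    return P_G / P_L + B * T_G / C < 1


def condition_5(T_L, T_G, P_L, L):
    """Condition (5): local-global beats global-only."""
    return P_L / L + T_L / T_G < 1


# Hypothetical workload: 8 KB chunks, a slow 100 KB/s uplink,
# 0.1 ms local lookups, and 20 ms cloud lookups.
C, B = 8192.0, 100_000.0           # bytes, bytes per second
T_L, T_G = 1e-4, 2e-2              # seconds per chunk
L, P_L, P_G = 100e9, 50e9, 30e9    # logical and physical sizes in bytes

lg = bws_local_global(T_L, T_G, C, B, P_L, P_G, L)
print("(4) holds:", condition_4(T_G, C, B, P_L, P_G),
      "| BWS_LG < BWS_L:", lg < bws_local(T_L, C, B, P_L, L))
print("(5) holds:", condition_5(T_L, T_G, P_L, L),
      "| BWS_LG < BWS_G:", lg < bws_global(T_G, C, B, P_G, L))
```

With these assumed forms, each condition is algebraically equivalent to the corresponding window comparison, so the two values printed on each line should always agree.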

We can define a metric for deduplication efficiency that balances cloud storage cost savings against backup window reduction in source-deduplication-based cloud backup services. It is well understood that deduplication efficiency is proportional to deduplication effectiveness, which is conventionally defined by the duplicate elimination ratio $R = L/P$, and inversely proportional to the average backup window size per chunk $BWS$ for average chunk size $C$. Based on this understanding, and to better quantify and compare the deduplication efficiency of a wide variety of deduplication techniques, we propose a new metric, called "bytes saved per second," which is expressed in (6), to measure the deduplication efficiency $DE$ of different deduplication schemes on the same platform. Our local-global source deduplication design can achieve high efficiency owing to its global deduplication effectiveness and reduced backup window:

$$
DE = \frac{C}{BWS}\left(1 - \frac{1}{R}\right). \tag{6}
$$
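The metric in (6) can be evaluated directly once $C$, $BWS$, $L$, and $P$ are known. The following is a minimal sketch; the function name `dedup_efficiency` and the sample numbers are illustrative assumptions, not values from the paper.

```python
def dedup_efficiency(C, bws, L, P):
    """Bytes saved per second, following (6).

    C   : average chunk size in bytes
    bws : average backup window per chunk in seconds
    L   : logical dataset size in bytes
    P   : physical dataset size after deduplication in bytes
    """
    R = L / P                      # duplicate elimination ratio
    return (C / bws) * (1 - 1 / R)


# Hypothetical example: 8 KB chunks, about 35 ms per chunk,
# and 100 GB of logical data reduced to 30 GB.
de = dedup_efficiency(C=8192.0, bws=0.035, L=100e9, P=30e9)
print(f"DE = {de:.0f} bytes saved per second")
```

Here $C/BWS$ is the rate at which logical data moves through the backup path, and $1 - 1/R$ is the fraction of that data that never has to be stored, so a scheme with no duplicate elimination ($R = 1$) scores zero regardless of its speed.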

Unlike traditional deduplication-based cloud backup services, which are oblivious to file-level semantic knowledge, we optimize the efficiency of source-deduplication-based cloud backup services by exploiting application awareness. We divide the backup dataset into $n$ application data subsets according to file semantics, and improve deduplication effectiveness and decrease deduplication latency by running a dedicated deduplication process for each kind of application data. For application $i$, we define $L_i$, $P_{Li}$, and $P_{Gi}$ as its logical data subset size and its physical data subset sizes after traditional local and global source deduplication, respectively, and $P_{ALi}$ and $P_{AGi}$ as its physical data subset sizes after local and global application-aware source deduplication, respectively. We assume that its average latencies per chunk for local and global application-aware duplicate detection are $T_{ALi}$ and $T_{AGi}$, respectively; then $T_{ALi} < T_L$ and $T_{AGi} < T_G$ because of the index lookup optimization enabled by application awareness. According to our observation in Section 3 that the amount of data shared among different types of applications is negligibly small, $P_{Li} \ge P_{ALi}$ and $P_{Gi} \ge P_{AGi}$ hold for any $i$. We use formulas (7) and (8) to estimate the upper bounds of the average backup window size per chunk $BWS_{ALG}$ and the physical dataset size $P_{AG}$ for application-aware local-global source-deduplication-based cloud backup services. Formula (9) then follows, indicating that the efficiency of application-aware local-global source deduplication, $DE_{ALG}$, is higher than that of local-global source deduplication, $DE_{LG}$:

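$$
\begin{aligned}
BWS_{ALG} &= \sum_{i=1}^{n} \frac{L_i}{L}\left(T_{ALi} + T_{AGi}\frac{P_{ALi}}{L_i} + \frac{C}{B}\cdot\frac{P_{AGi}}{L_i}\right) \\
&< \sum_{i=1}^{n} \frac{L_i}{L}\left(T_L + T_G\frac{P_{ALi}}{L_i} + \frac{C}{B}\cdot\frac{P_{AGi}}{L_i}\right) \\
&\le \sum_{i=1}^{n}\frac{L_i}{L}\,T_L + T_G\sum_{i=1}^{n}\frac{P_{Li}}{L} + \frac{C}{B}\sum_{i=1}^{n}\frac{P_{Gi}}{L} \\
&= T_L + T_G\frac{P_L}{L} + \frac{C}{B}\cdot\frac{P_G}{L} = BWS_{LG}
\end{aligned}
\tag{7}
$$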

$$
P_{AG} = \sum_{i=1}^{n} P_{AGi} \le \sum_{i=1}^{n} P_{Gi} = P_G \tag{8}
$$

$$
DE_{ALG} = \frac{C}{BWS_{ALG}}\left(1 - \frac{P_{AG}}{L}\right) > \frac{C}{BWS_{LG}}\left(1 - \frac{P_G}{L}\right) = DE_{LG}. \tag{9}
$$
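To make the chain of bounds in (7) through (9) concrete, the sketch below computes $BWS_{ALG}$, $P_{AG}$, and $DE_{ALG}$ for two hypothetical application subsets and compares them with the local-global baseline. The `AppSubset` type and every number are assumptions made for illustration; the values were picked so that the stated premises (lower per-application latencies and no larger physical sizes) hold.

```python
from typing import NamedTuple


class AppSubset(NamedTuple):
    """Per-application quantities (hypothetical container for illustration)."""
    L_i: float     # logical subset size in bytes
    P_AL: float    # physical size after local application-aware dedup
    P_AG: float    # physical size after global application-aware dedup
    T_AL: float    # local lookup latency per chunk in seconds
    T_AG: float    # global lookup latency per chunk in seconds


def bws_alg(subsets, L, C, B):
    """Size-weighted per-chunk backup window, first line of (7)."""
    return sum(
        (s.L_i / L) * (s.T_AL + s.T_AG * s.P_AL / s.L_i + (C / B) * s.P_AG / s.L_i)
        for s in subsets
    )


def bws_lg(T_L, T_G, C, B, P_L, P_G, L):
    """Local-global per-chunk backup window, last line of (7)."""
    return T_L + T_G * P_L / L + (C / B) * P_G / L


def efficiency(C, bws, P, L):
    """Bytes saved per second, as in (9)."""
    return (C / bws) * (1 - P / L)


C, B = 8192.0, 100_000.0
L, P_L, P_G = 100e9, 50e9, 30e9
T_L, T_G = 1e-4, 2e-2

subsets = [
    AppSubset(L_i=60e9, P_AL=28e9, P_AG=16e9, T_AL=5e-5, T_AG=1e-2),
    AppSubset(L_i=40e9, P_AL=20e9, P_AG=12e9, T_AL=5e-5, T_AG=1e-2),
]

P_AG = sum(s.P_AG for s in subsets)                  # left side of (8)
window_alg = bws_alg(subsets, L, C, B)
window_lg = bws_lg(T_L, T_G, C, B, P_L, P_G, L)

print("BWS_ALG < BWS_LG:", window_alg < window_lg)
print("P_AG <= P_G:", P_AG <= P_G)
print("DE_ALG > DE_LG:",
      efficiency(C, window_alg, P_AG, L) > efficiency(C, window_lg, P_G, L))
```

Because $DE$ grows when the window shrinks and when the physical size shrinks, any input that satisfies the premises of (7) and (8) should produce the ordering in (9).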

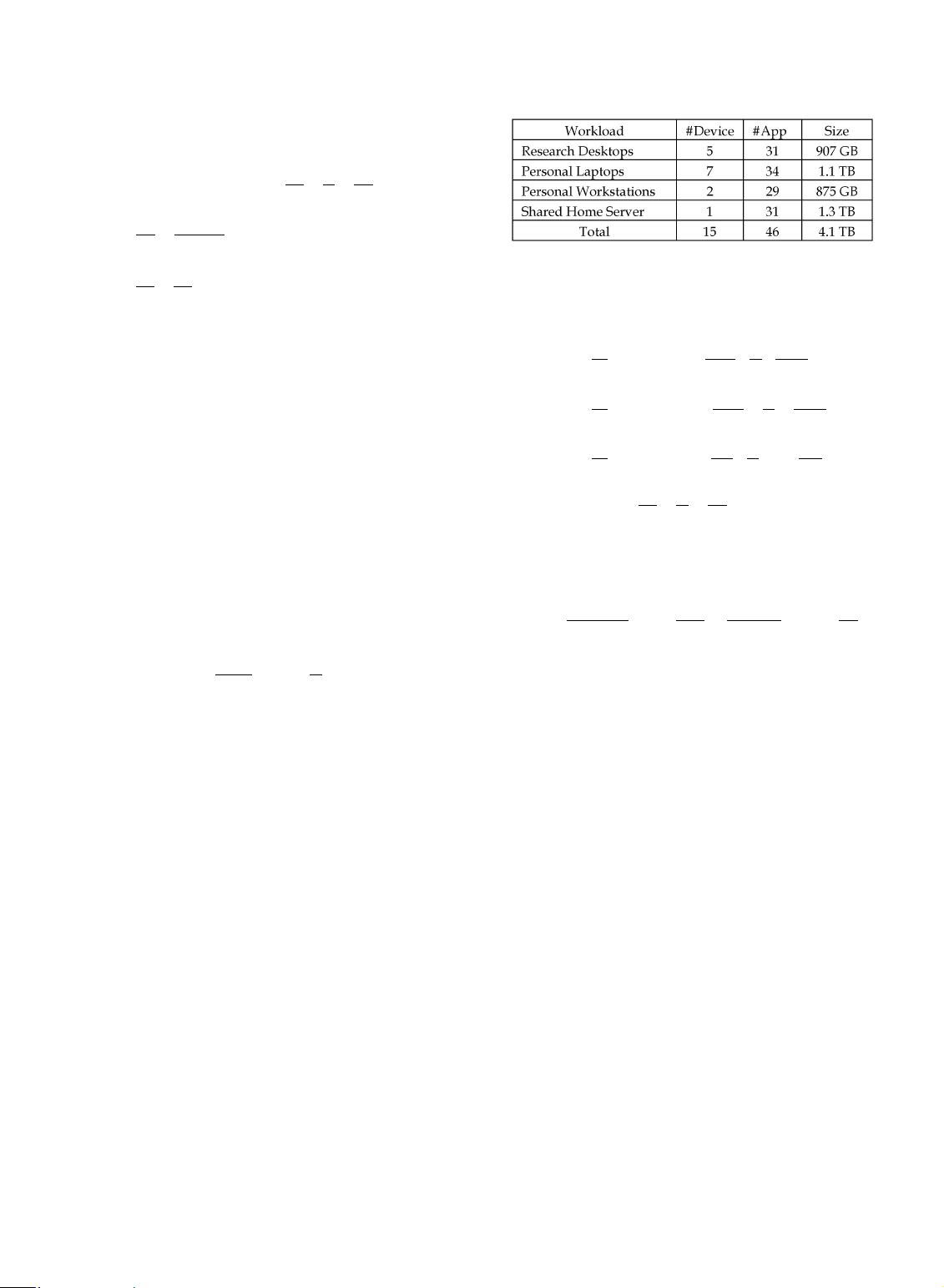

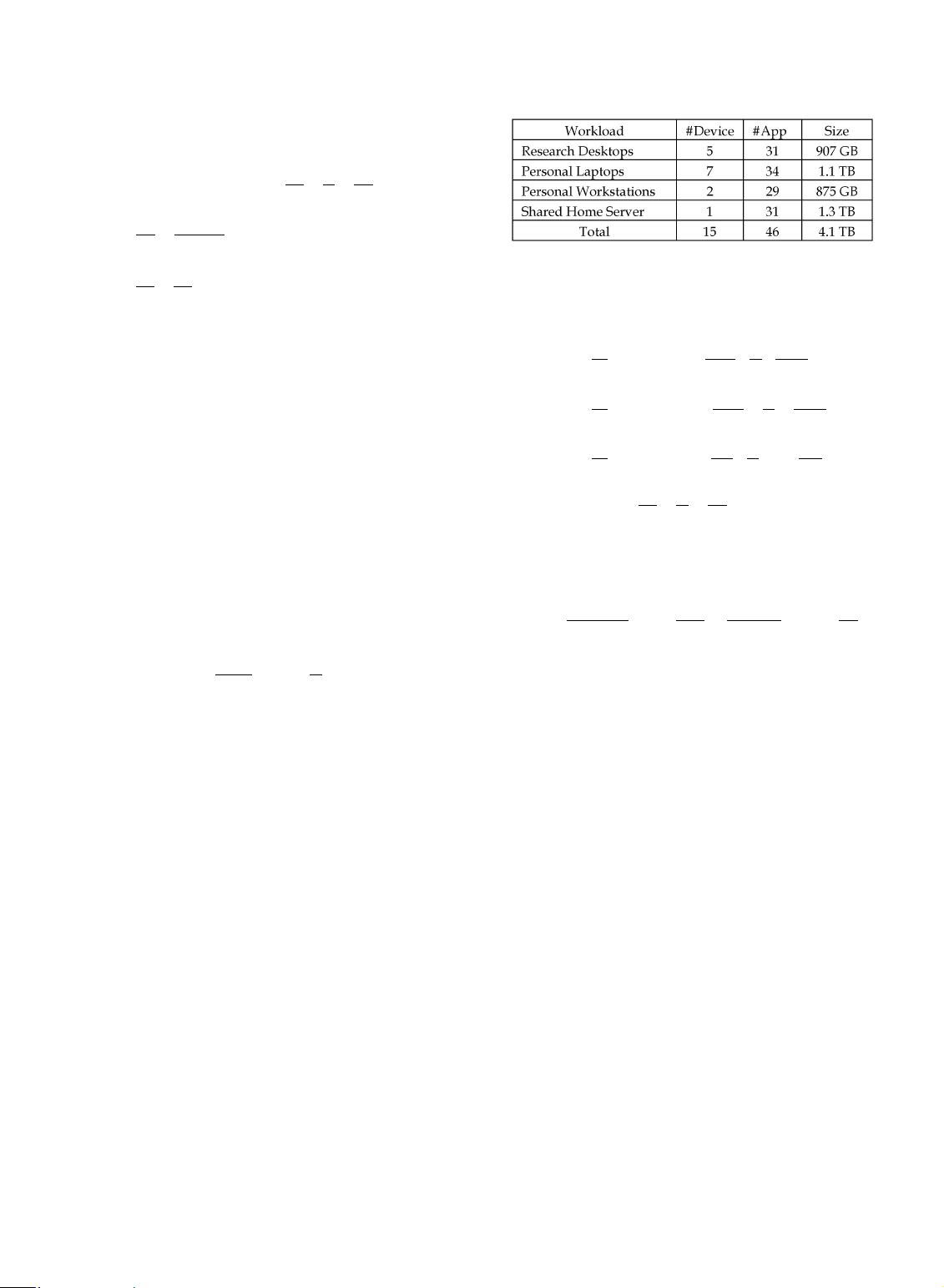

3 DEDUPLICATION ANALYSIS ON PERSONAL DATA

In this section, we investigate how data redundancy, the space utilization efficiency of popular data chunking methods, and the computational overhead of typical hash functions vary across different personal computing applications, to motivate our research. We perform a preliminary experimental study on datasets collected from desktops in our research group, volunteers' personal laptops, personal workstations for image processing and financial analysis, and a shared home server. Table 1 outlines the key dataset characteristics: the number of devices, applications, and dataset size for each studied workload. To the best of our knowledge, this is the first systematic deduplication analysis on personal storage.

Observation 1

A disproportionately large percentage of storage space is occupied by a very small number of large files with very low chunk-level redundancy after file-level deduplication.

Implication

File-level deduplication using weak hash functions for these large files is sufficient to avoid hash collisions for small datasets in the personal computing environment.
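A minimal sketch of file-level deduplication keyed on a weak hash is given below. It uses CRC32 from Python's `zlib` module purely as a stand-in, since this section does not name the weak hash function the authors have in mind, and it pairs the checksum with the file size as an extra, assumed safeguard. The function names are hypothetical.

```python
import zlib


def weak_file_fingerprint(path, block_size=1 << 20):
    """Return (size, crc32) for a file, streaming it in fixed-size blocks."""
    crc = 0
    size = 0
    with open(path, "rb") as f:
        while True:
            block = f.read(block_size)
            if not block:
                break
            crc = zlib.crc32(block, crc)   # running CRC over the whole file
            size += len(block)
    return size, crc


def file_level_dedupe(paths):
    """Split paths into first-seen files and (duplicate, original) pairs."""
    index = {}          # fingerprint -> first path seen with that fingerprint
    duplicates = []
    for path in paths:
        fingerprint = weak_file_fingerprint(path)
        if fingerprint in index:
            duplicates.append((path, index[fingerprint]))
        else:
            index[fingerprint] = path
    return list(index.values()), duplicates
```

A 32-bit checksum is far cheaper to compute than a cryptographic hash, which is the trade-off the implication points to; it is only reasonable when the number of large files is small, because the chance of two different files sharing a fingerprint grows with the number of files compared.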

To reveal the relationship between file count and storage capacity across file sizes, we collect statistics on the distribution of file count and storage space occupied by files of different sizes in the datasets listed in Table 1

TABLE 1

Datasets Used for Deduplication Analysis

FU ET AL.: LOCAL-GLOBAL SOURCE DEDUPLICATION FOR CLOUD BACKUP SERVICES 1157