13/02/2018 ulmfit_pretraining.html

1/1

dollarThegold or

Embedding

layer

Layer1

Layer2

Layer3

Softmax

layer

gold

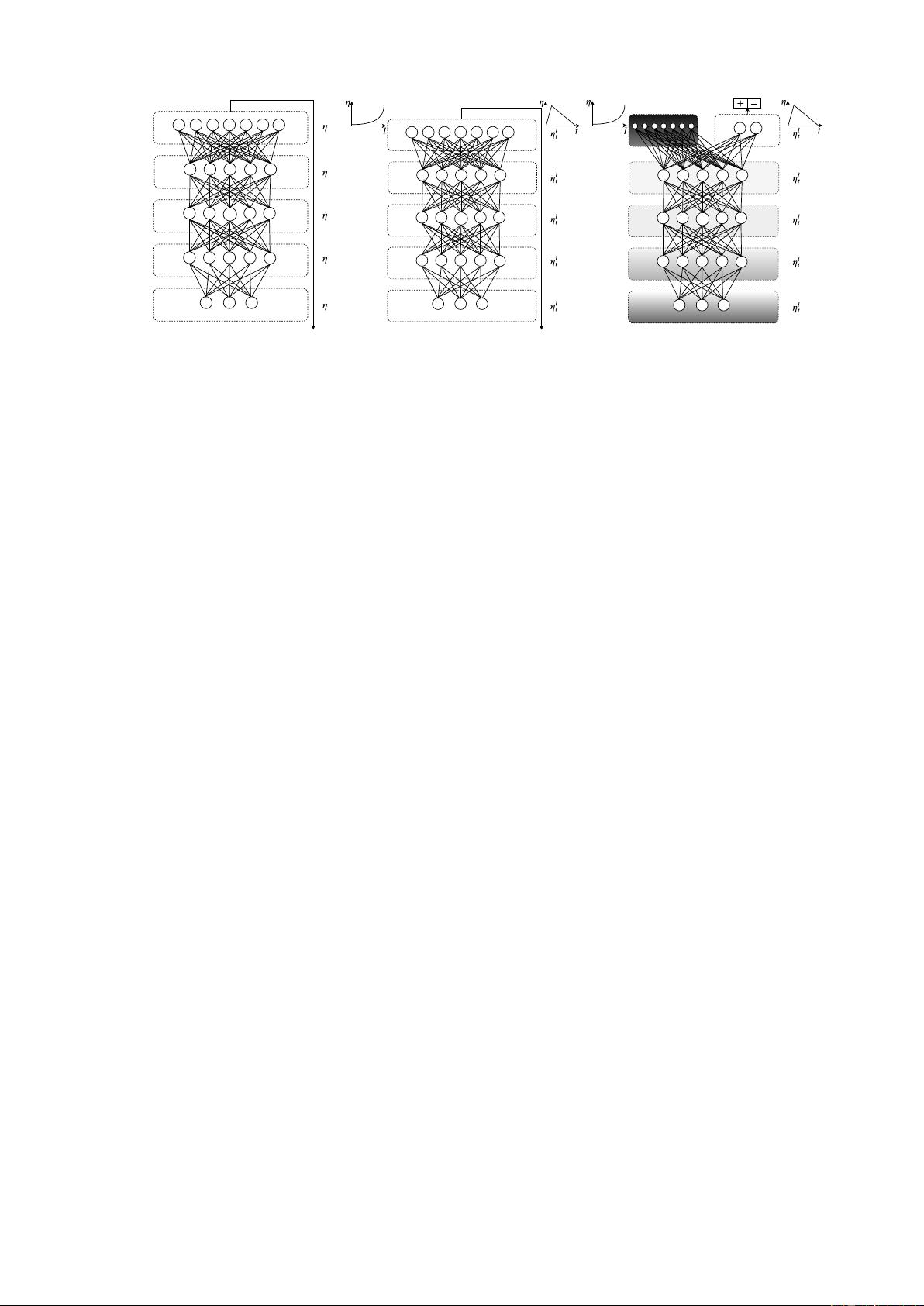

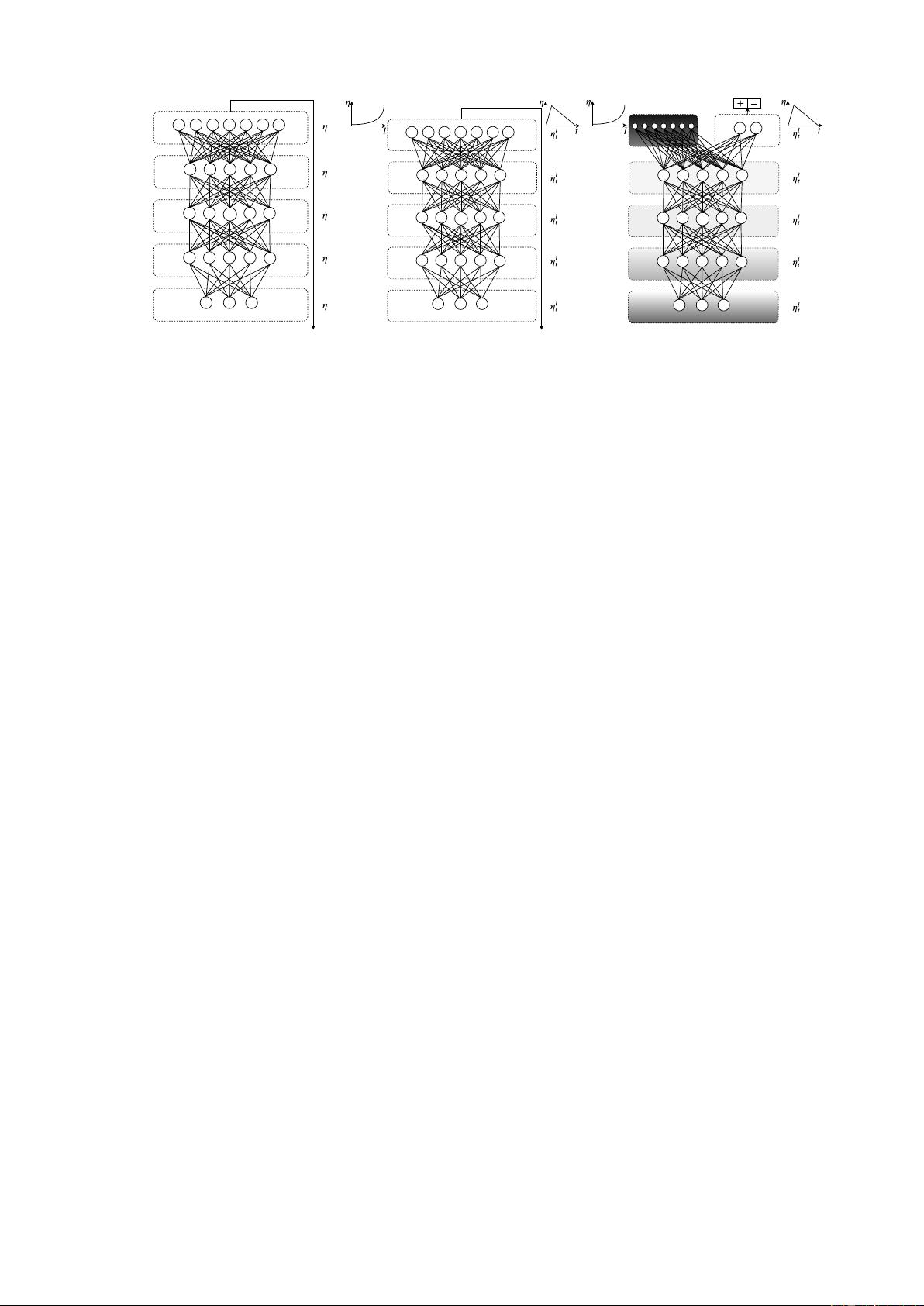

(a) LM pre-training

13/02/2018 ulmfit_lm_fine-tuning.html

1/1

sceneThebest ever

Embedding

layer

Layer1

Layer2

Layer3

Softmax

layer

(b) LM fine-tuning

13/02/2018 ulmfit_clas_fine-tuning.html

1/1

sceneThebest ever

Embedding

layer

Layer1

Layer2

Layer3

Softmax

layer

(c) Classifier fine-tuning

Figure 1: ULMFiT consists of three stages: a) The LM is trained on a general-domain corpus to capture general features of the language in different layers. b) The full LM is fine-tuned on target task data using discriminative fine-tuning (‘Discr’) and slanted triangular learning rates (STLR) to learn task-specific features. c) The classifier is fine-tuned on the target task using gradual unfreezing, ‘Discr’, and STLR to preserve low-level representations and adapt high-level ones (shaded: unfreezing stages; black: frozen).

task, which we show significantly improves performance (see Section 5). Moreover, language modeling already is a key component of existing tasks such as MT and dialogue modeling. Formally, language modeling induces a hypothesis space H that should be useful for many other NLP tasks (Vapnik and Kotz, 1982; Baxter, 2000).

We propose Universal Language Model Fine-tuning (ULMFiT), which pretrains a language model (LM) on a large general-domain corpus and fine-tunes it on the target task using novel techniques. The method is universal in the sense that it meets these practical criteria: 1) It works across tasks varying in document size, number, and label type; 2) it uses a single architecture and training process; 3) it requires no custom feature engineering or preprocessing; and 4) it does not require additional in-domain documents or labels.

In our experiments, we use the state-of-the-art language model AWD-LSTM (Merity et al., 2017a), a regular LSTM (with no attention, short-cut connections, or other sophisticated additions) with various tuned dropout hyperparameters. Analogous to CV, we expect that downstream performance can be improved by using higher-performance language models in the future.

ULMFiT consists of the following steps, which we show in Figure 1: a) General-domain LM pretraining (§3.1); b) target task LM fine-tuning (§3.2); and c) target task classifier fine-tuning (§3.3). We discuss these in the following sections.
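
The three steps and the techniques attached to each can be summarized as a small data structure. The following is only an illustrative sketch: the `Stage` class, its field names, and the `describe` helper are hypothetical and not part of any ULMFiT implementation; the stage names and techniques are taken from the text and the caption of Figure 1.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Stage:
    """One ULMFiT stage (hypothetical container, for illustration only)."""
    name: str
    data: str
    techniques: Tuple[str, ...]


# Stage names and techniques follow the text and the Figure 1 caption.
ULMFIT_STAGES = (
    Stage("general-domain LM pretraining",
          "general-domain corpus",
          ()),
    Stage("target task LM fine-tuning",
          "target task data",
          ("discriminative fine-tuning",
           "slanted triangular learning rates")),
    Stage("target task classifier fine-tuning",
          "labeled target task data",
          ("gradual unfreezing",
           "discriminative fine-tuning",
           "slanted triangular learning rates")),
)


def describe(stages):
    """Return one summary line per stage, lettered a), b), c)."""
    lines = []
    for letter, stage in zip("abc", stages):
        techniques = ", ".join(stage.techniques) or "none named here"
        lines.append(f"{letter}) {stage.name} on {stage.data}; "
                     f"techniques: {techniques}")
    return lines


for line in describe(ULMFIT_STAGES):
    print(line)
```

Only stage c) adds gradual unfreezing; stages b) and c) share discriminative fine-tuning and slanted triangular learning rates.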

3.1 General-domain LM pretraining

An ImageNet-like corpus for language should be large and capture general properties of language. We pretrain the language model on Wikitext-103 (Merity et al., 2017b) consisting of 28,595 preprocessed Wikipedia articles and 103 million words. Pretraining is most beneficial for tasks with small datasets and enables generalization even with 100 labeled examples. We leave the exploration of more diverse pretraining corpora to future work, but expect that they would boost performance. While this stage is the most expensive, it only needs to be performed once and improves performance and convergence of downstream models.

3.2 Target task LM fine-tuning

No matter how diverse the general-domain data used for pretraining is, the data of the target task will likely come from a different distribution. We thus fine-tune the LM on data of the target task. Given a pretrained general-domain LM, this stage converges faster as it only needs to adapt to the idiosyncrasies of the target data, and it allows us to train a robust LM even for small datasets. We propose discriminative fine-tuning and slanted triangular learning rates for fine-tuning the LM, which we introduce in the following.
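
The motivation for this stage, a mismatch between the pretraining and target distributions, can be illustrated with a toy example. The sketch below is a deliberately simplified stand-in: it uses an add-one-smoothed bigram model rather than an AWD-LSTM, and "fine-tuning" here just means continuing to update counts on target text. The class name `BigramLM` and the tiny corpora are invented for illustration.

```python
import math
from collections import Counter


class BigramLM:
    """Add-one-smoothed bigram LM (toy stand-in, not an AWD-LSTM)."""

    def __init__(self, vocab):
        self.vocab = set(vocab)
        self.bigrams = Counter()   # counts of (previous, next) pairs
        self.contexts = Counter()  # counts of each previous token

    def update(self, tokens):
        """Add bigram counts from a token sequence to the model."""
        for prev, nxt in zip(tokens, tokens[1:]):
            self.bigrams[(prev, nxt)] += 1
            self.contexts[prev] += 1

    def prob(self, prev, nxt):
        """Smoothed probability of `nxt` following `prev`."""
        return ((self.bigrams[(prev, nxt)] + 1)
                / (self.contexts[prev] + len(self.vocab)))

    def perplexity(self, tokens):
        """Perplexity of the model over the bigrams of `tokens`."""
        pairs = list(zip(tokens, tokens[1:]))
        nll = -sum(math.log(self.prob(p, n)) for p, n in pairs)
        return math.exp(nll / len(pairs))


# Invented miniature corpora: "general-domain" versus "target task" text.
general = "the gold dollar rose and the gold price fell and the dollar fell".split()
target = "the best scene ever and the best film ever".split()
held_out = "the best scene and the best film".split()

lm = BigramLM(set(general) | set(target) | set(held_out))

lm.update(general)                     # analogue of general-domain pretraining
ppl_before = lm.perplexity(held_out)

lm.update(target)                      # analogue of target task LM fine-tuning
ppl_after = lm.perplexity(held_out)

print(f"held-out perplexity before: {ppl_before:.2f}, after: {ppl_after:.2f}")
```

In this toy setting the held-out target perplexity drops after the second update, because the model has now seen bigrams such as "the best" that never occur in the general text. The gradient-based fine-tuning described in this section adapts a neural LM for the same reason, but by a different mechanism.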

Discriminative fine-tuning As different layers capture different types of information (Yosinski et al., 2014), they should be fine-tuned to different extents. To this end, we propose a novel fine-