ISPRS Int. J. Geo-Inf. 2020, 9, 242 5 of 21

1

2

3

4

5

0

1

2

3

4

BN-ReLu

-Conv

BN-ReLu

-Conv

BN-ReLu

-Conv

BN-ReLu

-Conv

Transition Layer

Input

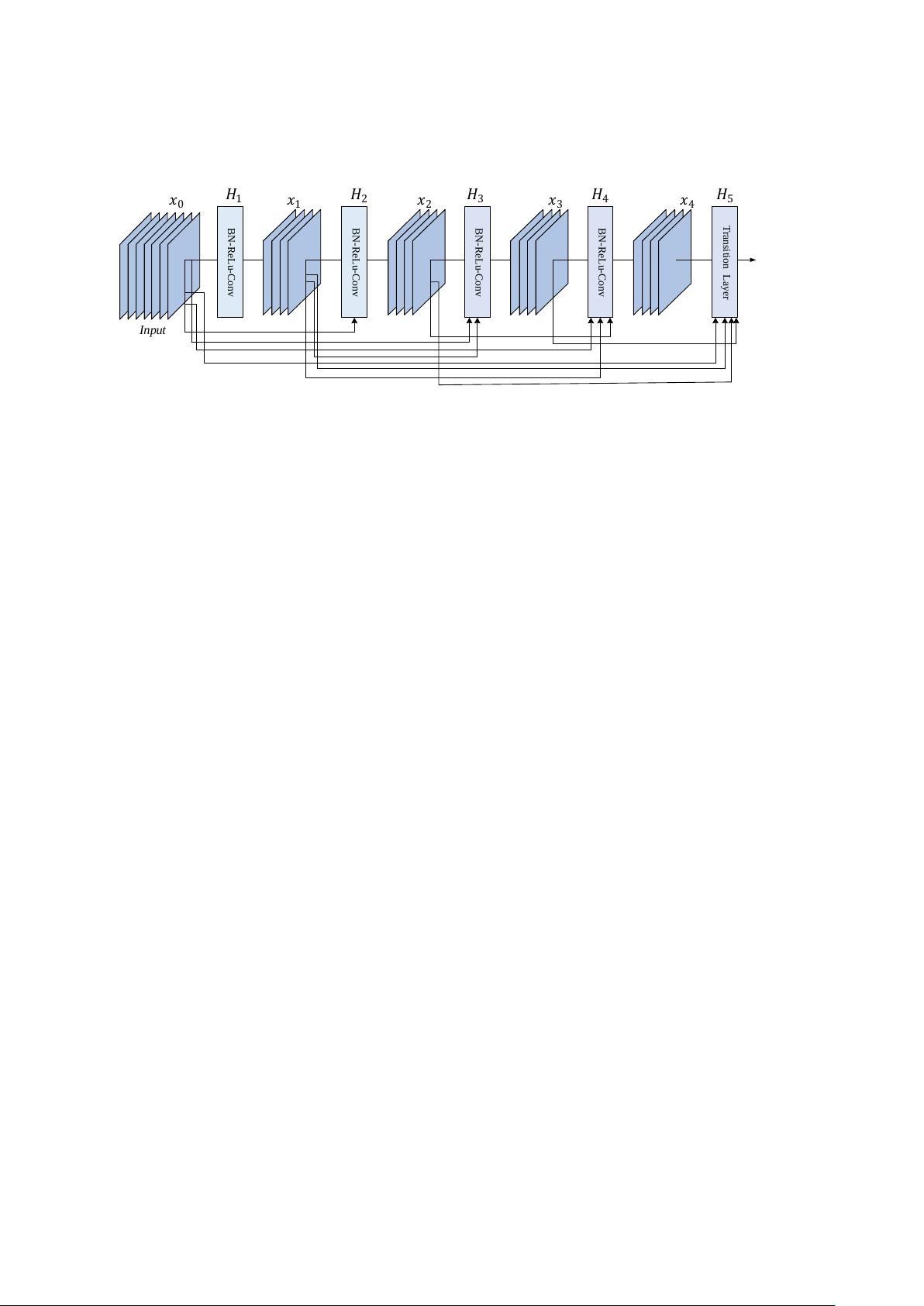

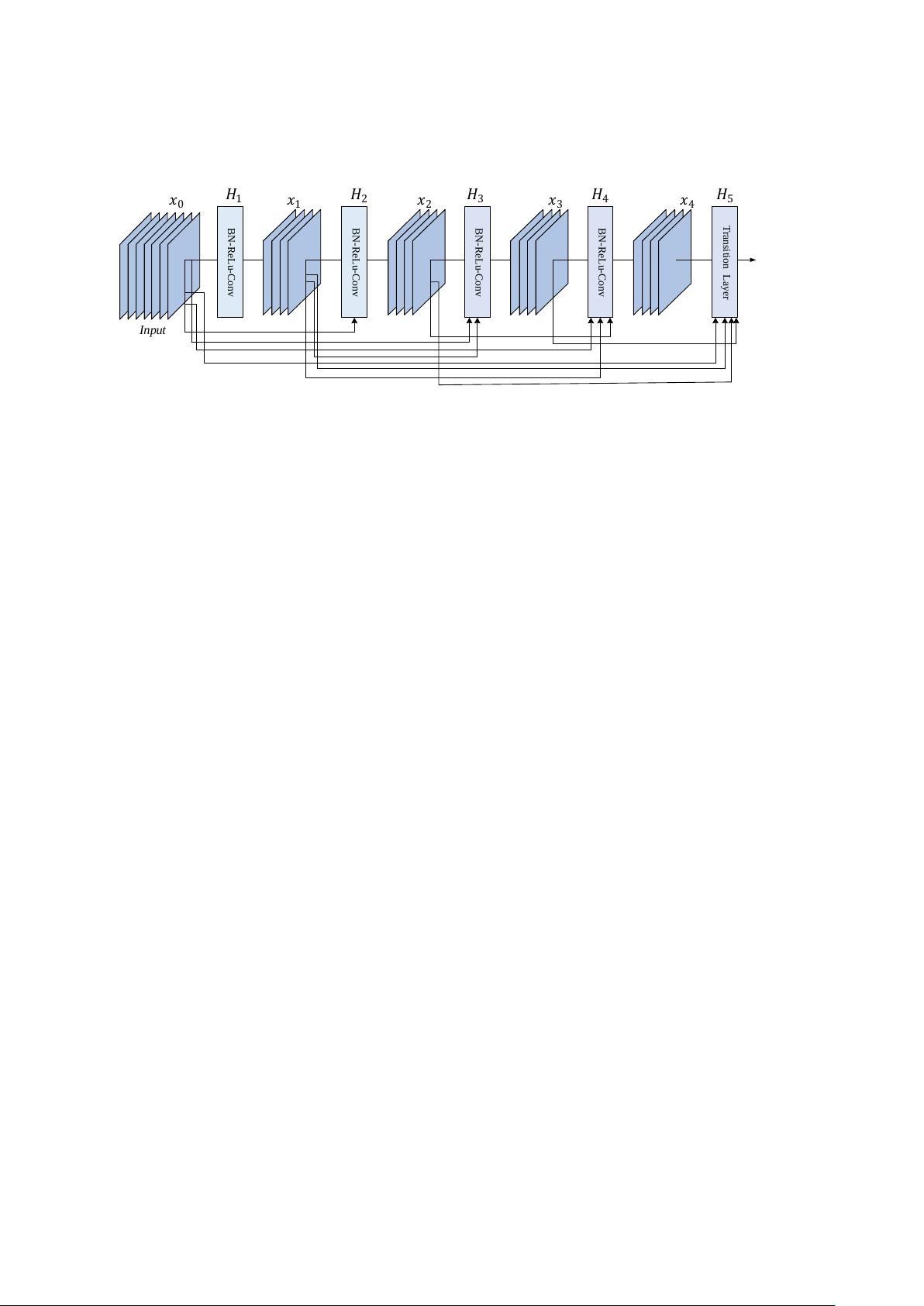

Figure 1.

A five-layer dense block with a growth rate of

k =

4. All preceding feature-maps are the

input data of each layer.

In a dense block, the feature map $x_l$ of the $l$th layer is computed from the feature maps $x_0, x_1, \ldots, x_{l-1}$ of all preceding layers as follows:

$$x_l = H_l\left([x_0, x_1, \ldots, x_{l-1}]\right) \tag{1}$$

where $[x_0, x_1, \ldots, x_{l-1}]$ denotes the concatenation (tensor stitching) of the feature maps produced by layers $0$ to $l-1$, which forms the input of the $l$th layer. $H_l(\cdot)$ is a composite function of three consecutive operations: BN, ReLU, and a $3 \times 3$ convolution. Each function $H_l(\cdot)$ outputs $k$ feature maps, so the $l$th layer receives $k_0 + k(l-1)$ input feature maps, where $k_0$ is the number of channels of the block's input layer. To control the width of the network and improve parameter efficiency, the growth rate $k$ is generally limited to a small integer. Restricting the growth rate in this way reduces the number of DenseNet parameters while maintaining its performance.
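To make Eq. (1) and the input count $k_0 + k(l-1)$ concrete, the following is a minimal pure-Python sketch, not the authors' implementation. It assumes a toy representation in which each feature map is reduced to one value per channel, so concatenation along the channel axis becomes list concatenation; `make_toy_layer`, `dense_block`, and `expected_input_channels` are hypothetical names, and the toy $H_l$ replaces BN-ReLU-Conv with a fixed weighted sum followed by ReLU.

```python
from typing import Callable, List, Tuple

# Toy representation (an assumption for illustration): each feature map is reduced to
# one scalar per channel, so concatenation along the channel axis is list concatenation.
FeatureMap = List[float]
Layer = Callable[[FeatureMap], FeatureMap]


def make_toy_layer(k: int, seed: int) -> Layer:
    """Hypothetical stand-in for H_l that maps any number of input channels to k channels.

    The real H_l is BN -> ReLU -> 3x3 convolution; this toy keeps only a fixed
    weighted sum (weights in {-1, 0, 1}) followed by ReLU.
    """
    def h(x: FeatureMap) -> FeatureMap:
        out = []
        for j in range(k):
            s = sum((((i + j + seed) % 3) - 1) * v for i, v in enumerate(x))
            out.append(max(0.0, s))  # ReLU
        return out
    return h


def dense_block(x0: FeatureMap, layers: List[Layer]) -> Tuple[List[FeatureMap], List[int]]:
    """Apply Eq. (1): x_l = H_l([x_0, ..., x_{l-1}]).

    Returns all feature maps [x_0, ..., x_L] and the number of channels fed to each layer.
    """
    features = [x0]
    input_widths = []
    for h in layers:
        stitched = [v for fm in features for v in fm]  # [x_0, x_1, ..., x_{l-1}]
        input_widths.append(len(stitched))
        features.append(h(stitched))
    return features, input_widths


def expected_input_channels(l: int, k0: int, k: int) -> int:
    """Closed form from the text: layer l (l >= 1) receives k_0 + k(l - 1) feature maps."""
    return k0 + k * (l - 1)


# Mirrors Figure 1: input x_0 followed by four layers with growth rate k = 4.
k = 4
x0 = [1.0, 0.5, -0.2]  # k_0 = 3 input channels (arbitrary example values)
maps, widths = dense_block(x0, [make_toy_layer(k, seed=l) for l in range(1, 5)])
print([len(m) for m in maps])  # [3, 4, 4, 4, 4]
print(widths)                  # [3, 7, 11, 15]
print([expected_input_channels(l, len(x0), k) for l in range(1, 5)])  # [3, 7, 11, 15]
```

Every layer emits only $k = 4$ maps, yet its input widens by $k$ at each step, which is why a small growth rate keeps the block narrow.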

In addition, although each layer outputs only $k$ feature maps, it receives a large number of input feature maps, $k_0 + k(l-1)$. To address this, a bottleneck layer is added to the DenseNet architecture: a $1 \times 1$ convolution is introduced before each $3 \times 3$ convolution to reduce the number of input feature maps. This architecture with bottleneck layers is called DenseNet-B. To further compact the model, a compression factor $\theta$ ($0 < \theta \le 1$) can be introduced in the transition layer to reduce the number of output feature maps: if a dense block outputs $m$ feature maps, the subsequent transition layer outputs $\lfloor \theta m \rfloor$ feature maps.
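The saving from the bottleneck can be checked with a rough weight count. The sketch below uses hypothetical helpers (`conv_weights`, `layer_weights`) that count only convolution weights, ignoring biases and BN parameters, and it assumes, following the original DenseNet-B design rather than anything stated in this paper, that the $1 \times 1$ convolution produces $4k$ feature maps.

```python
def conv_weights(c_in: int, c_out: int, kernel: int) -> int:
    """Weights of a kernel x kernel convolution, ignoring biases and BN parameters."""
    return kernel * kernel * c_in * c_out


def layer_weights(c_in: int, k: int, bottleneck: bool, width_factor: int = 4) -> int:
    """Convolution weights of one dense layer that produces k feature maps.

    bottleneck=False: BN-ReLU-Conv(3x3), as in plain DenseNet.
    bottleneck=True:  BN-ReLU-Conv(1x1, width_factor * k) then BN-ReLU-Conv(3x3), as in DenseNet-B.
    width_factor=4 is an assumption borrowed from the original DenseNet design.
    """
    if not bottleneck:
        return conv_weights(c_in, k, 3)
    mid = width_factor * k  # channels after the 1x1 reduction
    return conv_weights(c_in, mid, 1) + conv_weights(mid, k, 3)


k = 12  # example growth rate (assumed)
for c_in in (16, 256):  # example input widths k_0 + k(l - 1) (assumed)
    plain = layer_weights(c_in, k, bottleneck=False)
    bneck = layer_weights(c_in, k, bottleneck=True)
    print(c_in, plain, bneck)
# 16 1728 5952
# 256 27648 17472
```

Under this assumption the bottleneck pays off only when $9 C_{in} k > 4k C_{in} + 36k^2$, that is $C_{in} > 7.2k$; because $C_{in} = k_0 + k(l-1)$ grows with depth, the saving appears in the deeper layers of a block.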

$\theta = 1$ indicates that the number of feature maps passing through the transition layer remains unchanged. The network architecture containing the compression factor ($\theta < 1$) is called DenseNet-C, and the architecture with both the bottleneck layer and the compression factor is called DenseNet-BC. By using the bottleneck layer and the compression factor, DenseNet-BC narrows the network and reduces the number of parameters, which effectively suppresses over-fitting. Moreover, the experimental results show that DenseNet-BC yields a better fused image than DenseNet.
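The effect of the compression factor on the channel count between dense blocks can be sketched as follows. The block layout (three blocks of 16 layers, $k_0 = 24$, $k = 12$) is an assumed example configuration, not the one used in this paper, and `transition_channels` and `channel_flow` are hypothetical helpers that track only channel counts.

```python
import math
from typing import List


def transition_channels(m: int, theta: float) -> int:
    """Feature maps leaving a transition layer: floor(theta * m), with 0 < theta <= 1."""
    if not 0 < theta <= 1:
        raise ValueError("theta must satisfy 0 < theta <= 1")
    return math.floor(theta * m)


def channel_flow(k0: int, k: int, layers_per_block: List[int], theta: float) -> List[int]:
    """Channels entering each dense block, followed by the channels leaving the last one.

    Each block adds k maps per layer; every block except the last is followed by a
    transition layer that compresses its output by theta.
    """
    widths = [k0]
    c = k0
    for i, n in enumerate(layers_per_block):
        c = c + k * n  # dense block output: block input plus k maps per layer
        if i < len(layers_per_block) - 1:
            c = transition_channels(c, theta)  # transition layer between blocks
        widths.append(c)
    return widths


for theta in (1.0, 0.5):
    print(theta, channel_flow(24, 12, [16, 16, 16], theta))
# 1.0 [24, 216, 408, 600]
# 0.5 [24, 108, 150, 342]
```

With $\theta = 1$ the transition layers pass every map through unchanged; with $\theta = 0.5$ each transition halves the width, which is the narrowing that DenseNet-BC relies on.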

3. Methodology

Some studies have shown that deeper CNN architectures can extract more feature information, but training becomes increasingly difficult as the network deepens. Given the particular requirements of pan-sharpening, more feature information must be extracted to preserve spectral information while enhancing spatial resolution. Therefore, this paper proposes a new pan-sharpening method that exploits the advantages of DenseNet: mitigating vanishing gradients, strengthening feature propagation, and encouraging feature reuse. In this way, the fused image can retain the spectral information of the original images and enhance its spatial
