An Overview of Transformer Models: The Pretraining Techniques Behind Models from BERT to ChatGPT

Updated 2024-06-25

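"This PDF paper offers a comprehensive catalog of and introduction to pretrained Transformer models, from the basic Transformer architecture to its many variants. The author, Xavier Amatriain, surveys the Transformer models that have appeared in recent years, covering their names, characteristics, uses, and innovations. The paper discusses core components of the Transformer, such as the attention mechanism, and explains why these models are so widely used across fields. It also introduces concepts such as reinforcement learning from human feedback (RLHF) and diffusion models, and provides a detailed catalog of Transformer models with their pretraining architectures, tasks, and applications. The catalog includes descriptions and a timeline of well-known models such as ALBERT, AlphaFold, Anthropic Assistant, BART, BERT, and BigBird."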

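In deep learning, the Transformer has become the dominant choice for natural language processing (NLP) and other sequence modeling tasks. Introduced by Vaswani et al. in 2017, it displaced the RNN- and CNN-based models that preceded it by combining self-attention with an encoder-decoder structure. Because it processes all positions of an input sequence in parallel, it computes more efficiently, and it performs especially well in large-scale pretraining.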

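1. **Encoder/Decoder architecture**: The original Transformer consists of an encoder and a decoder. The encoder builds a representation of the input sequence, and the decoder generates the output sequence. This design suits sequence-to-sequence tasks such as machine translation.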

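2. **Attention mechanism**: The core of the Transformer is multi-head self-attention, which lets the model relate every position in a sequence to every other position dynamically and so capture long-range dependencies.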

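3. **Applications and reasons for popularity**: Beyond its breakthroughs in NLP, the Transformer is widely used in computer vision, speech recognition, and other fields. Its parallelism, scalability, and strong performance are the main reasons for its popularity.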

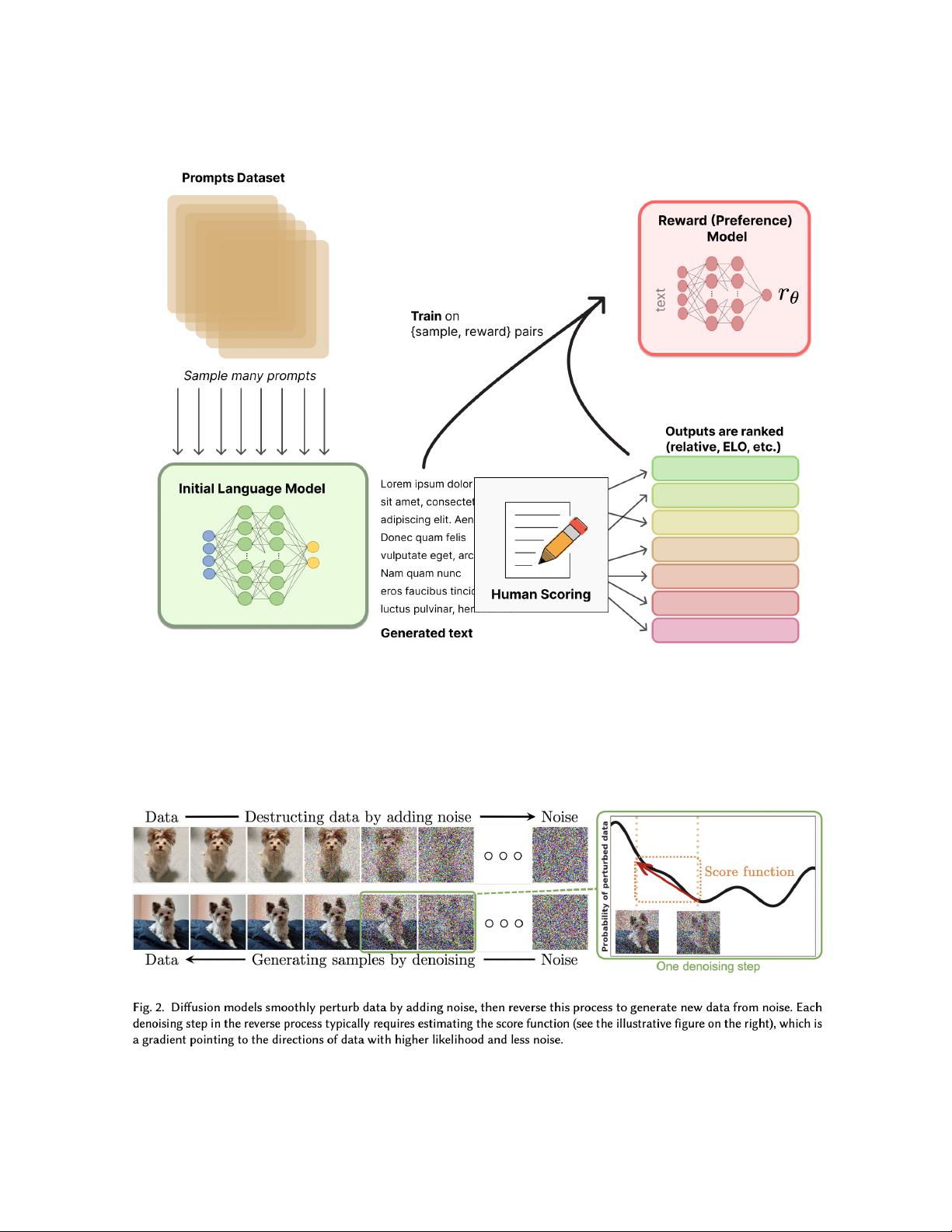

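4. **RLHF (Reinforcement Learning from Human Feedback)**: A method that combines reinforcement learning with human feedback to optimize a model's behavior so that it better matches human expectations.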

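5. **Diffusion models**: Diffusion models generate samples through a step-by-step "denoising" process. They offer a new approach to generation, best known for high-quality image synthesis and also explored for sequence generation.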
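The self-attention described in item 2 can be illustrated with a minimal sketch of a single attention head, written in plain Python so it runs without dependencies. It is not code from the paper: the function names (`softmax`, `matmul`, `self_attention`) and the toy identity projection matrices are assumptions made for illustration. A multi-head layer would run several such heads with different projections and concatenate their outputs.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    # Plain-Python matrix product of two lists of rows.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def self_attention(x, w_q, w_k, w_v):
    # Project the inputs into queries, keys, and values.
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]
    # Scaled dot-product scores between every pair of positions.
    scores = matmul(q, k_t)
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    # Each output row is a weighted average of the value vectors.
    return matmul(weights, v), weights

# Toy input: three positions with two features each (hypothetical values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
output, weights = self_attention(x, identity, identity, identity)
```

Every row of `weights` sums to 1, and each position attends to all positions at once, which is what allows the computation to run in parallel across the sequence.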

The catalog section of the paper lists many Transformer variants, for example:

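- **ALBERT**: A lightweight BERT that reduces model size through factorized embedding parameterization and cross-layer parameter sharing while keeping performance high.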

- **AlphaFold**: A protein structure prediction model developed by DeepMind, which applies a Transformer-based architecture to a long-standing problem in biology.

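- **Anthropic Assistant**: Likely refers to Anthropic's AI assistant models, pretrained language models aligned with human values with an emphasis on ethics and safety.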

- **BART**: A Transformer-based sequence-to-sequence model for text generation tasks, pretrained by corrupting text and learning to reconstruct it.

- **BERT**: Bidirectional Encoder Representations from Transformers, a bidirectional pretrained model that popularized the pretrain-then-fine-tune paradigm.

- **BigBird**: A long-sequence Transformer developed by Google that handles long texts through a sparse attention mechanism.
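To make the idea of sparse attention in the BigBird entry more concrete, here is a simplified sketch of a sparse attention mask that combines a sliding local window with a few global tokens. It is an illustration under stated assumptions, not BigBird's actual implementation: the function name `sparse_attention_mask` and its parameters are hypothetical, and the random attention connections that BigBird also uses are omitted to keep the example deterministic.

```python
def sparse_attention_mask(seq_len, window=1, global_tokens=(0,)):
    # mask[i][j] is True when position i is allowed to attend to position j.
    mask = [[False] * seq_len for _ in range(seq_len)]
    for i in range(seq_len):
        for j in range(seq_len):
            # Local attention: only neighbors within the sliding window.
            local = abs(i - j) <= window
            # Global attention: global tokens see, and are seen by, everyone.
            is_global = i in global_tokens or j in global_tokens
            mask[i][j] = local or is_global
    return mask

# Hypothetical toy setting: 8 positions, window of 1, first token global.
mask = sparse_attention_mask(8, window=1, global_tokens=(0,))
allowed = sum(sum(row) for row in mask)
total = len(mask) ** 2
print(f"{allowed} of {total} position pairs are attended")
```

With a fixed window and a fixed number of global tokens, the count of allowed pairs grows roughly linearly with sequence length rather than quadratically, which is what makes long inputs tractable.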

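The paper is valuable for understanding how the Transformer model family has developed and where it is applied, and it serves as a useful reference for researchers and practitioners.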
