IEEE TRANSACTIONS ON PARALLEL DISTRIBUTED COMPUTING 3

servers in World Wide Web. Their observation was that

the web servers, which are responsible for handling access

requests from several clients, can make predictions on

which files are most likely to be demanded in the

near future [32]. I. Zhang [29] has implemented several types

of prefetching schemes to improve the performance of

reading files and directories in WheelFS, which is a FUSE-

based distributed file system that aims to offer flexible

wide-area storage for distributed applications [30]. Re-

cently, Y. Yin et al. have proposed the IOSIG tool based

on their previous work [23], which can keep track of

parallel I/O calls of an application and then analyze the

collected information to provide a clear understanding

of I/O behavior of the application on the client machine

[17]. As a consequence, the client file systems can issue

prefetching requests or layout adjustment requests to the

storage servers for I/O optimization after certain access

predictions. However, tracing and analyzing I/O calls on

the client node incurs extra space and time overhead;

thus the IOSIG tool may not be a good choice for

configuration-limited client machines to conduct I/O

optimization operations.

Furthermore, C. Amza’s group [36] and X. Zhang’s

group [37] are the pioneers of storage server-side

prefetching in network-based file systems. Both groups

proposed their prefetching schemes running on storage

servers, and their evaluation verified the effectiveness of

server-side prefetching. These schemes, however, either

require modifications to the applications or work only

for a very limited number of block access

patterns. In brief, although block access history reveals

the behavior of disk traces, there are no general stor-

age server-side prefetching schemes that analyze block

access history in a distributed file system for yielding

better system performance. The well-known reasons for

this are the difficulties in modeling block access history

to generate block access patterns, as well as the challenges

in deciding the destination client file system for pushing

the prefetched data from storage servers.

3 DATA PREFETCHING ON STORAGE SERVERS

In general, the sequence of block access on the stor-

age server is ordered in time, so that the block access

sequence can be split into successive parts by a con-

stant time interval, meaning that the sequence resembles

a typical time series [13], [34]. This is a crucial fact for

understanding the proposal of this paper, which is a

server-side data prefetching mechanism that considers

block access history on the storage servers as a time

series, and then tries to classify various block access

patterns from the series. Consequently, it predicts the

future block access requests by matching the fixed access

patterns with current block access events in the predic-

tion window to guide reading block data in advance, and

finally the fetched data will be pushed to the relevant

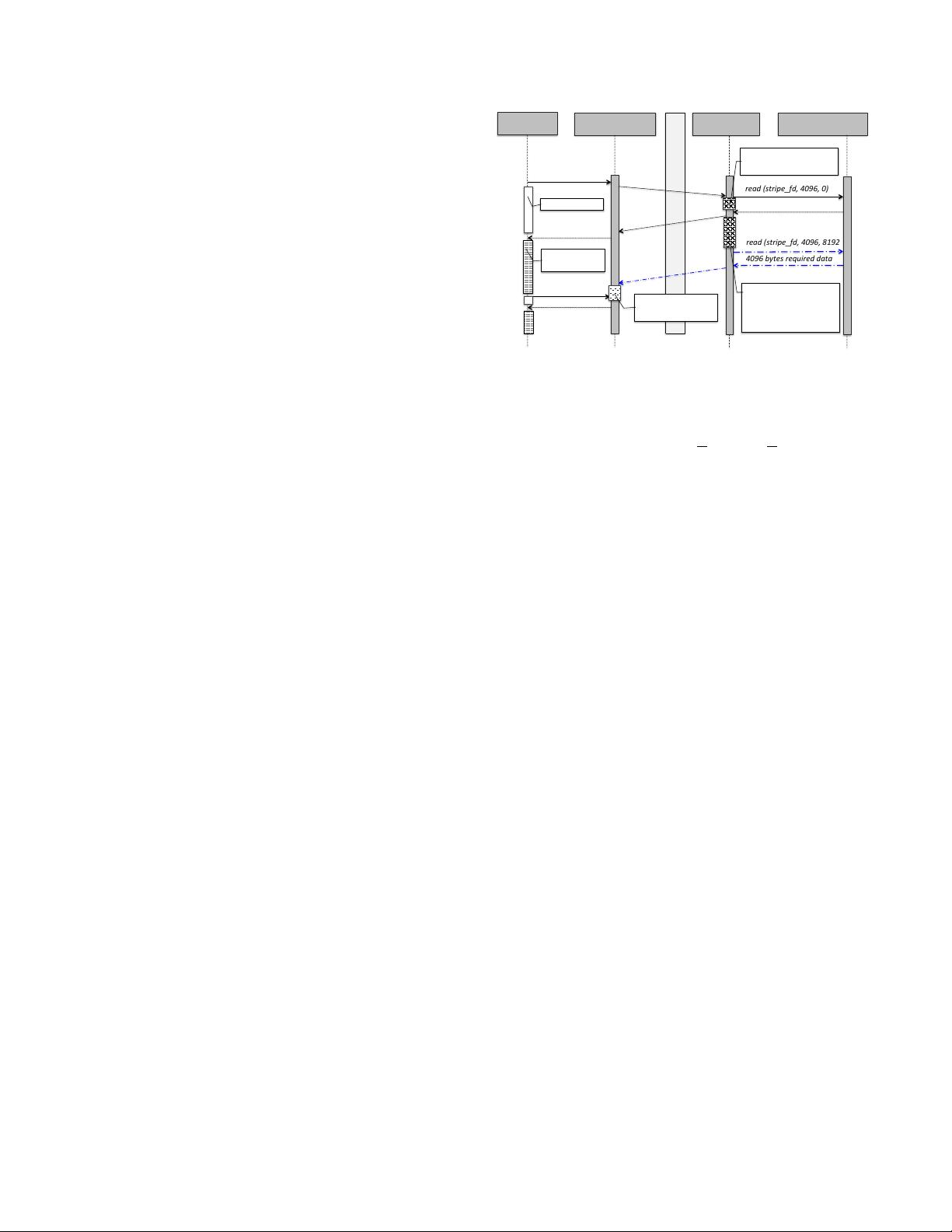

Client'file'system Storage'server Low'level'file'system

Network

Applica7on

read%(fd,%4096,%0)

read%(stripe_fd,%4096,%0)%

%%+%piggybacked%info.

read%(stripe_fd,%4096,%0)

4096%bytes%required%data

4096%bytes%required%data

required%data

read%(stripe_fd,%4096,%8192)

4096%bytes%required%data

4096%bytes%prefetched%data

read%(fd,%4096,%8192)

required%data

(

Forecas-ng(I/O(access(to(

prefetch(block(data(a9er(

analyzing(access(history(

&(piggybacked/info.

(

Caching(&(managing(

prefetched(data

Compu7ng'with'

input'data

Read'latency

.

Logging(access(event(

with(piggybacked/info.

①'

②'

③'

④'

⑤'

Fig. 1. Overview of the proposed server-side data

prefetching mechanism. The assumed synopsis of a read

operation is read(int fildes, size t size, off t off)

client file systems to fulfill potential I/O requests on the

client side.
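To make the time-series view concrete, the following minimal sketch splits a block access sequence into successive parts by a constant time interval. It is an illustration only, not the implementation used in this paper: the function name `split_by_interval`, the `(timestamp, block_offset)` event layout, and the sample trace are assumptions introduced here.

```python
def split_by_interval(events, interval):
    """Group (timestamp, block_offset) events into successive windows.

    Window i holds the offsets of all events whose timestamp t satisfies
    start + i * interval <= t < start + (i + 1) * interval, where start is
    the earliest timestamp. Empty windows are kept so that a window's
    position in the result still corresponds to elapsed time.
    """
    if not events:
        return []
    events = sorted(events)  # order by timestamp
    start = events[0][0]
    n_windows = int((events[-1][0] - start) // interval) + 1
    windows = [[] for _ in range(n_windows)]
    for timestamp, block_offset in events:
        windows[int((timestamp - start) // interval)].append(block_offset)
    return windows


# Hypothetical trace: (seconds, byte offset of the accessed block).
trace = [(0.0, 0), (0.4, 8192), (1.1, 16384), (3.2, 24576)]
print(split_by_interval(trace, interval=1.0))
# prints [[0, 8192], [16384], [], [24576]]
```

Each inner list is one element of the resulting series; pattern classification and matching in the prediction window would then operate on such a sequence.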

Figure 1 presents the basic idea of our proposed

server-side prefetching mechanism, where the interac-

tion between the client file system and storage server

can be described through the following steps:

1) After contacting the metadata server to know

which storage server needs to be accessed for the

actual data request, the client file system sends

the corresponding storage server an I/O request

accompanied by some additional information

about the application and the client file system

(labeled as piggybacked info.).

2) The storage server fetches the requested data from

the low-level file system and records the block

access event along with the piggybacked information.

3) The application on the client machine can perform

computing tasks after receiving the required data

from the storage server.

4) Meanwhile, the storage server is able to forecast

future block I/O access requests by analyzing the

history of block I/O access events and the piggy-

backed client information. Therefore, the storage

server can issue relevant physical read requests to

the low-level file system to read data (that are

predicted to be accessed by the future I/O requests)

in advance.

5) Finally, the prefetched data are forwarded to the

corresponding client file system (determined by

the piggybacked client identification) proactively.

As a result, when the prediction of I/O access

is successful, the buffered data on the client file

system can be returned to the application instantly,

and then read latency can be reduced to a great

extent.
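The steps above can be sketched as a toy storage server, given here only to illustrate the control flow. Everything in it is an assumption made for illustration: the `Request` fields, the `StorageServer` class, and in particular the constant-stride predictor, which is a simple stand-in and not the prediction method proposed in this paper.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    client_id: str  # piggybacked client identification (hypothetical field)
    stripe_fd: int
    size: int
    offset: int


@dataclass
class StorageServer:
    # (client_id, stripe_fd) -> offsets accessed so far, in arrival order
    history: dict = field(default_factory=dict)
    # (client_id, stripe_fd, offset, size) tuples pushed proactively
    pushed: list = field(default_factory=list)

    def read(self, req):
        # Step 2: log the access event with its piggybacked info, fetch data.
        key = (req.client_id, req.stripe_fd)
        self.history.setdefault(key, []).append(req.offset)
        data = self._low_level_read(req.stripe_fd, req.size, req.offset)
        # Step 4: forecast the next access from the logged history.
        predicted = self._predict_next(self.history[key])
        if predicted is not None:
            # Step 5: push prefetched data to the client named in the request.
            self._low_level_read(req.stripe_fd, req.size, predicted)
            self.pushed.append((req.client_id, req.stripe_fd, predicted, req.size))
        return data

    @staticmethod
    def _predict_next(offsets):
        # Stand-in predictor: extrapolate only if the last two strides match.
        if len(offsets) < 3:
            return None
        stride = offsets[-1] - offsets[-2]
        if stride != 0 and stride == offsets[-2] - offsets[-3]:
            return offsets[-1] + stride
        return None

    @staticmethod
    def _low_level_read(fd, size, offset):
        return bytes(size)  # placeholder for a physical read


server = StorageServer()
for off in (0, 4096, 8192):
    server.read(Request("client-A", 3, 4096, off))
print(server.pushed)  # prints [('client-A', 3, 12288, 4096)]
```

Keying the history by the piggybacked client identification is what lets the server decide which client file system should receive the prefetched data.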

In brief, the storage server can predict the future block