A memory Mapping Approach for Parallel Interleaver design with multiples read and write accesses

C. Chavet, P. Coussy

LabSTICC Lab., Université de Bretagne Sud

Abstract-For high throughput applications, turbo-like iterative

decoders are implemented with parallel architectures. However,

to be efficient parallel architectures require to avoid collision

accesses i.e. concurrent read/write accesses should not target

the same memory block. This consideration applies to the two

main classes of turbo-like codes which are Low Density Parity

Check (LDPC) and Turbo-Codes. In this paper we propose a

methodology which always finds a collision-free mapping of

the variables in the memory banks and which optimizes the

resulting interleaving architecture. Finally, we show through a

pedagogical example the interest our approach. This research

was supported by the European project DAVINCI.

Index Terms - Parallel architecture, interleavers, turbo-like

codes, memory mapping.

1. INTRODUCTION

In the multimedia and telecommunications domain, continuously

emerging customer services require severe performance to

implement the new communication standards. Indeed,

communication systems require high throughput -on the order of

several hundred Mb/s- accompanied by both low latency and

severe bit error rate BER constraints (e.g. wireless, fiber-optic

communication…). Owing to their impressive near-Shannon-limit

error correcting performance, turbo-like codes in their parallel or

serially concatenated versions [3], originally dedicated to channel

coding, or LDPC codes [5], are being currently reused in most of

digital communication systems (e.g. equalization, demodulation,

synchronization, MIMO…).

These coders are formed by two or more processing elements PE

(encoders/decoders) and one communication network composed of

steering components (multiplexers, butterflies, barrel shifters…) and

memory elements (registers, RAMs…). This network interleaves the

data blocks exchanged by the PEs according to a predefined rule

named interleaving law or permutation law. The turbo-like

decoding principle is based on an iterative algorithm using

decoders exchanging information in order to improve the error

correction performance through the iterations. The iterative nature

of these algorithms is a severe constraint to satisfy the

aforementioned requirements with an affordable implementation

complexity. A widespread solution is to realize the decoder in a

parallel fashion. On the one hand, this solution increases the

throughput since the latency of the system becomes the latency of

constituent sub-blocks [3]. On the other hand, the complexity and

the cost of the system are increased due to parallel nature of the

architecture.

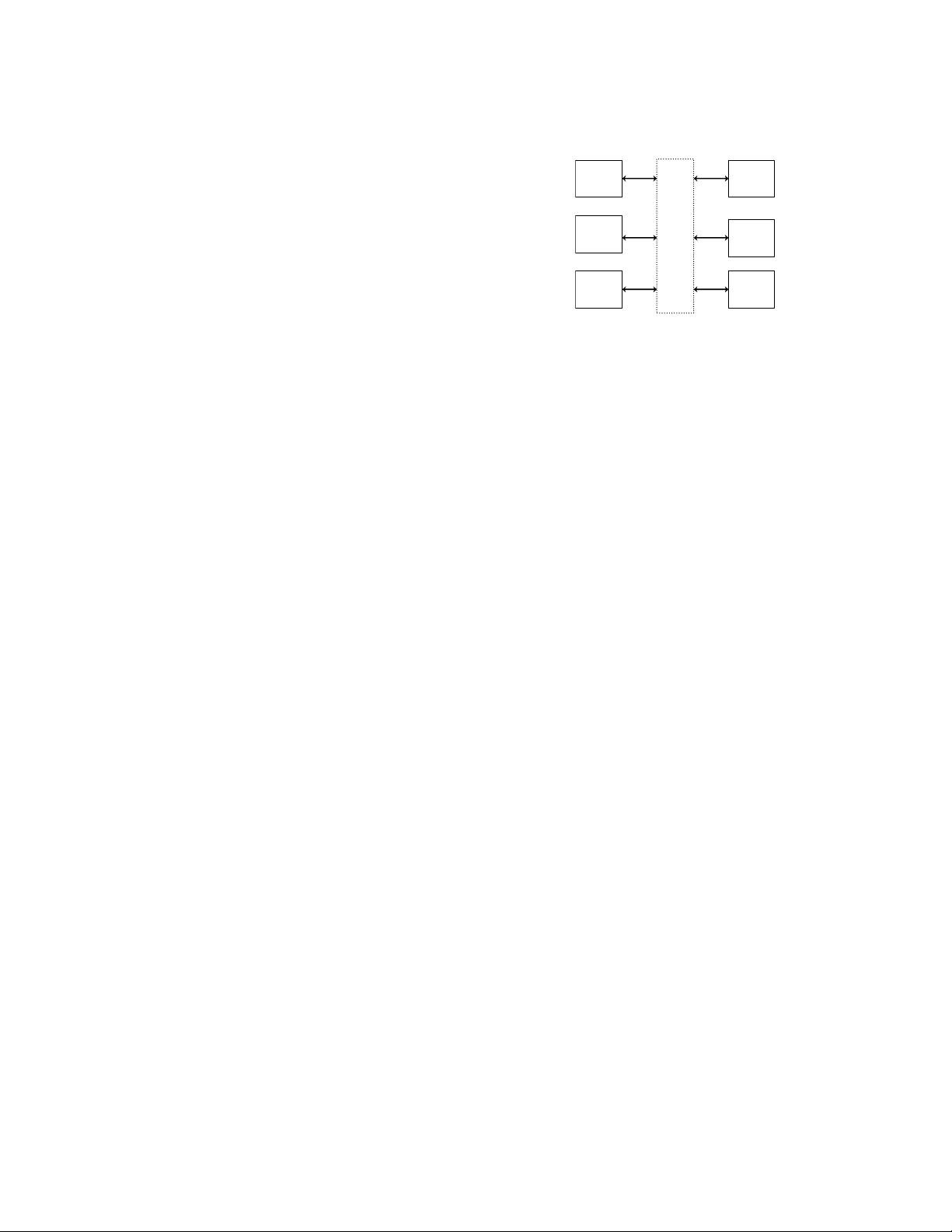

By the way, depending on the interleaving law, different parallel

processing elements may try to simultaneously access the same

memory block (cf. Fig.

1

). This problem is known as the “collision”

problem [11]. In this case, three classes of solution are available:

The designer may

- define his own dedicated interleaving law in order to avoid such

collision problems, but the resulting architecture may not be

standard compliant.

- add extra memory elements and control logic in the communication

network in order to buffer and postpone the conflicting data.

- find a memory mapping avoiding any conflict access and taking into

account the cost of the architecture (i.e. interconnection network).

The paper is organized as follows: the second section presents the

existing solutions to design parallel interleaver architectures.

Mem 0

Mem 1

Mem 2

PE 0

PE 1

PE 2

Interconnection network

Fig. 1 Memory collision problem

The third section is dedicated to the problem formulation of the

interleaver design. In the fourth section we present the proposed

approach to automatically find a memory mapping solution that

avoids any conflict access. Finally, the last section presents

experimental results on a pedagogical example.

2. RELATED WORKS

An interleaving law is a permutation law, also referred as π, that

scrambles data to break up neighbourhood-relations [11]. It is a

key factor for turbo-codes performances, which varies from one

communication standard to another. Moreover for a given

standard, different interleaving rules can be used for different

modes through varying frame lengths and/or data rates [7]. In this

context, taking into account the aforementioned constraints and the

collision problems to design hardware implementations of parallel

turbo decoders require the integration of complex interconnection

network topology (cf. Fig.

1

) supporting the intensive interleaved

memory accesses. Indeed, in state-of-the-art parallel turbo-

decoding, interleaving is considered as a limiting factor for the

overall system performance and the architectural cost. To

successfully tackle these problems, different solutions exist.

Multiple solutions have been proposed in classical Single-Read /

Single-Write approaches. A first solution to get rid of collisions

with non prunable interleavers, consists in designing a specific

interleaver rule. In [11], the authors propose a deterministic

methodology to design collision-free interleavers. In [12] and [8]

the authors define collision-free permutations thanks to a

combination of a spatial and a temporal permutation. The authors

of [14] simply integrate the collision-free constraint in the design

of their interleaver. However, the multi-modes architectures

(depending on the frame length, the data-rate…) cannot be handled

by such approaches. Another solution consists in defining a

collision-free interleaver that preserves this property even when

pruned. In [7], the authors describe a design rule to obtain such

interleavers, with an incremental algorithm that generates

collision-free interleavers by adding new elements in successive

steps, to a small initial permutation. Of course, all these solutions

are viable if and only if the designer is free to choose the

permutation law to be used in the system. As a consequence, the

resulting architecture may not be standard compliant.

A second approach consists in adding extra memory elements in

the communication network. The aim is to buffer and to postpone

the conflicting data. In [19] the authors propose, when a collision

appears, to store the conflicting information in the communication

network until the targeted sub-block can process it. Of course, the

additional network buffering resources, and consequently the time

needed to interleave information, increase with the number of

parallel processors. This is a suboptimal strategy, in terms of

latency and thus throughput, which avoids collisions at the

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功