LADDER: A Human-Level Bidding Agent for Large-Scale Real-Time Online Auctions

Yu Wang, Jiayi Liu, Yuxiang Liu, Jun Hao, Yang He, Jinghe Hu, Weipeng Yan, Mantian Li

Business Growth Division, JD.com

Beijing, China

{wangyu5, liujiayi5, liuyuxiang1, haojun, landy, hujinghe, paul.yan, limantian}@jd.com

Abstract

We present LADDER, the first deep reinforcement learning agent that can successfully learn control policies for large-scale real-world problems directly from raw inputs composed of high-level semantic information. The agent is based on an asynchronous stochastic variant of DQN (Deep Q-Network) named DASQN. Its inputs are plain-text descriptions of the states of a game of incomplete information, i.e., large-scale real-time online auctions, and its rewards are auction profits at very large scale. We apply the agent to an essential portion of JD’s online RTB (real-time bidding) advertising business and find that it easily beats the former state-of-the-art bidding policy, which had been carefully engineered and calibrated by human experts: during JD.com’s June 18th anniversary sale, the agent increased the company’s ad revenue from this portion by more than 50%, while the advertisers’ ROI (return on investment) also improved significantly.

Introduction

Researchers have recently made great progress in learning to control agents directly from raw, high-dimensional sensory inputs such as vision, in domains like Atari 2600 games (Mnih et al. 2015), where reinforcement learning (RL) agents achieve human-level performance. However, most real-world problems present high-level semantic information rather than sensory inputs; human experts typically read and understand such inputs in plain-text form and then act on their expert judgment. Real-world problems are much more challenging than video games because they typically have a larger solution space and their states can only be partially observed. Such real-world problems had not been tackled by any state-of-the-art RL agent until now.

This paper presents LADDER, an agent for one such problem. Using a deep asynchronous stochastic Q-network (DASQN), the agent improves the performance of JD’s real-time bidding (RTB) advertising business.
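DASQN is described here only as an asynchronous stochastic variant of DQN; its specific modifications are not given in this section. For reference, the sketch below shows just the standard one-step target that DQN (Mnih et al. 2015) trains its Q-network toward, y = r + γ · max_a' Q_target(s', a'), with plain Python lists standing in for neural-network outputs. The discount factor and the toy Q-values are hypothetical, and this is not the authors' DASQN update.

```python
from typing import List, Sequence


def dqn_targets(
    rewards: Sequence[float],
    next_q_values: Sequence[Sequence[float]],
    dones: Sequence[bool],
    gamma: float = 0.99,
) -> List[float]:
    """One-step DQN regression targets y_i = r_i + gamma * max_a' Q_target(s'_i, a').

    rewards[i]:       reward observed after the action taken in state s_i
    next_q_values[i]: target-network Q-values for every action in s'_i
    dones[i]:         True if s'_i is terminal, in which case there is no bootstrapping
    gamma:            discount factor (hypothetical default)
    """
    targets = []
    for r, q_next, done in zip(rewards, next_q_values, dones):
        # Terminal transitions use the reward alone; otherwise bootstrap
        # from the greedy action value of the next state.
        bootstrap = 0.0 if done else gamma * max(q_next)
        targets.append(r + bootstrap)
    return targets


def td_loss(q_taken: Sequence[float], targets: Sequence[float]) -> float:
    """Mean squared error between the online network's Q(s_i, a_i) and the targets."""
    return sum((q - y) ** 2 for q, y in zip(q_taken, targets)) / len(targets)


if __name__ == "__main__":
    # Two toy transitions; the second one ends the episode.
    ys = dqn_targets(rewards=[1.0, 2.0],
                     next_q_values=[[0.5, 3.0], [10.0, 4.0]],
                     dones=[False, True],
                     gamma=0.5)
    print(ys)                        # [2.5, 2.0]
    print(td_loss([3.0, 2.5], ys))   # 0.25
```

In a full agent the targets come from a separate, periodically updated target network and the loss is minimized by gradient descent on the online network's parameters; both are omitted here to keep the target computation itself visible.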

RTB is the most promising field in online advertising

which greatly promotes the effectiveness of the industry

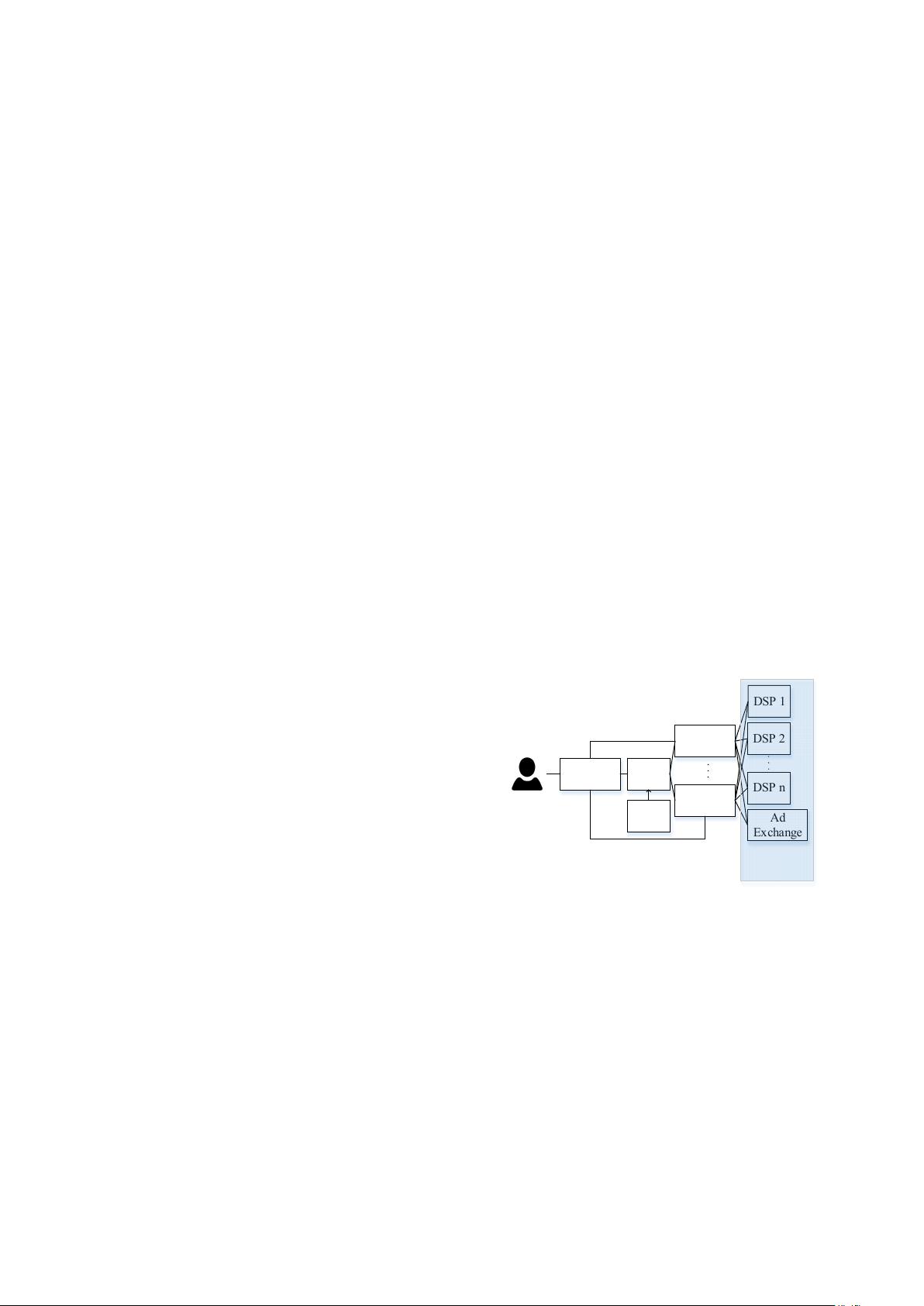

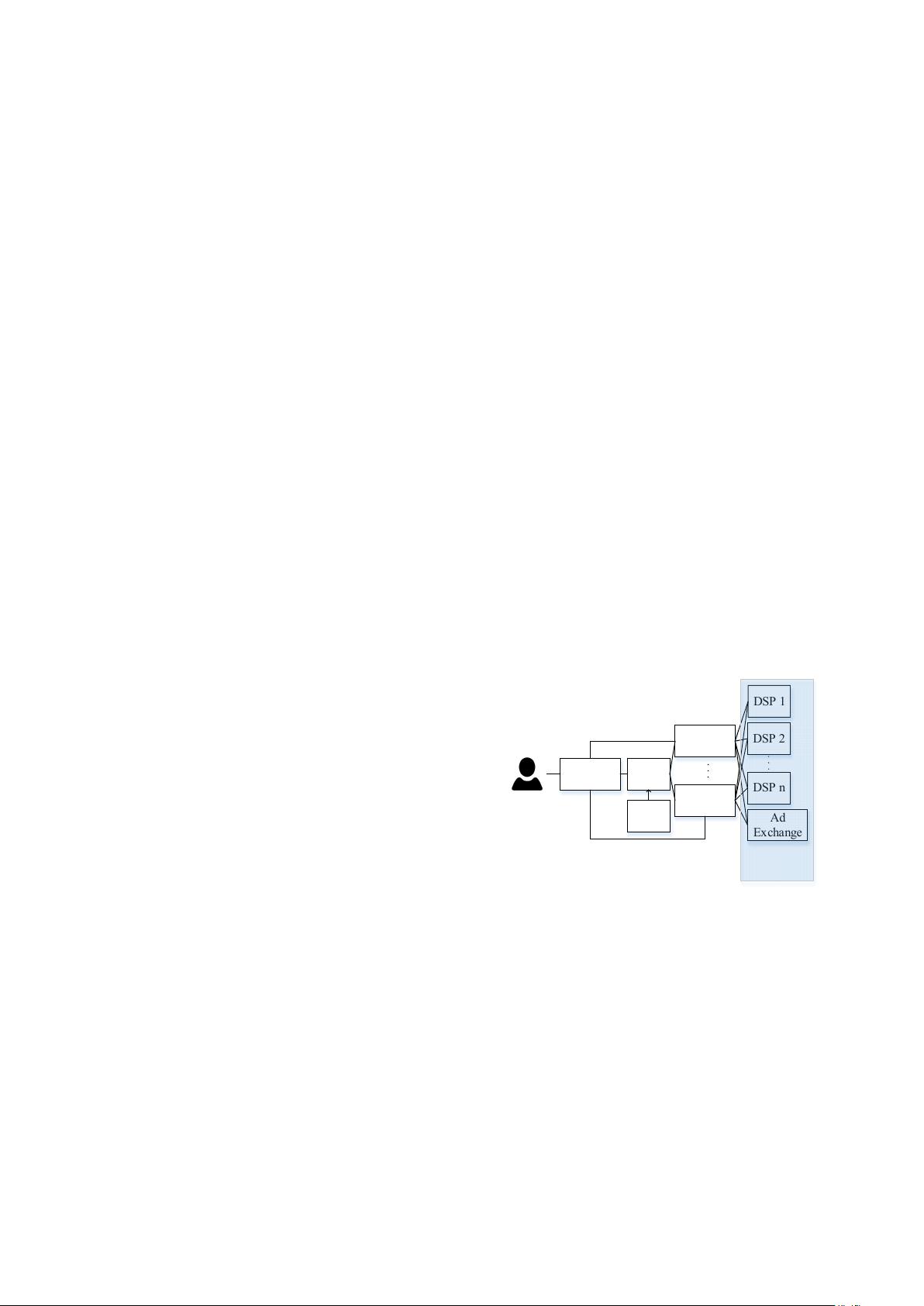

(Yuan, Wang, and Zhao 2013). A typical RTB environ-

ment (Figure 1) consists of ad exchanges (ADXs), supply

side platforms (SSPs), data management platforms (DMPs)

and demand side platforms (DSPs). ADXs and DSPs uti-

lize algorithms to buy/sell ads in real-time. SSPs integrate

information of publishers (i.e. online media) and offer ads

requests of the publishers to ADXs. An ADX puts the of-

fers out to DSPs for bidding. DSPs target appropriate ads

to the involved user based on information supplied by

DMPs and return the ads with their bids to the ADX which

displays ads of the highest bidder and charges the winner

DSP with general second price (Varian 2007).

Figure 1: A typical RTB auction environment (publisher, SSP, DMP, multiple ad exchanges, and DSP 1 to DSP n in intense competition)
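The ADX charging rule described before Figure 1 can be illustrated with a single-slot sketch: the ADX collects one bid per DSP, displays the highest bidder's ad, and charges the winner the second-highest bid. With a single ad slot and no quality weighting, GSP reduces to this second-price rule. The DSP names, the optional reserve price, and tie-breaking by DSP name are hypothetical choices for illustration; production exchanges apply their own rules, which are not modeled here.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class AuctionResult:
    winner: Optional[str]  # DSP whose ad is displayed, or None if no valid bid
    price: float           # amount charged to the winner (0.0 if there is no winner)


def run_second_price_auction(bids: Dict[str, float], reserve: float = 0.0) -> AuctionResult:
    """Single-slot auction as a hypothetical ADX might run it.

    bids:    mapping from DSP name to its bid for this ad request
    reserve: hypothetical minimum price; bids below it are discarded
    """
    valid = {dsp: b for dsp, b in bids.items() if b >= reserve}
    if not valid:
        return AuctionResult(winner=None, price=0.0)
    # Rank by bid, highest first; ties are broken by DSP name for determinism.
    ranked = sorted(valid.items(), key=lambda kv: (-kv[1], kv[0]))
    winner = ranked[0][0]
    # The winner pays the runner-up's bid, or the reserve if it bid alone.
    price = ranked[1][1] if len(ranked) > 1 else reserve
    return AuctionResult(winner=winner, price=price)


if __name__ == "__main__":
    print(run_second_price_auction({"DSP 1": 2.4, "DSP 2": 3.1, "DSP 3": 1.7}))
    # AuctionResult(winner='DSP 2', price=2.4)
```

Because the price is set by the runner-up rather than by the winner's own bid, each DSP's cost depends on competitors' bids that it cannot observe in advance.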

Clearly, the process of many DSPs/ADXs bidding for an ad offer is an auction game (Myerson 1981) of incomplete information. However, the online advertising industry largely ignores this fact and treats RTB as a solved problem: all existing DSPs model auction games as supervised learning (SL) problems, predicting the click-through rate (CTR) (McMahan et al. 2013) or conversion rate (CVR) (Yuan, Wang, and Zhao 2013) of ads and using the effective cost per mille (ECPM) as the bid (Chen et al. 2011).
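The SL-based bidding described above can be sketched as follows, assuming a logistic-regression CTR model and an advertiser who pays either per click (CPC) or per conversion (CPA). The feature names, weights, bias, and prices are hypothetical, and the sketch is not the specific method of any cited work; it only shows how a predicted rate becomes an ECPM bid.

```python
import math
from typing import Dict, Optional


def predict_ctr(features: Dict[str, float], weights: Dict[str, float], bias: float) -> float:
    """Logistic-regression CTR estimate: sigmoid(bias + sum_i w_i * x_i)."""
    z = bias + sum(weights.get(name, 0.0) * value for name, value in features.items())
    # Numerically stable sigmoid: avoid exp() overflow for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def ecpm_bid(
    p_ctr: float,
    cost_per_click: Optional[float] = None,
    p_cvr: Optional[float] = None,
    cost_per_action: Optional[float] = None,
) -> float:
    """Expected advertiser value of 1,000 impressions, submitted as the bid.

    CPC campaigns: ECPM = pCTR * CPC * 1000
    CPA campaigns: ECPM = pCTR * pCVR * CPA * 1000
    """
    if cost_per_click is not None:
        return p_ctr * cost_per_click * 1000.0
    if p_cvr is not None and cost_per_action is not None:
        return p_ctr * p_cvr * cost_per_action * 1000.0
    raise ValueError("need cost_per_click, or both p_cvr and cost_per_action")


if __name__ == "__main__":
    # Hypothetical binary features and learned weights for one ad request.
    ctr = predict_ctr(
        features={"user_viewed_category": 1.0, "evening_hour": 1.0},
        weights={"user_viewed_category": 0.8, "evening_hour": 0.3},
        bias=-4.0,
    )
    print(f"pCTR={ctr:.4f}, ECPM bid={ecpm_bid(ctr, cost_per_click=0.5):.2f}")
```

In this formulation the bid depends only on the ad's own predicted value and never on how competing DSPs are likely to bid, which is the auction-game structure that, as the paragraph above argues, the SL approach ignores.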