AutoRemover: Automatic Object Removal for Autonomous Driving Videos

Rong Zhang,1∗ Wei Li,2,4† Peng Wang,2 Chenye Guan,2 Jin Fang,2 Yuhang Song,3 Jinhui Yu,1 Baoquan Chen,4 Weiwei Xu,1† Ruigang Yang2

1 Zhejiang University, 2 Baidu Research, Baidu Inc., 3 University of Southern California, 4 Peking University

cadzhangrong@zju.edu.cn, liweimcc@gmail.com, jerryking234@gmail.com, {guanchenye, fangjin}@baidu.com,

yuhangso@usc.edu, jhyu@cad.zju.edu.cn, baoquan@pku.edu.cn, xww@cad.zju.edu.cn, ryang2@outlook.com

Abstract

Motivated by the need for photo-realistic simulation in autonomous driving, we present AutoRemover, a video inpainting algorithm designed specifically for generating street-view videos without any moving objects. Our setup poses two challenges. The first is shadows, which are usually unlabeled but tightly coupled with the moving objects. The second is the large ego-motion in the videos. To deal with shadows, we build an autonomous driving shadow dataset and design a deep neural network to detect shadows automatically. To deal with large ego-motion, we take advantage of the multi-source data, in particular the 3D data, available in autonomous driving. More specifically, the geometric relationship between frames is incorporated into an inpainting deep neural network to produce high-quality, structurally consistent video output. Experiments show that our method outperforms other state-of-the-art (SOTA) object removal algorithms, reducing the RMSE by over 19%.

1 Introduction

With the explosive growth of AI robotic techniques, especially autonomous driving (AD) vehicles, countless images and videos as well as other sensor data are captured daily. To fuel the learning-based AI algorithms (such as perception, scene parsing, and planning) in those intelligent systems, a large amount of annotated data is still in great demand. Thus, building virtual simulators that save the massive effort of labeling and processing the captured data is essential to make the best use of the data for various AD applications (Alhaija et al. 2018; Seif and Hu 2016). One basic procedure in those applications is removing the unwanted or hard-to-annotate parts of the raw data, a.k.a. object removal or image/video inpainting. As shown in Figure 1, with the simulation system developed in (Li et al. 2019), the background image obtained by removing the foreground vehicles can be used to synthesize new traffic images with annotations or to reconstruct 3D road models with clean textures, which is one of the desirable ways to perform data augmentation.

The image inpainting problem, which also forms the basis of video inpainting, has been widely investigated.

∗ The authors from Zhejiang University are affiliated with the State Key Lab of CAD&CG.

† Corresponding authors.

Copyright © 2020, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

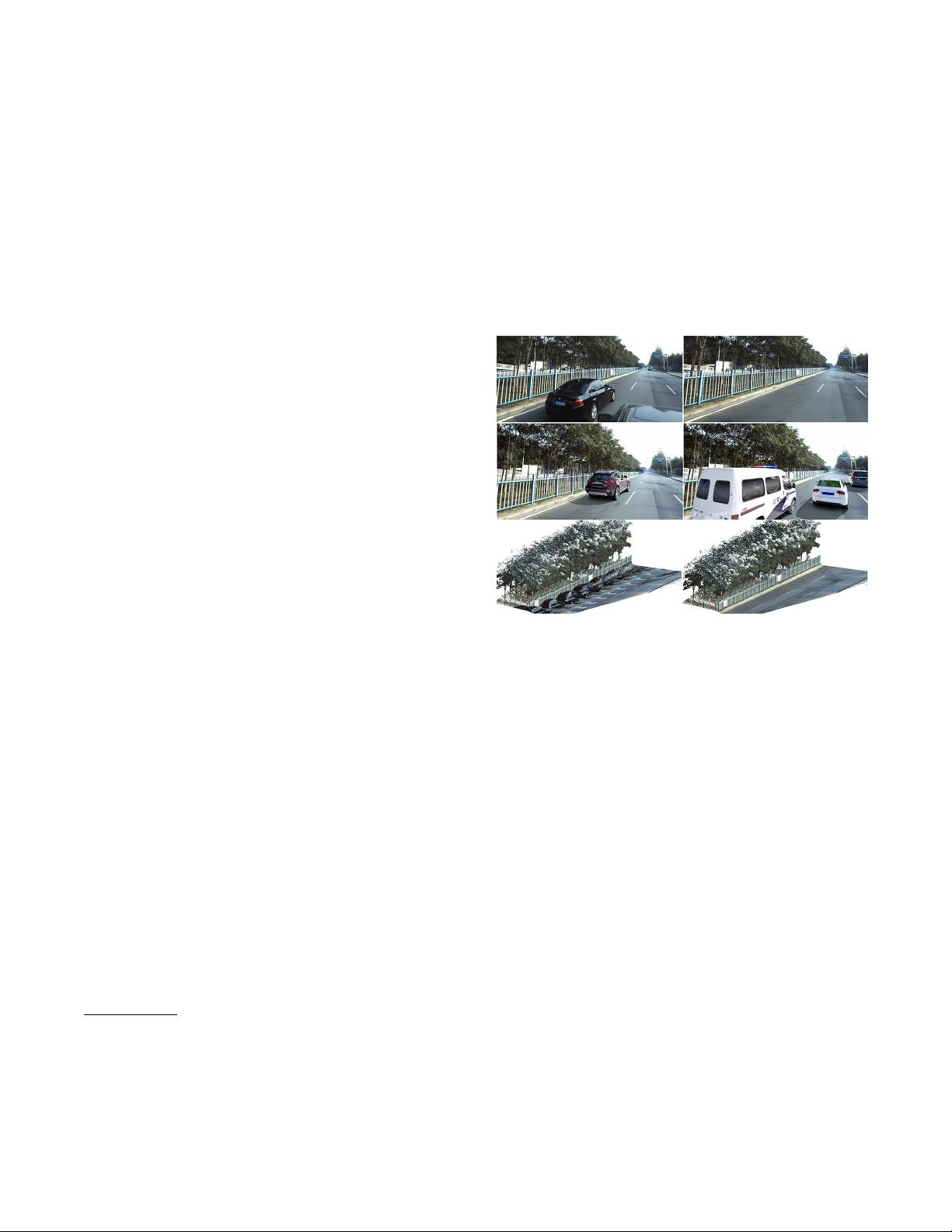

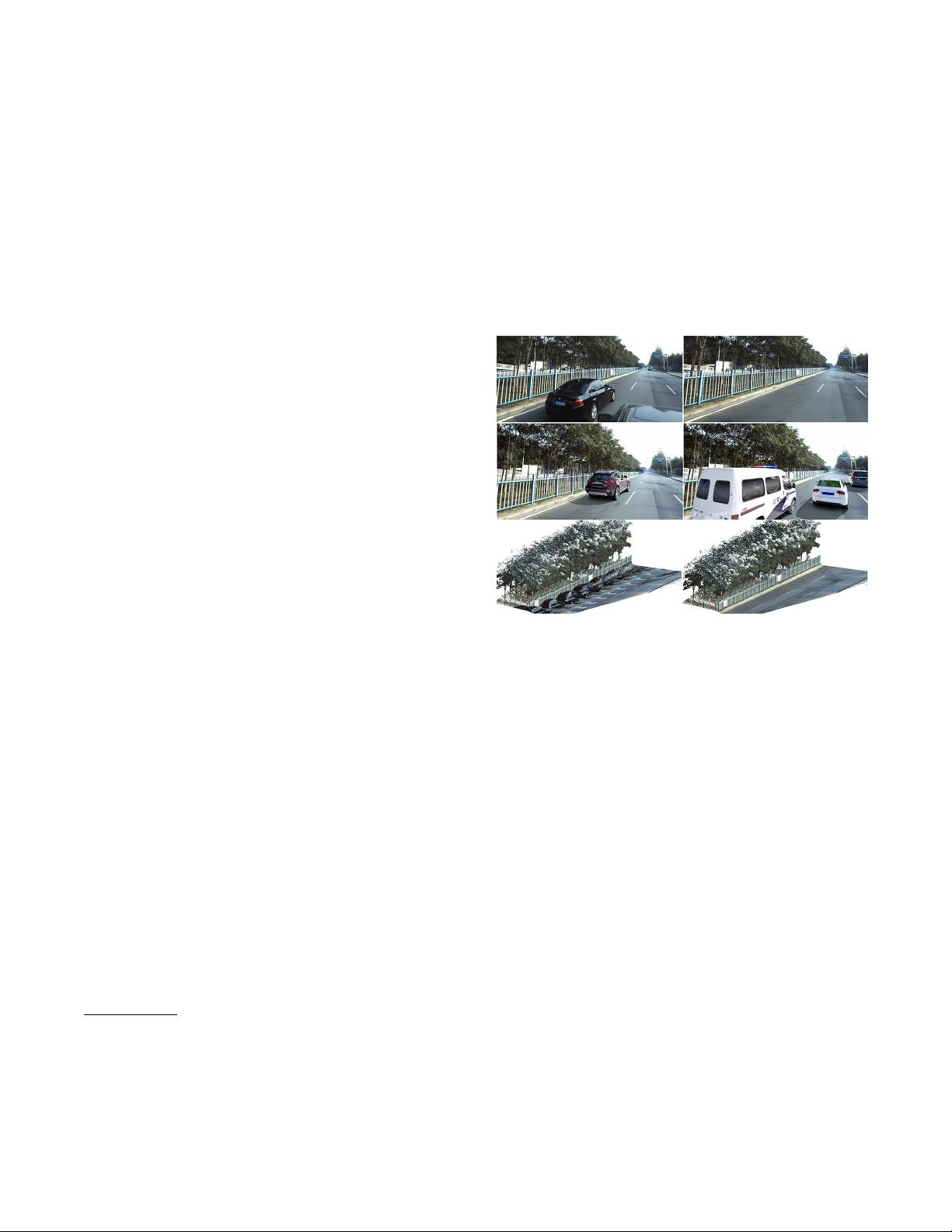

Figure 1: The 1st row shows the source image and the inpainted one from a video. The 2nd row shows the usage of inpainting in data augmentation and simulation. With the inpainted background, the vehicle can be moved or inserted to synthesize new traffic images. The 3rd row shows that inpainted videos are used to yield a 3D model with clean texture.

Technically, image inpainting algorithms either utilize similar patches in the current image to fill the hole with optimization-based methods or directly hallucinate content from training images with learning-based methods. Recently, CNNs, especially GANs, have hugely advanced image inpainting techniques (Pathak et al. 2016; Iizuka, Simo-Serra, and Ishikawa 2017; Yu et al. 2018a), yielding visually plausible and impressive results. However, directly applying image inpainting techniques to videos suffers from jittering and inconsistency. Thus, different kinds of temporal constraints have been introduced in recent video inpainting approaches (Huang et al. 2016; Xu et al. 2019), whose core is jointly estimating optical flow and inpainting color.

Even though several video inpainting systems have been proposed very recently, their target scenarios usually involve only small camera ego-motion behind the moving foreground objects, where the flow between frames is easy to estimate. Unfortunately, the videos captured by AD vehicles have large camera ego-motion (Fig-

arXiv:1911.12588v1 [cs.CV] 28 Nov 2019