MaskGEC: Improving Neural Grammatical Error Correction via Dynamic Masking

Zewei Zhao, Houfeng Wang

MOE Key Lab of Computational Linguistics, Peking University, Beijing, 100871, China

zhaozewei@pku.edu.cn, wanghf@pku.edu.cn

Abstract

Grammatical error correction (GEC) is a promising natural language processing (NLP) application whose goal is to rewrite sentences containing grammatical errors into correct ones. Neural machine translation (NMT) approaches have been widely applied to this translation-like task. However, such methods need a fairly large parallel corpus of error-annotated sentence pairs, which is hard to obtain, especially for Chinese grammatical error correction. In this paper, we propose a simple yet effective method to improve NMT-based GEC models by dynamic masking. By adding random masks to the original source sentences dynamically during training, more diverse instances of error-corrected sentence pairs are generated, which enhances the generalization ability of the GEC model without additional data. Experiments on NLPCC 2018 Task 2 show that our MaskGEC model improves the performance of neural GEC models. Moreover, our single model for Chinese GEC outperforms the current state-of-the-art ensemble system in NLPCC 2018 Task 2 without any extra knowledge.

Introduction

Grammatical error correction (GEC) has attracted much interest as a natural language processing (NLP) application in recent years. The task is defined as follows: given a sentence that may contain grammatical errors, one is required to detect and correct the errors present in the sentence and return its error-free natural language representation. If incorrect sentences are regarded as the source language and corrected sentences as the target language, the GEC task can be treated as a machine translation (MT) task. For example, English GEC can be cast as translation from “bad” English to “good” English.

With the rapid development of deep learning, neural ma-

chine translation (NMT) approaches based on sequence-

to-sequence (seq2seq) models have become mainstream

in the field of machine translation. Recently, quite a few

works (Yuan and Briscoe 2016; Ji et al. 2017; Chollampatt

and Ng 2018) have applied the neural seq2seq models to

the grammatical error correction tasks and have made some

Copyright

c

2020, Association for the Advancement of Artificial

Intelligence (www.aaai.org). All rights reserved.

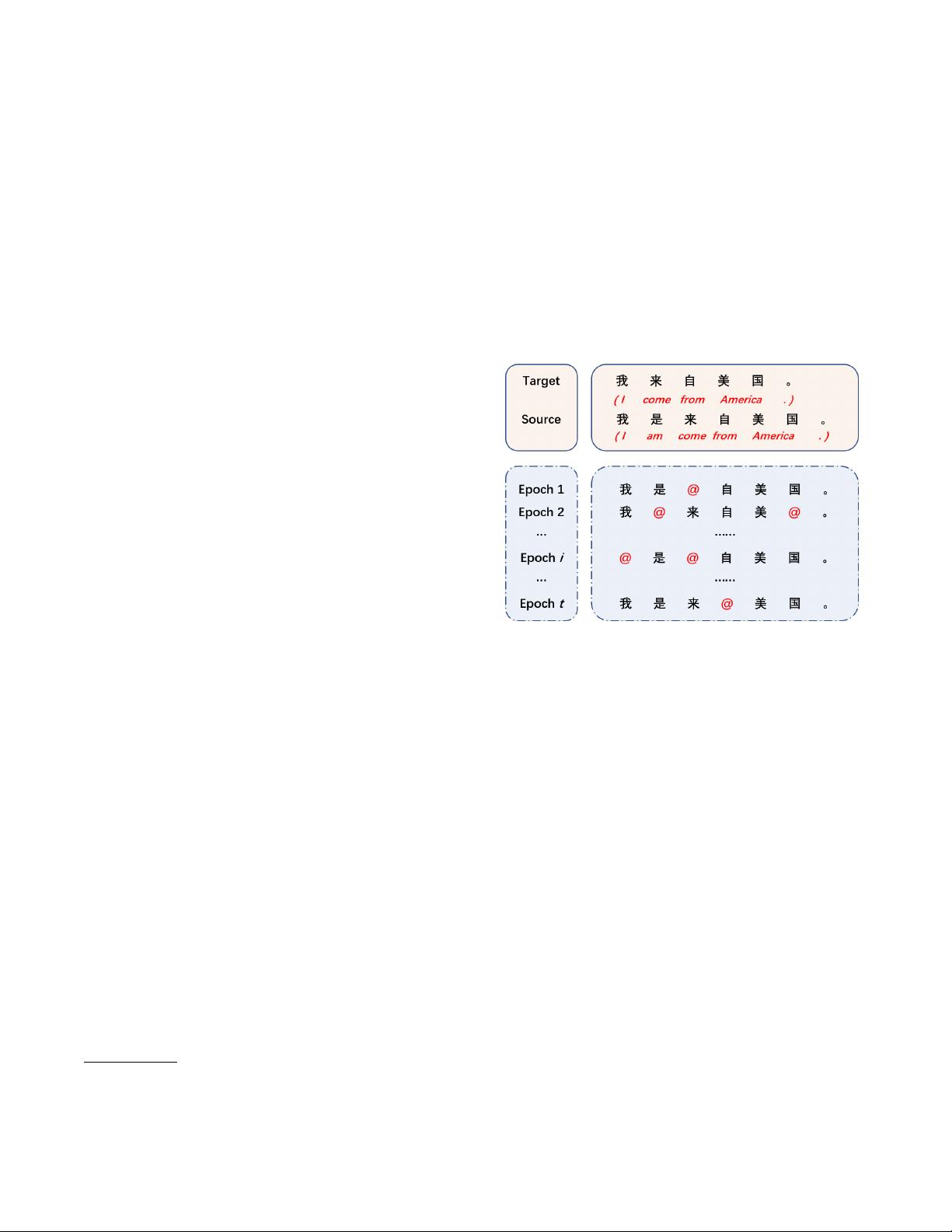

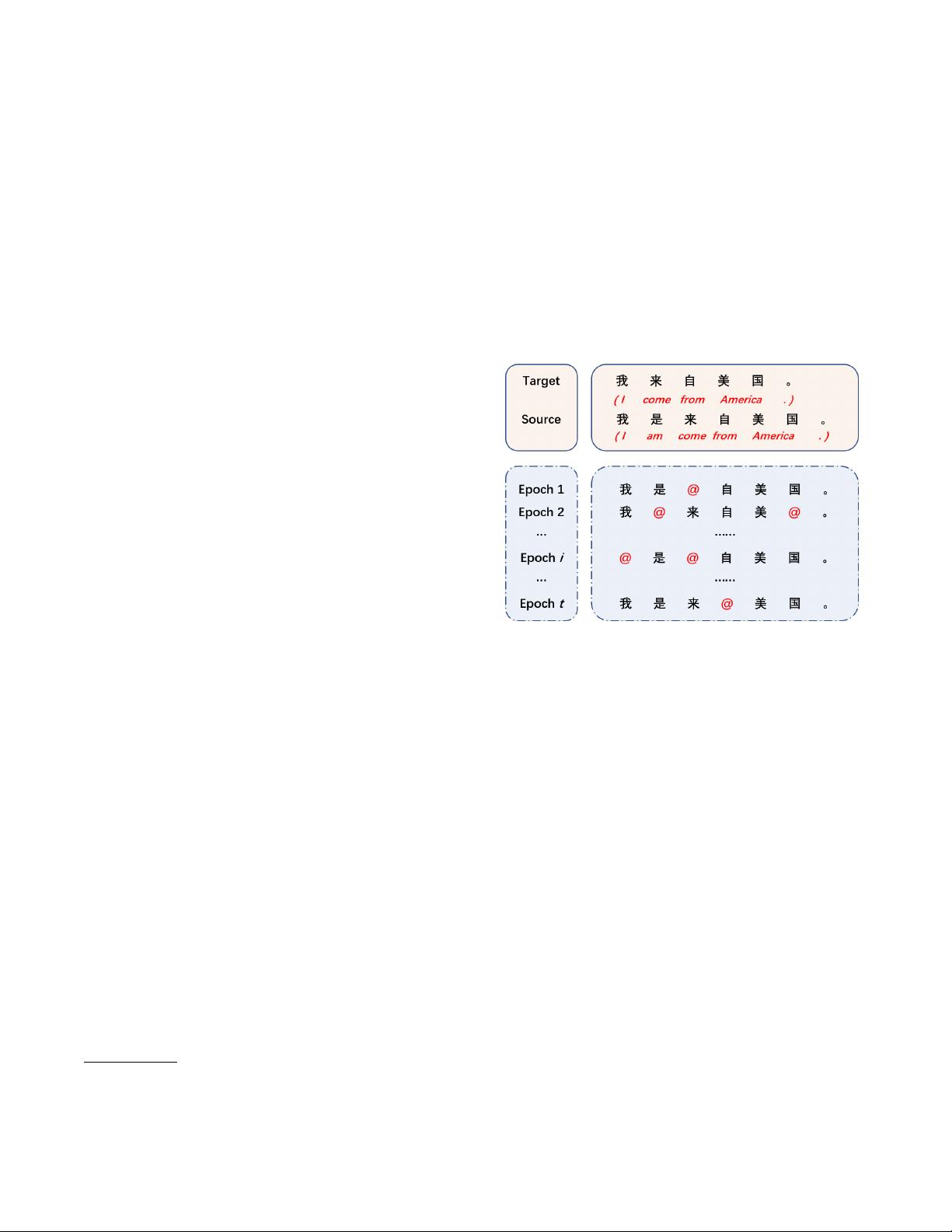

Figure 1: An example of error-corrected sentence pairs and

the generated noisy sentence pairs during the whole training

time. The placeholder ’@’ denotes any possible word that is

chosen as a replacement.

progress. However, these NMT-based models for GEC face

a problem. Due to the limited size of the parallel corpus of

error-corrected sentence pairs, the seq2seq models for GEC,

which usually contain millions of parameters, are difficult to

be trained sufficiently. Therefore, even if a test case sentence

is just slightly different from a training instance, the models

may fail to correct it.

To overcome the drawback of neural grammatical error correction models described above, we propose a simple yet effective dynamic masking method to enhance the performance of neural GEC models.

In the training procedure, we add various kinds of random noise to the inputs via masking to generate noisy source sentences dynamically, while keeping the target sentences unchanged. By pairing the new source sentences with the corresponding target sentences, we obtain a larger and more varied set of error-corrected sentence pairs, as Figure 1 shows. For convenience, we call the error-corrected sentence pairs newly constructed through random noising noisy sentence pairs. Rather than use the aforementioned noisy sen-
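The dynamic masking idea described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names `mask_source` and `noisy_pairs`, the `mask_prob` parameter and its default value, the `<mask>` placeholder, and the two noise types used here (a fixed placeholder or a random vocabulary token) are all assumptions introduced for this example.

```python
import random

MASK_TOKEN = "<mask>"  # hypothetical placeholder symbol, not taken from the paper


def mask_source(tokens, vocab, mask_prob=0.3, rng=random):
    """Return a noisy copy of `tokens`.

    Each token is independently replaced with probability `mask_prob`
    (an assumed hyperparameter). The input list is not modified.
    """
    noisy = []
    for tok in tokens:
        if rng.random() < mask_prob:
            # Two illustrative noise types: a fixed placeholder
            # or a token drawn uniformly from the vocabulary.
            noisy.append(rng.choice([MASK_TOKEN, rng.choice(vocab)]))
        else:
            noisy.append(tok)
    return noisy


def noisy_pairs(pairs, vocab, mask_prob=0.3, rng=random):
    """Yield (noisy_source, target) pairs; targets are left unchanged."""
    for source, target in pairs:
        yield mask_source(source, vocab, mask_prob, rng), target


if __name__ == "__main__":
    # Toy character-level pair: an erroneous source and its correction.
    pairs = [(list("I has a apple"), list("I have an apple"))]
    vocab = sorted({ch for src, tgt in pairs for ch in src + tgt})

    # Masks are re-sampled every epoch, so the model sees a different
    # noisy source for the same target each time.
    for epoch in range(3):
        for src, tgt in noisy_pairs(pairs, vocab):
            print(epoch, "".join(src), "->", "".join(tgt))
```

Because the noise is drawn anew on every pass over the data rather than fixed once in preprocessing, the number of distinct source variants grows with training time while the corpus on disk stays the same size.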
