ASPLOS ’21, April 19–23, 2021, Virtual, USA Yu Gan, Mingyu Liang, Sundar Dev, David Lo, and Christina Delimitrou

A

B

- : Request send queueing

- : Request network latency

- : Request recv queueing

- : RPC server-side latency

- : Response send queueing

- : Response network latency

- : Response recv queueing

- : RPC client-side latency

RPC 0

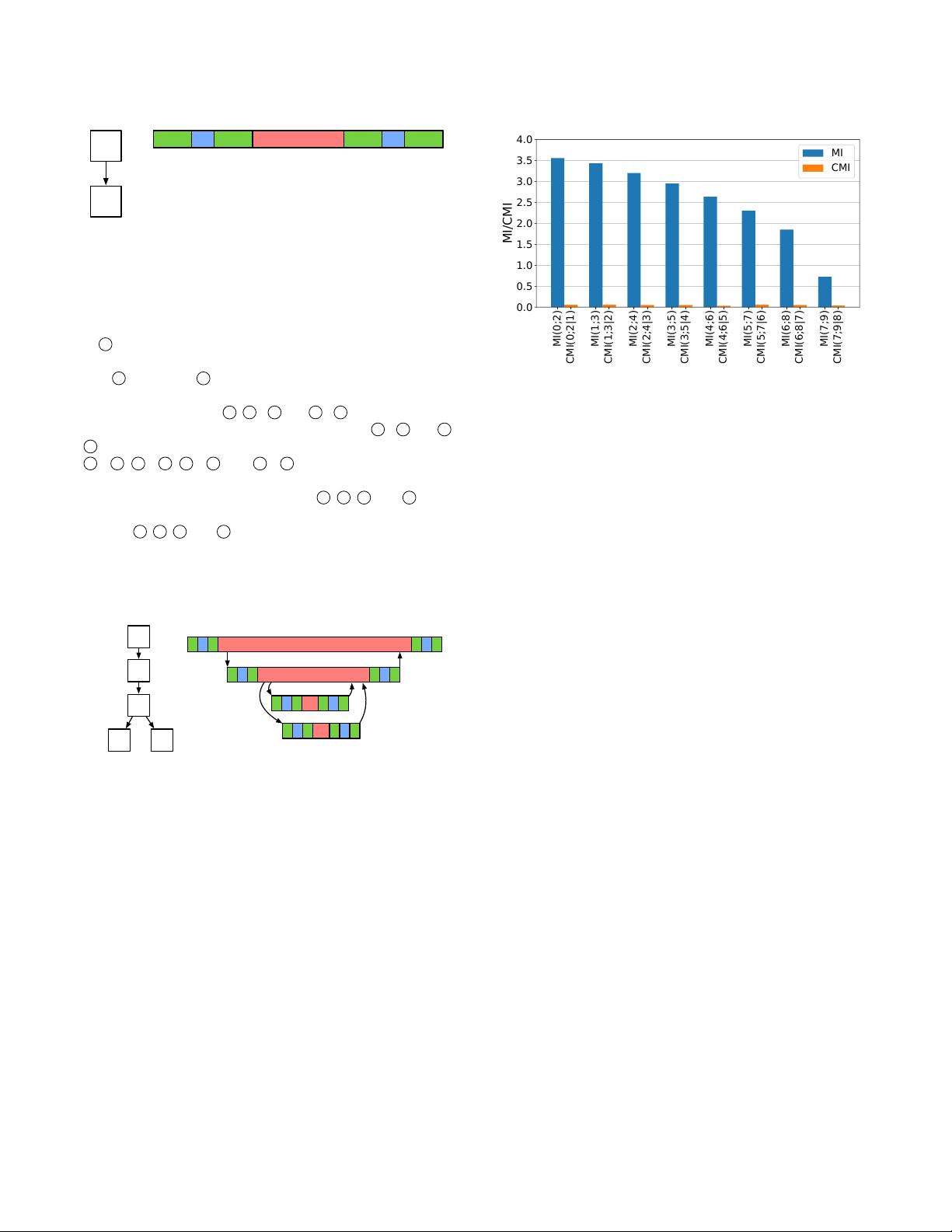

Figure 2: RPC latency breakdown. Red bars: RPC server-side

latency, blue bars: network latency, green bars: application

queueing.

NIC. After being processed by the server’s network protocol stack

at

3

, the request is queued in the RPC channel’s receive queue,

waiting to be processed via the

rpc0_handler

, which starts at

time

4

and ends at

5

. Finally, the RPC response follows the same

process from server to client, until it is received by the client’s

application layer at time

8

.

1

-

8

and

4

-

5

are the application-

level client- and server-side latencies, respectively.

2

-

3

and

6

-

7 are the latencies in the network protocol, switches, and wiring.

1

-

2

,

3

-

4

,

5

-

6

, and

7

-

8

is the queueing time in the

application layer of the client and server, respectively.

Timestamps for the user-level events

1

,

4

,

5

, and

8

can be

obtained with distributed tracing frameworks, such as Jaeger. Times-

tamping

2

,

3

,

6

, and

7

, would require probing the Linux kernel

with high-overhead tools, like SystemTap [

45

]. Instead, we approxi-

mate the request/response network delay by measuring the latency

of heartbeat signals between client and server, when queueing in

the application is zero.

B

C

D E

A

RPC 0

RPC 1

RPC 2

RPC 3

RPC 0

RPC 1

RPC 2

RPC 3

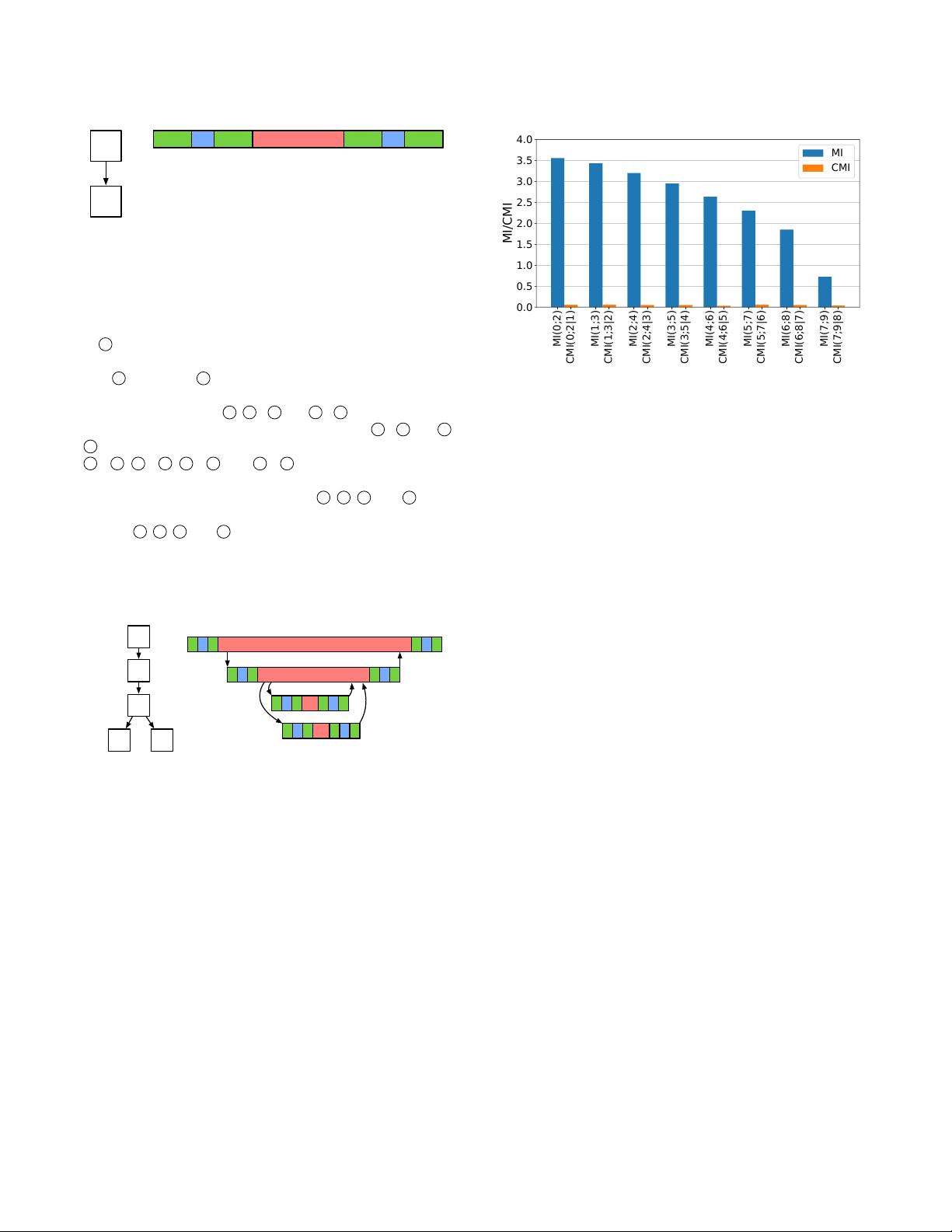

Figure 3: Dep endency graph and traces of nested RPCs.

3.2.2 Markov Property of RPC Latency Propagation.

Multiple RPCs form a tree of nested traces in a distributed mon-

itoring system. Fig. 3 shows an example RPC dependency graph

with ve services, four RPCs, and its corresponding latency traces.

When the user request arrives at

A

, it sends

RPC0

to service

B

.

B

further forwards the request to

C

via

RPC1

, and

C

sends it to the

backend services

D

and

E

via

RPC2

and

RPC3

in parallel. After pro-

cessing the responses from

D

and

E

,

C

replies to

B

, and

B

replies to

A, as RPC1 and RPC0 return.

The server-side latency of any non-leaf RPC is determined by the

processing time of the RPC itself and the waiting time (i.e., client-

side latency) of its child RPCs. This latency propagates through

the RPC graph to the frontend. Since the latency of a child RPC

cannot propagate to its parent without impacting its own latency,

the latency propagation follows a local Markov property, where

each latency is conditionally independent on its non-descendant

RPCs, given its child RPC latencies [

69

]. For instance, the latency

0.0

0.5

1.0

1.5

2.0

2.5

3.0

3.5

4.0

MI/CMI

MI

CMI

MI(0;2)

CMI(0;2|1)

MI(1;3)

CMI(1;3|2)

MI(2;4)

CMI(2;4|3)

MI(3;5)

CMI(3;5|4)

MI(4;6)

CMI(4;6|5)

MI(5;7)

CMI(5;7|6)

MI(6;8)

CMI(6;8|7)

MI(7;9)

CMI(7;9|8)

Figure 4: Mutual Information (MI) of two distance-of-2

RPCs, and Conditional Mutual Information (CMI) given the

server latency of the middle RPC. CMI of zero means that

the latencies of the two RPCs are conditionally independent,

given the latency of their in-between RPC.

of

RPC0

is conditionally independent of

RPC2

and

RPC3

, given the

latency of RPC1.

In information theory, mutual information measures the reduc-

tion of uncertainty in one random variable given another random

variable. Two random variables are independent or conditionally

independent if their mutual information (MI) or conditional mu-

tual information (CMI) is zero [

53

]. Fig. 4 shows the MI of the

server-side latencies of two RPCs with distance of two, and their

CMI, given the server-side latency of the in-between RPC, in a

10-microservice chain. The MI of each two non-adjacent RPCs is

blocked by the latency of the RPC in the middle, making them

conditionally independent [27].

3.3 Modeling Microservice Dependency Graphs

3.3.1 Causal Bayesian Networks.

A CBN is a directed acyclic graph (DAG), where the nodes are

random variables and the edges indicate their conditional depen-

dencies, from cause to eect [84, 88]. Sage uses three node types:

• Metric nodes (X )

: They contain resource-related metrics of all

services and network channels collected with tools, like Google

Wide Proling [

24

,

34

,

96

]. They are the exogenous variables that

cause latency variances across RPCs, and fall into two groups:

server- and network-related. Server-related metrics (

X

s

), include

CPU utilization, memory bandwidth, context switches, etc., and

impact the server’s processing time. Network-related metrics

(

X

net

), such as the round trip time (RTT), packet loss rate, net-

work bandwidth, etc., aect the delay of RPC channels. The set

of sucient metrics was derived by selecting those features that

improve the model’s accuracy, without overtting to a specic

deployment. Features that may be capturing overlapping informa-

tion are discarded by the network by demoting the corresponding

neuron weights. To keep the shape of the vector for each metric

the same regardless of the replicas per tier, we use a vector of

percentiles [

64

], e.g., [10th%, ..., 90th%, 100th%] computed across

the tier’s replicas.