[Figure 4 diagram labels: Multi-scale Patch Generator; Multi-scale Patch Discriminator; Real/Fake; Effective Patch Size; Training Progression]

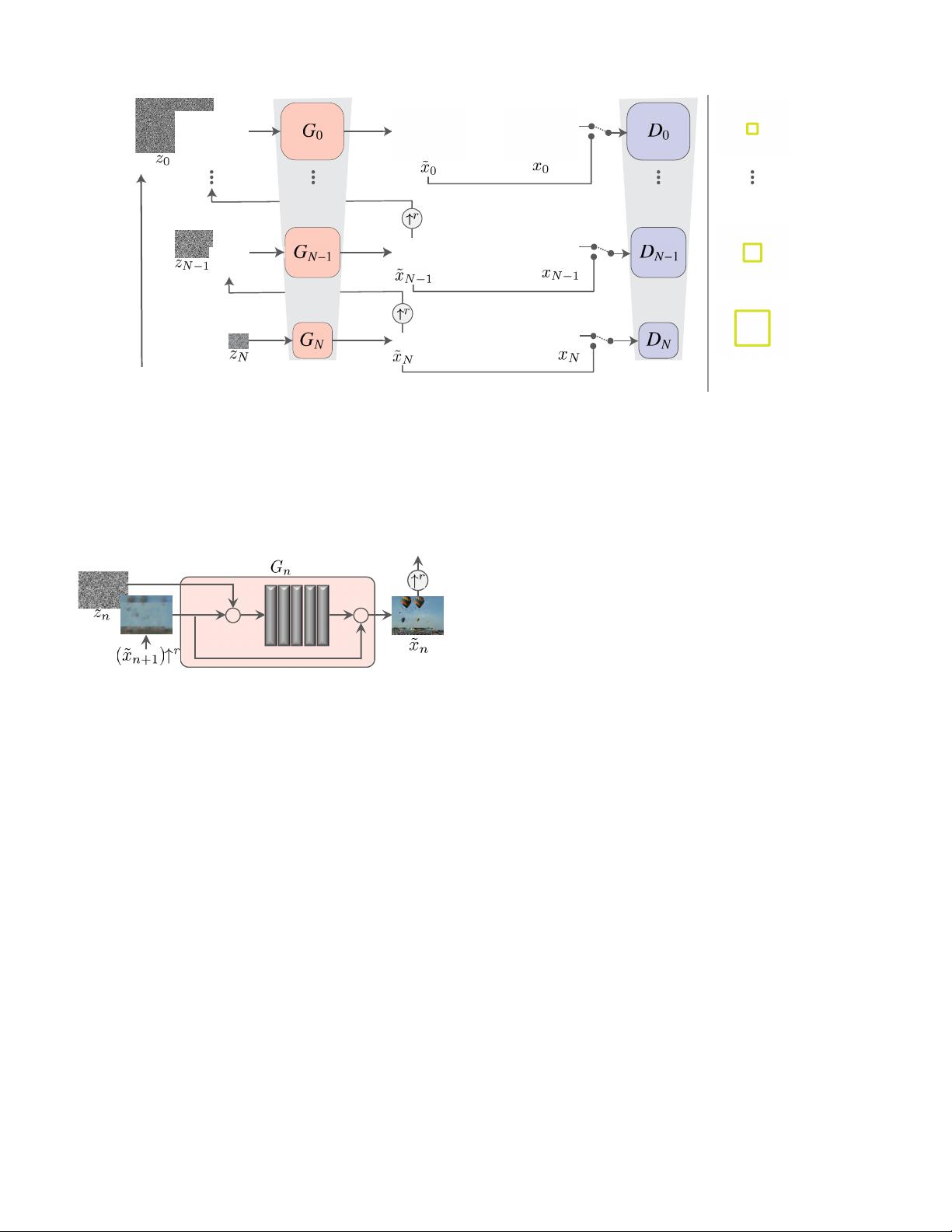

Figure 4: SinGAN's multi-scale pipeline. Our model consists of a pyramid of GANs, where both training and inference are done in a coarse-to-fine fashion. At each scale, $G_n$ learns to generate image samples in which all the overlapping patches cannot be distinguished from the patches in the down-sampled training image, $x_n$, by the discriminator $D_n$; the effective patch size decreases as we go up the pyramid (marked in yellow on the original image for illustration). The input to $G_n$ is a random noise image $z_n$, and the generated image from the previous scale $\tilde{x}_n$, upsampled to the current resolution (except for the coarsest level which is purely generative). The generation process at level $n$ involves all generators $\{G_N, \ldots, G_n\}$ and all noise maps $\{z_N, \ldots, z_n\}$ up to this level. See more details at Sec. 2.
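To make the caption's remark about the effective patch size concrete, the sketch below estimates how many pixels of the original image a fixed receptive field spans at each scale. It is an illustration under stated assumptions, not the authors' code: the 3×3 stride-1 kernels, the scale factor `r = 4/3`, the number of scales and the function names are all hypothetical choices; only the five conv layers come from the text.

```python
def receptive_field(num_layers: int, kernel_size: int) -> int:
    """Receptive field (in pixels) of stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel_size - 1)


def effective_patch_sizes(num_scales: int, r: float,
                          num_layers: int = 5, kernel_size: int = 3):
    """Patch side length in pixels of the original image, per scale n.

    x_n is x downsampled by r**n, so one pixel at scale n spans r**n
    original pixels; a fixed receptive field therefore covers rf * r**n.
    Kernel size 3 is an assumption made for this sketch.
    """
    rf = receptive_field(num_layers, kernel_size)
    return [rf * r ** n for n in range(num_scales + 1)]


# Hypothetical settings: N = 8 scales, r = 4/3.
sizes = effective_patch_sizes(num_scales=8, r=4 / 3)
for n, size in enumerate(sizes):
    print(f"scale n={n}: ~{size:.1f} original pixels per patch side")
```

Reading the list from the last entry (coarsest scale $N$) to the first (finest scale 0) shows the effective patch shrinking as generation moves up the pyramid, even though the receptive field in pixels is the same at every scale.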

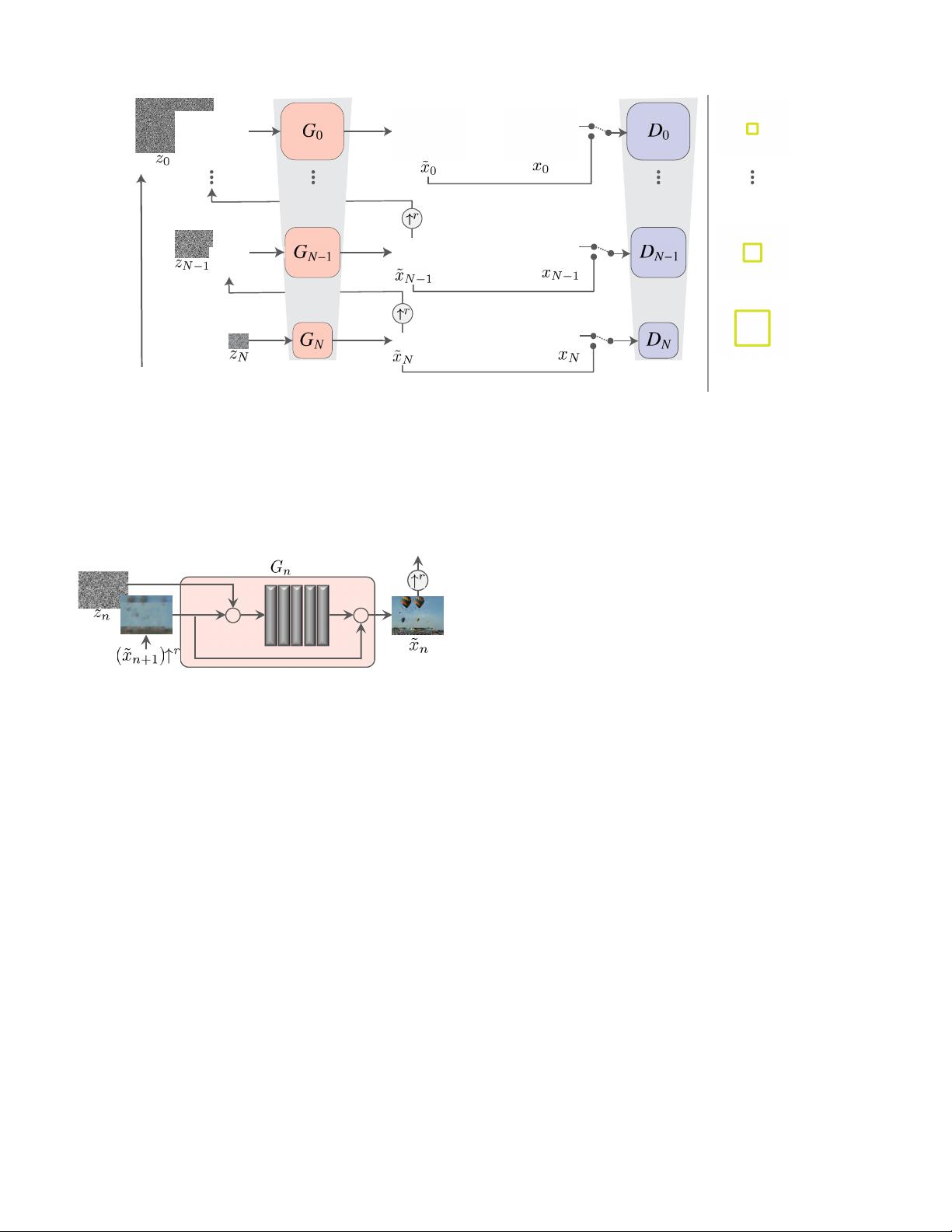

Figure 5: Single scale generation. At each scale $n$, the image from the previous scale, $\tilde{x}_{n+1}$, is upsampled and added to the input noise map, $z_n$. The result is fed into 5 conv layers, whose output is a residual image that is added back to $(\tilde{x}_{n+1})\uparrow^r$. This is the output $\tilde{x}_n$ of $G_n$.
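The data flow in Fig. 5 can be sketched in plain Python as follows. This is a minimal illustration, not the authors' implementation: images are 2-D lists, nearest-neighbour upsampling is an assumed stand-in for whatever interpolation is actually used, and `conv_stack` is a hypothetical callable that takes the place of the 5 conv layers.

```python
import random


def upsample_nearest(img, out_h, out_w):
    """Nearest-neighbour resize of a 2-D list-of-lists image (assumed method)."""
    in_h, in_w = len(img), len(img[0])
    return [[img[i * in_h // out_h][j * in_w // out_w] for j in range(out_w)]
            for i in range(out_h)]


def gaussian_noise(h, w, sigma=1.0, rng=random):
    """Spatial white Gaussian noise map z_n."""
    return [[rng.gauss(0.0, sigma) for _ in range(w)] for _ in range(h)]


def add(a, b):
    """Pixel-wise sum of two equally sized images."""
    return [[p + q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def single_scale_step(prev_img, z_n, conv_stack):
    """One pass through G_n: upsample, add noise, predict residual, add back.

    conv_stack is a hypothetical stand-in for the 5 conv layers.
    """
    h, w = len(z_n), len(z_n[0])
    up = upsample_nearest(prev_img, h, w)   # upsampled previous-scale image
    residual = conv_stack(add(up, z_n))     # layers see image + noise
    return add(up, residual)                # output of G_n


# Toy usage: a "conv stack" that merely scales its input by 0.1.
damp = lambda img: [[0.1 * p for p in row] for row in img]
coarse = [[0.0, 1.0], [1.0, 0.0]]
z = gaussian_noise(4, 4, sigma=0.1, rng=random.Random(0))
fine = single_scale_step(coarse, z, damp)
```

Because the layers only predict a residual, a stack that outputs zeros returns the upsampled previous-scale image unchanged, which makes the role of each scale as a refinement step easy to see.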

sider a different source of training data: all the overlapping patches at multiple scales of a single natural image. We show that a powerful generative model can be learned from this data, and can be used in a number of image manipulation tasks.

2. Method

Our goal is to learn an unconditional generative model that captures the internal statistics of a single training image $x$. This task is conceptually similar to the conventional GAN setting, except that here the training samples are patches of a single image, rather than whole image samples from a database.

We opt to go beyond texture generation and to deal with more general natural images. This requires capturing the statistics of complex image structures at many different scales. For example, we want to capture global properties such as the arrangement and shape of large objects in the image (e.g. sky at the top, ground at the bottom), as well as fine details and texture information. To achieve that, our generative framework, illustrated in Fig. 4, consists of a hierarchy of patch-GANs (Markovian discriminator) [31, 26], where each is responsible for capturing the patch distribution at a different scale of $x$. The GANs have small receptive fields and limited capacity, preventing them from memorizing the single image. While similar multi-scale architectures have been explored in conventional GAN settings (e.g. [28, 52, 29, 13, 24]), we are the first to explore them for internal learning from a single image.

2.1. Multi-scale architecture

Our model consists of a pyramid of generators, $\{G_0, \ldots, G_N\}$, trained against an image pyramid of $x$: $\{x_0, \ldots, x_N\}$, where $x_n$ is a downsampled version of $x$ by a factor $r^n$, for some $r > 1$. Each generator $G_n$ is responsible for producing realistic image samples w.r.t. the patch distribution in the corresponding image $x_n$. This is achieved through adversarial training, where $G_n$ learns to fool an associated discriminator $D_n$, which attempts to distinguish patches in the generated samples from patches in $x_n$.
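As a small worked example of the image pyramid defined above, the following sketch lists the spatial size of each $x_n$ for a given scale factor. The rounding rule, the example image size and the function name are assumptions made for illustration; the text only specifies that $x_n$ is $x$ downsampled by $r^n$ with $r > 1$.

```python
def pyramid_shapes(height, width, r, num_scales):
    """Shapes of x_0..x_N, where x_n is x downsampled by a factor r**n.

    Rounding to the nearest pixel (and clamping to at least 1) is an
    assumption of this sketch.
    """
    if r <= 1:
        raise ValueError("scale factor r must be > 1")
    shapes = []
    for n in range(num_scales + 1):
        scale = r ** n
        shapes.append((max(1, round(height / scale)),
                       max(1, round(width / scale))))
    return shapes


# Hypothetical 256x192 image, r = 2, N = 3.
shapes = pyramid_shapes(height=256, width=192, r=2, num_scales=3)
print(shapes)  # [(256, 192), (128, 96), (64, 48), (32, 24)]
```

Iterating over `reversed(shapes)` visits the scales in generation order, from the coarsest $x_N$ to the finest $x_0$.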

The generation of an image sample starts at the coarsest scale and sequentially passes through all generators up to the finest scale, with noise injected at every scale. All the generators and discriminators have the same receptive field and thus capture structures of decreasing size as we go up the generation process. At the coarsest scale, the generation is purely generative, i.e. $G_N$ maps spatial white Gaussian noise $z_N$ to an image sample $\tilde{x}_N$,
