where $A$ is a matrix of transition scores such that $A_{i,j}$ represents the score of a transition from tag $i$ to tag $j$. $y_0$ and $y_n$ are the start and end tags of a sentence, which we add to the set of possible tags. $A$ is therefore a square matrix of size $k+2$.
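To make the role of the start and end tags concrete, the following minimal sketch scores a single tag sequence with a $(k+2) \times (k+2)$ transition matrix. It assumes that the sequence score is the sum of the per-token scores $P_{i,y_i}$ and the transition scores between consecutive tags, and that the start and end tags occupy indices $k$ and $k+1$; this indexing convention, the function name `sequence_score`, and the toy numbers are illustrative assumptions, not part of the model description.

```python
def sequence_score(P, A, tags):
    """Score a tag sequence: per-token scores plus transition scores.

    P: n x k list of per-token tag scores.
    A: (k+2) x (k+2) transition scores; index k is the start tag and
       index k+1 is the end tag (a convention assumed for this sketch).
    tags: list of n tag indices, each in range(k).
    """
    k = len(P[0])
    start, end = k, k + 1
    # Pad the sequence with the start and end tags.
    path = [start] + list(tags) + [end]
    transition = sum(A[a][b] for a, b in zip(path, path[1:]))
    emission = sum(P[i][t] for i, t in enumerate(tags))
    return transition + emission


# Toy example: k = 2 tags, a 3-word sentence.
P = [[1.0, 0.0], [0.5, 2.0], [0.0, 1.0]]
A = [
    [0.1, 0.2, 0.0, 0.3],  # transitions out of tag 0
    [0.4, 0.5, 0.0, 0.6],  # transitions out of tag 1
    [0.7, 0.8, 0.0, 0.0],  # transitions out of the start tag
    [0.0, 0.0, 0.0, 0.0],  # transitions out of the end tag (never used)
]
score = sequence_score(P, A, [0, 1, 1])
```

The row for the end tag and the column for the start tag are never read, which is why they can be left at zero in this toy matrix.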

A softmax over all possible tag sequences yields a probability for the sequence $y$:

$$p(y|X) = \frac{e^{s(X, y)}}{\sum_{\tilde{y} \in Y_X} e^{s(X, \tilde{y})}}.$$
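As a small illustration of this normalization, the sketch below computes the log of the softmax probability from a list of sequence scores, using the standard max-shift trick to avoid overflow. The function names and the candidate scores are hypothetical; in practice the list of all candidate sequences is exponentially long, so explicit enumeration is only feasible for toy inputs.

```python
import math


def logadd(scores):
    """Numerically stable log(sum(exp(s) for s in scores))."""
    scores = list(scores)
    m = max(scores)
    return m + math.log(sum(math.exp(s - m) for s in scores))


def sequence_log_prob(score, all_scores):
    """log p(y|X) = s(X, y) - log of the summed exponentiated scores."""
    return score - logadd(all_scores)


# Hypothetical scores s(X, y~) for every candidate tag sequence of one
# sentence; the first entry is taken to be the sequence of interest.
candidate_scores = [6.0, 4.5, 3.0, 5.0]
log_p = sequence_log_prob(candidate_scores[0], candidate_scores)
p = math.exp(log_p)
```

Working in log space means that very large scores, which would overflow a direct call to `math.exp`, are handled without special cases.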

During training, we maximize the log-probability of the correct tag sequence:

$$\log(p(y|X)) = s(X, y) - \log\left(\sum_{\tilde{y} \in Y_X} e^{s(X, \tilde{y})}\right) = s(X, y) - \operatorname*{logadd}_{\tilde{y} \in Y_X} s(X, \tilde{y}), \qquad (1)$$

where $Y_X$ represents all possible tag sequences (even those that do not satisfy the IOB format) for a sentence $X$. From the formulation above, it is evident that we encourage our network to produce a valid sequence of output labels. While decoding, we predict the output sequence that obtains the maximum score given by:

$$y^* = \operatorname*{argmax}_{\tilde{y} \in Y_X} s(X, \tilde{y}). \qquad (2)$$

Since we are only modeling bigram interactions between outputs, both the summation in Eq. 1 and the maximum a posteriori sequence $y^*$ in Eq. 2 can be computed using dynamic programming.

2.3 Parameterization and Training

The scores associated with each tagging decision

for each token (i.e., the P

i,y

’s) are defined to be

the dot product between the embedding of a word-

in-context computed with a bidirectional LSTM—

exactly the same as the POS tagging model of Ling

et al. (2015b) and these are combined with bigram

compatibility scores (i.e., the A

y,y

0

’s). This archi-

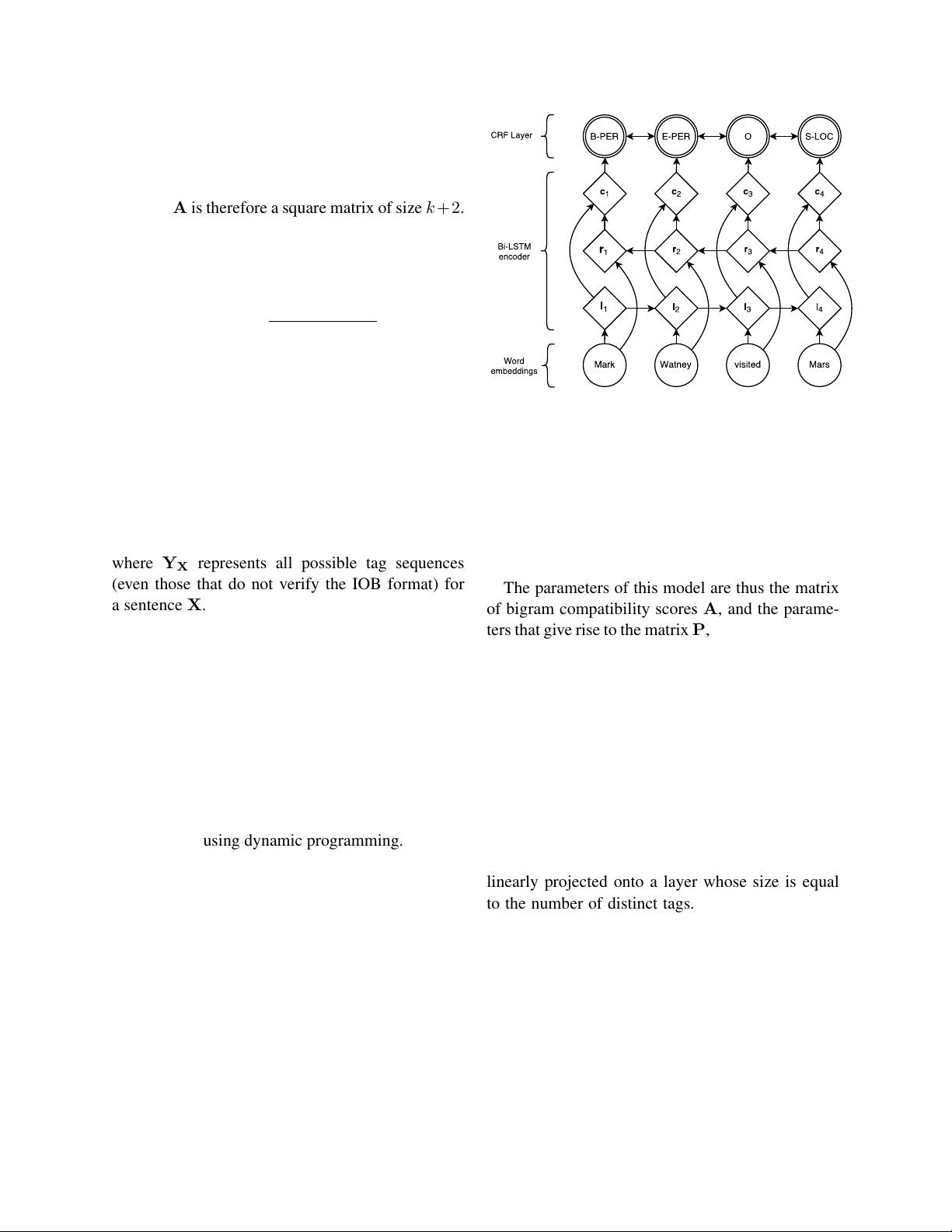

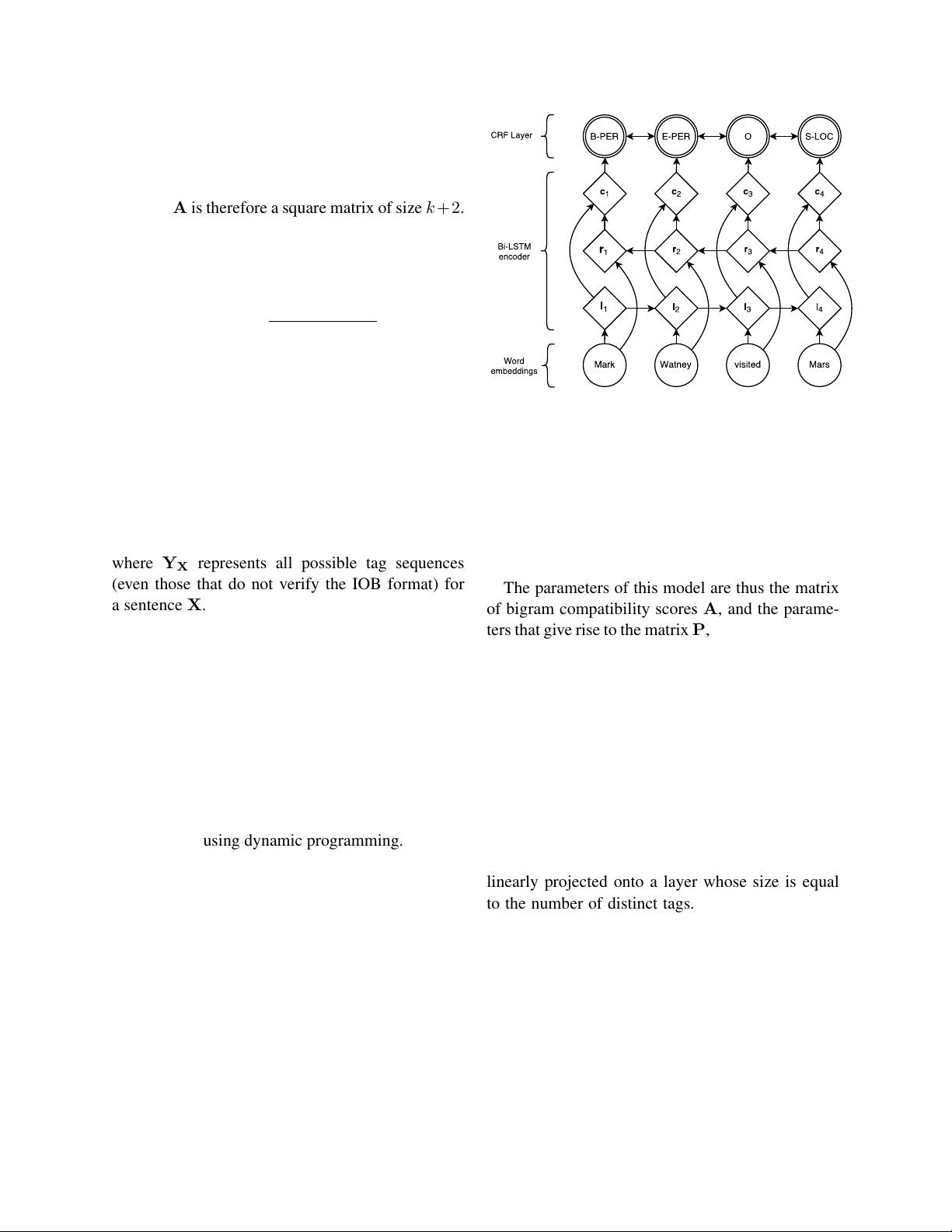

tecture is shown in figure 1. Circles represent ob-

served variables, diamonds are deterministic func-

tions of their parents, and double circles are random

variables.

Figure 1: Main architecture of the network. Word embeddings are given to a bidirectional LSTM. $l_i$ represents the word $i$ and its left context, $r_i$ represents the word $i$ and its right context. Concatenating these two vectors yields a representation of the word $i$ in its context, $c_i$.

The parameters of this model are thus the matrix of bigram compatibility scores $A$, and the parameters that give rise to the matrix $P$, namely the parameters of the bidirectional LSTM, the linear feature weights, and the word embeddings. As in part 2.2, let $x_i$ denote the sequence of word embeddings for every word in a sentence, and $y_i$ be their associated tags. We return to a discussion of how the embeddings $x_i$ are modeled in Section 4. The sequence of word embeddings is given as input to a bidirectional LSTM, which returns a representation of the left and right context for each word as explained in 2.1.

These representations are concatenated ($c_i$) and linearly projected onto a layer whose size is equal to the number of distinct tags. Instead of using the softmax output from this layer, we use a CRF as previously described to take into account neighboring tags, yielding the final predictions for every word $y_i$. Additionally, we observed that adding a hidden layer between $c_i$ and the CRF layer marginally improved our results. All results reported with this model incorporate this extra layer. The parameters are trained to maximize Eq. 1 of observed sequences of NER tags in an annotated corpus, given the observed words.
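The path from the context vectors to the per-token tag scores can be sketched as follows. This is a minimal plain-Python illustration under several assumptions that the text above does not fix: a tanh nonlinearity for the extra hidden layer, no bias terms, and random toy values standing in for the LSTM outputs and trained weights. The names `matvec`, `emission_scores`, `W_hidden`, and `W_out` are hypothetical.

```python
import math
import random


def matvec(W, v):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def emission_scores(l, r, W_hidden, W_out):
    """Build the n x k score matrix P from left/right context vectors.

    l, r: lists of n vectors (left and right context of each word).
    W_hidden: hidden x (2*d) weights of the extra hidden layer.
    W_out: k x hidden weights of the projection onto the k tags.
    """
    P = []
    for l_i, r_i in zip(l, r):
        c_i = l_i + r_i  # concatenation of the two context vectors
        h_i = [math.tanh(z) for z in matvec(W_hidden, c_i)]  # assumed tanh
        P.append(matvec(W_out, h_i))  # one score per tag
    return P


def rand_vec(rng, size):
    return [rng.uniform(-1.0, 1.0) for _ in range(size)]


rng = random.Random(0)
n, d, hidden, k = 4, 3, 5, 2  # toy sizes
l = [rand_vec(rng, d) for _ in range(n)]
r = [rand_vec(rng, d) for _ in range(n)]
W_hidden = [rand_vec(rng, 2 * d) for _ in range(hidden)]
W_out = [rand_vec(rng, hidden) for _ in range(k)]
P = emission_scores(l, r, W_hidden, W_out)
```

The rows of `P` are unnormalized scores: no softmax is applied, since normalization happens over whole tag sequences in the CRF layer.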