Using Java and Kafka to read data from InfluxDB

Date: 2023-03-13 22:18:47

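In Java, data stored in InfluxDB can be delivered through Kafka: the application queries InfluxDB with a Java client library such as influxdb-java or influx4j, then publishes the query results to a Kafka topic. Kafka does not query InfluxDB itself; it only transports the results, so any program that needs the data reads it by consuming that topic.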
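The query-then-publish flow can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a definitive implementation: it uses only the JDK so it runs without a broker or database, and the names `Row`, `InfluxToKafkaSketch`, `toRows`, `toJson` and `forward`, as well as the JSON message format, are hypothetical. In a real application the rows would typically come from an influxdb-java query result (series name, columns and values), and the sink would wrap a call such as `producer.send(new ProducerRecord<>(topic, key, value))` on a Kafka producer.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.BiConsumer;

/** One queried row: the measurement name plus its values keyed by column name. */
final class Row {
    final String measurement;
    final Map<String, Object> columns;

    Row(String measurement, Map<String, Object> columns) {
        this.measurement = measurement;
        this.columns = columns;
    }
}

final class InfluxToKafkaSketch {

    /**
     * Pairs column names with row values. With influxdb-java the inputs would
     * typically be taken from each series of a query result (assumption).
     */
    static List<Row> toRows(String measurement, List<String> columns, List<List<Object>> values) {
        List<Row> rows = new ArrayList<>();
        for (List<Object> value : values) {
            Map<String, Object> byColumn = new LinkedHashMap<>();
            for (int i = 0; i < columns.size(); i++) {
                byColumn.put(columns.get(i), value.get(i));
            }
            rows.add(new Row(measurement, byColumn));
        }
        return rows;
    }

    /** Serializes a row as a flat JSON object (hypothetical, minimal message format). */
    static String toJson(Row row) {
        StringBuilder json = new StringBuilder("{\"measurement\":").append(quote(row.measurement));
        for (Map.Entry<String, Object> e : row.columns.entrySet()) {
            json.append(',').append(quote(e.getKey())).append(':');
            Object v = e.getValue();
            // Numbers, booleans and null are written bare; everything else is quoted.
            boolean bare = v == null || v instanceof Number || v instanceof Boolean;
            json.append(bare ? String.valueOf(v) : quote(v.toString()));
        }
        return json.append('}').toString();
    }

    /** Escapes backslashes and double quotes only; enough for this sketch. */
    private static String quote(String s) {
        return '"' + s.replace("\\", "\\\\").replace("\"", "\\\"") + '"';
    }

    /**
     * Sends every row to the sink as (key, value) and returns the row count.
     * A real sink would forward each pair to a Kafka producer (assumption).
     */
    static int forward(List<Row> rows, BiConsumer<String, String> sink) {
        for (Row row : rows) {
            sink.accept(row.measurement, toJson(row));
        }
        return rows.size();
    }

    public static void main(String[] args) {
        // Sample data standing in for the result of an InfluxDB query.
        List<Row> rows = toRows(
                "cpu",
                Arrays.asList("time", "usage"),
                Arrays.asList(Arrays.<Object>asList("2023-03-13T22:18:47Z", 0.5)));
        // Standard output stands in for the Kafka topic.
        forward(rows, (key, value) -> System.out.println(key + " -> " + value));
    }
}
```

Keeping the sink as a `BiConsumer` is a design choice for the sketch: the conversion logic can be checked in isolation, and the Kafka producer can be swapped in later without touching it.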

最新推荐

Kafka使用Java客户端进行访问的示例代码

Java 是一种广泛使用的编程语言,很多开发者使用 Java 进行 Kafka 的开发。那么,如何使用 Java 客户端来访问 Kafka 呢?下面我们将详细介绍如何使用 Java 客户端来访问 Kafka。 一、环境依赖 首先,我们需要准备...

kafka生产者和消费者的javaAPI的示例代码

在使用 Kafka 的 Java API 时,需要添加相应的依赖项。在 Maven 项目中,可以添加以下依赖项: ```xml <groupId>org.apache.kafka <artifactId>kafka_2.11 <version>0.10.1.1 <!-- 其他依赖项 --> ``` ...

Spring Boot集群管理工具KafkaAdminClient使用方法解析

Spring Boot 集群管理工具 KafkaAdminClient 使用方法解析 KafkaAdminClient 是 Spring Boot 集群管理工具中的一部分,主要用于管理和检视 Kafka 集群中的Topic、Broker、ACL 等对象。下面将详细介绍 Kafka...

kafka+flume 实时采集oracle数据到hive中.docx

首先,需要使用Kafka Connector从Oracle数据库中提取日志信息,然后将其写入到Kafka Topic中。然后,使用Flume从Kafka Topic中提取数据,并将其写入到HDFS中。最后,使用Hive从HDFS中读取数据,并将其存储到Hive表中...

一篇看懂Java中的Unsafe类

Java中的`Unsafe`类是一个非常特殊的工具类,它位于`sun.misc`包下,不属于Java标准库的一部分。尽管如此,由于其强大的底层操作能力,它在许多高性能的Java框架和库中扮演着重要角色,例如Netty、Hadoop和Kafka。`...

平尾装配工作平台运输支撑系统设计与应用

资源摘要信息:"该压缩包文件名为‘行业分类-设备装置-用于平尾装配工作平台的运输支撑系统.zip’,虽然没有提供具体的标签信息,但通过文件标题可以推断出其内容涉及的是航空或者相关重工业领域内的设备装置。从标题来看,该文件集中讲述的是有关平尾装配工作平台的运输支撑系统,这是一种专门用于支撑和运输飞机平尾装配的特殊设备。 平尾,即水平尾翼,是飞机尾部的一个关键部件,它对于飞机的稳定性和控制性起到至关重要的作用。平尾的装配工作通常需要在一个特定的平台上进行,这个平台不仅要保证装配过程中平尾的稳定,还需要适应平尾的搬运和运输。因此,设计出一个合适的运输支撑系统对于提高装配效率和保障装配质量至关重要。 从‘用于平尾装配工作平台的运输支撑系统.pdf’这一文件名称可以推断,该PDF文档应该是详细介绍这种支撑系统的构造、工作原理、使用方法以及其在平尾装配工作中的应用。文档可能包括以下内容: 1. 支撑系统的设计理念:介绍支撑系统设计的基本出发点,如便于操作、稳定性高、强度大、适应性强等。可能涉及的工程学原理、材料学选择和整体结构布局等内容。 2. 结构组件介绍:详细介绍支撑系统的各个组成部分,包括支撑框架、稳定装置、传动机构、导向装置、固定装置等。对于每一个部件的功能、材料构成、制造工艺、耐腐蚀性以及与其他部件的连接方式等都会有详细的描述。 3. 工作原理和操作流程:解释运输支撑系统是如何在装配过程中起到支撑作用的,包括如何调整支撑点以适应不同重量和尺寸的平尾,以及如何进行运输和对接。操作流程部分可能会包含操作步骤、安全措施、维护保养等。 4. 应用案例分析:可能包含实际操作中遇到的问题和解决方案,或是对不同机型平尾装配过程的支撑系统应用案例的详细描述,以此展示系统的实用性和适应性。 5. 技术参数和性能指标:列出支撑系统的具体技术参数,如载重能力、尺寸规格、工作范围、可调节范围、耐用性和可靠性指标等,以供参考和评估。 6. 安全和维护指南:对于支撑系统的使用安全提供指导,包括操作安全、应急处理、日常维护、定期检查和故障排除等内容。 该支撑系统作为专门针对平尾装配而设计的设备,对于飞机制造企业来说,掌握其详细信息是提高生产效率和保障产品质量的重要一环。同时,这种支撑系统的设计和应用也体现了现代工业在专用设备制造方面追求高效、安全和精确的趋势。"

管理建模和仿真的文件

管理Boualem Benatallah引用此版本:布阿利姆·贝纳塔拉。管理建模和仿真。约瑟夫-傅立叶大学-格勒诺布尔第一大学,1996年。法语。NNT:电话:00345357HAL ID:电话:00345357https://theses.hal.science/tel-003453572008年12月9日提交HAL是一个多学科的开放存取档案馆,用于存放和传播科学研究论文,无论它们是否被公开。论文可以来自法国或国外的教学和研究机构,也可以来自公共或私人研究中心。L’archive ouverte pluridisciplinaire

MATLAB遗传算法探索:寻找随机性与确定性的平衡艺术

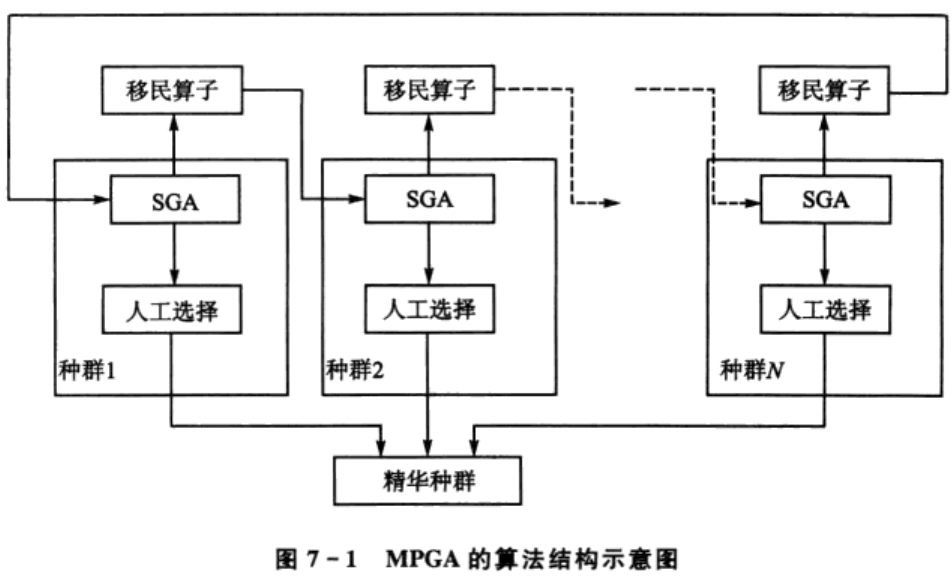

# 1. 遗传算法的基本概念与起源 遗传算法(Genetic Algorithm, GA)是一种模拟自然选择和遗传学机制的搜索优化算法。起源于20世纪60年代末至70年代初,由John Holland及其学生和同事们在研究自适应系统时首次提出,其理论基础受到生物进化论的启发。遗传算法通过编码一个潜在解决方案的“基因”,构造初始种群,并通过选择、交叉(杂交)和变异等操作模拟生物进化过程,以迭代的方式不断优化和筛选出最适应环境的

如何在S7-200 SMART PLC中使用MB_Client指令实现Modbus TCP通信?请详细解释从连接建立到数据交换的完整步骤。

为了有效地掌握S7-200 SMART PLC中的MB_Client指令,以便实现Modbus TCP通信,建议参考《S7-200 SMART Modbus TCP教程:MB_Client指令与功能码详解》。本教程将引导您了解从连接建立到数据交换的整个过程,并详细解释每个步骤中的关键点。 参考资源链接:[S7-200 SMART Modbus TCP教程:MB_Client指令与功能码详解](https://wenku.csdn.net/doc/119yes2jcm?spm=1055.2569.3001.10343) 首先,确保您的S7-200 SMART CPU支持开放式用户通

MAX-MIN Ant System:用MATLAB解决旅行商问题

资源摘要信息:"Solve TSP by MMAS: Using MAX-MIN Ant System to solve Traveling Salesman Problem - matlab开发" 本资源为解决经典的旅行商问题(Traveling Salesman Problem, TSP)提供了一种基于蚁群算法(Ant Colony Optimization, ACO)的MAX-MIN蚁群系统(MAX-MIN Ant System, MMAS)的Matlab实现。旅行商问题是一个典型的优化问题,要求找到一条最短的路径,让旅行商访问每一个城市一次并返回起点。这个问题属于NP-hard问题,随着城市数量的增加,寻找最优解的难度急剧增加。 MAX-MIN Ant System是一种改进的蚁群优化算法,它在基本的蚁群算法的基础上,对信息素的更新规则进行了改进,以期避免过早收敛和局部最优的问题。MMAS算法通过限制信息素的上下界来确保算法的探索能力和避免过早收敛,它在某些情况下比经典的蚁群系统(Ant System, AS)和带有局部搜索的蚁群系统(Ant Colony System, ACS)更为有效。 在本Matlab实现中,用户可以通过调用ACO函数并传入一个TSP问题文件(例如"filename.tsp")来运行MMAS算法。该问题文件可以是任意的对称或非对称TSP实例,用户可以从特定的网站下载多种标准TSP问题实例,以供测试和研究使用。 使用此资源的用户需要注意,虽然该Matlab代码可以免费用于个人学习和研究目的,但若要用于商业用途,则需要联系作者获取相应的许可。作者的电子邮件地址为***。 此外,压缩包文件名为"MAX-MIN%20Ant%20System.zip",该压缩包包含Matlab代码文件和可能的示例数据文件。用户在使用之前需要将压缩包解压,并将文件放置在Matlab的适当工作目录中。 为了更好地理解和应用该资源,用户应当对蚁群优化算法有初步了解,尤其是对MAX-MIN蚁群系统的基本原理和运行机制有所掌握。此外,熟悉Matlab编程环境和拥有一定的编程经验将有助于用户根据个人需求修改和扩展算法。 在实际应用中,用户可以根据问题规模调整MMAS算法的参数,如蚂蚁数量、信息素蒸发率、信息素增量等,以获得最优的求解效果。此外,也可以结合其他启发式或元启发式算法,如遗传算法、模拟退火等,来进一步提高算法的性能。 总之,本资源为TSP问题的求解提供了一种有效的算法框架,且Matlab作为编程工具的易用性和强大的计算能力,使得该资源成为算法研究人员和工程技术人员的有力工具。通过本资源的应用,用户将能够深入探索并实现蚁群优化算法在实际问题中的应用,为解决复杂的优化问题提供一种新的思路和方法。