首页for i in range(training_steps): train_step() if i % display_step == 0: print("loss: %f " % (custom_loss()))

for i in range(training_steps): train_step() if i % display_step == 0: print("loss: %f " % (custom_loss()))

时间: 2024-04-28 16:24:33 浏览: 14

这段代码是一个训练循环,用于训练机器学习模型。循环次数为training_steps。在每一次循环中,调用train_step()函数进行一次训练。如果当前循环次数可以被display_step整除,就打印出当前模型的损失值(loss), 其中custom_loss()是自定义的损失函数。这个过程可以用来监控模型的训练进度,并且可以在训练的过程中进行调试和优化。

CSDN会员

开通CSDN年卡参与万元壕礼抽奖

最新推荐

解决keras,val_categorical_accuracy:,0.0000e+00问题

index = [i for i in range(len(x_train))] np.random.shuffle(index) x_train = x_train[index] y_train = y_train[index] # 继续进行模型训练 model.fit(x_train, y_train, batch_size=32, epochs=10, validation...

BSC关键绩效财务与客户指标详解

BSC(Balanced Scorecard,平衡计分卡)是一种战略绩效管理系统,它将企业的绩效评估从传统的财务维度扩展到非财务领域,以提供更全面、深入的业绩衡量。在提供的文档中,BSC绩效考核指标主要分为两大类:财务类和客户类。

1. 财务类指标:

- 部门费用的实际与预算比较:如项目研究开发费用、课题费用、招聘费用、培训费用和新产品研发费用,均通过实际支出与计划预算的百分比来衡量,这反映了部门在成本控制上的效率。

- 经营利润指标:如承保利润、赔付率和理赔统计,这些涉及保险公司的核心盈利能力和风险管理水平。

- 人力成本和保费收益:如人力成本与计划的比例,以及标准保费、附加佣金、续期推动费用等与预算的对比,评估业务运营和盈利能力。

- 财务效率:包括管理费用、销售费用和投资回报率,如净投资收益率、销售目标达成率等,反映公司的财务健康状况和经营效率。

2. 客户类指标:

- 客户满意度:通过包装水平客户满意度调研,了解产品和服务的质量和客户体验。

- 市场表现:通过市场销售月报和市场份额,衡量公司在市场中的竞争地位和销售业绩。

- 服务指标:如新契约标保完成度、续保率和出租率,体现客户服务质量和客户忠诚度。

- 品牌和市场知名度:通过问卷调查、公众媒体反馈和总公司级评价来评估品牌影响力和市场认知度。

BSC绩效考核指标旨在确保企业的战略目标与财务和非财务目标的平衡,通过量化这些关键指标,帮助管理层做出决策,优化资源配置,并驱动组织的整体业绩提升。同时,这份指标汇总文档强调了财务稳健性和客户满意度的重要性,体现了现代企业对多维度绩效管理的重视。

管理建模和仿真的文件

管理Boualem Benatallah引用此版本:布阿利姆·贝纳塔拉。管理建模和仿真。约瑟夫-傅立叶大学-格勒诺布尔第一大学,1996年。法语。NNT:电话:00345357HAL ID:电话:00345357https://theses.hal.science/tel-003453572008年12月9日提交HAL是一个多学科的开放存取档案馆,用于存放和传播科学研究论文,无论它们是否被公开。论文可以来自法国或国外的教学和研究机构,也可以来自公共或私人研究中心。L’archive ouverte pluridisciplinaire

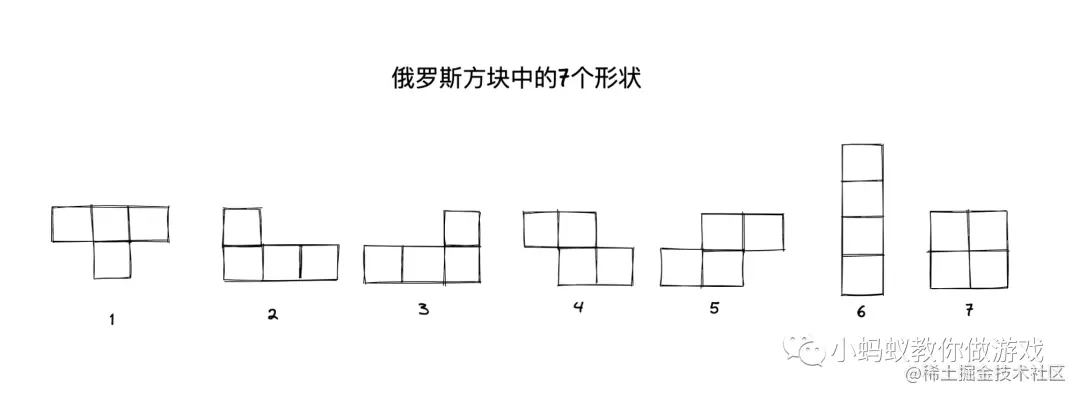

【实战演练】俄罗斯方块:实现经典的俄罗斯方块游戏,学习方块生成和行消除逻辑。

# 1. 俄罗斯方块游戏概述**

俄罗斯方块是一款经典的益智游戏,由阿列克谢·帕基特诺夫于1984年发明。游戏目标是通过控制不断下落的方块,排列成水平线,消除它们并获得分数。俄罗斯方块风靡全球,成为有史以来最受欢迎的视频游戏之一。

# 2.

卷积神经网络实现手势识别程序

卷积神经网络(Convolutional Neural Network, CNN)在手势识别中是一种非常有效的机器学习模型。CNN特别适用于处理图像数据,因为它能够自动提取和学习局部特征,这对于像手势这样的空间模式识别非常重要。以下是使用CNN实现手势识别的基本步骤:

1. **输入数据准备**:首先,你需要收集或获取一组带有标签的手势图像,作为训练和测试数据集。

2. **数据预处理**:对图像进行标准化、裁剪、大小调整等操作,以便于网络输入。

3. **卷积层(Convolutional Layer)**:这是CNN的核心部分,通过一系列可学习的滤波器(卷积核)对输入图像进行卷积,以

绘制企业战略地图:从财务到客户价值的六步法

"BSC资料.pdf"

战略地图是一种战略管理工具,它帮助企业将战略目标可视化,确保所有部门和员工的工作都与公司的整体战略方向保持一致。战略地图的核心内容包括四个相互关联的视角:财务、客户、内部流程和学习与成长。

1. **财务视角**:这是战略地图的最终目标,通常表现为股东价值的提升。例如,股东期望五年后的销售收入达到五亿元,而目前只有一亿元,那么四亿元的差距就是企业的总体目标。

2. **客户视角**:为了实现财务目标,需要明确客户价值主张。企业可以通过提供最低总成本、产品创新、全面解决方案或系统锁定等方式吸引和保留客户,以实现销售额的增长。

3. **内部流程视角**:确定关键流程以支持客户价值主张和财务目标的实现。主要流程可能包括运营管理、客户管理、创新和社会责任等,每个流程都需要有明确的短期、中期和长期目标。

4. **学习与成长视角**:评估和提升企业的人力资本、信息资本和组织资本,确保这些无形资产能够支持内部流程的优化和战略目标的达成。

绘制战略地图的六个步骤:

1. **确定股东价值差距**:识别与股东期望之间的差距。

2. **调整客户价值主张**:分析客户并调整策略以满足他们的需求。

3. **设定价值提升时间表**:规划各阶段的目标以逐步缩小差距。

4. **确定战略主题**:识别关键内部流程并设定目标。

5. **提升战略准备度**:评估并提升无形资产的战略准备度。

6. **制定行动方案**:根据战略地图制定具体行动计划,分配资源和预算。

战略地图的有效性主要取决于两个要素:

1. **KPI的数量及分布比例**:一个有效的战略地图通常包含20个左右的指标,且在四个视角之间有均衡的分布,如财务20%,客户20%,内部流程40%。

2. **KPI的性质比例**:指标应涵盖财务、客户、内部流程和学习与成长等各个方面,以全面反映组织的绩效。

战略地图不仅帮助管理层清晰传达战略意图,也使员工能更好地理解自己的工作如何对公司整体目标产生贡献,从而提高执行力和组织协同性。

"互动学习:行动中的多样性与论文攻读经历"

多样性她- 事实上SCI NCES你的时间表ECOLEDO C Tora SC和NCESPOUR l’Ingén学习互动,互动学习以行动为中心的强化学习学会互动,互动学习,以行动为中心的强化学习计算机科学博士论文于2021年9月28日在Villeneuve d'Asq公开支持马修·瑟林评审团主席法布里斯·勒菲弗尔阿维尼翁大学教授论文指导奥利维尔·皮耶昆谷歌研究教授:智囊团论文联合主任菲利普·普雷教授,大学。里尔/CRISTAL/因里亚报告员奥利维耶·西格德索邦大学报告员卢多维奇·德诺耶教授,Facebook /索邦大学审查员越南圣迈IMT Atlantic高级讲师邀请弗洛里安·斯特鲁布博士,Deepmind对于那些及时看到自己错误的人...3谢谢你首先,我要感谢我的两位博士生导师Olivier和Philippe。奥利维尔,"站在巨人的肩膀上"这句话对你来说完全有意义了。从科学上讲,你知道在这篇论文的(许多)错误中,你是我可以依

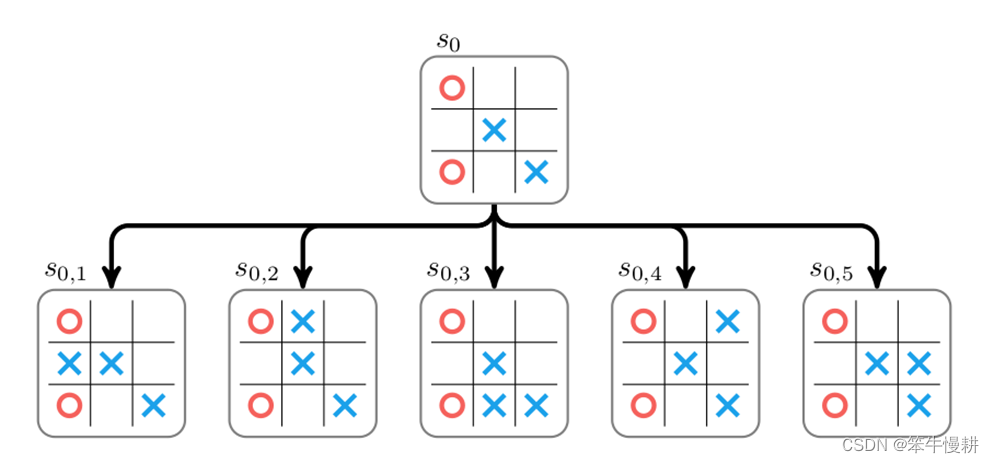

【实战演练】井字棋游戏:开发井字棋游戏,重点在于AI对手的实现。

# 2.1 井字棋游戏规则

井字棋游戏是一个两人对弈的游戏,在3x3的棋盘上进行。玩家轮流在空位上放置自己的棋子(通常为“X”或“O”),目标是让自己的棋子连成一条直线(水平、垂直或对角线)。如果某位玩家率先完成这一目标,则该玩家获胜。

游戏开始时,棋盘上所有位置都为空。玩家轮流放置自己的棋子,直到出现以下情况之一:

* 有玩家连成一条直线,获胜。

* 棋盘上所有位置都被占满,平局。

transformer模型对话

Transformer模型是一种基于自注意力机制的深度学习架构,最初由Google团队在2017年的论文《Attention is All You Need》中提出,主要用于自然语言处理任务,如机器翻译和文本生成。Transformer完全摒弃了传统的循环神经网络(RNN)和卷积神经网络(CNN),转而采用全连接的方式处理序列数据,这使得它能够并行计算,极大地提高了训练速度。

在对话系统中,Transformer模型通过编码器-解码器结构工作。编码器将输入序列转化为固定长度的上下文向量,而解码器则根据这些向量逐步生成响应,每一步都通过自注意力机制关注到输入序列的所有部分,这使得模型能够捕捉到

BSC关键绩效指标详解:财务与运营效率评估

BSC(Balanced Scorecard,平衡计分卡)是一种企业绩效管理系统,它将公司的战略目标分解为四个维度:财务、客户、内部流程和学习与成长。在这个文档中,我们看到的是针对特定行业(可能是保险或保险经纪)的BSC绩效考核指标汇总,专注于财务类和非财务类的关键绩效指标(KPIs)。

财务类指标:

1. 部门费用预算达成率:衡量实际支出与计划费用之间的对比,通过公式 (实际部门费用/计划费用)*100% 来计算,数据来源于部门的预算和实际支出记录。

2. 项目研究开发费用预算达成率:同样用于评估研发项目的资金管理,公式为 (实际项目研究开发费用/计划费用)*100%。

3. 课题费用预算达成率、招聘费用预算达成率、培训费用预算达成率 和 新产品研究开发费用预算达成率:这些都是人力资源相关开支的预算执行情况,涉及到费用的实际花费与计划金额的比例。

4. 承保利润:衡量保险公司盈利能力的重要指标,包括赔付率和寿险各险种的死差损益(实际死亡率与预期死亡率的差异)。

5. 赔付率:反映保险公司的赔付情况,是业务健康度的一个关键指标。

6. 内嵌价值的增加:代表了保单的价值增长,反映了公司长期盈利能力。

7. 人力成本总额控制率:通过比较实际人力成本与计划成本来评估人力成本的有效管理。

8. 标准保费达成率:衡量公司的销售业绩,即实际收取保费与目标保费的比率。

9. 其他费用比率,如附加佣金、续期推动费用、业务推动费用等,用来评估营销费用的效率。

非财务类指标:

1. 销售目标达成率:衡量销售团队完成预定目标的程度,通过实际销售额与计划销售额的比率计算。

2. 理赔率:体现客户服务质量和效率,涉及保险公司处理理赔请求的速度和成功率。

3. 产品/服务销售收入达成率:衡量产品或服务的实际销售效果,反映市场响应和客户满意度。

这些指标集合在一起,提供了全面的视角来评估公司的经营效率、财务表现以及战略执行情况。通过定期跟踪和分析这些数据,企业可以持续优化策略,提升业绩,确保与整体战略目标的一致性。每个指标的数据来源通常来自于相关部门的预算和实际操作记录,确保信息的准确性。