INTERNATIONAL JOURNAL OF PROGNOSTICS AND HEALTH MANAGEMENT


where:

$c_{11}$ is the number of true positives;

$c_{12}$ is the number of false positives;

$c_{21}$ is the number of false negatives; and

$c_{22}$ is the number of true negatives.

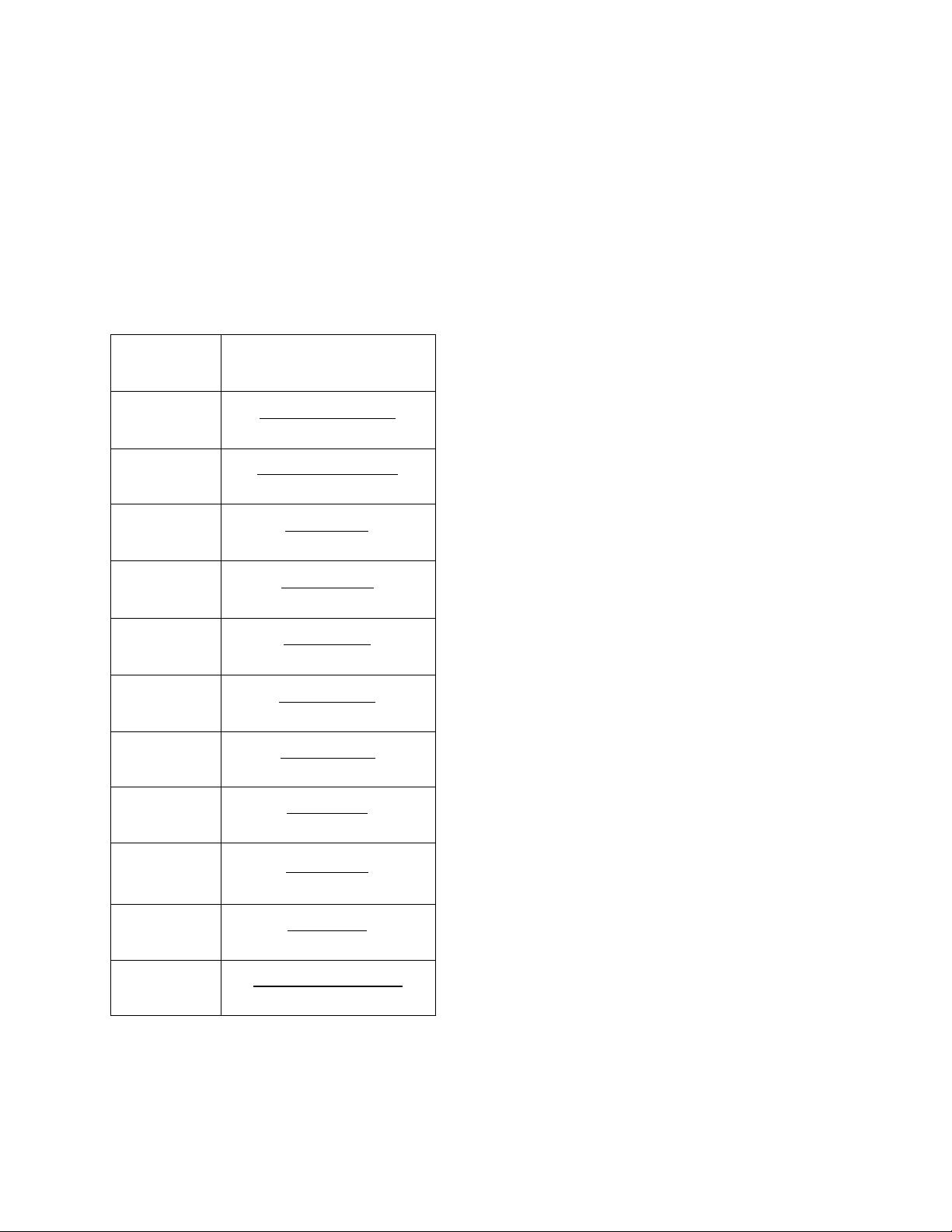

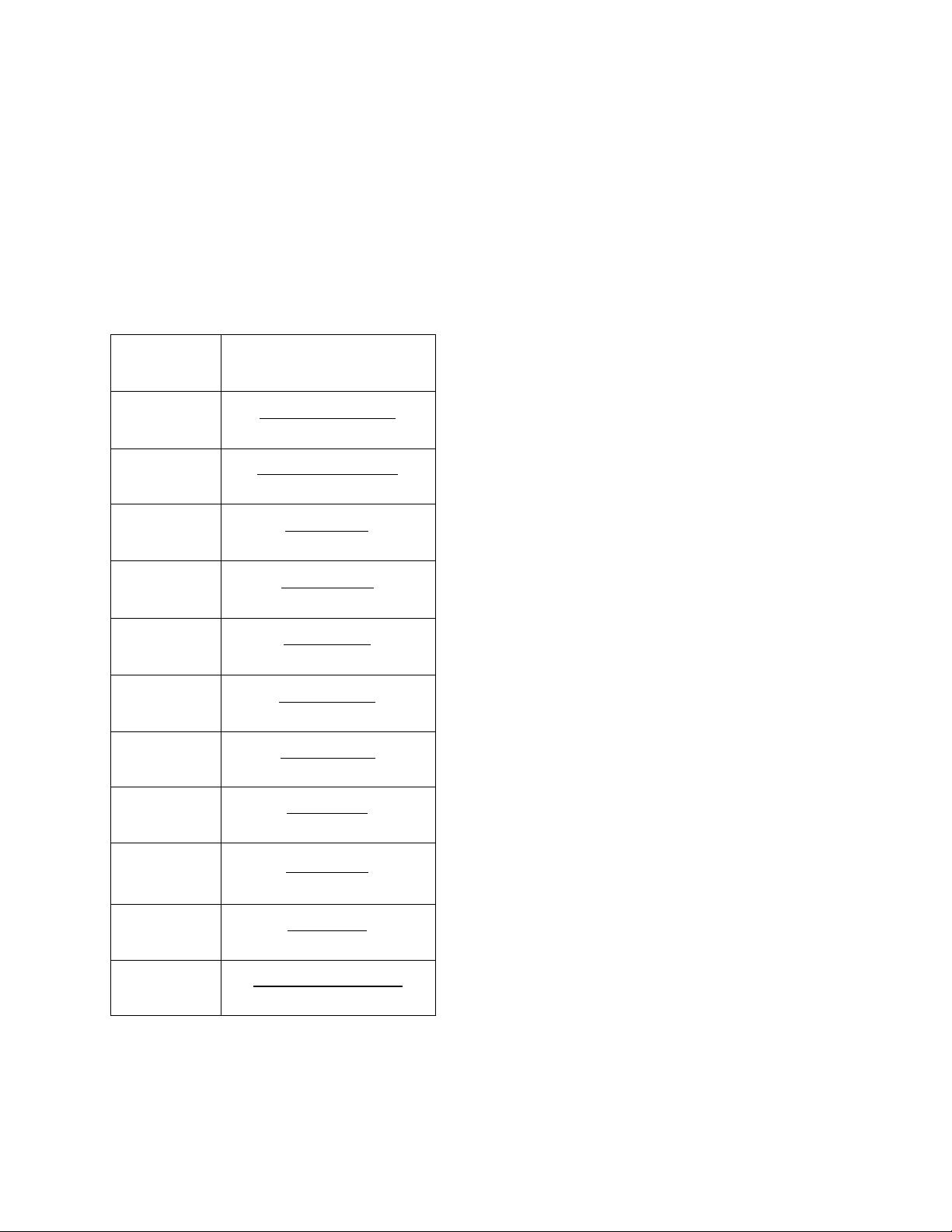

Table 1. The scoring metrics

$F = \dfrac{2 \cdot \text{precision} \cdot \text{recall}}{\text{precision} + \text{recall}}$
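As a minimal sketch of how such scoring metrics follow from the four confusion-matrix counts, the Python snippet below assumes the standard definitions of accuracy, precision, recall (TPR), FPR, and F-measure. The class name `ConfusionMatrix`, its method names, and the example counts are hypothetical and chosen only for illustration; the exact set of metrics in Table 1 may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionMatrix:
    """Counts of a binary confusion matrix (hypothetical helper class)."""
    c11: int  # true positives
    c12: int  # false positives
    c21: int  # false negatives
    c22: int  # true negatives

    # All methods assume non-zero denominators.
    def accuracy(self) -> float:
        total = self.c11 + self.c12 + self.c21 + self.c22
        return (self.c11 + self.c22) / total

    def precision(self) -> float:
        return self.c11 / (self.c11 + self.c12)

    def recall(self) -> float:
        # Recall is the same quantity as the true positive rate (TPR).
        return self.c11 / (self.c11 + self.c21)

    def fpr(self) -> float:
        # False positive rate: share of actual negatives flagged as positive.
        return self.c12 / (self.c12 + self.c22)

    def f_measure(self) -> float:
        p, r = self.precision(), self.recall()
        return 2 * p * r / (p + r)


# Illustrative counts only, not data from the paper.
cm = ConfusionMatrix(c11=40, c12=10, c21=20, c22=30)
print(cm.accuracy(), cm.precision(), cm.recall(), cm.fpr(), cm.f_measure())
```

Keeping the counts in one immutable object makes it harder to mix up $c_{12}$ (false positives) and $c_{21}$ (false negatives), whose positions differ between confusion-matrix conventions.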

From this confusion matrix, scoring metrics are computed as shown in Table 1. These scoring metrics are widely used to compare or rank the performance of classifiers because they are simple, easy to compute, and easy to understand. Such an evaluation typically examines unbiased estimates of the predictive accuracy of different classifiers (Srinivasan 1999). The assumption is that the performance estimates are subject to sampling errors only and that the classifier with the highest accuracy is the “best” choice for a given problem. However, these metrics overlook two important practical concerns: class distribution and the cost of misclassification. For example, the accuracy metric assumes that the class distribution is known in the training and testing datasets and that the misclassification costs of false positive and false negative errors are equal (Provost 1998). In real-world applications, the costs of different misclassification errors may not be the same, and it is sometimes desirable to minimize the misclassification cost rather than the error rate of a classification task.
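The gap between error rate and misclassification cost can be illustrated with a small sketch. The two classifiers, their error counts, and the cost values below are hypothetical and assumed purely for illustration (here a false negative is taken to cost ten times as much as a false positive); they are not results from the paper.

```python
def error_rate(fp: int, fn: int, total: int) -> float:
    """Fraction of test cases that are misclassified."""
    return (fp + fn) / total


def total_cost(fp: int, fn: int, cost_fp: float, cost_fn: float) -> float:
    """Total misclassification cost with separate costs per error type."""
    return fp * cost_fp + fn * cost_fn


# Two hypothetical classifiers evaluated on the same 1000 test cases.
TOTAL = 1000
a = {"fp": 10, "fn": 40}  # fewer errors overall, but many false negatives
b = {"fp": 60, "fn": 10}  # more errors overall, but few false negatives

# Assumed costs: a false negative is 10x as expensive as a false positive.
COST_FP, COST_FN = 1.0, 10.0

err_a = error_rate(a["fp"], a["fn"], TOTAL)
err_b = error_rate(b["fp"], b["fn"], TOTAL)
cost_a = total_cost(a["fp"], a["fn"], COST_FP, COST_FN)
cost_b = total_cost(b["fp"], b["fn"], COST_FP, COST_FN)

print(f"A: error rate {err_a:.2%}, cost {cost_a}")
print(f"B: error rate {err_b:.2%}, cost {cost_b}")
```

Under these assumed costs, classifier A has the lower error rate but the higher total cost, so ranking by accuracy and ranking by cost would pick different classifiers.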

2.3. Graphical methods

To overcome the limitations of scoring metrics and to take prior class distributions and misclassification costs into account, several graphical methods have been developed. These graphical methods are ROC (Receiver Operating Characteristics) space, ROCCH (ROC Convex Hull), isometrics, AUC (Area Under the ROC Curve), cost curve, DEA (Data Envelopment Analysis), and lift curve. They are also useful for visualizing the performance of a classifier. An overview of each method follows.

2.3.1. ROC Space

ROC analysis was initially developed to express the trade-off between hit rate and false alert rate in signal detection theory (Egan 1975). It is now also used for evaluating classifier performance (Bradley 1997, Provost and Fawcett 2001, 1997). In particular, ROC analysis is a powerful way to evaluate the performance of binary classifiers, and it has become popular because of its simple graphical representation of overall performance.

Using TPR and FPR from Table 1 as the Y-axis and X-axis, respectively, an ROC space can be plotted. In this ROC space, each point (FPR, TPR) represents a classifier (Fawcett 2003, Flach 2004, 2003). Figure 1 shows an example of a basic ROC graph for five discrete classifiers.
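The mapping from a discrete classifier to a point in ROC space can be sketched as follows. The classifier names A, B, and C and their counts are hypothetical, not the classifiers of Figure 1, and the `dominates` helper is one simple assumed way to compare two points: a point is taken to be better when it has no higher FPR and no lower TPR, and differs on at least one axis.

```python
from typing import Dict, Tuple

Point = Tuple[float, float]  # (FPR, TPR)


def roc_point(tp: int, fp: int, fn: int, tn: int) -> Point:
    """Map confusion-matrix counts to a point (FPR, TPR) in ROC space."""
    fpr = fp / (fp + tn)  # X-axis
    tpr = tp / (tp + fn)  # Y-axis
    return fpr, tpr


def dominates(p: Point, q: Point) -> bool:
    """True if p lies up and/or left of q (no worse on both axes, not equal)."""
    return p[0] <= q[0] and p[1] >= q[1] and p != q


# Hypothetical (tp, fp, fn, tn) counts for three discrete classifiers.
counts = {
    "A": (80, 10, 20, 90),
    "B": (60, 30, 40, 70),
    "C": (95, 50, 5, 50),
}
points: Dict[str, Point] = {name: roc_point(*c) for name, c in counts.items()}

for name, (fpr, tpr) in points.items():
    print(f"{name}: FPR={fpr:.2f}, TPR={tpr:.2f}")

print("A dominates B:", dominates(points["A"], points["B"]))
print("A dominates C:", dominates(points["A"], points["C"]))
```

With these illustrative counts, A dominates B, while A and C do not dominate each other: C has the higher TPR but also the higher FPR, so position alone does not rank them.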

Based on the position of a classifier in ROC space, we can evaluate or rank the performance of classifiers. In ROC space, the point (0, 1) represents a perfect classifier, which has 100% accuracy and a zero error rate. A point closer to the upper left indicates a classifier with a higher TPR and a lower FPR. For instance, C4.5 has a higher TPR and a lower FPR than nB. A classifier on the upper right-hand side of an ROC space makes positive classifications on relatively weak evidence and so has a higher TPR and a higher FPR. Therefore, the performance of a classifier is determined by a trade-off between TPR and FPR. However, it is hard to decide which classifier is best from ROC space. For