and robustness is presented in Section 4. Section 5 presents the experimental results and explores the rationality of DRMF. Finally, Section 6 offers conclusions and future work.

2. Related work

Assume that $x_i = [x_{i1}, \ldots, x_{in}]^T$ is a sample represented by a set $F$ of $n$ features and every sample is generated independently at random according to some fixed but unknown distribution $D$. Let $X$ be an $N \times n$ matrix containing the training set and $Y = [y_1, \ldots, y_N]^T$ be an $N$-dimensional vector containing the class labels for the data, where $y_i$ is the class label of $x_i$ from the set of class labels $\{\omega_1, \ldots, \omega_c\}$. Let $\{C_1, \ldots, C_L\}$ be the set of base classifiers in an ensemble. In this paper, our aim is to obtain an ensemble system with small generalization error via optimizing the margin distribution. Here, the generalization error of a classifier $C_j$ is the probability of $C_j(x) \neq y$ when an example $(x, y)$ is chosen at random according to the distribution $D$, and it is denoted as $P_D[C_j(x) \neq y]$. The margin distribution is a function of $\theta$ which gives the fraction of samples whose margin is smaller than $\theta$. A good margin distribution means that most examples have large margins.

Definition 1. Given $x_i \in X$, $h_{ij}$ ($j = 1, 2, \ldots, L$) is the output of $x_i$ from $C_j$. We define

$$d_{ij} = \begin{cases} 1, & \text{if } y_i = h_{ij} \\ -1, & \text{if } y_i \neq h_{ij}, \end{cases} \tag{1}$$

where $y_i$ is the real class label of $x_i$.

From this definition, we know that $d_{ij} = 1$ if $x_i$ is correctly classified by $C_j$; otherwise $d_{ij} = -1$.

Definition 2 [42]. Given $x_i \in X$, the margin of $x_i$ in terms of the ensemble is defined as

$$m(x_i) = \sum_{j=1}^{L} w_j d_{ij}, \tag{2}$$

where $w_j$ is the weight of $C_j$ and $w_j > 0$.
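
As an illustration of Definitions 1 and 2, the following minimal sketch computes the indicators $d_{ij}$, the ensemble margins $m(x_i)$, and the value of the margin distribution at a threshold. The function names, the toy labels, and the weights are assumptions made for this example only; they are not taken from the paper.

```python
def vote_indicators(y_true, outputs):
    """d[i][j] = +1 if classifier j labels sample i correctly, else -1 (Eq. (1))."""
    return [[1 if h == y else -1 for h in row] for y, row in zip(y_true, outputs)]


def margins(d, weights):
    """m(x_i) = sum_j w_j * d_ij (Eq. (2)); every weight must be positive."""
    if any(w <= 0 for w in weights):
        raise ValueError("all classifier weights must be positive")
    return [sum(w * d_ij for w, d_ij in zip(weights, row)) for row in d]


def margin_distribution(m, theta):
    """Fraction of samples whose margin is smaller than theta."""
    return sum(1 for value in m if value < theta) / len(m)


# Hypothetical toy data: 4 samples, 3 base classifiers.
y_true = ["a", "b", "a", "b"]
outputs = [
    ["a", "a", "b"],  # outputs h_1j for sample x_1
    ["b", "a", "a"],
    ["a", "a", "a"],
    ["a", "a", "b"],
]
weights = [0.5, 0.25, 0.25]  # assumed weights, chosen to sum to 1

d = vote_indicators(y_true, outputs)
m = margins(d, weights)
print(m)                            # margins of the four samples
print(margin_distribution(m, 0.5))  # share of samples with margin < 0.5
```

With weights that sum to one, each margin lies in $[-1, 1]$, and a sample is misclassified by the weighted vote when its margin is negative.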

In [42,51], it is shown that a small generalization error for a voting classifier can be obtained by a good margin distribution on the training set. Obviously, the performances of the base classifiers have a significant effect on the margin of $x_i$. At the same time, the diversity among base classifiers is another key factor. In [46], the underlying relationship between diversity and margin was analyzed.

Theorem 1 [46]. Let $H$ be the average classification accuracy of the base classifiers. If $H$ is regarded as a constant and if maximum diversity is achievable, maximization of the diversity among base classifiers is equivalent to maximization of the minimal margin of the ensemble on the training samples.

It should be noted that our aim is not to maximize the minimal margin of the ensemble, but to optimize the margin distribution. We use a disagreement measure [30] to measure the diversity of the base classifiers in our approach. The diversity between classifiers $C_j$ and $C_k$ is thus computed as

$$\mathrm{Dis}_{jk} = \frac{N^{01} + N^{10}}{N^{11} + N^{10} + N^{01} + N^{00}}, \tag{3}$$

where $N^{00}$ denotes the number of samples misclassified by both classifiers, $N^{11}$ is the number of samples correctly classified by both, $N^{10}$ denotes the number of samples which were correctly classified by $C_j$ but misclassified by $C_k$, and $N^{01}$ denotes the number of samples misclassified by $C_j$ but correctly classified by $C_k$. For multiple base classifiers, the overall diversity is computed as the average diversity of classifier pairs.
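
The disagreement measure can be sketched as follows. Because the denominator of Eq. (3) is the total number of samples, the measure reduces to the fraction of samples on which exactly one of the two classifiers is correct. The function names and the correctness table below are hypothetical and serve only to illustrate the computation.

```python
from itertools import combinations


def disagreement(correct_j, correct_k):
    """Dis_jk of Eq. (3): (N01 + N10) divided by the number of samples."""
    if len(correct_j) != len(correct_k):
        raise ValueError("both classifiers must be evaluated on the same samples")
    differing = sum(1 for a, b in zip(correct_j, correct_k) if a != b)
    return differing / len(correct_j)


def ensemble_diversity(correct):
    """Average Dis_jk over all unordered pairs of base classifiers."""
    pairs = list(combinations(correct, 2))
    return sum(disagreement(a, b) for a, b in pairs) / len(pairs)


# Hypothetical correctness table: correct[j][i] is True when
# classifier C_j labels sample x_i correctly.
correct = [
    [True, True, False, True],
    [True, False, False, True],
    [False, True, True, True],
]

print(disagreement(correct[0], correct[1]))
print(ensemble_diversity(correct))
```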

In [39], Rodríguez and Kuncheva designed a method to generate ensembles based on feature transformation. The original feature space is randomly split into K subspaces (the subsets may be disjoint or may intersect), and PCA is then applied to each subspace; the resulting components form a "rotation" matrix that linearly rotates the feature space. Diversity among the base classifiers is promoted by the random splits of the feature set.
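
A simplified sketch of this kind of feature rotation is given below. It assumes disjoint feature subsets and plain PCA on all training samples, and it omits the other steps of the method in [39]; the function name `rotation_matrix`, the use of NumPy, and the random toy data are assumptions made for illustration.

```python
import numpy as np


def rotation_matrix(X, n_subsets, rng):
    """Block-structured rotation built from PCA on random disjoint feature subsets."""
    n_features = X.shape[1]
    # Randomly split the feature indices into n_subsets disjoint groups.
    subsets = np.array_split(rng.permutation(n_features), n_subsets)
    R = np.zeros((n_features, n_features))
    for idx in subsets:
        centered = X[:, idx] - X[:, idx].mean(axis=0)
        # Eigenvectors of the scatter matrix are the principal axes of the subset.
        _, vecs = np.linalg.eigh(centered.T @ centered)
        # eigh returns ascending eigenvalues; reverse to put the main axis first.
        R[np.ix_(idx, idx)] = vecs[:, ::-1]
    return R


rng = np.random.default_rng(0)
X = rng.normal(size=(30, 6))      # hypothetical data: 30 samples, 6 features
R = rotation_matrix(X, 3, rng)    # K = 3 subsets in this toy example
X_rot = X @ R                     # rotated training set for one base classifier
```

Each call with a different random split yields a different orthogonal matrix, so base classifiers trained on the rotated data see differently rotated versions of the same samples.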

Cai et al. [12] proposed a supervised algorithm for feature transformation, which can find a projection that maximizes the margin between different classes. For $x_i \in X$, denote by $N(x_i) = \{x_i^1, \ldots, x_i^e\}$ the set of its $e$ nearest neighbors and by $y_i$ the class label of $x_i$. We define

$$N_s(x_i) = \{x_i^j \mid y_i^j = y_i,\ 1 \le j \le e\}, \tag{4}$$

and

$$N_b(x_i) = \{x_i^j \mid y_i^j \neq y_i,\ 1 \le j \le e\}, \tag{5}$$

so that $N_s(x_i)$ contains the neighbors which share the same label with $x_i$, while $N_b(x_i)$ is the set of the neighbors which belong to the other classes.
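
The two neighbor sets can be sketched as below. The text does not state the distance used to find the nearest neighbors, so Euclidean distance is assumed here; the function names and the one-dimensional toy data are also hypothetical. Neighbors are represented by sample indices rather than by the samples themselves.

```python
import math


def nearest_neighbors(X, i, e):
    """Indices of the e nearest neighbors of X[i] (Euclidean distance assumed)."""
    others = [j for j in range(len(X)) if j != i]
    others.sort(key=lambda j: math.dist(X[i], X[j]))
    return others[:e]


def neighbor_sets(X, y, e):
    """Split each sample's e nearest neighbors into same-label and other-label sets."""
    same, other = [], []
    for i in range(len(X)):
        neighbors = nearest_neighbors(X, i, e)
        same.append({j for j in neighbors if y[j] == y[i]})    # Eq. (4)
        other.append({j for j in neighbors if y[j] != y[i]})   # Eq. (5)
    return same, other


# Hypothetical toy data: four one-dimensional samples from two classes.
X = [[0.0], [1.0], [2.5], [10.0]]
y = [0, 0, 1, 1]

same, other = neighbor_sets(X, y, e=1)
print(same)
print(other)
```

In this toy example only the third sample has a nearest neighbor from the other class, so it is the only sample with a non-empty second set.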

For any $x_i$ and $x_j$, we define

$$V_{b,ij} = \begin{cases} 1, & \text{if } x_i \in N_b(x_j) \text{ or } x_j \in N_b(x_i) \\ 0, & \text{otherwise}, \end{cases} \tag{6}$$

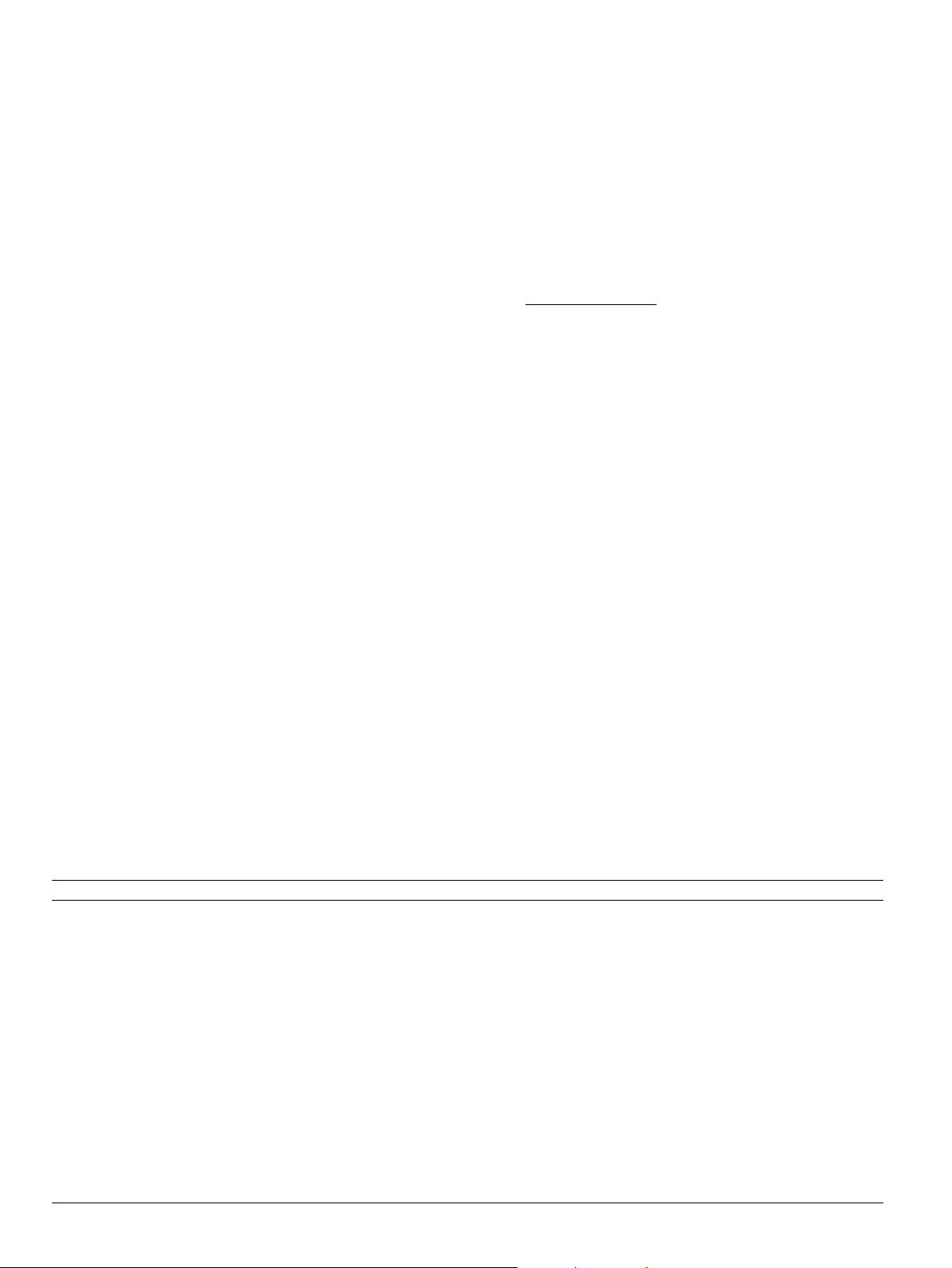

Table 3
Classification performance with different numbers of candidate base classifiers.

| Data set | L = 20 | L = 40 | L = 60 | L = 80 | L = 100 |
|---|---|---|---|---|---|
| Australian | 86.96 ± 3.01 | 88.13 ± 3.98 | 87.54 ± 2.90 | 87.97 ± 3.27 | 88.11 ± 3.48 |
| Crx | 85.66 ± 14.10 | 85.51 ± 14.66 | 85.64 ± 15.10 | 86.81 ± 13.20 | 86.37 ± 13.66 |
| Cmc | 52.82 ± 3.41 | 53.97 ± 3.30 | 53.70 ± 3.28 | 54.18 ± 2.95 | 54.24 ± 3.25 |
| Derm | 96.47 ± 3.90 | 96.98 ± 4.24 | 95.91 ± 5.26 | 96.47 ± 4.12 | 96.75 ± 3.84 |
| German | 75.40 ± 3.60 | 77.00 ± 3.40 | 77.00 ± 3.02 | 77.70 ± 2.45 | 77.80 ± 2.94 |
| Glass | 72.44 ± 12.14 | 74.44 ± 11.43 | 74.89 ± 13.25 | 78.14 ± 10.91 | 76.64 ± 10.61 |
| Heart | 83.33 ± 3.60 | 83.70 ± 4.68 | 84.81 ± 4.43 | 84.81 ± 4.77 | 84.44 ± 4.88 |
| Horse | 92.95 ± 4.06 | 92.94 ± 4.06 | 93.49 ± 3.38 | 93.22 ± 3.39 | 93.49 ± 3.83 |
| ICU | 94.04 ± 4.69 | 94.04 ± 4.69 | 93.56 ± 4.80 | 94.09 ± 5.21 | 93.56 ± 4.80 |
| Iono | 93.20 ± 4.00 | 92.92 ± 4.60 | 93.49 ± 4.95 | 93.77 ± 5.09 | 93.47 ± 4.76 |
| Iris | 94.67 ± 5.26 | 94.67 ± 5.26 | 94.67 ± 5.26 | 96.67 ± 4.71 | 98.67 ± 2.81 |
| Movement | 80.56 ± 15.15 | 82.78 ± 16.50 | 82.78 ± 16.57 | 82.44 ± 16.29 | 82.44 ± 16.29 |
| Pima | 77.87 ± 4.98 | 78.52 ± 3.90 | 78.39 ± 4.53 | 78.39 ± 4.32 | 78.78 ± 3.76 |
| Rice | 89.73 ± 10.18 | 88.82 ± 10.46 | 90.73 ± 12.86 | 89.82 ± 13.17 | 89.82 ± 13.17 |
| Spectf | 82.61 ± 5.18 | 83.34 ± 4.39 | 82.12 ± 7.21 | 83.25 ± 7.01 | 82.48 ± 7.91 |
| Thyroid | 94.83 ± 6.10 | 95.30 ± 6.31 | 94.83 ± 5.21 | 95.78 ± 5.19 | 96.26 ± 4.86 |
| Wiscon | 97.34 ± 2.59 | 97.43 ± 2.59 | 97.71 ± 2.15 | 97.34 ± 2.59 | 97.86 ± 2.36 |
| Wdbc | 97.19 ± 2.06 | 97.72 ± 1.66 | 98.43 ± 1.53 | 97.72 ± 1.66 | 97.72 ± 1.66 |
| Yeast | 73.11 ± 3.26 | 73.45 ± 3.12 | 73.45 ± 3.64 | 73.38 ± 3.61 | 73.25 ± 3.47 |
| Zoo | 94.39 ± 8.39 | 94.39 ± 8.39 | 94.39 ± 8.39 | 94.39 ± 8.39 | 94.39 ± 8.39 |
| Average | 85.78 | 86.30 | 86.38 | 86.82 | 86.83 |

92 Q. Hu et al. / Knowledge-Based Systems 67 (2014) 90–104