SHAO et al.: ASYMMETRIC CODING OF MULTI-VIEW VIDEO PLUS DEPTH BASED 3-D VIDEO FOR VIEW RENDERING 159

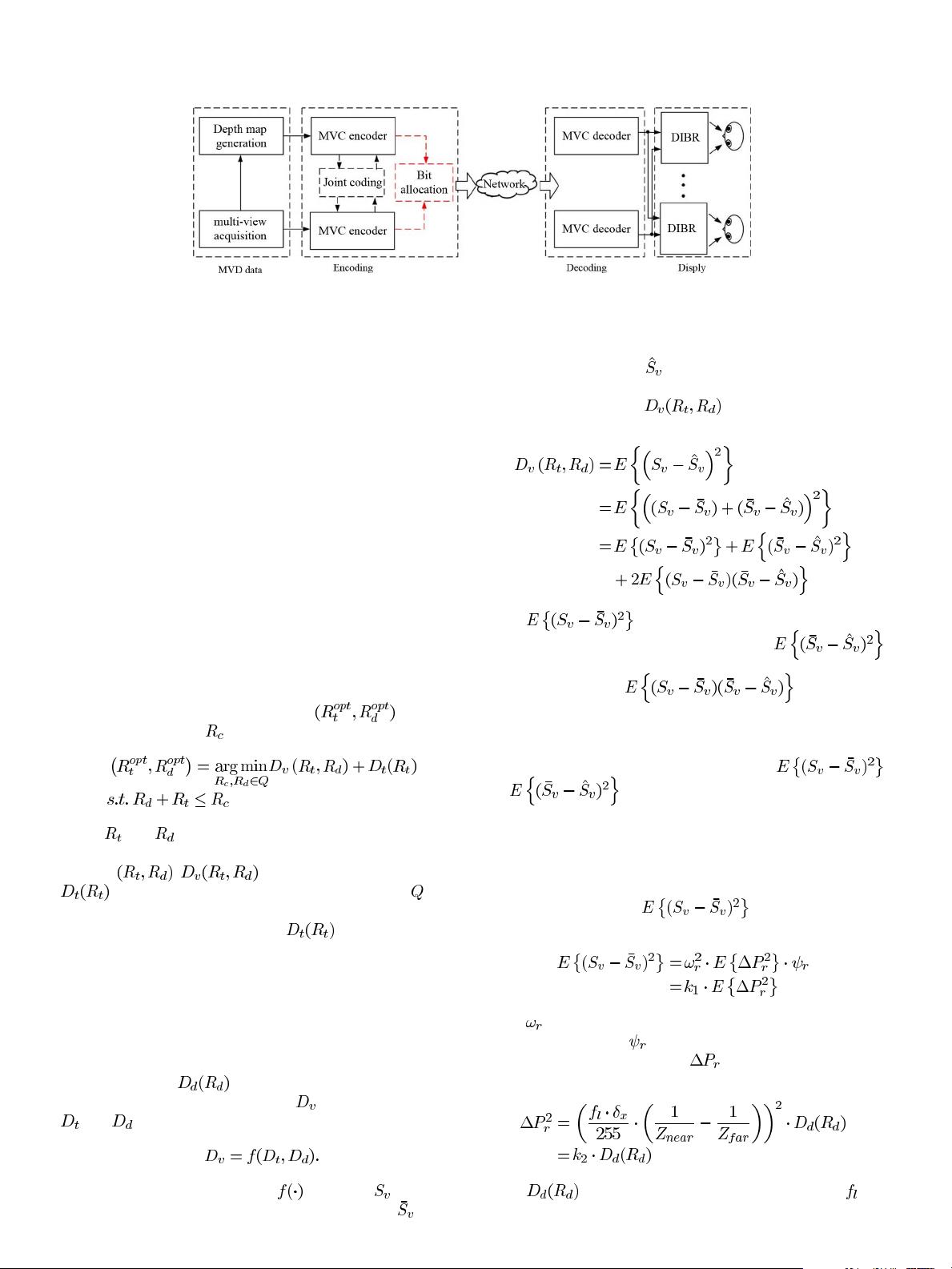

Fig. 1. System framework of the MVD-based 3-D video system.

asymmetric coding methods of 2-D video, the proposed asymmetric coding method of 3-D video mainly consists of two parts: asymmetric coding between texture videos and depth maps, and asymmetric coding among the texture videos themselves. In the system framework, the first part is fulfilled by a bit allocation model that takes only the objective quality as the optimization criterion, and the second part is fulfilled by a chrominance reconstruction model that takes the visual characteristics of binocular suppression into consideration. The two parts are then combined into one framework by implementing appropriate bitrate allocation and rate control strategies. As a result, the objective quality of the rendered virtual view is improved while the perceptual visual quality stays the same or nearly the same.

In the MVD-based 3-D video system, virtual views are rendered from the compressed texture videos and depth maps, so the distortions that compression introduces into the texture videos and depth maps propagate to the virtual views. Therefore, the optimal bitrate ratio between texture videos and depth maps must be determined in MVD-based 3-D video coding.

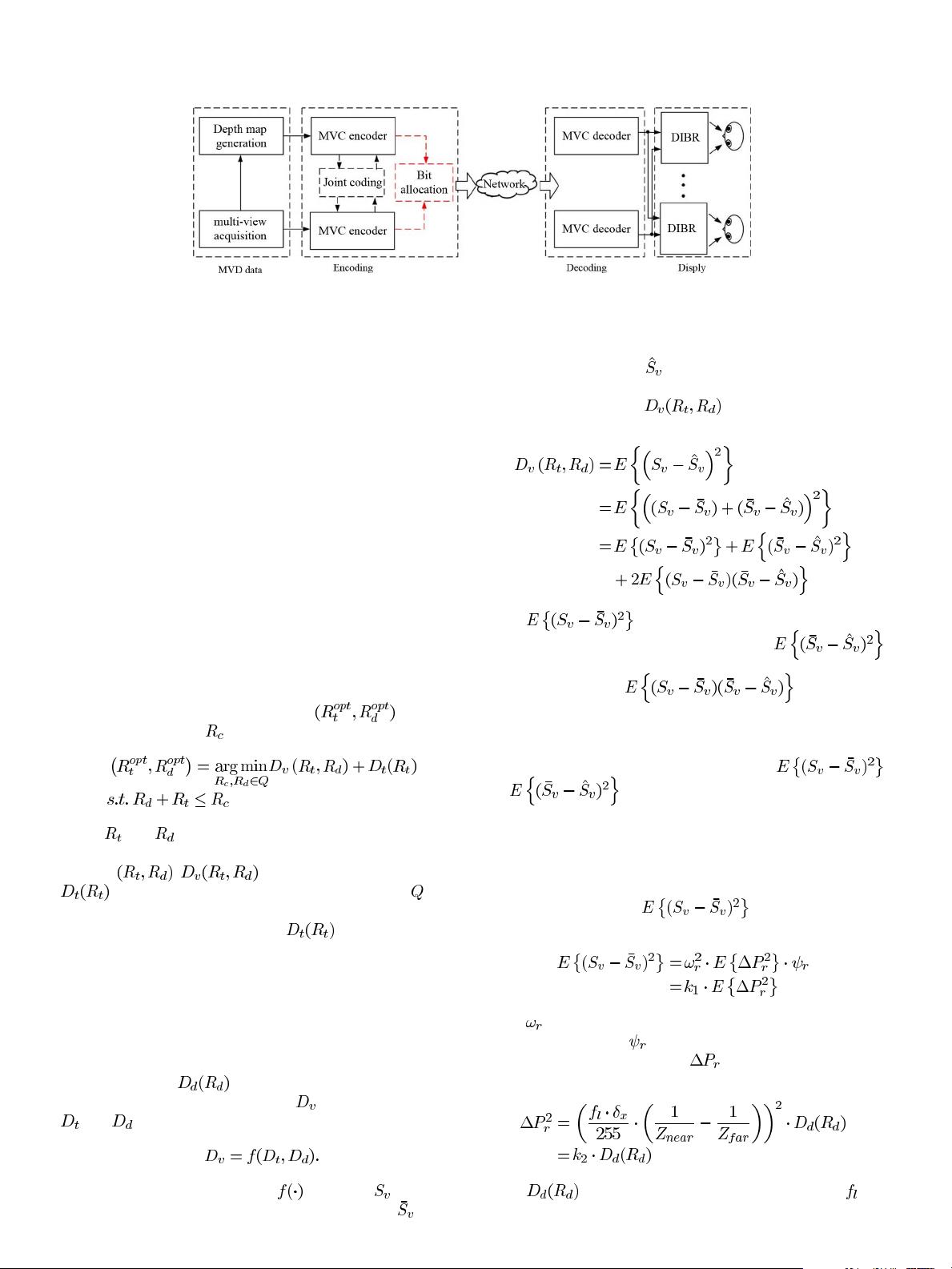

In order to seek the optimal bitrate pair, $(R_t^{opt}, R_d^{opt})$, under the total bitrate constraint $R_c$, the problem is formulated as

$$(R_t^{opt}, R_d^{opt}) = \arg\min_{(R_t, R_d) \in Q} \left[ D_v(R_t, R_d) + D_t(R_t) \right] \quad \text{s.t.} \quad R_t + R_d \le R_c \tag{1}$$

where $R_t$ and $R_d$ are the coding bitrates of texture videos and depth maps, respectively; together they form a bitrate pair, denoted as $(R_t, R_d)$. $D_v$ is the view rendering distortion, $D_t$ is the coding distortion of texture videos, and $Q$ is the candidate set of bitrate pairs. An important feature of the model is that the coding distortion $D_t$ of texture videos is also included, because texture videos usually need to maintain higher quality in order to remain compatible with 2-D display.
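The search for a bitrate pair described above can be illustrated with a minimal sketch. It assumes an exhaustive search over a small candidate set and an objective that simply adds the view rendering distortion to the texture coding distortion. The function `best_bitrate_pair` and both toy distortion models are hypothetical, chosen only so the example runs; they are not the models used in this paper.

```python
from typing import Callable, Iterable, Optional, Tuple

BitratePair = Tuple[float, float]  # (texture bitrate, depth bitrate)


def best_bitrate_pair(
    candidates: Iterable[BitratePair],
    total_budget: float,
    rendering_distortion: Callable[[float, float], float],
    texture_distortion: Callable[[float], float],
) -> Optional[BitratePair]:
    """Return the feasible pair with the smallest summed distortion, or None."""
    best_pair = None
    best_cost = float("inf")
    for r_t, r_d in candidates:
        if r_t + r_d > total_budget:
            continue  # violates the total bitrate constraint
        cost = rendering_distortion(r_t, r_d) + texture_distortion(r_t)
        if cost < best_cost:
            best_pair, best_cost = (r_t, r_d), cost
    return best_pair


# Toy distortion models (assumptions for illustration only):
# distortion falls off inversely with the bitrate spent.
def toy_texture_distortion(r_t: float) -> float:
    return 1000.0 / r_t


def toy_rendering_distortion(r_t: float, r_d: float) -> float:
    return 800.0 / r_t + 400.0 / r_d


# Candidate set: split a 1000-unit budget in steps of 100.
candidate_set = [(r_t, 1000.0 - r_t) for r_t in range(100, 1000, 100)]
chosen = best_bitrate_pair(
    candidate_set, 1000.0, toy_rendering_distortion, toy_texture_distortion
)
print(chosen)
```

Because the texture bitrate enters both distortion terms in this toy setup, the search assigns most of the budget to texture, which matches the motivation of keeping texture quality high for 2-D display.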

It is assumed that the multi-view acquisition, depth generation, view rendering, and 3-D display modules in Fig. 1 are fixed; thus, the quality of the rendered virtual view is mainly affected by the coding distortions of texture videos and depth maps. Letting $D_d$ denote the coding distortion of depth maps, the view rendering distortion $D_v$ can be represented as a function of the texture coding distortion $D_t$ and $D_d$:

$$D_v = f(D_t, D_d) \tag{2}$$

In order to model the function $f(\cdot)$ in (2), let $S_v$ denote the original texture image at the virtual view position, $\bar{S}_v$ denote the image rendered from the original texture images and the compressed depth maps, and $\tilde{S}_v$ denote the image rendered from the compressed texture images and the compressed depth maps. The view rendering distortion $D_v$ can then be approximately decomposed into two components

$$D_v = E\{(S_v - \tilde{S}_v)^2\} \approx E\{(S_v - \bar{S}_v)^2\} + E\{(\bar{S}_v - \tilde{S}_v)^2\} \tag{3}$$

where $E\{(S_v - \bar{S}_v)^2\}$ represents the average view rendering distortion induced by depth compression, $E\{(\bar{S}_v - \tilde{S}_v)^2\}$ represents the average view rendering distortion induced by texture compression, and the cross term $E\{(S_v - \bar{S}_v)(\bar{S}_v - \tilde{S}_v)\}$ is approximately zero [25].

In practical view rendering implementations, the virtual view is rendered from multiple adjacent views. Theoretically, the impact of different views on the same virtual view should be taken into account in the distortions $E\{(S_v - \bar{S}_v)^2\}$ and $E\{(\bar{S}_v - \tilde{S}_v)^2\}$. Since the depth maps of different views contain large amounts of uniform content, the impact of different views on the same virtual view may be similar if the impact of occlusion is ignored. For simplicity, we only consider the impact from one adjacent view in the above distortions.
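The two-component decomposition above rests on the cross term being close to zero. The following sketch checks this numerically on synthetic data. It assumes, purely for illustration, that the depth-induced and texture-induced errors are independent zero-mean Gaussian noise; the variable names and noise levels are hypothetical and do not come from the paper's experiments.

```python
import random


def mean_product(xs, ys):
    """Sample mean of the element-wise product of two equal-length lists."""
    return sum(x * y for x, y in zip(xs, ys)) / len(xs)


random.seed(0)
n = 10000

# Stand-in for the original image at the virtual view position.
original = [random.uniform(0.0, 255.0) for _ in range(n)]

# Assumption: independent zero-mean errors from depth and texture compression.
depth_error = [random.gauss(0.0, 3.0) for _ in range(n)]
texture_error = [random.gauss(0.0, 2.0) for _ in range(n)]

# Image rendered with compressed depth only, then with both compressed.
depth_only = [s - e for s, e in zip(original, depth_error)]
both = [s - e for s, e in zip(depth_only, texture_error)]

diff_depth = [s - d for s, d in zip(original, depth_only)]
diff_texture = [d - b for d, b in zip(depth_only, both)]
diff_total = [s - b for s, b in zip(original, both)]

total = mean_product(diff_total, diff_total)        # overall rendering distortion
depth_term = mean_product(diff_depth, diff_depth)   # depth-induced component
texture_term = mean_product(diff_texture, diff_texture)  # texture-induced component
cross = mean_product(diff_depth, diff_texture)      # cross term

# Exact identity: total = depth_term + texture_term + 2 * cross.
print(total, depth_term + texture_term, 2.0 * cross)
```

The identity with the cross term always holds. Dropping that term is only an approximation, and it is accurate here because the two synthetic error sources are uncorrelated by construction.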

Suppose that the location of the virtual view is known. For a particular virtual view, the view rendering distortion induced by depth compression can be characterized by a linear model and expressed as [26]

(4)

where the model depends on the weighting factor of the rendered virtual image from a particular view, a linear parameter associated with the image contents, and the warping position error, which is computed as [27]

(5)

where $D_d$ is the coding distortion of depth maps, $f_x$ denotes the focal length of the camera in the horizontal direction,