be limited by any kernel contention, particularly from lower-

criticality interference. We seek a particularly strict sense of

scalability: even in the worst case, the latency bounds established

on a single core should continue to hold as the core count increases.

G1 Avoid cache-line contention for real-time tasks. Given

the overheads of modifying a shared cache-line, SPECK is

designed to (1) ensure objects fit into a single cache-line and

provide synchronization at that finest-possible granularity of

contention, (2) ensure that all contention is explicit to user-

level through the system-call API so that it can be avoided, and

(3) ensure that the common-case uses of the kernel perform no

shared cache-line modifications. One key to avoiding cache-

line modifications in the common case is to provide liveness

tracking of kernel objects. The key question of “when can an

object be deallocated?” is complicated by parallel accesses to

it, and common solutions such as reference counting do not

scale.
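
As a minimal sketch of point (1), the following C11 fragment shows one way an object could be sized and aligned to exactly one cache line, with synchronization confined to a single word inside that line. The `kobj` layout, the 64-byte line size, and the `kobj_claim` helper are illustrative assumptions, not SPECK's actual definitions.

```c
#include <assert.h>
#include <stdalign.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

#define CACHE_LINE 64 /* assumed line size; typical for x86-64 */

/* Hypothetical kernel object: a header word plus payload fills one line. */
struct kobj {
    alignas(CACHE_LINE) _Atomic uint64_t hdr; /* state word, updated via CAS */
    uint64_t payload[CACHE_LINE / sizeof(uint64_t) - 1];
};

/* Compile-time checks: the object neither spans nor shares a cache line. */
static_assert(sizeof(struct kobj) == CACHE_LINE, "object must fill one line");
static_assert(alignof(struct kobj) == CACHE_LINE, "object must be line-aligned");

/* Attempt to move the header from `expect` to `desired`. The only shared
 * write touches the object's own cache line, so contention is per object. */
static bool kobj_claim(struct kobj *o, uint64_t expect, uint64_t desired)
{
    return atomic_compare_exchange_strong(&o->hdr, &expect, desired);
}
```

Because each object occupies its own line, two cores contend only when they modify the same object, which is the granularity the system-call API can make explicit.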

G2 User-level definition of policy, and pervasive inter-

component isolation. A fundamental goal of COMPOSITE

is the extraction of resource management policy from the

kernel to user-level components. SPECK takes this one step

further, and enables component-based control over all

kernel scalability properties. This is essential to provide strong

temporal isolation boundaries between components to match

the spatial isolation provided by hardware protection.

G3 Aggressive optimization of the fast-paths. Optimizing for

scalability often implies slowing and complicating the code.

For example, fine-grained locking increases parallelism, but

imposes more overhead [6]. SPECK is intended not only to

provide latencies that do not increase with core count, but

also latencies that are competitive on a single core

with existing state-of-the-art systems.

IV. DESIGN AND IMPLEMENTATION OF SPECK

SPECK leverages a clean-slate co-design of the kernel's

data-structures, interface, and contention mechanisms

to achieve the required scalable predictability guarantees.

This co-design enables the finest-grained synchronization at

the level of individual kernel-object cache-lines, an explicit

interface mapping from the kernel namespace to cache-lines

that enables explicit cache-line contention avoidance, and

a quiescence-based reclamation scheme based on the very

predictability guarantees made by the kernel.

SPECK also maintains the fine-grained component-based

isolation of COMPOSITE systems, and the implementation

of all resource management policy in user-level components.

Components here refer to units of code and data that 1) are

memory isolated and execute in user-level, 2) export a set

of functions that can be invoked by other components, and

3) have a set of functional dependencies on other components.

A system is composed from a collection of specifically chosen

components by satisfying all of their functional dependencies.

Components in COMPOSITE include application processes

and middle-ware in addition to low-level policies such as

schedulers, memory managers, synchronization managers, file

systems, and networking protocols.
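
The composition rule above can be illustrated with a small C sketch that checks whether every functional dependency in a set of components is matched by some exported function. The `component` structure, its string-based function names, and `system_composable` are hypothetical and serve only to make the rule concrete; they are not the COMPOSITE build tooling.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical component descriptor. */
struct component {
    const char *name;
    const char *const *exports; /* NULL-terminated exported function names */
    const char *const *deps;    /* NULL-terminated required function names */
};

/* True if any component in the set exports the function `fn`. */
static bool exported(const struct component *cs, size_t n, const char *fn)
{
    for (size_t i = 0; i < n; i++)
        for (const char *const *e = cs[i].exports; *e != NULL; e++)
            if (strcmp(*e, fn) == 0) return true;
    return false;
}

/* A set of components forms a system only if all dependencies are satisfied. */
static bool system_composable(const struct component *cs, size_t n)
{
    for (size_t i = 0; i < n; i++)
        for (const char *const *d = cs[i].deps; *d != NULL; d++)
            if (!exported(cs, n, *d)) return false;
    return true;
}
```

For example, an application that depends on a `map_page` function is composable with a memory manager that exports it, but not on its own.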

A. SPECK Kernel Abstractions

SPECK has a small number of abstractions. These are

focused around providing 1) the access control necessary to

strictly control both the system resources accessible by each

component, and the modification permissions to each of those

resources to limit cache coherency operations, 2) efficient

and bounded-latency access to those resources, and 3) the

capability to define system resource management policies in

user-level components.

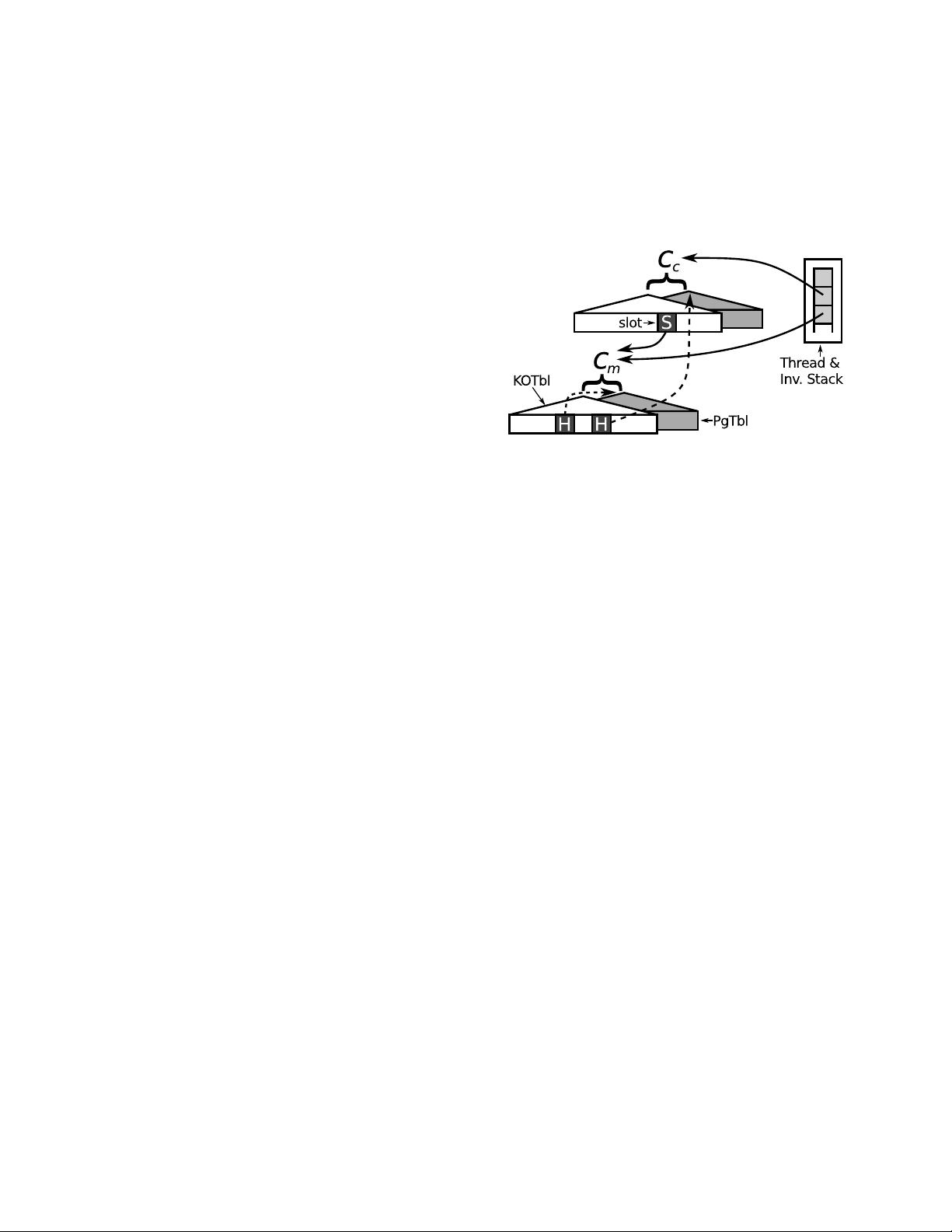

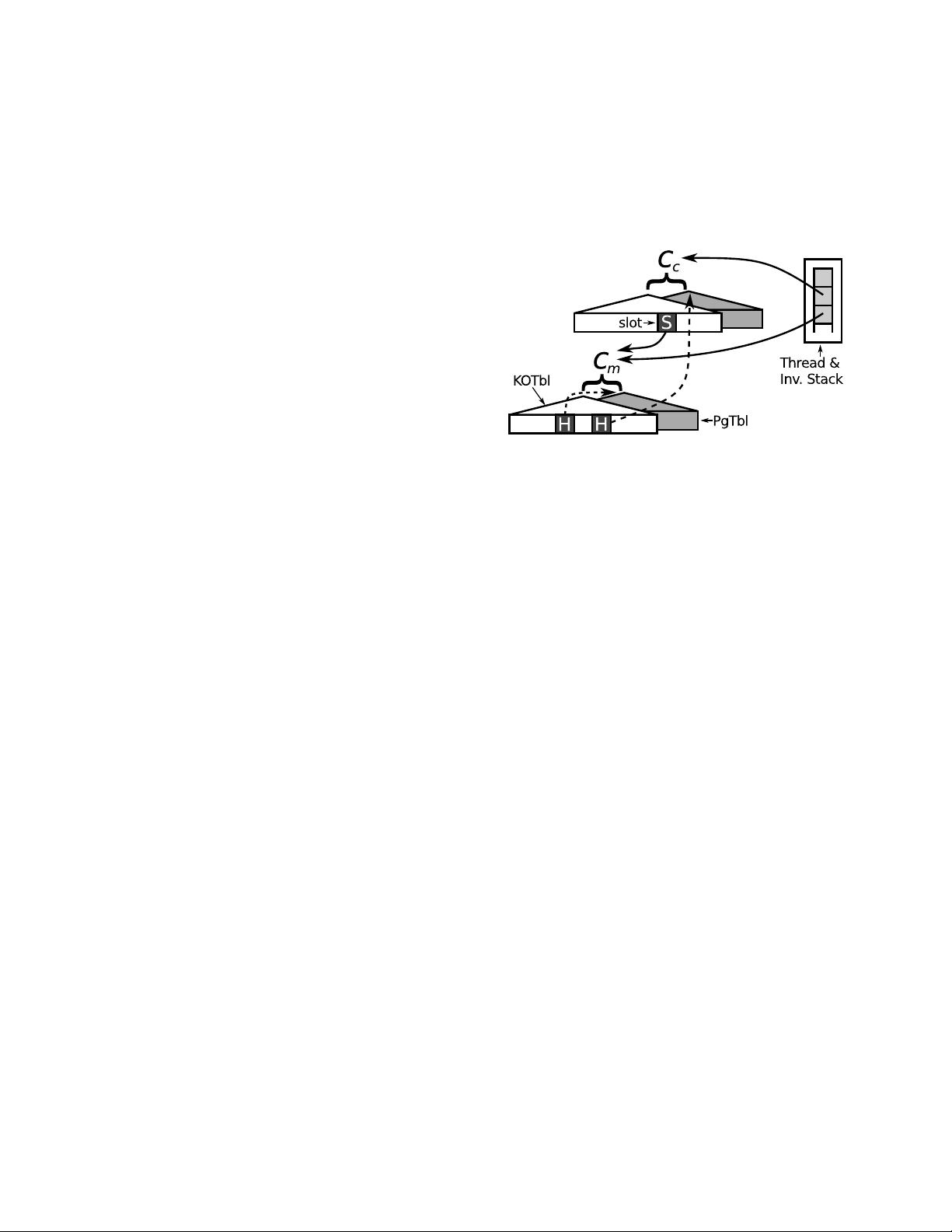

Fig. 1: SPECK’s main data-structures. Components (here, a client C_c, and a manager, C_m) each include a KOTBL and PGTBL. C_c has a SINV object in a KOTBL slot (labeled S), that enables it to invoke C_m. In this case, C_m provides a memory mapping service, so slots (labeled H) in its KOTBL include higher-order PGTBLs. Thus, C_m can modify the PGTBL of the client by adding pages into it. A kernel thread structure includes an invocation stack that tracks a thread’s execution as it migrates between components. In this case, the thread has invoked C_m from C_c (the stack grows down). System memory is referenced within the PGTBLs.

Resource tables (RESTBLs). Each component is defined by a

set of resource tables that index and control access to system

resources. Each resource table is a radix trie consisting of

nodes up to a fixed depth. Each node in the radix trie has a

number of slots that hold references to other kernel objects.

Slots in nodes that are internal in the radix trie (i.e. non-leaf

nodes) hold references to nodes of the next level. Each radix

trie maps an index into a slot in a leaf node that references

a resource (e.g., a thread, a memory frame, or another resource

table). This same data-structure is used for various system

structures, each with different internal node radices, different

numbers of slots in the leaves, and different node sizes. These

include:

• KOTBL – the Kernel Object tables enable components to

access and use kernel objects. The only system call in SPECK

performs an operation on the kernel object at an index in the

KOTBL.

• PGTBL – page-tables are the main mechanism for tracking

not only virtual mappings for the component, but also access

to physical memory used for kernel data-structures.

• LIVENESS TABLE – This structure is used to track the

liveness and quiescence of kernel objects. This structure will

be elaborated in Section IV-F.
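
To make the index-to-slot mapping concrete, the following C sketch walks a fixed-depth radix trie. The radix (16 slots per node), the depth (2), and the names `trie_node` and `restbl_lookup` are assumptions chosen for brevity; the text states only that radices, leaf sizes, and node sizes differ between the KOTBL, PGTBL, and liveness table.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define RADIX_BITS  4                   /* assumed: 16 slots per node */
#define RADIX_SLOTS (1u << RADIX_BITS)
#define TRIE_DEPTH  2                   /* assumed fixed depth */

struct trie_node {
    void *slot[RADIX_SLOTS]; /* internal node: child node; leaf: resource */
};

/* Map an index to its leaf slot. The loop runs at most TRIE_DEPTH - 1 times,
 * so the lookup cost is bounded. Returns NULL if the index is out of range
 * or an internal slot on the path is unpopulated. */
static void **restbl_lookup(struct trie_node *root, uint32_t idx)
{
    if (idx >> (TRIE_DEPTH * RADIX_BITS)) return NULL;

    struct trie_node *n = root;
    for (int level = TRIE_DEPTH - 1; level > 0; level--) {
        uint32_t i = (idx >> (level * RADIX_BITS)) & (RADIX_SLOTS - 1);
        n = n->slot[i];
        if (n == NULL) return NULL;
    }
    return &n->slot[idx & (RADIX_SLOTS - 1)];
}
```

The fixed depth is what makes the walk compatible with bounded-latency access: the number of memory references per lookup does not depend on how many resources the table holds.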

The only mechanism for modifying resource

tables is higher-order resource tables: references within

resource tables to resource tables themselves. Most components

in the system do not have such access, and thus make no

modifications to shared kernel structures. Components with

access to higher order resource tables are in charge of handling

delegation of, and access to, resources throughout the system.
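
The access rule for higher-order resource tables can be sketched in C as follows. For brevity the tables are flat arrays rather than tries, and the `kind` tags, the `restbl_install` operation, and the refusal to overwrite an occupied slot are all assumptions made for illustration rather than SPECK's actual interface.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define NSLOTS 8 /* assumed; a flat table stands in for the trie */

enum kind { K_EMPTY, K_THREAD, K_FRAME, K_RESTBL };

struct slot   { enum kind kind; void *obj; };
struct restbl { struct slot slot[NSLOTS]; };

/* Install `val` at index `dst` of the table referenced by caller[tbl_idx].
 * The write is permitted only if the caller holds a higher-order reference,
 * i.e. a slot of kind K_RESTBL naming the target table. */
static bool restbl_install(struct restbl *caller, size_t tbl_idx,
                           size_t dst, struct slot val)
{
    if (tbl_idx >= NSLOTS || dst >= NSLOTS) return false;

    struct slot *ref = &caller->slot[tbl_idx];
    if (ref->kind != K_RESTBL) return false; /* no reference, no write */

    struct restbl *target = ref->obj;
    if (target->slot[dst].kind != K_EMPTY) return false; /* assumed policy */
    target->slot[dst] = val;
    return true;
}
```

In this sketch a manager holding a `K_RESTBL` slot for a client's table can add a frame to it, while the client, which holds no such slot, has no path to modify any table.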