4

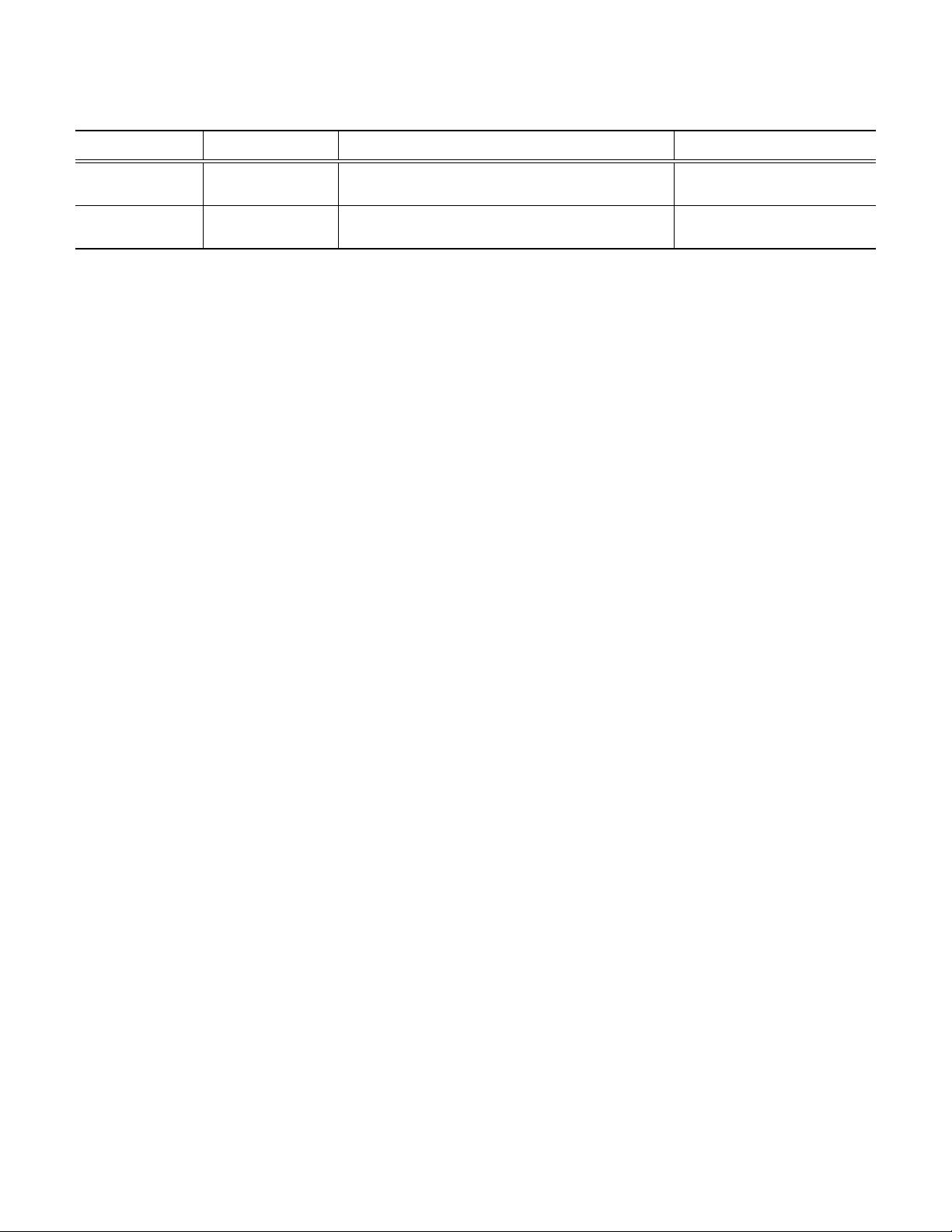

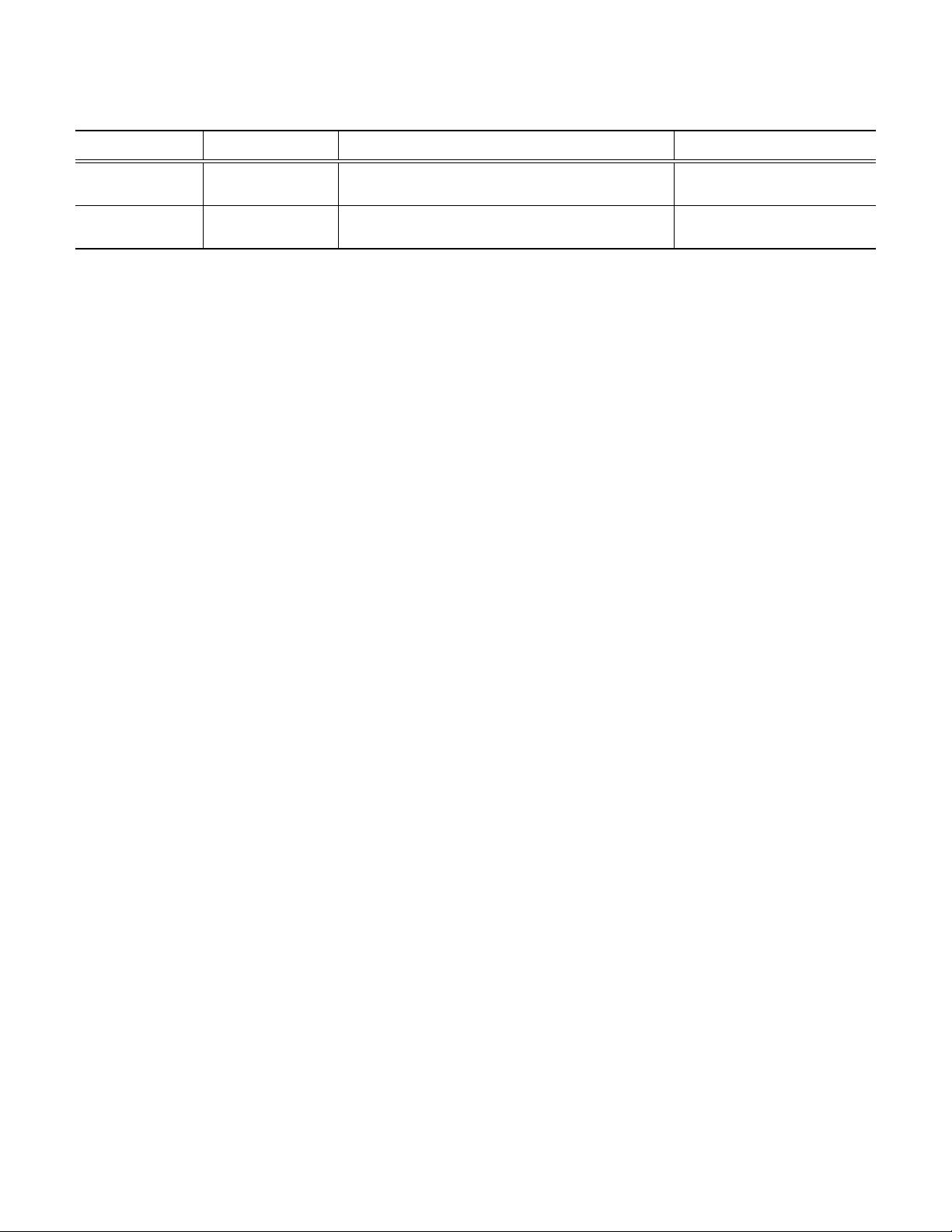

TABLE 1

Average PSNR(dB) results of different methods with noise levels 15, 25 and 50 on the widely-used Set12 and BSD68 [3], [44], [49] datasets. The

best and second best results are highlighted in red and blue colors, respectively.

Datasets

Noise

BM3D WNNM DnCNN

N

3

Net

NLRN RNAN FOCNet IRCNN FFDNet DRUNet

Level

15 32.37 32.70 32.86 – 33.16 – 33.07 32.77 32.75 33.25

Set12 25 29.97 30.28 30.44 30.55 30.80 – 30.73 30.38 30.43 30.94

50 26.72 27.05 27.18 27.43 27.64 27.70 27.68 27.14 27.32 27.90

15 31.08 31.37 31.73 – 31.88 – 31.83 31.63 31.63 31.91

BSD68 25 28.57 28.83 29.23 29.30 29.41 – 29.38 29.15 29.19 29.48

50 25.60 25.87 26.23 26.39 26.47 26.48 26.50 26.19 26.29 26.59

design for better restoration. However, these methods learn

a separate model for each noise level. Perhaps the most

suitable denoiser for plug-and-play IR is FFDNet [18] which

can handle a wide range of noise levels by taking the

noise level map as input. Nevertheless, FFDNet only has

a comparable performance to DnCNN and IRCNN, thus

lacking effectiveness to boost the performance of plug-and-

play IR. For this reason, we propose to improve FFDNet

by taking advantage of the widely-used U-Net [20] and

ResNet [19] for architecture design.

3.1 Denoising Network Architecture

It is well-known that U-Net [20] is effective and efficient for

image-to-image translation, while ResNet [19] is superior

in increasing the modeling capacity by stacking multiple

residual blocks. Following FFDNet [18] that takes the noise

level map as input, the proposed denoiser, namely DRUNet,

further integrates residual blocks into U-Net for effective

denoiser prior modeling. Note that this work focuses on

providing a flexible and powerful pre-trained denoiser to

benefit existing plug-and-play IR methods rather than de-

signing new denoising network architecture. Actually, the

similar idea of combining U-Net and ResNet can also be

found in other works such as [61], [62].

The architecture of DRUNet is illustrated in Fig. 1. Like

FFDNet, DRUNet has the ability to handle various noise

levels via a single model. The backbone of DRUNet is

U-Net which consists of four scales. Each scale has an

identity skip connection between 2 × 2 strided convolution

(SConv) downscaling and 2 × 2 transposed convolution

(TConv) upscaling operations. The number of channels in

each layer from the first scale to the fourth scale are 64,

128, 256 and 512, respectively. Four successive residual

blocks are adopted in the downscaling and upscaling of

each scale. Inspired by the network architecture design for

super-resolution in [63], no activation function is followed

by the first and the last convolutional (Conv) layers, as well

as SConv and TConv layers. In addition, each residual block

only contains one ReLU activation function.

It is worth noting that the proposed DRUNet is bias-

free, which means no bias is used in all the Conv, SConv

and TConv layers. The reason is two-fold. First, bias-free

network with ReLU activation and identity skip connection

naturally enforces scaling invariance property of many im-

age restoration tasks, i.e., f (ax) = af(x) holds true for any

scalar a ≥ 0 (please refer to [64] for more details). Second,

we have empirically observed that, for the network with

bias, the magnitude of bias would be much larger than that

of filters, which in turn may harm the generalizability.

3.2 Training Details

It is well known that CNN benefits from the availability of

large-scale training data. To enrich the denoiser prior for

plug-and-play IR, instead of training on a small dataset that

includes 400 Berkeley segmentation dataset (BSD) images

of size 180×180 [9], we construct a large dataset consisting

of 400 BSD images, 4,744 images of Waterloo Exploration

Database [65], 900 images from DIV2K dataset [66], and

2,750 images from Flick2K dataset [63]. Because such a

dataset covers a larger image space, the learned model can

slightly improve the PSNR results on BSD68 dataset [3]

while having an obvious PSNR gain on testing datasets from

a different domain.

As a common setting for Gaussian denoising, the noisy

counterpart y of clean image x is obtained by adding

AWGN with noise level σ. Correspondingly, the noise level

map is a uniform map filled with σ and has the same spatial

size as noisy image. To handle a wide range of noise levels,

the noise level σ is randomly chosen from [0, 50] during

training. Note that the noisy images are not clipped into the

range of [0, 255]. The reason is that the clipping operation

would change the distribution of the noise, which in turn

will give rise to inaccurate solution for plug-and-play IR.

The network parameters are optimized by minimizing the

L1 loss rather than L2 loss between the denoised image and

its ground-truth with Adam algorithm [67]. Although there

is no direct evidence on which loss would result in better

performance, it is widely acknowledged that L1 loss is more

robust than L2 loss in handling outliers [68]. Regarding

to denoising, outliers may occur during the sampling of

AWGN. In this sense, L1 loss tends to be more stable than

L2 loss for denoising network training. The learning rate

starts from 1e-4 and then decreases by half every 100,000

iterations and finally ends once it is smaller than 5e-7. In

each iteration during training, 16 patches with patch size

of 128×128 were randomly sampled from the training data.

We separately learn a denoiser model for grayscale image

and color image. It takes about four days to train the model

with PyTorch and an Nvidia Titan Xp GPU.

3.3 Denoising Results

3.3.1 Grayscale Image Denoising

For grayscale image denoising, we compared the proposed

DRUNet denoiser with several state-of-the-art denoising

methods, including two representative model-based meth-

ods (i.e., BM3D [23] and WNNM [10]), five CNN-based

methods which separately learn a single model for each

noise level (i.e., DnCNN [44], N

3

Net [60], NLRN [59],

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功