NATURAL LANGUAGE PROCESSING (ALMOST) FROM SCRATCH

As a special case, $\langle A \rangle^1_i$ represents the $i$th column of matrix $A$. For a vector $v$, we denote by $[v]_i$ the scalar at index $i$ in the vector. Finally, a sequence of elements $\{x_1, x_2, \ldots, x_T\}$ is written $[x]_1^T$. The $i$th element of the sequence is $[x]_i$.

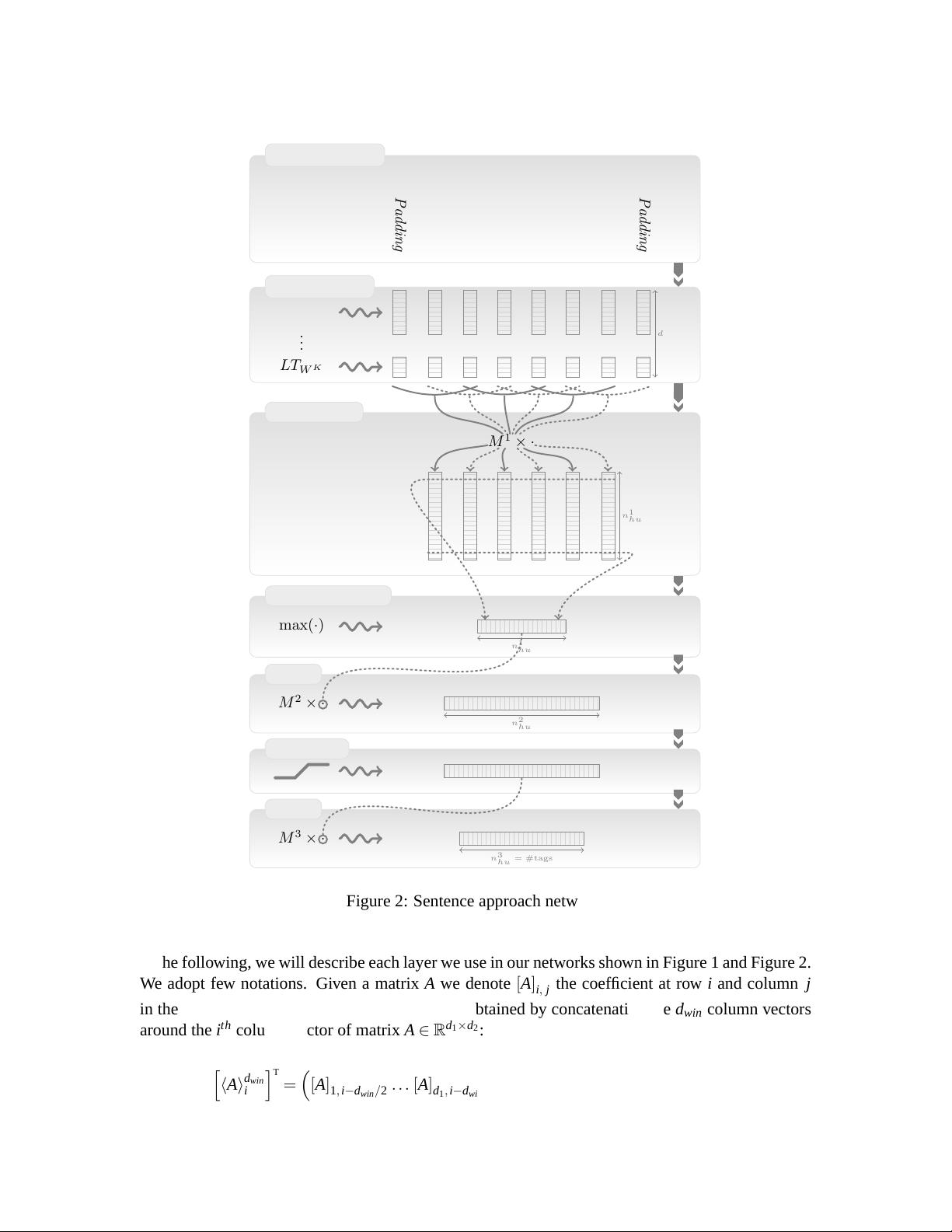

3.2 Transforming Words into Feature Vectors

One of the key points of our architecture is its ability to perform well with the use of (almost⁸) raw words. The ability of our method to learn good word representations is thus crucial to our approach. For efficiency, words are fed to our architecture as indices taken from a finite dictionary $\mathcal{D}$. Obviously, a simple index does not carry much useful information about the word. However, the first layer of our network maps each of these word indices into a feature vector, by a lookup table operation. Given a task of interest, a relevant representation of each word is then given by the corresponding lookup table feature vector, which is trained by backpropagation, starting from a random initialization.⁹ We will see in Section 4 that we can learn very good word representations from unlabeled corpora. Our architecture allows us to take advantage of better trained word representations, by simply initializing the word lookup table with these representations (instead of randomly).

More formally, for each word $w \in \mathcal{D}$, an internal $d_{wrd}$-dimensional feature vector representation is given by the lookup table layer $LT_W(\cdot)$:

$$LT_W(w) = \langle W \rangle^1_w ,$$

where $W \in \mathbb{R}^{d_{wrd} \times |\mathcal{D}|}$ is a matrix of parameters to be learned, $\langle W \rangle^1_w \in \mathbb{R}^{d_{wrd}}$ is the $w$th column of $W$ and $d_{wrd}$ is the word vector size (a hyper-parameter to be chosen by the user). Given a sentence or any sequence of $T$ words $[w]_1^T$ in $\mathcal{D}$, the lookup table layer applies the same operation for each word in the sequence, producing the following output matrix:

$$LT_W([w]_1^T) = \left( \langle W \rangle^1_{[w]_1} \;\; \langle W \rangle^1_{[w]_2} \;\; \ldots \;\; \langle W \rangle^1_{[w]_T} \right). \tag{1}$$

This matrix can then be fed to further neural network layers, as we will see below.
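
The lookup table operation of Equation (1) can be illustrated with a minimal sketch in plain Python. The toy dictionary, the function names, the vector size and the initialization range of ±0.1 are all assumptions made for illustration, and the sketch uses 0-based indices where the text uses 1-based ones. Training of W by backpropagation is not shown.

```python
import random

def init_lookup_table(d_wrd, dict_size, seed=0):
    """Return W as a d_wrd x dict_size matrix (list of rows).

    The uniform range [-0.1, 0.1] is an assumed placeholder for the
    random initialization mentioned in the text.
    """
    rng = random.Random(seed)
    return [[rng.uniform(-0.1, 0.1) for _ in range(dict_size)]
            for _ in range(d_wrd)]

def lookup(W, w):
    """LT_W(w): the w-th column of W, a vector of length d_wrd."""
    return [row[w] for row in W]

def lookup_sequence(W, words):
    """LT_W([w]_1^T): a d_wrd x T matrix whose t-th column is the
    column of W selected by the t-th word index."""
    return [[row[w] for w in words] for row in W]

# Hypothetical toy dictionary mapping words to 0-based indices.
dictionary = {"the": 0, "cat": 1, "sat": 2, "on": 3, "mat": 4}
W = init_lookup_table(d_wrd=3, dict_size=len(dictionary))

sentence = ["the", "cat", "sat", "on", "the", "mat"]
indices = [dictionary[word] for word in sentence]
output = lookup_sequence(W, indices)  # 3 rows, 6 columns
```

Because both occurrences of "the" share one index, they select the same column of W, so the first and fifth columns of `output` are identical. Initializing W from pre-trained vectors instead of `init_lookup_table` would leave `lookup` and `lookup_sequence` unchanged.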

3.2.1 EXTENDING TO ANY DISCRETE FEATURES

One might want to provide features other than words if one suspects that these features are helpful for the task of interest. For example, for the NER task, one could provide a feature indicating whether a word is in a gazetteer or not. Another common practice is to introduce some basic pre-processing, such as word-stemming or dealing with upper and lower case. In this latter option, the word would then be represented by three discrete features: its lower case stemmed root, its lower case ending, and a capitalization feature.
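
As a rough illustration of representing a word by several discrete features, the sketch below maps each word to two features: its lowercased form and a capitalization category. Stemming is deliberately left out, so this covers only part of the three-feature option described above. The category names, the helper functions and the example sentence are hypothetical and are not taken from the text.

```python
def caps_feature(word):
    """Return an assumed capitalization category for a word."""
    if word.isupper():
        return "all_caps"
    if word[:1].isupper():
        return "init_cap"
    if any(c.isupper() for c in word):
        return "has_cap"
    return "no_cap"

def word_to_features(word):
    """Represent a word by K = 2 discrete features."""
    return (word.lower(), caps_feature(word))

def build_dictionary(values):
    """Assign a 0-based index to each distinct value, in first-seen order."""
    index = {}
    for value in values:
        index.setdefault(value, len(index))
    return index

sentence = ["Paris", "is", "in", "France"]
features = [word_to_features(w) for w in sentence]

# One dictionary per feature.
word_dict = build_dictionary(f[0] for f in features)
caps_dict = build_dictionary(f[1] for f in features)

# Each word becomes a tuple of indices, one index per feature dictionary.
encoded = [(word_dict[lw], caps_dict[c]) for lw, c in features]
```

Each component of an encoded tuple indexes its own dictionary, so "Paris" and "paris" would share a word index while differing in the capitalization index.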

Generally speaking, we can consider a word as represented by $K$ discrete features $w \in \mathcal{D}^1 \times \cdots \times \mathcal{D}^K$, where $\mathcal{D}^k$ is the dictionary for the $k$th feature. We associate to each feature a lookup table $LT_{W^k}(\cdot)$, with parameters $W^k \in \mathbb{R}^{d^k_{wrd} \times |\mathcal{D}^k|}$ where $d^k_{wrd} \in \mathbb{N}$ is a user-specified vector size. Given a

8. We did some pre-processing, namely lowercasing and encoding capitalization as another feature. With enough (unlabeled) training data, presumably we could learn a model without this processing. Ideally, an even more raw input would be to learn from letter sequences rather than words; however, we felt that this was beyond the scope of this work.

9. As any other neural network layer.
