Optimized Skeleton-based Action Recognition via Sparsified Graph Regression

Xiang Gao, Wei Hu, Jiaxiang Tang, Jiaying Liu, Zongming Guo

Institute of Computer Science and Technology, Peking University, China

{gyshgx868, forhuwei, hawkey1999, liujiaying, guozongming}@pku.edu.cn

Abstract—With the prevalence of accessible depth sensors, dynamic human body skeletons have attracted much attention as a robust modality for action recognition. Previous methods model skeletons based on RNN or CNN, which have limited expressive power for irregular skeleton joints. While graph convolutional networks (GCN) have been proposed to address irregular graph-structured data, the fundamental graph construction remains challenging. In this paper, we represent skeletons naturally on graphs, and propose a graph regression based GCN (GR-GCN) for skeleton-based action recognition, aiming to capture the spatio-temporal variation in the data. As the graph representation is crucial to graph convolution, we first propose graph regression to statistically learn the underlying graph from multiple observations. In particular, we provide spatio-temporal modeling of skeletons and pose an optimization problem on the graph structure over consecutive frames, which enforces the sparsity of the underlying graph for efficient representation. The optimized graph not only connects each joint to its neighboring joints in the same frame strongly or weakly, but also links it to relevant joints in the previous and subsequent frames. We then feed the optimized graph into the GCN along with the coordinates of the skeleton sequence for feature learning, where we deploy a high-order and fast Chebyshev approximation of spectral graph convolution. Further, we analyze how the Chebyshev approximation characterizes the variation. Experimental results validate the effectiveness of the proposed graph regression and show that the proposed GR-GCN achieves state-of-the-art performance on the widely used NTU RGB+D, UT-Kinect and SYSU 3D datasets.
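To make the "Chebyshev approximation of spectral graph convolution" concrete, the following is a minimal sketch of the standard Chebyshev polynomial graph filter from the graph signal processing literature, y = Σ_{k=0}^{K−1} θ_k T_k(L̃) x with L̃ = 2L/λ_max − I, T_0(L̃) = I, T_1(L̃) = L̃ and T_k(L̃) = 2L̃ T_{k−1}(L̃) − T_{k−2}(L̃). It is not claimed to be the exact GR-GCN layer. Assumptions: a single-channel signal x on the joints, the combinatorial Laplacian L = D − W, and the Gershgorin bound 2·(max weighted degree) used in place of the exact λ_max. The function names and the toy graph are hypothetical.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def laplacian(w: Matrix) -> Matrix:
    """Combinatorial Laplacian L = D - W of a symmetric, non-negative weight matrix W."""
    n = len(w)
    return [
        [(sum(w[i]) if i == j else 0.0) - w[i][j] for j in range(n)]
        for i in range(n)
    ]


def matvec(a: Matrix, x: Vector) -> Vector:
    """Dense matrix-vector product a @ x."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in a]


def cheb_graph_filter(w: Matrix, x: Vector, theta: List[float]) -> Vector:
    """Return y = sum_k theta[k] * T_k(L_s) x, with len(theta) = K terms.

    L_s = 2 L / lam - I, where lam = 2 * (max weighted degree) is a
    Gershgorin upper bound on the largest eigenvalue of L (an assumption
    used here instead of computing lambda_max exactly). This keeps the
    spectrum of L_s inside [-1, 1], the domain of Chebyshev polynomials.
    """
    if not theta:
        raise ValueError("theta must contain at least one coefficient")
    n = len(w)
    lam = 2.0 * max(sum(row) for row in w)
    if lam == 0.0:
        raise ValueError("graph has no edges")
    lap = laplacian(w)
    l_s = [
        [2.0 * lap[i][j] / lam - (1.0 if i == j else 0.0) for j in range(n)]
        for i in range(n)
    ]

    t_prev = list(x)                      # T_0(L_s) x = x
    y = [theta[0] * v for v in t_prev]
    if len(theta) == 1:
        return y
    t_curr = matvec(l_s, x)               # T_1(L_s) x = L_s x
    y = [yi + theta[1] * v for yi, v in zip(y, t_curr)]
    for k in range(2, len(theta)):
        # Recurrence: T_k x = 2 L_s (T_{k-1} x) - T_{k-2} x
        t_next = [2.0 * a - b for a, b in zip(matvec(l_s, t_curr), t_prev)]
        y = [yi + theta[k] * v for yi, v in zip(y, t_next)]
        t_prev, t_curr = t_curr, t_next
    return y


# Usage on a hypothetical 3-joint chain 0 - 1 - 2 with a unit signal on joint 0.
w = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
x = [1.0, 0.0, 0.0]
print(cheb_graph_filter(w, x, [0.0, 1.0]))       # [-0.5, -0.5, 0.0]
print(cheb_graph_filter(w, x, [0.0, 0.0, 1.0]))  # [0.0, 0.5, 0.5]
```

Because T_k(L̃) is a polynomial of degree k in L̃, a K-term filter only mixes information from joints within K − 1 hops and needs no eigendecomposition, which is what makes this approximation localized and fast. In the usage above, the first-order response at joint 2 (two hops away) is exactly zero.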

Index Terms—Graph regression, graph convolutional networks, spatio-temporal graph modeling, skeleton-based action recognition

I. INTRODUCTION

Action recognition is an active research direction in computer vision, with widespread applications in video surveillance, human-computer interaction, robot vision, autonomous driving and so on. Among the multiple modalities [1]–[5] that can be used to recognize human actions, such as appearance, depth and body skeletons [6], [7], skeleton sequences have become increasingly popular in recent years, owing to the prevalence of affordable depth sensors (e.g., Kinect) and effective pose estimation algorithms [8]. Skeletons convey compact 3D position information of the major body joints and are robust to variations of viewpoints, body scales and motion speeds [9]. Hence, skeleton-based action recognition has attracted more and more attention [10]–[16].

Different from modalities defined on regular grids such as

images or videos, dynamic human skeletons are non-Euclidean

geometric data, which consist of a series of human joint coor-

dinates. This poses challenges in capturing both the intra-frame

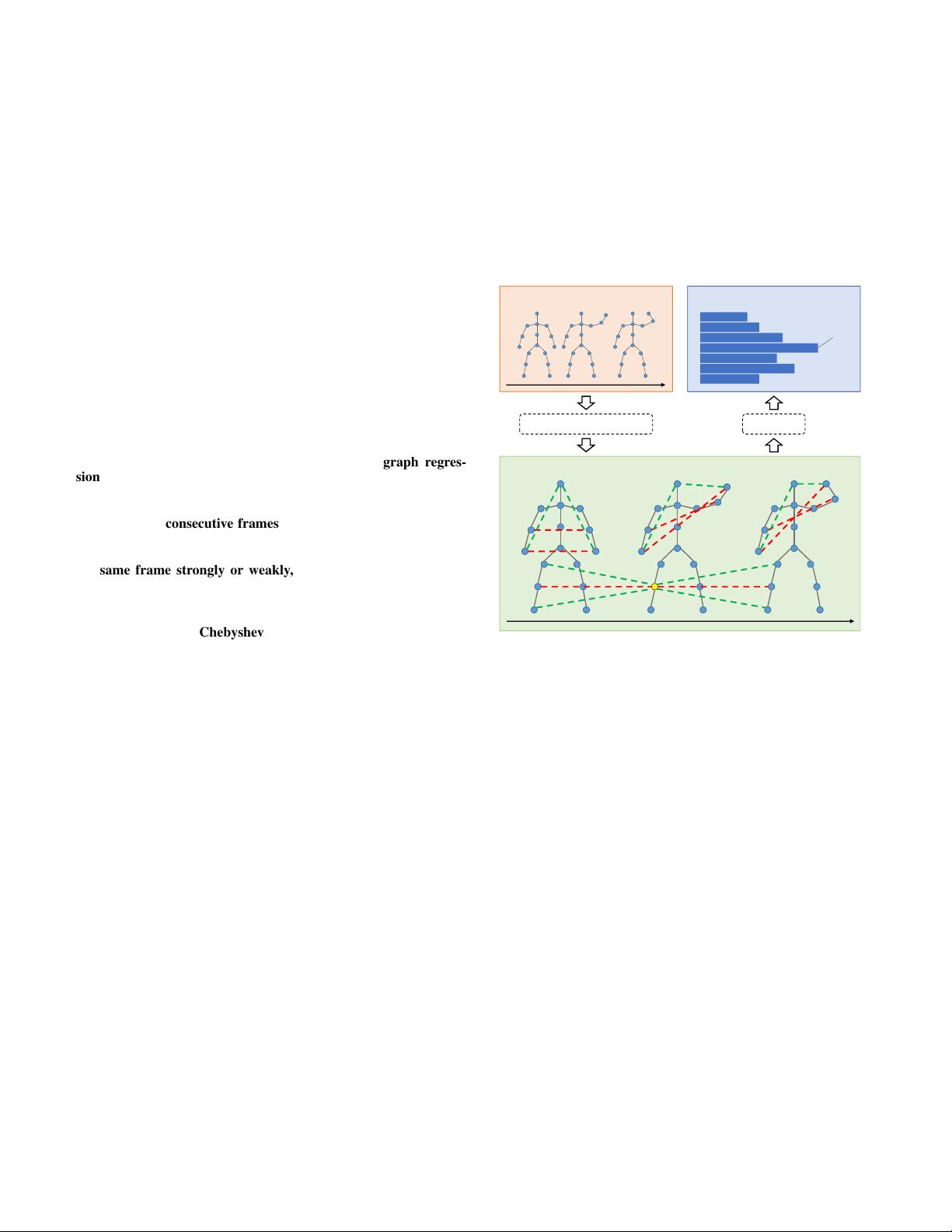

Sparsified Spatio-Temporal Graph

t

Graph Regression (GR)

Input Skeleton Sequence

t

GCN

Output Classification Score

Hand

Waving

Fig. 1. The pipeline of the proposed GR-GCN for skeleton-based action

recognition. Given a sequence of human body joints, we first learn a common

sparsified spatio-temporal graph over each frame, its previous frame and the

subsequent one via graph regression. This leads to a spatio-temporal graph

with strong and physical edges (black solid lines), strong and non-physical

edges (red dashed lines) and weak edges (green dashed ones) for variation

modeling. We then feed the sparsified spatio-temporal graph into a graph

convolutional network (GCN) along with the 3D coordinates of joints for

variation learning, which leads to the output classification scores.

features and temporal dependencies. Recent methods learn

these features via deep models like recurrent neural networks

(RNN) [6], [7], [17]–[23] and convolutional neural networks

(CNN) [21], [24]–[27]. Nevertheless, the topology in skeletons

is not fully exploited in the grid-shaped representation of RNN

and CNN.
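The caption of Fig. 1 describes a graph spanning each frame together with its previous and subsequent frames. A minimal sketch of how such a three-frame graph can be indexed as one block adjacency matrix follows, assuming hypothetical inputs: a list of bone pairs for the physical intra-frame edges and a single fixed weight linking each joint to itself in the adjacent frame. In GR-GCN the edge weights are instead learned by graph regression, so this only illustrates the spatio-temporal layout, not the learned graph; the function name is hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def spatio_temporal_adjacency(
    num_joints: int,
    bones: List[Tuple[int, int]],
    num_frames: int = 3,
    temporal_weight: float = 1.0,
) -> Matrix:
    """Build a symmetric block adjacency over `num_frames` consecutive frames.

    Vertex (frame f, joint j) gets index f * num_joints + j. Diagonal blocks
    hold the intra-frame edges listed in `bones`; the blocks between adjacent
    frames link every joint to the same joint in the neighboring frame with
    weight `temporal_weight` (a hypothetical fixed choice, not a learned one).
    """
    n = num_frames * num_joints
    a = [[0.0] * n for _ in range(n)]
    for f in range(num_frames):
        base = f * num_joints
        for i, j in bones:                       # spatial (intra-frame) edges
            a[base + i][base + j] = 1.0
            a[base + j][base + i] = 1.0
        if f + 1 < num_frames:                   # temporal edges to frame f + 1
            nxt = base + num_joints
            for j in range(num_joints):
                a[base + j][nxt + j] = temporal_weight
                a[nxt + j][base + j] = temporal_weight
    return a


# Hypothetical 3-joint chain (e.g. shoulder - elbow - wrist) over frames t-1, t, t+1.
adj = spatio_temporal_adjacency(3, [(0, 1), (1, 2)])
mid_elbow = 1 * 3 + 1                             # joint 1 in frame t
print([v for v in range(len(adj)) if adj[mid_elbow][v]])  # [1, 3, 5, 7]
```

For a skeleton with N joints this yields a 3N × 3N matrix. The learned graph described in the caption may additionally contain non-physical and weak edges, and may link a joint to other relevant joints in the neighboring frames rather than only to itself.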

A natural way to represent skeletons is a graph, where each joint is treated as a vertex and the relationships among the joints are modeled by weighted edges. As unordered graphs cannot be fed into RNN or CNN directly, graph convolutional networks (GCN) have been proposed to handle data defined on irregular graphs for a variety of applications [28]–[31]. Yan et al. [32] and Li et al. [33] were the first to propose graph-based skeleton representations, which are then fed into a GCN to automatically learn the spatial and temporal patterns from data. Tang et al. [34] propose a

arXiv:1811.12013v2 [cs.CV] 15 Apr 2019