A Face-to-Face Neural Conversation Model

Hang Chu

1,2

Daiqing Li

1

Sanja Fidler

1,2

1

University of Toronto

2

Vector Institute

{chuhang1122, daiqing, fidler}@cs.toronto.edu

Abstract

Neural networks have recently become good at engaging

in dialog. However, current approaches are based solely

on verbal text, lacking the richness of a real face-to-face

conversation. We propose a neural conversation model that

aims to read and generate facial gestures alongside with

text. This allows our model to adapt its response based on

the “mood” of the conversation. In particular, we intro-

duce an RNN encoder-decoder that exploits the movement

of facial muscles, as well as the verbal conversation. The

decoder consists of two layers, where the lower layer aims

at generating the verbal response and coarse facial expres-

sions, while the second layer fills in the subtle gestures,

making the generated output more smooth and natural. We

train our neural network by having it “watch” 250 movies.

We showcase our joint face-text model in generating more

natural conversations through automatic metrics and a hu-

man study. We demonstrate an example application with a

face-to-face chatting avatar.

1. Introduction

We make conversation everyday. We talk to our fam-

ily, friends, colleagues, and sometimes we also chat with

robots. Several online services employ robot agents to di-

rect customers to the service they are looking for. Question-

answering systems like Apple Siri and Amazon Alexa have

also become a popular accessory. However, while most of

these automatic systems feature a human voice, they are far

from acting like human beings. They lack in expressivity,

and are typically emotionless.

Language alone can often be ambiguous with respect to

the person’s mood, unless indicative sentiment words are

being used. In real life, people make gestures and read other

people’s gestures when they communicate. Whether some-

one is smiling, crying, shouting, or frowning when saying

“thank you” can indicate various feelings from gratitude to

irony. People also form their response depending on such

demo/data: http://www.cs.toronto.edu/face2face

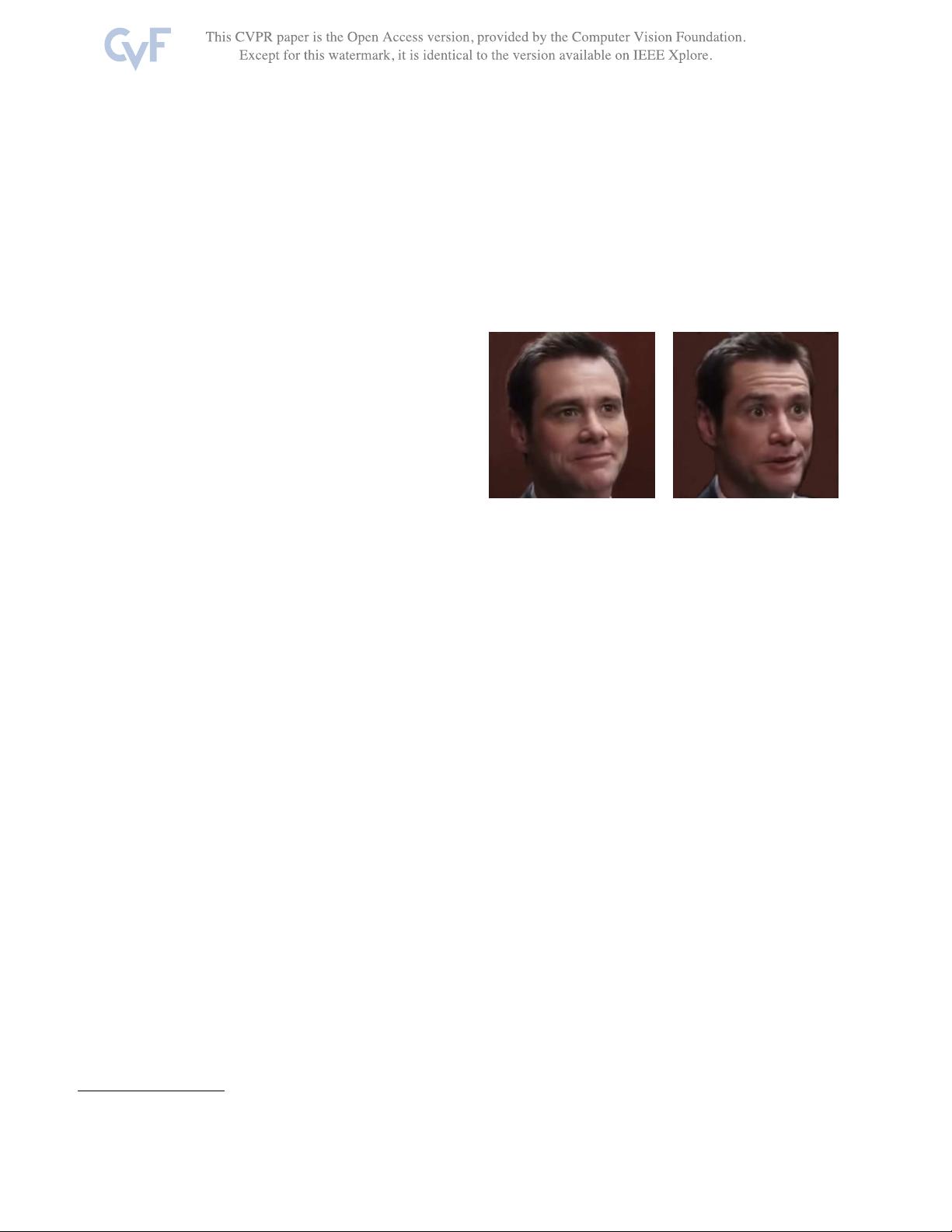

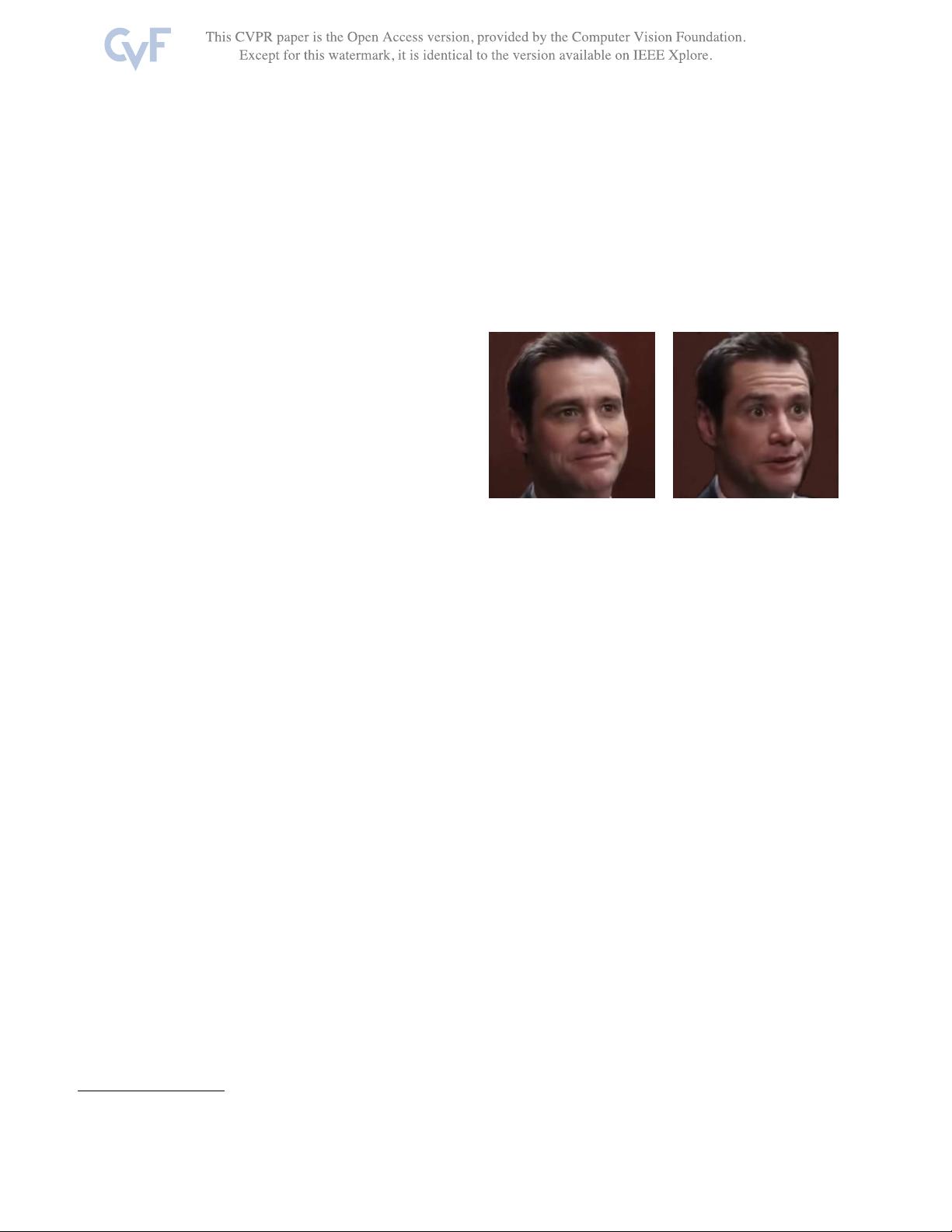

Figure 1: Facial gestures convey sentiment information. Words

have different meanings with different facial gestures. Saying

“Thank you” with different gestures could either express gratitude,

or irony. Therefore, a different response should be triggered.

context, not only in what they say but also in how they say

it. We aim at developing a more natural conversation model

that jointly models text and gestures, in order to act and

converse in a more natural way.

Recently, neural networks have been shown to be good

conversationalists [33, 15]. These typically make use of

an RNN encoder which represents the history of the ver-

bal conversation and an RNN decoder that generates a re-

sponse. [16] built on top of this idea with the aim to person-

alize the model by adapting the conversation to a particular

user. However, all these approaches are based solely on text,

lacking the richness of a real face-to-face conversation.

In this paper, we introduce a neural conversation model

that reads and generates both a verbal response (text) and

facial gestures. We exploit movies as a rich resource of

such information. Movies show a variety of social situa-

tions with diverse emotions, reactions, and topics of con-

versation, making them well suited for our task. Movies

are also multi-modal, allowing us to exploit both visual as

well as dialogue information. However, the data itself is

also extremely challenging due to many characters that ap-

pear on-screen at any given time, as well as large variance

in pose, scale, and recording style.

Our model adopts the encoder-decoder architecture and

adds gesture information in both the encoder as well as the

decoder. We exploit the FACS representation [8] of ges-

1

7113

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功