the network was trained anew for each game, meaning the visual system and the policy are highly

specialized for the games it was trained on. More recent work has shown how these game-specific

networks can share visual features (Rusu et al., 2016) or be used to train a multi-task network

(Parisotto, Ba, & Salakhutdinov, 2016), achieving modest benefits of transfer when learning to

play new games.

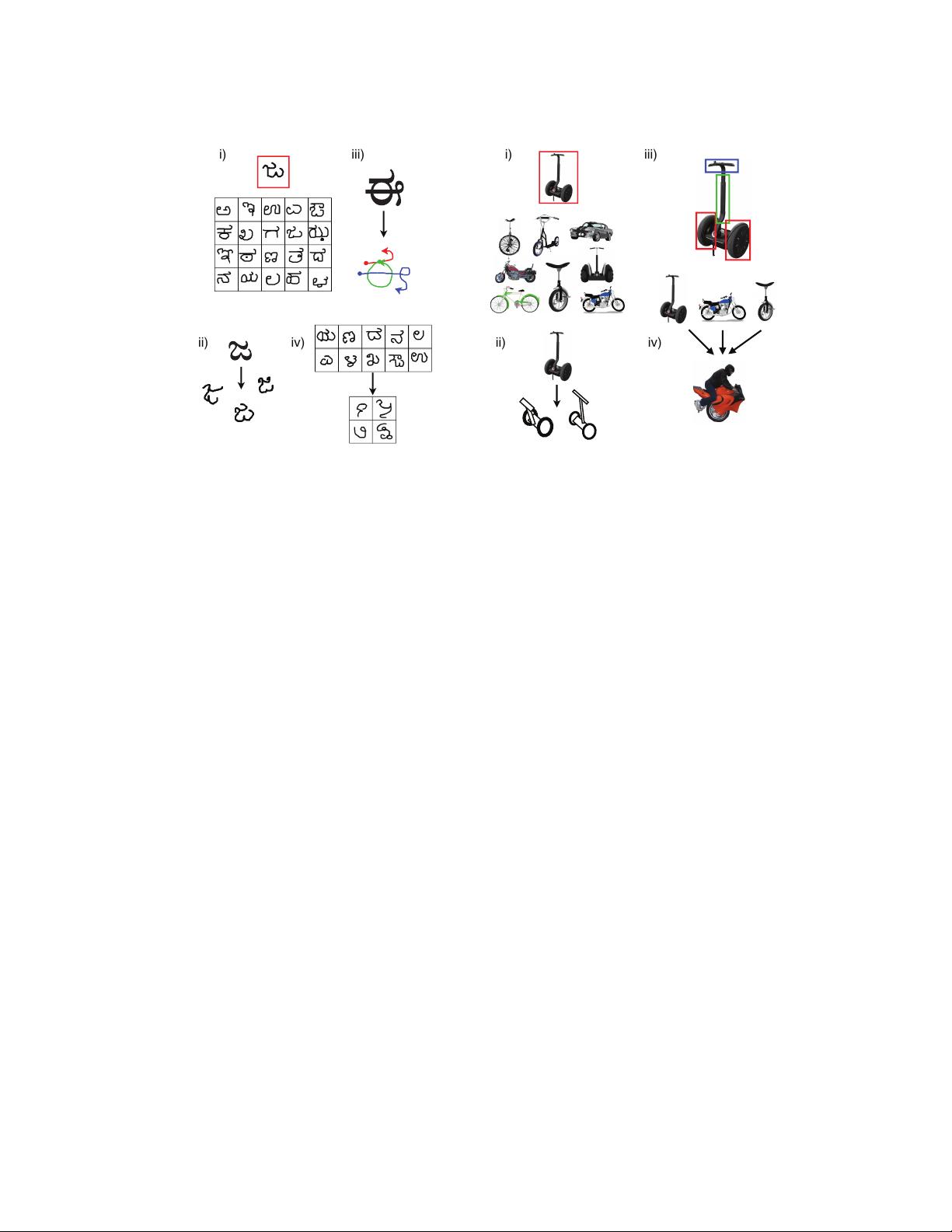

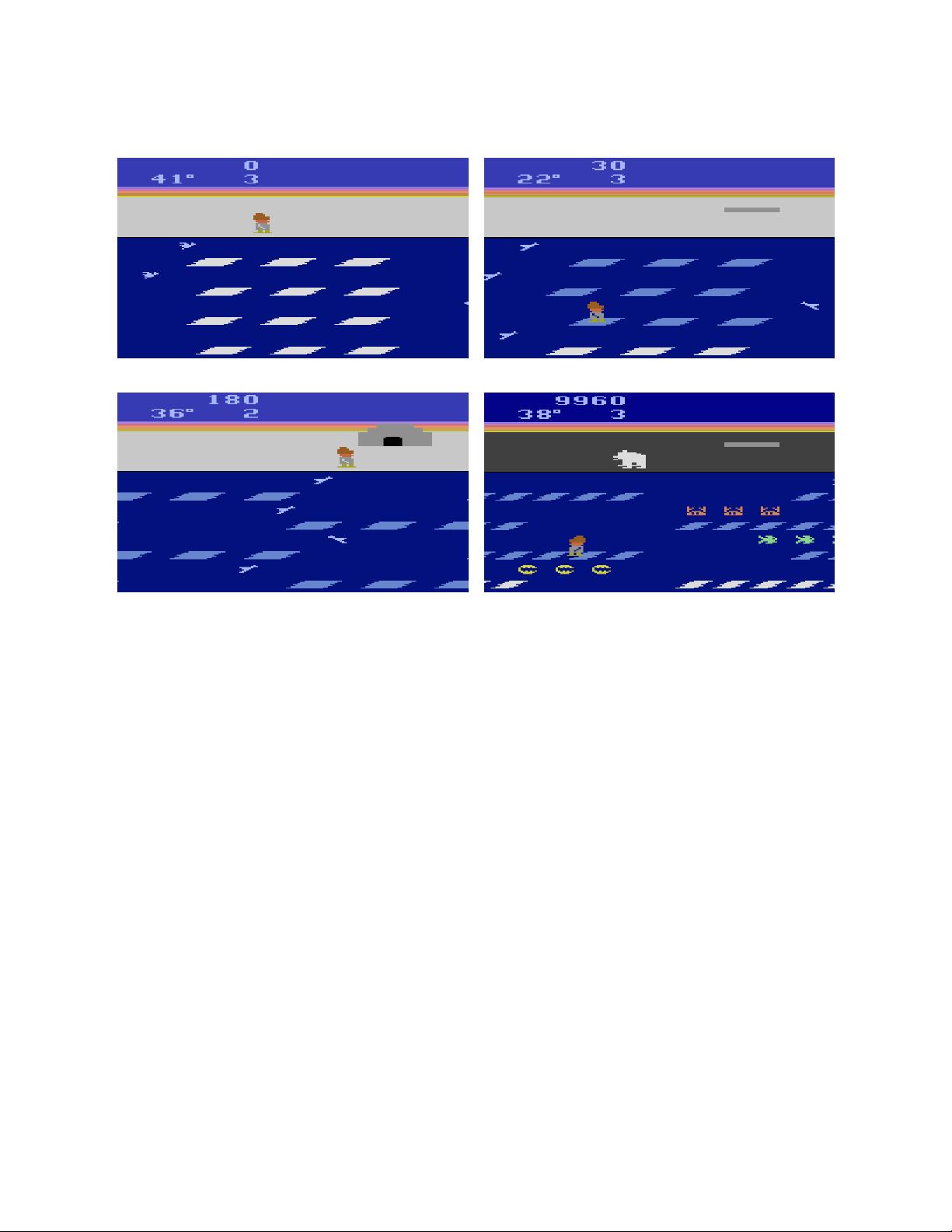

Although it is interesting that the DQN learns to play games at human-level performance while

assuming very little prior knowledge, the DQN may be learning to play Frostbite and other games

in a very different way than people do. One way to examine the differences is by considering the

amount of experience required for learning. In V. Mnih et al. (2015), the DQN was compared with a

professional gamer who received approximately two hours of practice on each of the 49 Atari games

(although he or she likely had prior experience with some of the games). The DQN was trained on

200 million frames from each of the games, which equates to approximately 924 hours of game time

(about 38 days), or almost 500 times as much experience as the human received.²

Additionally, the

DQN incorporates experience replay, where each of these frames is replayed approximately 8 more

times on average over the course of learning.
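The conversion from frames to hours can be checked with a few lines of arithmetic. The sketch below assumes a frame rate of 60 frames per second, which the text does not state; under that assumption 200 million frames come to roughly 926 hours, close to the approximately 924 hours reported. The constant and function names are illustrative only.

```python
# Assumption: the game runs at 60 frames per second (not stated in the text).
FRAMES_PER_SECOND = 60

# Figures taken from the text.
TRAINING_FRAMES = 200_000_000   # frames of experience per game
HUMAN_PRACTICE_HOURS = 2        # approximate practice per game for the human
REPLAYS_PER_FRAME = 8           # "approximately 8 more times on average"


def frames_to_hours(frames, fps=FRAMES_PER_SECOND):
    """Convert a number of game frames into hours of game time."""
    return frames / fps / 3600


dqn_hours = frames_to_hours(TRAINING_FRAMES)
dqn_days = dqn_hours / 24
experience_ratio = dqn_hours / HUMAN_PRACTICE_HOURS

# Each frame is seen once when generated, plus roughly 8 replays.
frame_presentations = TRAINING_FRAMES * (1 + REPLAYS_PER_FRAME)

print(f"game time: {dqn_hours:.0f} hours ({dqn_days:.1f} days)")
print(f"ratio to human practice: {experience_ratio:.0f}x")
print(f"frame presentations including replay: {frame_presentations:,}")
```

Under the 60 frames-per-second assumption the ratio to the human's two hours comes out near 463, which the text rounds to "almost 500". Counting replay, the network processes on the order of 1.8 billion frame presentations per game.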

With the full 924 hours of unique experience and additional replay, the DQN achieved less than

10% of human-level performance during a controlled test session (see DQN in Fig. 3). More recent

variants of the DQN have demonstrated superior performance (Schaul et al., 2016; Stadie et al.,

2016; van Hasselt, Guez, & Silver, 2016; Wang et al., 2016), reaching 83% of the professional

gamer’s score by incorporating smarter experience replay (Schaul et al., 2016) and 96% by using

smarter replay and more efficient parameter sharing (Wang et al., 2016) (see DQN+ and DQN++

in Fig. 3).³

But these variants still require a lot of experience to reach this level: the learning curve provided

in Schaul et al. (2016) shows performance is around 46% after 231 hours, 19% after 116 hours, and

below 3.5% after just 2 hours (which is close to random play, approximately 1.5%). The differences

between the human and machine learning curves suggest that they may be learning different kinds

of knowledge, using different learning mechanisms, or both.
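To make the learning-curve comparison concrete, the sketch below restates the quoted points in two other units: multiples of the human's two hours of practice, and frames of experience. It uses only the conversion given in the text (200 million frames for approximately 924 hours). The frame counts are therefore approximations derived here, not values reported by Schaul et al. (2016), and all names are illustrative.

```python
# Conversion taken from the text: 200 million frames ~ 924 hours of game time.
TOTAL_FRAMES = 200_000_000
TOTAL_HOURS = 924
HUMAN_PRACTICE_HOURS = 2

# (hours of experience, score relative to the professional gamer), as quoted.
learning_curve = [
    (2, "below 3.5%"),
    (116, "about 19%"),
    (231, "about 46%"),
]


def hours_to_frames(hours):
    """Approximate frames of experience for a given number of game hours."""
    return hours * TOTAL_FRAMES / TOTAL_HOURS


rows = []
for hours, score in learning_curve:
    frames = hours_to_frames(hours)
    multiple = hours / HUMAN_PRACTICE_HOURS
    rows.append((hours, frames, multiple, score))
    print(f"{hours:>4} h  ~{frames / 1e6:6.1f}M frames  "
          f"{multiple:6.1f}x human practice  {score}")
```

On this conversion, 231 hours corresponds to about 50 million frames and more than 100 times the human's practice time, at which point the network is still below half of the professional gamer's score.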

The contrast becomes even more dramatic if we look at the very earliest stages of learning. While

both the original DQN and these more recent variants require multiple hours of experience to

perform reliably better than random play, even non-professional humans can grasp the basics

of the game after just a few minutes of play. We speculate that people do this by inferring a

general schema to describe the goals of the game and the object types and their interactions,

using the kinds of intuitive theories, model-building abilities and model-based planning

mechanisms we describe below. While novice players may make some mistakes, such as inferring that

fish are harmful rather than helpful, they can learn to play better than chance within a few

minutes. If humans are able to first watch an expert playing for a few minutes, they can learn even

faster. In informal experiments with two of the authors playing Frostbite on a JavaScript

emulator (http://www.virtualatari.org/soft.php?soft=Frostbite), after watching videos of expert play

on YouTube for just two minutes, we found that we were able to reach scores comparable to or

² The time required to train the DQN (compute time) is not the same as the game (experience) time. Compute time can be longer.

³ The reported scores use the “human starts” measure of test performance, designed to prevent networks from just memorizing long sequences of successful actions from a single starting point. Both faster learning (Blundell et al., 2016) and higher scores (Wang et al., 2016) have been reported using other metrics, but it is unclear how well the networks are generalizing with these alternative metrics.
