(Figure 2 plot: Timeout Pr. vs. Packet Loss Rate, log-scale axes with ticks from 10^−3 to 10^0; series: 100KB (testbed), 100KB (analysis), 10KB (testbed), 10KB (analysis).)

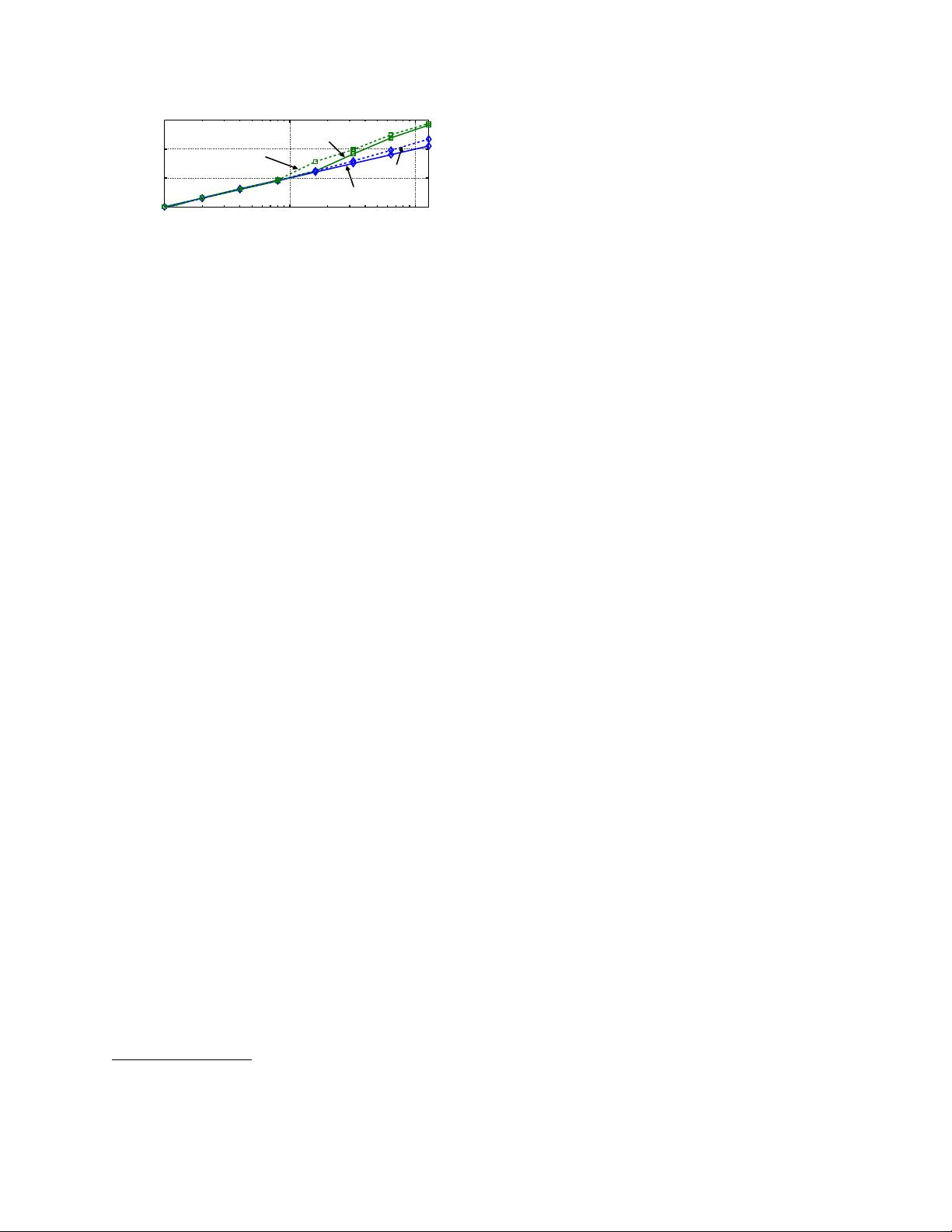

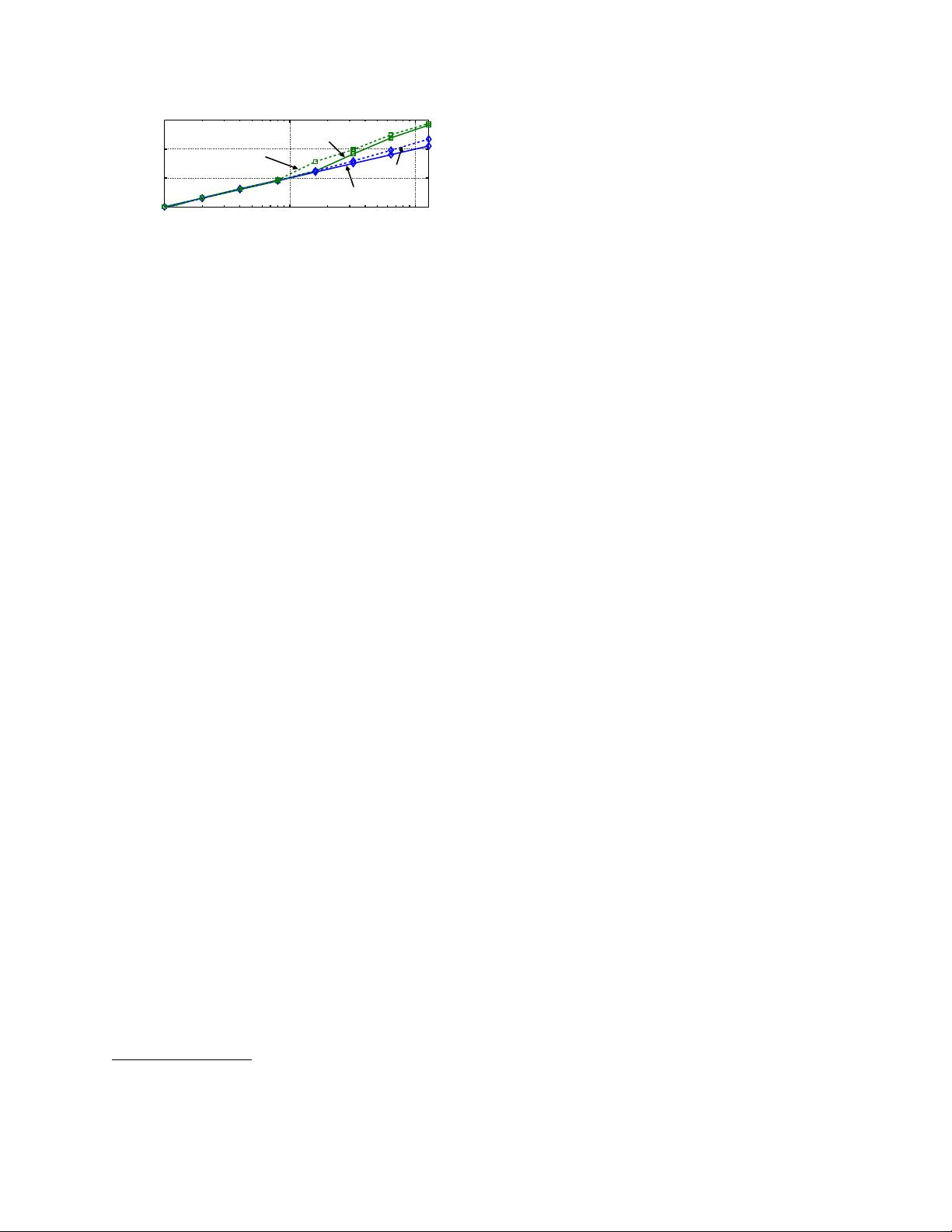

Figure 2: Timeout probability of various flows traversing a path with different random packet loss rates.

ToR, i.e., levels 1-3). About 22%, 24%, 25%, and 29% of lossy links are located at server↔ToR, ToR↔Agg, Agg↔Spine, and Spine↔Core, respectively.

In summary, even in well-engineered modern data center networks, packet losses are inevitable. Although the overall loss rate is low, the packet loss rate in some areas (e.g., links) can exceed several percent when there are failures such as malfunctioning hardware or severe congestion. Moreover, most losses happen within the network rather than at the edge.

2.2 Impact of Packet Loss

Once a packet is lost in the network, TCP needs to recover it to provide reliable communication. There are two existing loss detection and recovery mechanisms in TCP¹: fast recovery and retransmission timeout (RTO).

Fast recovery detects a packet loss by monitoring duplicate ACKs (DACKs) and starts to retransmit the missing packet once a certain number (i.e., three) of DACKs have been received. If there are not enough DACKs, TCP has to rely on RTO and retransmits all un-ACKed packets after the timeout. To prevent premature timeouts, and also because of the limited kernel timer resolution, the RTO value is set rather conservatively, usually to several times the round-trip time (RTT). Specifically, in a production data center, the minimum RTO is set to 5ms [1, 3] (the lowest value supported in the current Linux kernel [16]), while the RTT is usually hundreds of µs [1, 3, 16]. As a consequence, for a latency-sensitive flow, which is usually small in size, encountering merely one RTO already increases its completion time several-fold and causes unacceptable performance degradation.
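To make the "several-fold" claim concrete, the following back-of-the-envelope sketch compares a small flow's completion time with and without a single RTO. Only the 5ms minimum RTO comes from the text; the 250µs RTT (within the "hundreds of µs" range) and the assumption that the flow needs 3 RTTs to finish are illustrative choices, and `fct_ms`/`slowdown` are hypothetical helpers.

```python
# Back-of-the-envelope estimate of FCT inflation caused by one RTO.
# Assumptions (illustrative only): RTT = 250 us and a small flow that needs
# 3 RTTs to complete without loss. Only the 5 ms minimum RTO is from the text.

MIN_RTO_MS = 5.0


def fct_ms(rtt_ms: float, rtts_needed: int, num_timeouts: int = 0) -> float:
    """Approximate FCT as transfer rounds plus idle time spent waiting on RTOs.

    Simplification: the extra round trip for the retransmission itself is ignored.
    """
    return rtts_needed * rtt_ms + num_timeouts * MIN_RTO_MS


def slowdown(rtt_ms: float, rtts_needed: int) -> float:
    """Ratio of the FCT with exactly one RTO to the FCT with no loss."""
    return fct_ms(rtt_ms, rtts_needed, num_timeouts=1) / fct_ms(rtt_ms, rtts_needed)


if __name__ == "__main__":
    rtt = 0.25  # assumed RTT in ms (250 us)
    print(f"no loss: {fct_ms(rtt, 3):.2f} ms, one RTO: {fct_ms(rtt, 3, 1):.2f} ms, "
          f"slowdown: {slowdown(rtt, 3):.1f}x")
    # prints: no loss: 0.75 ms, one RTO: 5.75 ms, slowdown: 7.7x
```

Because the sketch ignores the round trip spent on the retransmission itself, it slightly understates the slowdown under these assumptions.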

Therefore, the key to achieving low FCT for small, latency-sensitive flows in the face of packet loss is to avoid RTO. However, current TCP still has to rely on RTO to recover from packet loss in the following three cases [4, 17, 18]. i) The last packet or a series of consecutive packets at the tail of a TCP flow are lost (i.e., tail loss), so the TCP sender cannot receive enough DACKs to trigger fast recovery and incurs an RTO. ii) A whole window's worth of packets is lost (i.e., whole window loss). iii) A retransmitted packet is also lost (i.e., retransmission loss).
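The three cases can be checked mechanically for a given loss pattern. The sketch below is a simplified model for illustration, not TCP's actual state machine: packets are indexed 0..x−1, the window w is assumed fixed, packets after a lost one are assumed to arrive and generate DACKs, and the helper name `rto_cases` is hypothetical.

```python
from typing import Set


def rto_cases(x: int, lost: Set[int], lost_retx: Set[int],
              k: int = 3, w: int = 10) -> Set[str]:
    """Return which of the three RTO-forcing cases a loss pattern hits.

    x         -- number of packets in the flow (indices 0..x-1)
    lost      -- indices whose first transmission is dropped
    lost_retx -- indices whose retransmission is also dropped
    k         -- DACKs needed for fast recovery (3 standard, 1 with early retransmit)
    w         -- window size in packets (assumed fixed in this simplified model)
    """
    cases: Set[str] = set()
    # i) Tail loss: a lost packet followed by fewer than k packets cannot
    #    produce k duplicate ACKs.
    if any(i >= x - k for i in lost):
        cases.add("tail")
    # ii) Whole window loss: min(w, x) consecutive lost packets leave nothing
    #     in flight that could generate DACKs.
    span = min(w, x)
    if any(all(j in lost for j in range(s, s + span)) for s in range(x - span + 1)):
        cases.add("window")
    # iii) Retransmission loss: both the original and its retransmission are lost.
    if lost & lost_retx:
        cases.add("retx")
    return cases


if __name__ == "__main__":
    print(rto_cases(10, {8}, set(), k=3))  # last-but-one packet lost, k = 3
    print(rto_cases(10, {8}, set(), k=1))  # same loss with early retransmit
    print(rto_cases(10, {2}, {2}))         # retransmission of packet 2 also lost
```

The first two calls illustrate why early retransmit (k = 1) shrinks the tail-loss exposure: the same drop of packet 8 forces an RTO with k = 3 but not with k = 1.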

¹ Many production data centers also use DCTCP [1] as their network transport protocol. DCTCP has the same loss recovery scheme as TCP. Thus, for ease of presentation, we use TCP to refer to both TCP and DCTCP when discussing loss recovery.

To understand how likely a flow is to experience an RTO, we use both a simple mathematical analysis (an estimated lower bound) and testbed experiments to analyze the timeout probability of a TCP flow under different flow sizes and loss rates. For simplicity, we consider a one-way random loss condition here, but neither the impact on TCP performance nor our FUSO scheme is limited to this loss pattern (see §5).

Let us first assume the network path has a loss probability of $p$. Assuming the TCP sender needs $k$ DACKs to trigger fast recovery, losing any of the last $k$ packets will lead to an RTO. This tail loss probability is $p_{\mathrm{tail}} = 1 - (1 - p)^{k}$. For standard TCP, $k = 3$, but recent Linux kernels, which implement early retransmit [19], reduce $k$ to 1 at the end of the transaction. Therefore, if we consider early retransmit, the tail loss probability is simply $p$. The whole window loss probability can easily be derived as $p_{\mathrm{win}} = p^{w}$, where $w$ is the TCP window size. For retransmission loss, clearly, the probability that both the original packet and its retransmission are lost is $p^{2}$. Let $x$ be the number of packets in a TCP flow. The probability that the flow encounters at least one retransmission loss is $p_{\mathrm{retx}} = 1 - (1 - p^{2})^{x}$. In summary, since each of these three events alone forces an RTO, the timeout probability of the flow satisfies $p_{\mathrm{RTO}} \ge \max(p_{\mathrm{tail}}, p_{\mathrm{win}}, p_{\mathrm{retx}})$. The solid lines in Fig. 2 show the analyzed lower bound on the timeout probability of a TCP flow with different flow sizes under various loss rates. Here, we consider early retransmit ($k = 1$).
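The bound above can be evaluated directly. The sketch below computes $p_{\mathrm{tail}}$, $p_{\mathrm{win}}$, $p_{\mathrm{retx}}$ and their maximum; converting flow sizes to packet counts assumes 1 KB = 1000 bytes and an MSS of 1460 bytes, the window of 10 packets in the usage loop is a hypothetical choice, and the helper names are illustrative.

```python
import math


def packets_in_flow(size_bytes: int, mss: int = 1460) -> int:
    """Packets needed for a flow; assumes 1 KB = 1000 bytes and MSS = 1460."""
    return math.ceil(size_bytes / mss)


def timeout_lower_bound(p: float, x: int, w: int, k: int = 1) -> dict:
    """Lower bound on P(RTO) under i.i.d. one-way loss with rate p."""
    p_tail = 1.0 - (1.0 - p) ** k      # any of the last k packets is lost
    p_win = p ** w                      # all w packets of a window are lost
    p_retx = 1.0 - (1.0 - p ** 2) ** x  # some packet and its retransmission are lost
    return {"tail": p_tail, "win": p_win, "retx": p_retx,
            "rto_lb": max(p_tail, p_win, p_retx)}


if __name__ == "__main__":
    W = 10  # hypothetical window size in packets
    for size in (10_000, 100_000):
        x = packets_in_flow(size)
        for p in (0.001, 0.01, 0.1):
            b = timeout_lower_bound(p, x, W, k=1)
            print(f"{size // 1000}KB ({x} pkts) p={p:<6} bound={b['rto_lb']:.4f}")
```

With $k = 1$ the tail term equals $p$, so for short flows the bound tracks the loss rate, while for long flows at high loss rates the $p_{\mathrm{retx}}$ term takes over.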

To verify our analysis, we also conduct a testbed experiment that generates TCP flows between two servers. All flows traverse a path with one-way random loss, and Netem [20, 21] is used to generate different loss rates on the path. More details about the testbed settings can be found in §4.2 and §5. The dotted lines in Fig. 2 show the testbed results, which confirm that our analysis serves as a good lower bound on the timeout probability.
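A testbed cannot be reproduced in a few lines, but the analysis can be sanity-checked with a Monte Carlo simulation of the same idealized model (i.i.d. one-way loss, fixed window, only the three RTO cases). This is a swapped-in technique, not the Netem testbed described above, and `simulate_timeout_prob` is a hypothetical helper. Since a simulated flow times out whenever any of the three cases occurs, its estimate should sit at or slightly above the max() bound.

```python
import random


def simulate_timeout_prob(p: float, x: int, w: int, k: int = 1,
                          trials: int = 100_000, seed: int = 1) -> float:
    """Fraction of simulated x-packet flows that hit at least one RTO case."""
    rng = random.Random(seed)
    span = min(w, x)  # a "whole window" cannot exceed the flow length
    timeouts = 0
    for _ in range(trials):
        # Drop each original transmission independently with probability p.
        dropped = [rng.random() < p for _ in range(x)]
        # Tail loss: one of the last k packets is dropped.
        tail = any(dropped[max(0, x - k):])
        # Retransmission loss: a dropped packet's retransmission is dropped too.
        retx = any(d and rng.random() < p for d in dropped)
        # Whole window loss: longest run of consecutive drops covers a window.
        run = longest = 0
        for d in dropped:
            run = run + 1 if d else 0
            longest = max(longest, run)
        if tail or retx or longest >= span:
            timeouts += 1
    return timeouts / trials


if __name__ == "__main__":
    # 7-packet flow (about 10KB under a 1460-byte MSS assumption), p = 1%.
    print(simulate_timeout_prob(0.01, 7, 10, k=1))
```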

There are a few observations. First, for tiny flows (e.g., 10KB), the timeout probability grows linearly with the random loss rate, because the tail loss probability dominates. Even so, a small loss probability can affect the tail of the FCT. For example, a moderate rise of the loss rate to 1% would cause a timeout probability of at least 1%, which means the 99th percentile of FCT would be greatly impacted. Second, as the flow size increases (e.g., ≥100KB), the retransmission loss may dominate, especially when the random hardware loss rate is larger than 1%. We can see a clear rise in the timeout probabilities of the 100KB flows in Fig. 2. In summary, we conclude that even a small random loss rate (i.e., ≥1%) already causes enough flows to time out to affect the 99th percentile of FCT. This can also explain why a malfunctioning switch in the Azure data center that drops ∼2% of the packets causes great performance degradation for all the services that traverse this switch [3].
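The point at which retransmission loss starts to dominate can be located by solving $p_{\mathrm{retx}} = p_{\mathrm{tail}}$ for $p$ with $k = 1$, i.e., $1 - (1 - p^{2})^{x} = p$. The sketch below does this by bisection. It assumes 1 KB = 1000 bytes and an MSS of 1460 bytes to turn flow sizes into packet counts; the helper names are hypothetical.

```python
import math


def packets_in_flow(size_bytes: int, mss: int = 1460) -> int:
    """Packets needed for a flow; assumes 1 KB = 1000 bytes and MSS = 1460."""
    return math.ceil(size_bytes / mss)


def p_retx(p: float, x: int) -> float:
    """Probability that at least one of x packets is lost twice."""
    return 1.0 - (1.0 - p ** 2) ** x


def crossover_loss_rate(x: int, lo: float = 1e-6, hi: float = 0.5,
                        iters: int = 100) -> float:
    """Bisection for the loss rate where p_retx overtakes p_tail = p (k = 1)."""
    def gap(p: float) -> float:
        return p_retx(p, x) - p

    assert gap(lo) < 0 < gap(hi), "bracket must straddle the crossover"
    for _ in range(iters):
        mid = (lo + hi) / 2
        if gap(mid) < 0:
            lo = mid  # tail term still dominates at mid
        else:
            hi = mid  # retransmission term dominates at mid
    return (lo + hi) / 2


if __name__ == "__main__":
    for size in (10_000, 100_000):
        x = packets_in_flow(size)
        print(f"{size // 1000}KB ({x} pkts): crossover at p = {crossover_loss_rate(x):.4f}")
```

Under these assumptions the crossover is roughly 1.5% for 100KB flows (69 packets) and roughly 15% for 10KB flows (7 packets), in line with the observations that tail loss dominates for tiny flows while retransmission loss takes over for larger flows once the loss rate exceeds about 1%.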
