
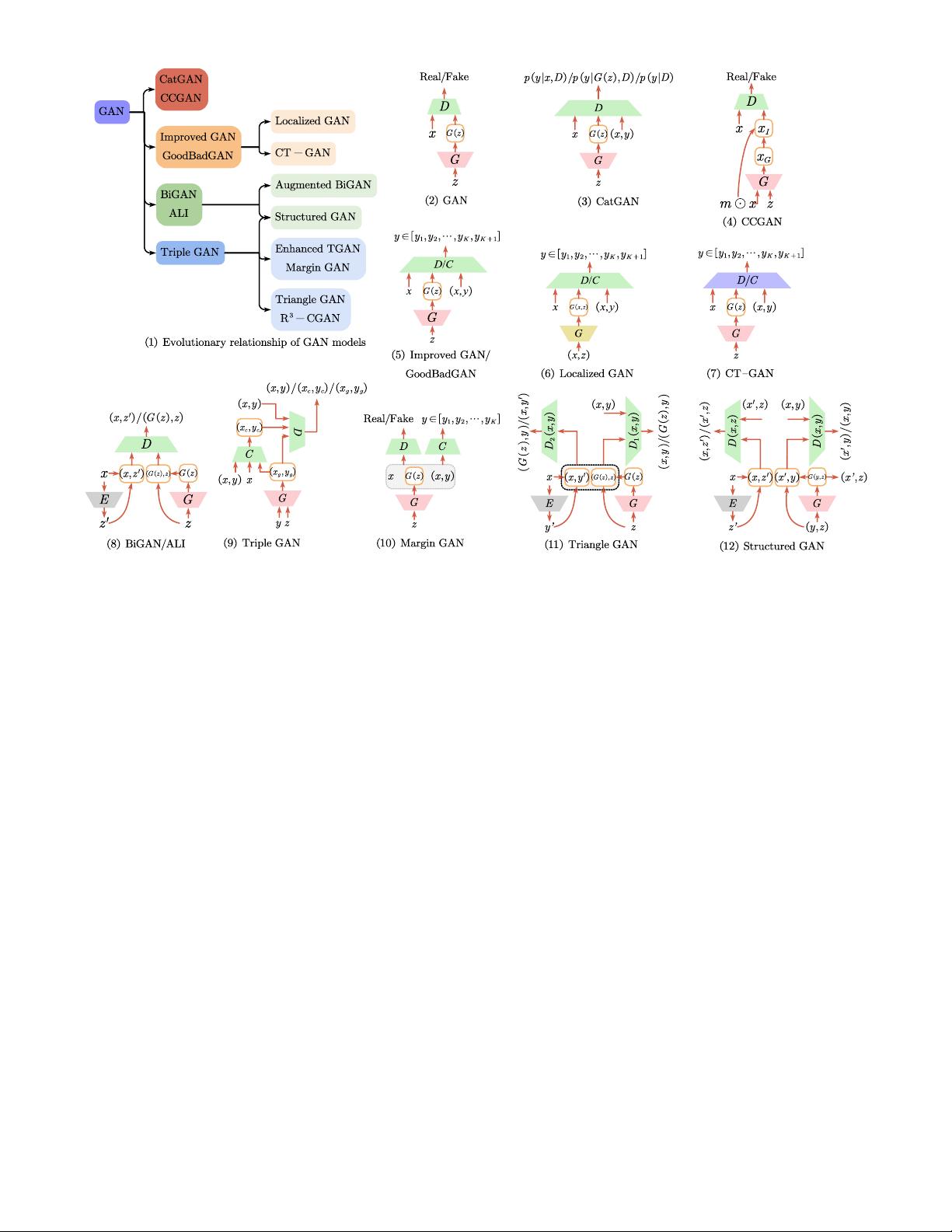

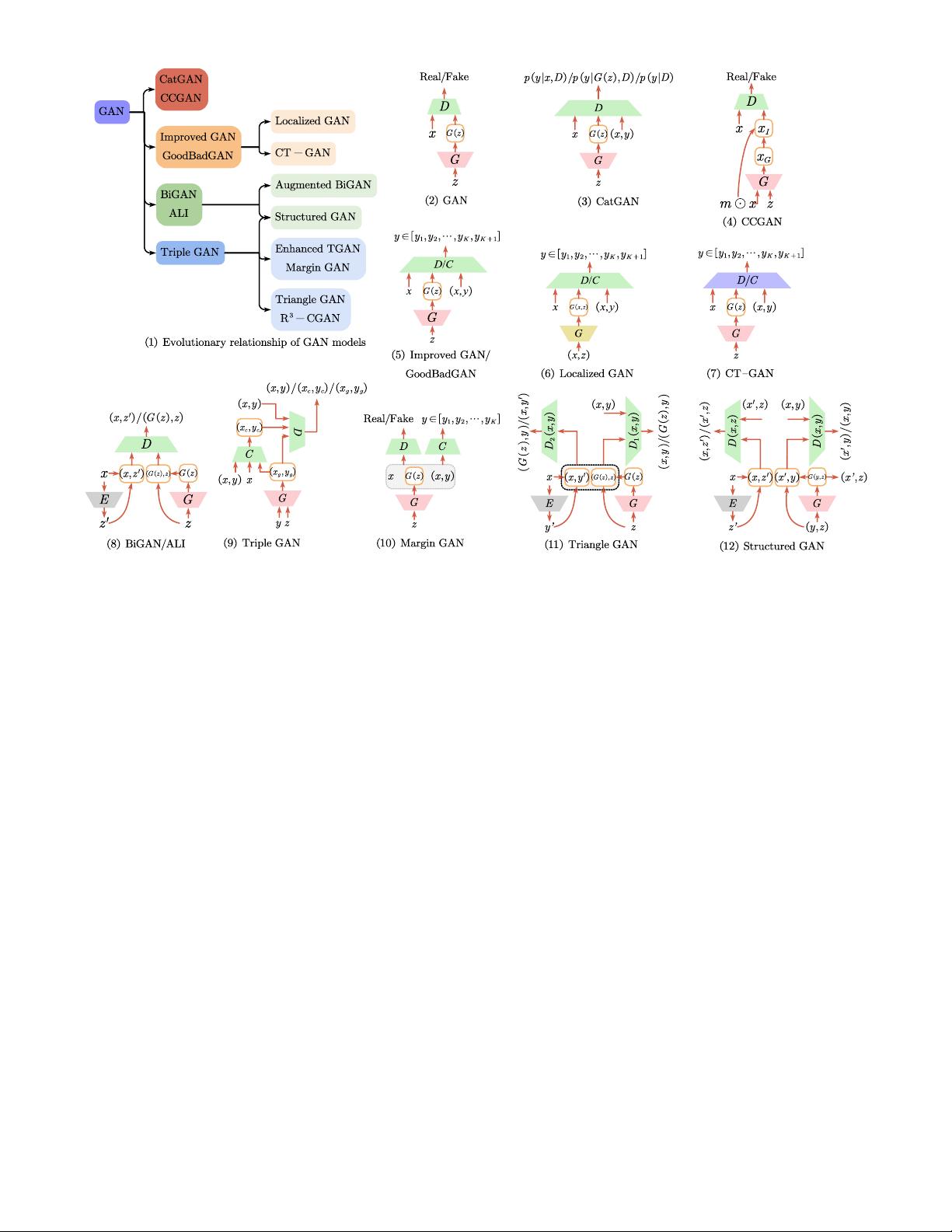

Fig. 2. A glimpse of the diverse range of architectures used for GAN-based deep generative semi-supervised methods. The characters "D", "G" and "E" represent Discriminator, Generator and Encoder, respectively. In panel (6), Localized GAN is equipped with a local generator G(x, z), which we distinguish with a yellow box. Similarly, in CT-GAN, the purple box denotes a discriminator that introduces a consistency constraint.

the standard GAN (Eq. (2)). The structure is illustrated in Fig. 2(3). Instead of learning a binary discriminator value function, this method learns a discriminator that assigns each sample x to one of K categories, i.e., predicts a label y for each x. Moreover, the CatGAN discriminator loss function consists of three parts: (1) the conditional entropy H[p(y|x, D)] on real samples, which is minimized so that each sample receives a confident category assignment; (2) the conditional entropy H[p(y|G(z), D)] on generated samples, which is maximized so that predictions on them remain uncertain; and (3) the marginal class entropy H[p(y|D)], which is maximized to encourage uniform usage of all classes. The proposed framework uses the feature space learned by the discriminator for the final learning task. For the labeled data, the supervised loss is also a cross-entropy term between the conditional distribution p(y|x, D) and the samples' true label distribution.
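The three entropy terms of the CatGAN discriminator can be made concrete with a small sketch. It assumes the discriminator's softmax outputs are already available as plain Python lists, and the function names are hypothetical. Following the description above, the marginal p(y|D) is estimated by averaging the predictions over a batch of real samples, and the terms are combined into a single quantity to minimize: the real-data conditional entropy enters with a positive sign, while the generated-data conditional entropy and the marginal entropy enter with negative signs (i.e., they are maximized). The supervised cross-entropy on labeled data is shown as a separate helper.

```python
import math


def entropy(p):
    """Shannon entropy (in nats) of one categorical distribution."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0.0)


def mean(values):
    return sum(values) / len(values)


def catgan_discriminator_loss(real_probs, fake_probs):
    """Hypothetical sketch of the unsupervised CatGAN discriminator objective.

    real_probs: list of p(y|x, D) vectors for real (unlabeled) samples.
    fake_probs: list of p(y|G(z), D) vectors for generated samples.
    Returns a value the discriminator should minimize.
    """
    # (1) Conditional entropy on real data: low means confident assignments.
    h_real = mean([entropy(p) for p in real_probs])
    # (2) Conditional entropy on generated data: high means uncertain predictions.
    h_fake = mean([entropy(p) for p in fake_probs])
    # (3) Marginal class entropy: p(y|D) is estimated by averaging the
    #     predictions over the real batch; high means balanced class usage.
    k = len(real_probs[0])
    marginal = [mean([p[c] for p in real_probs]) for c in range(k)]
    h_marginal = entropy(marginal)
    # Minimize (1) while maximizing (2) and (3).
    return h_real - h_fake - h_marginal


def supervised_cross_entropy(labeled_probs, labels):
    """Mean cross-entropy between p(y|x, D) and one-hot true labels."""
    return mean([-math.log(p[y]) for p, y in zip(labeled_probs, labels)])


# Confident, class-balanced predictions on real data and uniform predictions
# on generated data should score better (lower) than the reverse situation.
real = [[0.9, 0.05, 0.05], [0.05, 0.9, 0.05], [0.05, 0.05, 0.9]]
uniform = [[1 / 3, 1 / 3, 1 / 3]] * 2
good = catgan_discriminator_loss(real, uniform)
bad = catgan_discriminator_loss(uniform, [[0.9, 0.05, 0.05]] * 2)
```

In a full model, the supervised term would be added to the unsupervised objective with some weighting; that weighting is not specified here and is left as an assumption.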

CCGAN. Context-Conditional Generative Adversarial Networks (CCGAN) [141] use an adversarial loss based on image in-painting to harness unlabeled image data. The architecture of CCGAN is shown in Fig. 2(4). The main highlight of this work is its use of the context information provided by the surrounding parts of the image. The method trains a GAN in which the generator fills in the pixels within a missing hole, and the discriminator distinguishes between the real unlabeled images and these in-painted images. More formally, the generator takes $m \odot x$ as input, where $m$ denotes a binary mask that drops out a specified portion of an image and $\odot$ denotes element-wise multiplication. The in-painted image is then

$$x_I = (1 - m) \odot x_G + m \odot x,$$

where $x_G = G(m \odot x, z)$ is the generator output. The in-painted examples provided by the generator cause the discriminator to learn features that generalize to the related task of object classification. The penultimate layer of the discriminator is then shared with the classifier, whose cross-entropy loss is combined with the discriminator loss.
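The masking and compositing step of CCGAN can be illustrated with a toy sketch on images stored as nested Python lists. The square-hole mask, the helper names, and `toy_generator` (which simply fills every pixel with a constant instead of running a convolutional network) are hypothetical placeholders; the sketch only shows how $m$, $x$ and $x_G$ combine into $x_I$, with context pixels copied from $x$ and hole pixels taken from the generator.

```python
def hole_mask(height, width, top, left, size):
    """Binary mask m: 1 keeps a context pixel, 0 marks the square hole."""
    return [[0.0 if (top <= i < top + size and left <= j < left + size) else 1.0
             for j in range(width)] for i in range(height)]


def elementwise(op, a, b):
    """Apply op pixel by pixel to two images of equal shape."""
    return [[op(u, v) for u, v in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]


def toy_generator(masked_image, z):
    """Hypothetical stand-in for G(m * x, z): fills every pixel with the value z."""
    return [[z for _ in row] for row in masked_image]


def inpaint(x, m, generator, z):
    # Generator input: the context-only image m * x (hole pixels zeroed).
    masked = elementwise(lambda mi, xi: mi * xi, m, x)
    # Generator output x_G = G(m * x, z).
    x_g = generator(masked, z)
    # Composite x_I = (1 - m) * x_G + m * x: hole from G, context from x.
    fill = elementwise(lambda mi, gi: (1.0 - mi) * gi, m, x_g)
    return elementwise(lambda f, c: f + c, fill, masked)


# A 4x4 image with a 2x2 hole starting at row 1, column 1.
x = [[float(4 * i + j) for j in range(4)] for i in range(4)]
m = hole_mask(4, 4, top=1, left=1, size=2)
x_i = inpaint(x, m, toy_generator, z=0.5)
```

In the actual method, the discriminator then receives $x_I$ alongside real unlabeled images, so only the hole region carries generator content that it must learn to detect.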

Improved GAN. There are several ways to adapt GANs to semi-supervised classification. CatGAN [140] forces the discriminator to maximize the mutual information between examples and their predicted class distributions, instead of training the discriminator as a binary classifier. To overcome the bottleneck in the representations learned by CatGAN, Semi-supervised GAN (SGAN) [142] learns a generator and a classifier simultaneously. The classifier network has (K + 1) output units corresponding to $[y_1, y_2, \ldots, y_K, y_{K+1}]$, where $y_{K+1}$ corresponds to the samples generated by G. Similar to SGAN, Improved GAN [143] solves a (K + 1)-class classification problem; its structure is shown in Fig. 2(5). Real examples are assigned to one of the first K classes, and the additional (K + 1)-th class consists of the synthetic images generated by the generator G. This work proposes improved techniques for training GANs, i.e., feature matching, minibatch discrimination, historical averaging, one-sided label smoothing, and virtual batch normalization, where feature matching is
