Real-Time Fog using Post-processing in OpenGL

Anonymous∗

A Research

ABSTRACT

Fog is often used to add realism to a computer-generated scene, but support for fog in current graphics APIs such as OpenGL is limited. The standard fog models are very simplistic, allowing only a uniform-density fog with slight variations in the attenuation function. While recent extensions to the OpenGL standard provide height-dependent, or layered, fog, they do not correctly account for line-of-sight effects as the viewpoint moves through the fog.

In this paper, we present a new, fast and simple method for generating heterogeneous fog as a post-processing step. Using standard OpenGL Shading Language features, it requires only the depth buffer from the normal rendering process as input, evaluating fog integrals along the line of sight to produce realistic heterogeneous fog effects in real time.

Keywords: Algorithm, Post-processing, Real-Time fog, Shader.

Index Terms: I.3.3 [Computer Graphics]: Picture/Image

Generation—Display algorithms; I.3.1 [Computer Graphics]:

Hardware Architecture—Graphics Processors

1 INTRODUCTION

As far back as version 1.1, OpenGL has provided support for fog.

However, fog has always been implemented as a relatively cheap,

and simplistic, depth based effect. The basic idea behind fog is that

the distance between the viewpoint and a fragment is computed.

This distance is then used to blend the fragment color with a fog

color. While this produces reasonable results, there are a few prob-

lems. The OpenGL specification permits implementations to ap-

proximate the fragment distance using only the fragment depth, or

Z value. Most API implementations use this approximation, which

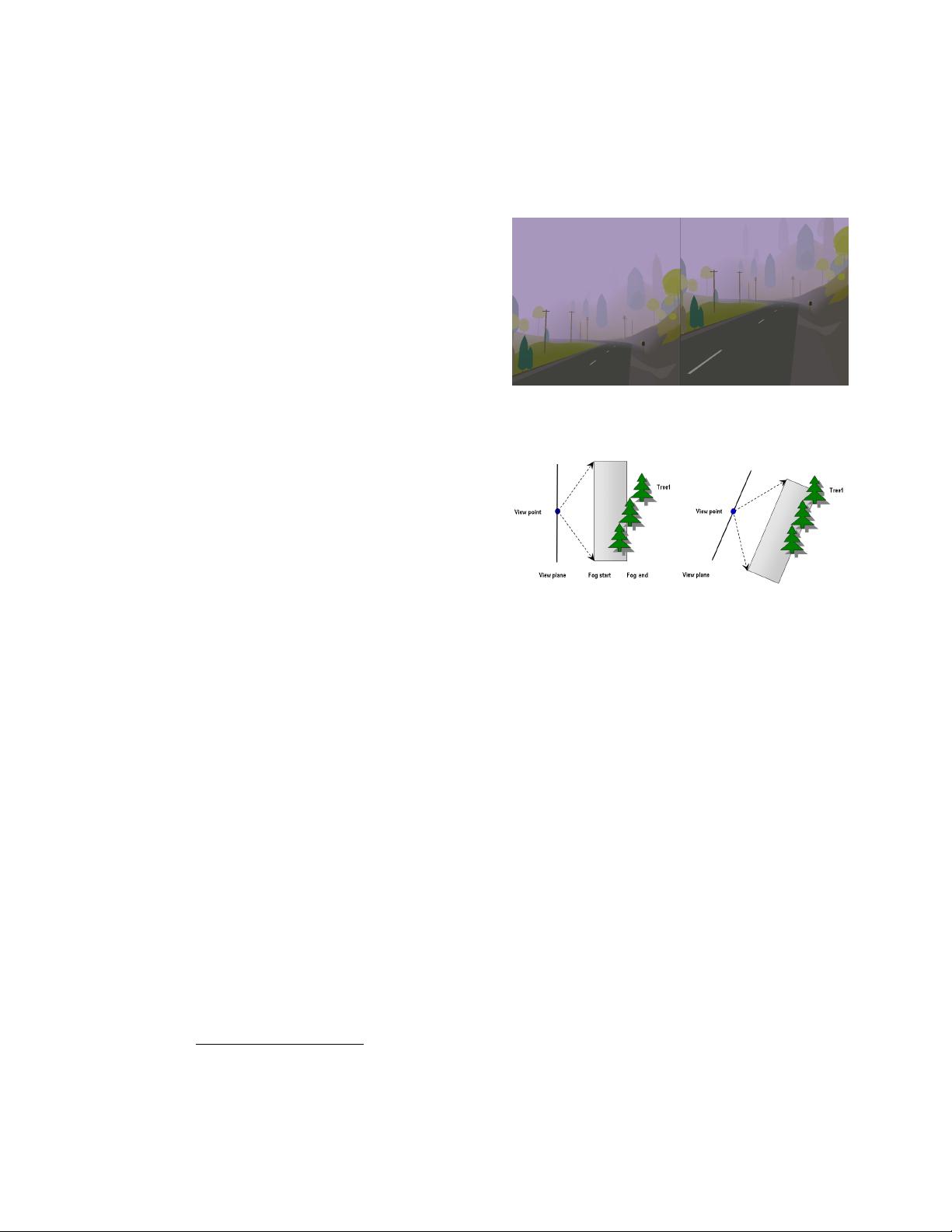

leads to some undesirable effects as shown in Figure 1, where more

trees are visible along the horizon in the right image. Both images

are generated from the same camera location. However, in the right

image, the camera has been tilted downwards. While the camera-

object distance has not changed for these trees, the rotation of the

camera has resulted in reduced Z values. This causes a reduction

in the computed fog density, allowing trees that were previously

invisible, to become visible.
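For reference, the fixed-function model described above corresponds to the three fog modes of the OpenGL specification (GL_LINEAR, GL_EXP, GL_EXP2). Each maps a distance c to a fog factor f, clamped to [0, 1], using f = (end - c)/(end - start), f = e^(-density·c) or f = e^(-(density·c)²), and the fragment color is then blended as C = f·C_r + (1 - f)·C_f. The Python sketch below is only a CPU-side illustration of those formulas; the function names are hypothetical, and c may be either the true eye distance or the |Z| approximation that the specification allows.

```python
import math

def fog_factor(c, mode, start=0.0, end=1.0, density=1.0):
    """Fog factor f in [0, 1]: 1 means no fog, 0 means fully fogged.

    Mirrors the GL_LINEAR, GL_EXP and GL_EXP2 fog equations. `c` is the
    eye-to-fragment distance, or its |Z| approximation.
    """
    if mode == "linear":
        f = (end - c) / (end - start)
    elif mode == "exp":
        f = math.exp(-density * c)
    elif mode == "exp2":
        f = math.exp(-(density * c) ** 2)
    else:
        raise ValueError("unknown fog mode: " + mode)
    return min(max(f, 0.0), 1.0)  # the specification clamps f to [0, 1]

def apply_fog(fragment_rgb, fog_rgb, f):
    """Blend C = f * C_r + (1 - f) * C_f for each color channel."""
    return tuple(f * cr + (1.0 - f) * cf for cr, cf in zip(fragment_rgb, fog_rgb))

# Usage: a fragment halfway between the linear fog start and end distances.
f = fog_factor(75.0, "linear", start=50.0, end=100.0)  # f == 0.5
fogged = apply_fog((0.0, 0.4, 0.0), (0.8, 0.8, 0.8), f)
```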

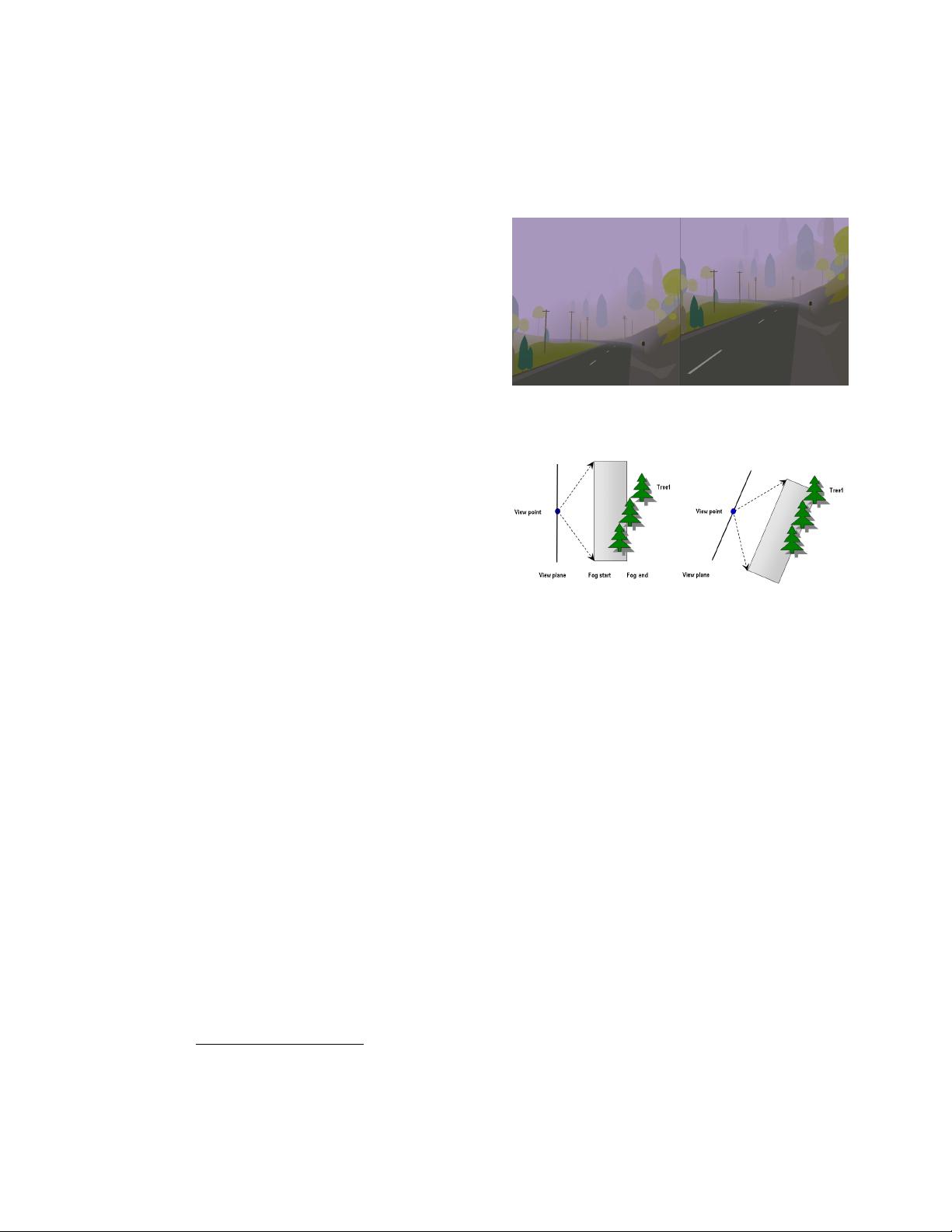

Figure 2 shows how this artifact can occur with the OpenGL fog model. The grey band marks the region, from no fog to complete fog, in which objects remain visible. In the left diagram, Tree 1 is in the fully fogged region. With the camera rotated in the right diagram, Tree 1 falls into the partially fogged region and thus becomes visible.
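The artifact in Figures 1 and 2 can be reproduced numerically. The sketch below is a hypothetical Python illustration, not code from our implementation: it places a tree just beyond the end distance of a linear fog (start 50, end 100, arbitrary units) and compares the fog factor computed from |Z| with the one computed from the true camera-to-tree distance as the camera pitches down. Here f is the fraction of the object's color that survives, so f > 0 means the tree shows through the fog.

```python
import math

def eye_depth(point, cam_pos, pitch_down_rad):
    """Distance along the view axis, i.e. the |Z| used by the fog approximation.

    The camera looks down -z with y up; pitching down by `pitch_down_rad`
    rotates the view direction to (0, -sin a, -cos a).
    """
    forward = (0.0, -math.sin(pitch_down_rad), -math.cos(pitch_down_rad))
    rel = tuple(p - c for p, c in zip(point, cam_pos))
    return sum(r * f for r, f in zip(rel, forward))

def radial_distance(point, cam_pos):
    """True Euclidean camera-to-point distance; unaffected by camera rotation."""
    return math.dist(point, cam_pos)

def linear_fog(c, start, end):
    """GL_LINEAR fog factor, clamped to [0, 1]."""
    return min(max((end - c) / (end - start), 0.0), 1.0)

cam = (0.0, 0.0, 0.0)
tree = (0.0, 0.0, -105.0)  # hypothetical tree just beyond the fog end distance
START, END = 50.0, 100.0

for pitch_deg in (0.0, 30.0):
    a = math.radians(pitch_deg)
    f_z = linear_fog(eye_depth(tree, cam, a), START, END)
    f_r = linear_fog(radial_distance(tree, cam), START, END)
    print(f"pitch {pitch_deg:4.1f} deg: f(|Z|) = {f_z:.2f}, f(distance) = {f_r:.2f}")
```

With these numbers, the |Z|-based factor rises from 0.00 to about 0.18 after a 30-degree tilt, while the distance-based factor stays at 0.00: the tree moves from the fully fogged region into the grey band, as in the right diagram of Figure 2.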

Recent additions to the OpenGL standard include the ability to specify the effective 'Z' value, or depth, of a vertex for the purposes of fog computations. Often referred to as the fog extension (EXT_fog_coord), this permits significantly more control over fog generation and allows effects such as height-dependent fog, but at the expense of more CPU cycles and more per-vertex data, which is already a potential bottleneck.
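With the fog coordinate, the application rather than OpenGL supplies a per-vertex value (for example with glFogCoordf) that replaces the fragment depth in the fog equations, which is where the extra per-vertex data and CPU work come from. As a hypothetical illustration of layered fog, the sketch below computes, for each vertex, the length of the eye-to-vertex segment that lies inside a uniform fog layer below height `layer_top`; the function name and the layer model are assumptions for illustration only. Because OpenGL then interpolates this value across each primitive, it is exact only at the vertices and must be recomputed whenever the eye moves.

```python
import math

def layered_fog_coord(eye, vertex, layer_top):
    """Length of the eye->vertex segment lying below height `layer_top`.

    Hypothetical per-vertex fog coordinate for a uniform ground-fog layer
    occupying y < layer_top (y is up). Computed on the CPU for every
    vertex, every frame in which the eye moves.
    """
    d = math.dist(eye, vertex)
    lo, hi = min(eye[1], vertex[1]), max(eye[1], vertex[1])
    if hi <= layer_top:   # whole segment inside the layer
        return d
    if lo >= layer_top:   # whole segment above the layer
        return 0.0
    # The segment crosses the layer boundary; height varies linearly along
    # it, so keep the fraction of its length that lies below the boundary.
    return d * (layer_top - lo) / (hi - lo)

# Usage: eye above a 5-unit-deep fog layer, looking down at a ground vertex.
coord = layered_fog_coord((0.0, 10.0, 0.0), (0.0, 0.0, -30.0), 5.0)
```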

∗e-mail: a@aol.com

Figure 1: Problems with standard fog. The right image shows more trees on the hillside when the camera is tilted downwards.

Figure 2: Fog artifacts

Another problem with the fog model in OpenGL is that only rendered objects are fogged, leaving the background unaffected, as shown in Figure 3.

The obvious solution to this problem is to set the background color to the fog color, but this only works for homogeneous fog and not for the fog extension, which usually requires a graded background. Thus, the standard fog model employed by OpenGL is somewhat limited in its application.

Considerable work has been done to improve the situation using either physically based or appearance-based approaches [1] [2] [4] [5] [6] [8] [9] [10] [11] [12] [16] [18] [19] [21] [22] [23] [25], with the ultimate goal of simulating fully heterogeneous fog in three dimensions. However, most approaches either cannot achieve the performance required for real-time applications or require sophisticated algorithms that must be integrated into the main rendering pipeline.

In this paper, we present a new method of generating real-time heterogeneous fog using a post-processing technique. Our algorithm uses analytical functions to evaluate the fog integral along the line of sight, accurately computing the fog density on a per-pixel basis to produce heterogeneous fog effects. It requires only the depth buffer generated during the normal rendering process, making it very easy to integrate into existing rendering applications. Additionally, because it is implemented entirely on the GPU, it is extremely fast.
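To make the idea of evaluating an analytical fog integral from the depth buffer alone more concrete, the sketch below works through a single pixel on the CPU. It assumes a standard OpenGL perspective projection (near plane n, far plane f, default depth range [0, 1]) to recover eye-space depth from the stored depth value, a camera pitched down without roll, and an exponential height-fog density ρ(y) = a·e^(-b·y), for which the line-of-sight integral has a closed form. This density, its parameters `a` and `b`, and all function names are illustrative assumptions rather than the fog functions of our method, which Section 3 describes; in practice the same per-pixel work would run in a GLSL fragment shader.

```python
import math

def linearize_depth(d, near, far):
    """Window-space depth d in [0, 1] -> positive eye-space depth.

    Assumes a standard OpenGL perspective projection and the default
    glDepthRange(0, 1). d == 1.0 (cleared background) maps to `far`.
    """
    z_ndc = 2.0 * d - 1.0
    return 2.0 * near * far / (far + near - z_ndc * (far - near))

def eye_offset(x_ndc, y_ndc, depth, fovy_rad, aspect):
    """Eye-space position of the surface seen at NDC (x, y); camera looks down -z."""
    t = math.tan(fovy_rad / 2.0)
    return (x_ndc * depth * t * aspect, y_ndc * depth * t, -depth)

def height_fog_optical_depth(a, b, y0, y1, length):
    """Integral of a*exp(-b*y) along a straight segment from height y0 to y1.

    Closed form a*exp(-b*y0) * L * (1 - exp(-b*dy)) / (b*dy), falling back
    to the dy -> 0 limit a*exp(-b*y0) * L for (nearly) level rays or b == 0.
    """
    dy = y1 - y0
    base = a * math.exp(-b * y0) * length
    if abs(b * dy) < 1e-6:
        return base
    return base * (1.0 - math.exp(-b * dy)) / (b * dy)

def fog_pixel(color, fog_color, d, x_ndc, y_ndc, cam_y, pitch_down_rad,
              near, far, fovy_rad, aspect, a, b):
    """Fog one pixel using only its depth-buffer value and camera parameters."""
    depth = linearize_depth(d, near, far)
    xe, ye, ze = eye_offset(x_ndc, y_ndc, depth, fovy_rad, aspect)
    length = math.sqrt(xe * xe + ye * ye + ze * ze)  # true line-of-sight distance
    # World-space height of the surface point for a camera pitched down (no roll).
    y1 = cam_y + ye * math.cos(pitch_down_rad) - depth * math.sin(pitch_down_rad)
    transmittance = math.exp(-height_fog_optical_depth(a, b, cam_y, y1, length))
    return tuple(transmittance * c + (1.0 - transmittance) * f
                 for c, f in zip(color, fog_color))
```

Using the true line-of-sight length rather than Z avoids the tilt artifact discussed above, and because cleared background pixels map to the far plane they receive fog as well. The closed form replaces a per-pixel ray march with a handful of arithmetic operations.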

In the next section, we review existing approaches to real-time fog rendering. Section 3 describes the technical details, including fog approximation in appearance-based fog simulation and our new algorithm with its hardware acceleration technique. Section

4 presents the implementation as well as the integration of our al-