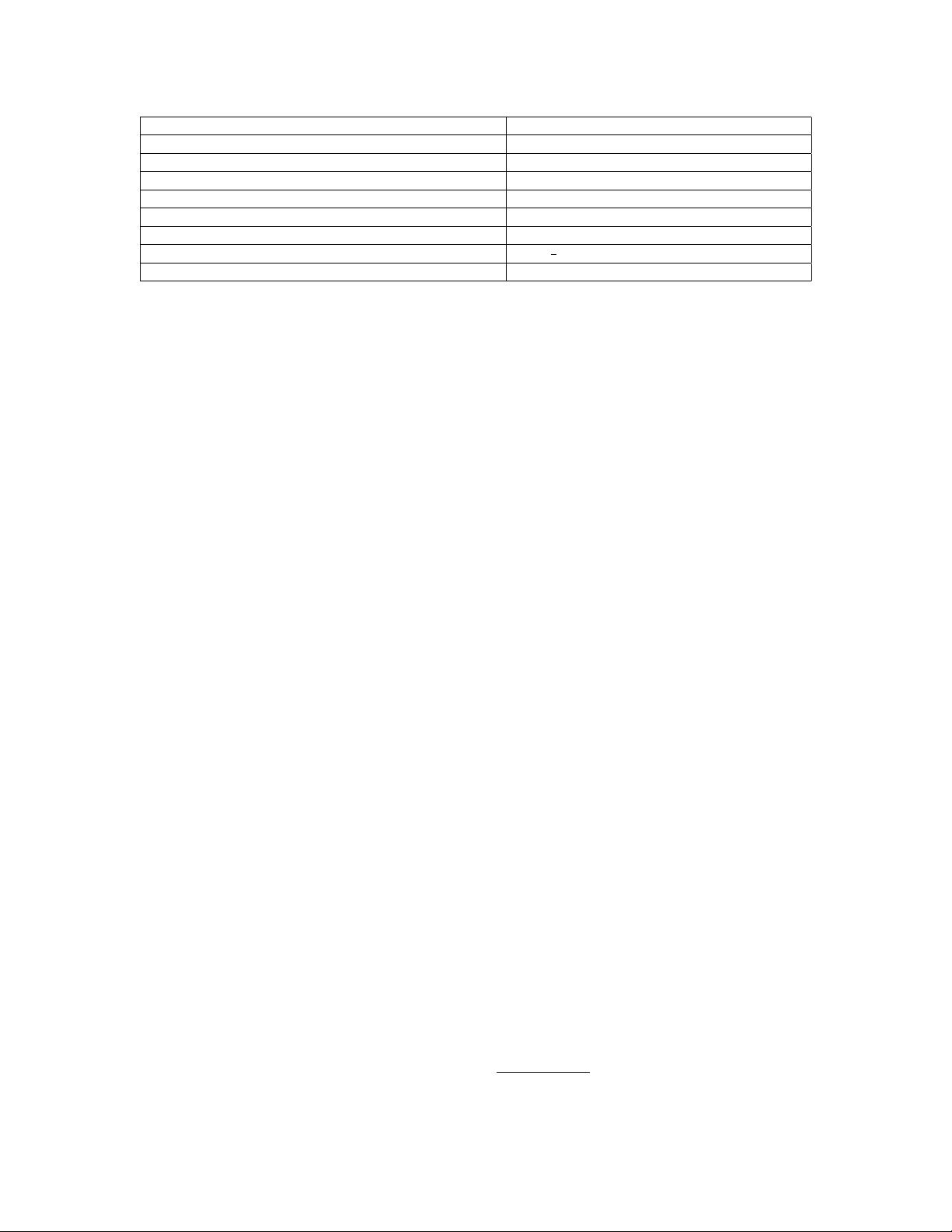

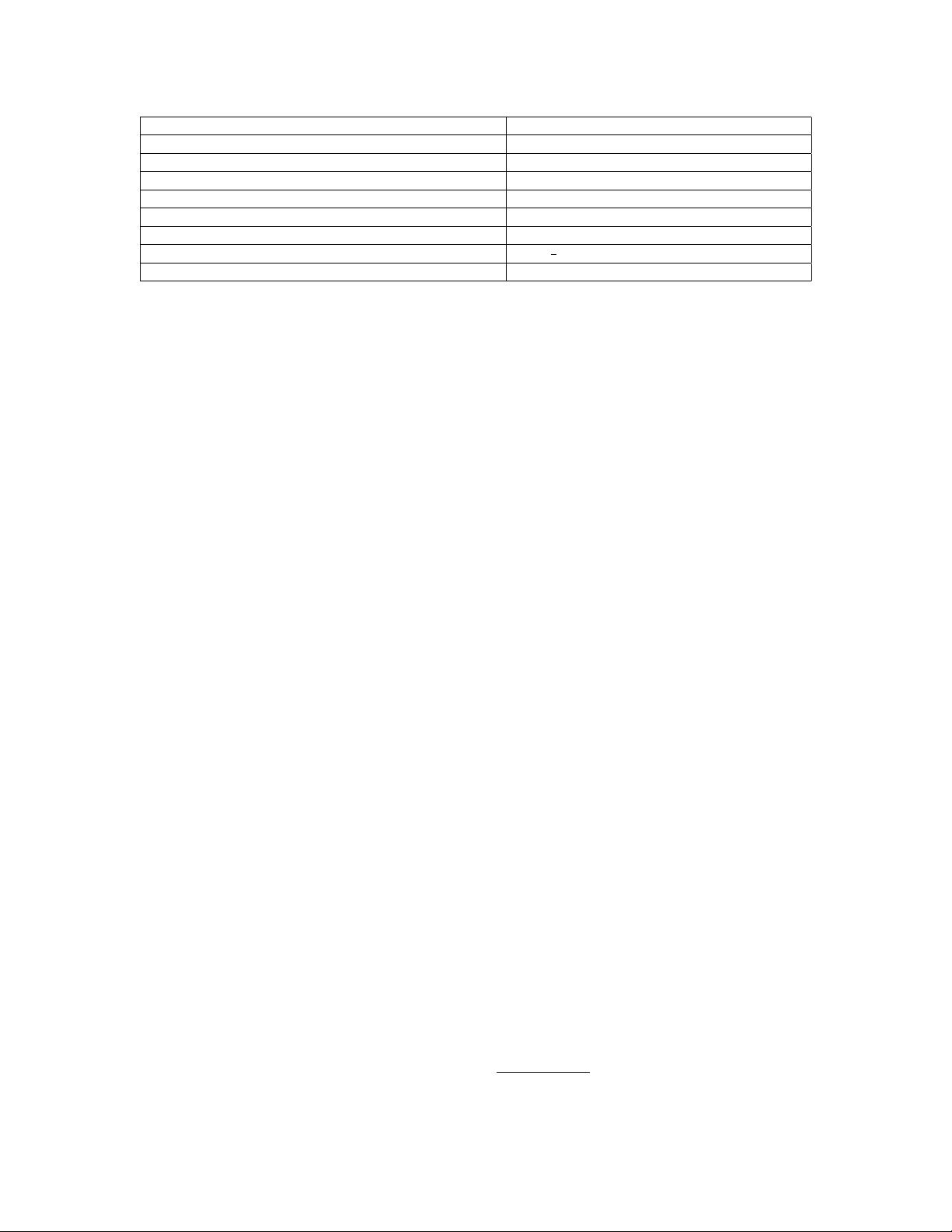

Table 1: Semi-definite rank minimization algorithms.

Optimization Algorithms and References Description

(a) convex trace norm (Fazel 2002; Cand

`

es and Tao 2010) rank(X) ≈ tr(X)

(b) nonlinear factorization (Burer and Monteiro 2003) rank(X) ≤ k, with X ≈ LL

T

, L ∈ R

n×k

(c) Schatten `

p

norm (Lu 2014; Nie, Huang, and Ding 2012) rank(X) ≈ kσ(X)k

p

(d) log-det heuristic (Fazel, Hindi, and Boyd 2003; Deng et al. 2013) rank(X) ≈ log det(X + I)

(e) truncated nuclear norm (Hu et al. 2013; Miao, Pan, and Sun 2015) rank(X) ≤ k ⇔ tr(X) = kσ(X)k

top-k

(f) pseudo-inverse reformulation(Zhao 2012) rank(A) = rank(A

†

A) = tr(A

†

A)

(g) iterative hard thresholding (Zhang and Lu 2011; Lu and Zhang 2013) min

X

1

2

kX − X

0

k

2

F

+ rank(X)

(h) MPEC reformulation [this paper],(Yuan and Ghanem 2015) rank(X)= min

0VI

tr(I − V), s.t. hV, Xi = 0

the high dimensional solution to the desirable space us-

ing eigenvalue decomposition, but this generally only pro-

duces sub-optimal results. Second-order cone programming

relaxation was proposed in (Tseng 2007), which has su-

perior polynomial complexity. However, this technique ob-

tains good results only when the anchor nodes are placed

on the outer boundary, since the positions of the estimat-

ed remaining nodes lie within the convex hull of the an-

chor nodes. Due to the high computational complexity of

the standard SDP algorithm, the work of (Wang et al. 2008;

Pong and Tseng 2011) considers further relaxations of the

semi-definite programming approach to address the sensor

network localization problem. Very recently, the work of (Ji

et al. 2013) explores the use of a nonconvex surrogate of

the rank function, namely the Schatten `

p

-norm, in network

localization. Although the resulting optimization is noncon-

vex, they show that a first-order critical point can be approx-

imated in polynomial time by an interior-point algorithm.

Several semi-definite rank minimization algorithms have

been studied in the literature (See Table 1). (a) Convex trace

norm (Fazel 2002) is a lower bound of the rank function in

the sense of operator (or spectral) norm. It is proven to lead

to a near optimal low-rank solution (Cand

`

es and Tao 2010;

Recht, Fazel, and Parrilo 2010) under certain incoherence

assumptions. However, such assumptions may be violated

in real applications. (b) Nonlinear factorization (Burer and

Monteiro 2003; 2005) replaces the solution matrix X by a

nonlinear matrix multiplication LL

T

. One important fea-

ture of this approach is avoiding the need to perform eigen-

value decomposition. (c) Schatten `

p

norm with p ∈ (0, 1)

was considered by (Lu 2014; Nie, Huang, and Ding 2012;

Lu et al. 2014) to approximate the discrete rank function.

It results in a local gradient Lipschitz continuous function,

to which some smooth optimization algorithms can be ap-

plied. (d) Log-det heuristic (Fazel, Hindi, and Boyd 2003;

Deng et al. 2013) minimizes the first-order Taylor series ex-

pansion of the objective function iteratively to find a local

minimum. Since its first iteration is equivalent to solving the

trace convex relaxation problem, it can be viewed as a re-

finement of the trace norm. (e) Truncated trace norm (Hu et

al. 2013; Miao, Pan, and Sun 2015; Law, Thome, and Cord

2014) minimizes the summation of the smallest (n − k)

eigenvalues, where k is the matrix rank. This is due to the

fact that these eigenvalues have little effect on the approxi-

mation of the matrix rank. (f) Pseudo-inverse reformulations

(Zhao 2012) consider an equivalent formulation to the rank

function: rank(A) = tr(A

†

A). However, similar to matrix

rank, the pseudo-inverse function is not continuous. Fortu-

nately, one can use a Tikhonov regularization technique

1

to approximate the pseudo-inverse. Inspired by this fact, the

work of (Zhao 2012) proves that rank minimization can be

approximated to any level of accuracy via continuous op-

timization. (g) Iterative hard thresholding (Zhang and Lu

2011) considers directly and iteratively setting the largest (in

magnitude) elements to zero in a gradient descent format. It

has been incorporated into the Penalty Decomposition Al-

gorithm (PDA) framework (Lu and Zhang 2013). Although

PDA is guaranteed to converge to a local minimum, it lacks

stability. The value of the penalty function can be very large,

and the solution can be degenerate when the minimization

subproblem is not exactly solved.

From above, we observe that existing methods either pro-

duce approximate solutions (method (a), (c), (d) and (g)), or

limited to solving feasibility problems (method (b) and (e)).

The only existing exact method (method (g)) is the penalty

method. However, it often gives much worse results even as

compared with the simple convex methods, as shown in our

experiments. This unappealing feature motivates us to de-

sign a new exact multiplier method in this paper. Recently,

the work of (Li and Qi 2011) considers a continuous varia-

tional reformulation of the low-rank problem to solve sym-

metric semi-definite optimization problems subject to a rank

constraint. They design an ADM algorithm that finds a sta-

tionary point of the rank-constrained optimization problem.

Inspired by this work, we consider a augmented Lagrangian

method to solve the general semi-definite rank minimiza-

tion problem by handling its equivalent MPEC reformula-

tion. Note that the formulation in (Li and Qi 2011) can be

viewed as a special case of ours, since it assumes that the

solution has unit diagonal entries, i.e. diag(X) = 1.

3 Proposed Optimization Algorithm

This section presents our proposed optimization algorithm.

Specifically, we first reformulate the optimization problem

in Eq (1) as an equivalent MPEC (Mathematical Program

with Equilibrium Constraints) in Section 3.1, and then solve

the equality constrained optimization problem by a proxi-

mal Alternating Direction Method (ADM) in Section 3.2. In

Subsection 3.3, we discuss the merits of the MPEC reformu-

lation and the proximal ADM algorithm.

1

A

†

= lim

→0

(A

T

A + I)

−1

A

T

= lim

→0

A

T

(AA

T

+ I)

−1