Published as a conference paper at ICLR 2020

be evaluated for all latent entity and mention pairs, which is prohibitively expensive. However, by

restricting s

t

to be an inner product we can implement this efficiently (§2.2).

To highlight the differentiability of the proposed overall scheme, we can represent the computation

in Eq. 2 as matrix operations. We pre-compute the TFIDF term for all entities and mentions into

a sparse matrix, which we denote as A

E→M

[e, m] =

1

(G(e) · F (m) > ). Then entity expansion

to co-occuring mentions can be done using a sparse-matrix by sparse-vector multiplication between

A

E→M

and z

t−1

. For the relevance scores, let T

K

(s

t

(m, z

t−1

, q)) denote the top-K relevant men-

tions encoded as a sparse vector in R

|M|

. Finally, the aggregation of mentions to entities can be

formulated as multiplication with another sparse-matrix B

M→E

, which encodes coreference, i.e.

mentions corresponding to the same entity. Putting all these together, using to denote element-

wise product, and defining Z

t

= [Pr(z

t

= e

1

|q); . . . ; Pr(z

t

= e

|E|

|q)], we can observe that for large

K (i.e., as K → |M|), Eq. 2 becomes equivalent to:

Z

t

= softmax

Z

T

t−1

A

E→M

T

K

(s

t

(m, z

t−1

, q))

B

M→E

. (4)

Note that every operation in above equation is differentiable and between sparse matrices and vec-

tors: we will discuss efficient implementations in §2.2. Further, the number of non-zero entries in Z

t

is bounded by K, since we filtered (the element-wise product in Eq. 4) to top-K relevant mentions

among TFIDF based expansion and since each mention can only point to a single entity in B

M→E

.

This is important, as it prevents the number of entries in Z

t

from exploding across hops (which

might happen if, for instance, we added the relevance and TFIDF scores instead).

We can view Z

t−1

, Z

t

as weighted multisets of entities, and s

t

(m, z, q) as implicitly selecting men-

tions which correspond to a relation R. Then Eq. 4 becomes a differentiable implementation of

Z

t

= Z

t−1

.follow(R), i.e. mimicking the graph traversal in a traditional KB. We thus call Eq. 4 a

textual follow operation.

Training and Inference. The model is trained end-to-end by optimizing the cross-entropy loss

between Z

T

, the weighted set of entities after T hops, and the ground truth answer set A. We use

a temperature coefficient λ when computing the softmax in Eq, 4 since the inner product scores of

the top-K retrieved mentions are typically high values, which would otherwise result in very peaked

distributions of Z

t

. We also found that taking a maximum over the mention set of an entity M

z

t

in

Eq. 2 works better than taking a sum. This corresponds to optimizing only over the most confident

mention of each entity, which works for corpora like Wikipedia that do not have much redundancy.

A similar observation was made by Min et al. (2019) in weakly supervised settings.

2.2 EFFICIENT IMPLEMENTATION

Sparse TFIDF Mention Encoding. To compute the sparse-matrix A

E→M

for entity-mention ex-

pansion in Eq. 4, the TFIDF vectors F(m) and G(z

t−1

) are constructed over unigrams and bigrams,

hashed to a vocabulary of 16M buckets. While F computes the vector from the whole passage

around m, G only uses the surface form of z

t−1

. This corresponds to retrieving all mentions in a

document using z

t−1

as the query. We limit the number of retrieved mentions per entity to a maxi-

mum of µ, which leads to a |E| × |M| sparse-matrix.

10

2

10

3

10

4

10

5

10

6

|E| = Num entities

10

1

10

0

10

1

10

2

10

3

millisec

sparse x sparse

sparse x dense

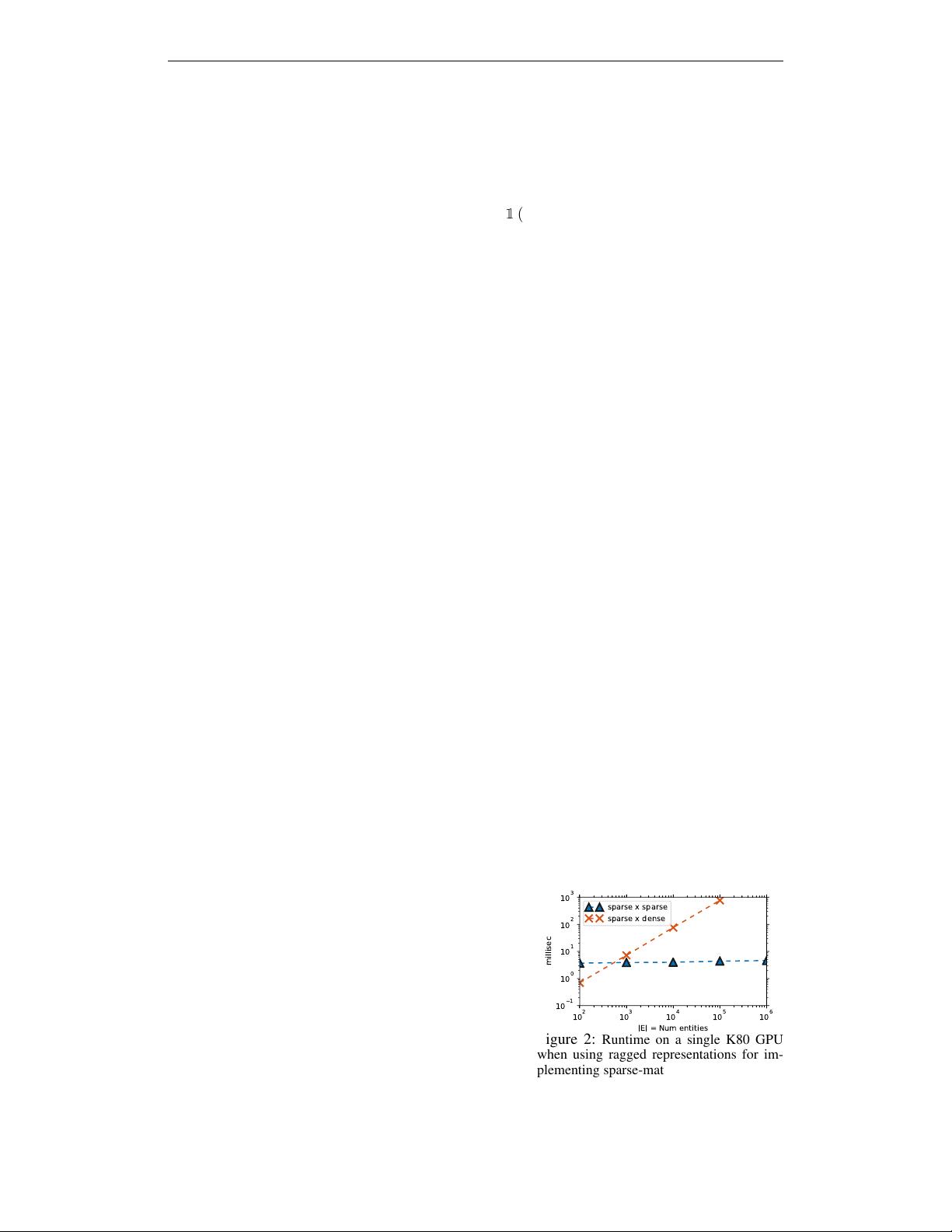

Figure 2: Runtime on a single K80 GPU

when using ragged representations for im-

plementing sparse-matrix vector product, vs

the default sparse-matrix times dense vector

product available in TensorFlow. |E| > 10

5

leads to OOM for the latter.

Efficient Entity-Mention expansion. The expansion

from a set of entities to mentions occurring around them

can be computed using the sparse-matrix by sparse-vector

product Z

T

t−1

A

E→M

. A simple lower bound for multiply-

ing a sparse |E| × |M| matrix, with maximum µ non-

zeros in each row, by a sparse |E| × 1 vector with K

non-zeros is Ω(Kµ). Note that this lower bound is in-

dependent of the size of matrix A

E→M

, or in other words

independent of the number of entities or mentions. To at-

tain the lower bound, the multiplication algorithm must

be vector driven, because any matrix-driven algorithms

need to at least iterate over all the rows. Instead we slice

out the relevant rows from A

E→M

. To enable this our

solution is to represent the sparse-matrix A

E→M

as two

row-wise lists of variable-sized lists of the indices and

4