TAMP, a read query makes a local copy of the tuple to ensure re-

peatable reads since it is not protected by locks. When a transaction

is aborted, it is assigned a new timestamp and then restarted. This

corresponds to the “basic T/O” algorithm as described in [3], but

our implementation uses a decentralized scheduler.

Multi-version Concurrency Control (MVCC): Under MVCC,

every write operation creates a new version of a tuple in the database [4,

5]. Each version is tagged with the timestamp of the transaction

that created it. The DBMS maintains an internal list of the versions

of an element. For a read operation, the DBMS determines which

version in this list the transaction will access. Thus, it ensures a

serializable ordering of all operations. One benefit of MVCC is that

the DBMS does not reject operations that arrive late. That is, the

DBMS does not reject a read operation because the element that it

targets has already been overwritten by another transaction [5].

Optimistic Concurrency Control (OCC): The DBMS tracks

the read/write sets of each transaction and stores all of their write

operations in their private workspace [28]. When a transaction

commits, the system determines whether that transaction’s read set

overlaps with the write set of any concurrent transactions. If no

overlap exists, then the DBMS applies the changes from the trans-

action’s workspace into the database; otherwise, the transaction is

aborted and restarted. The advantage of this approach for main

memory DBMSs is that transactions write their updates to shared

memory only at commit time, and thus the contention period is

short [42]. Modern implementations of OCC include Silo [42] and

Microsoft’s Hekaton [11, 29]. In this paper, our algorithm is simi-

lar to Hekaton in that we parallelize the validation phase and thus

is more scalable than the original algorithm [28].

T/O with Partition-level Locking (H-STORE): The database is

divided into disjoint subsets of memory called partitions. Each

partition is protected by a lock and is assigned a single-threaded

execution engine that has exclusive access to that partition. Each

transaction must acquire the locks for all of the partitions that it

needs to access before it is allowed to start running. This requires

the DBMS to know what partitions that each individual transac-

tion will access before it begins [34]. When a transaction request

arrives, the DBMS assigns it a timestamp and then adds it to all

of the lock acquisition queues for its target partitions. The execu-

tion engine for a partition removes a transaction from the queue

and grants it access to that partition if the transaction has the oldest

timestamp in the queue [38]. Smallbase was an early proponent of

this approach [22], and more recent examples include H-Store [27].

3. MANY-CORE DBMS TEST-BED

Since many-core chips do not yet exist, we performed our anal-

ysis through Graphite [30], a CPU simulator that can scale up to

1024 cores. For the DBMS, we implemented a main memory OLTP

engine that only contains the functionality needed for our experi-

ments. The motivation for using a custom DBMS is two fold. First,

we can ensure that no other bottlenecks exist other than concur-

rency control. This allows us to study the fundamentals of each

scheme in isolation without interference from unrelated features.

Second, using a full-featured DBMS is impractical due to the con-

siderable slowdown of simulators (e.g., Graphite has an average

slowdown of 10,000×). Our engine allows us to limit the experi-

ments to reasonable times. We now describe the simulation infras-

tructure, the DBMS engine, and the workloads used in this study.

3.1 Simulator and Target Architecture

Graphite [30] is a fast CPU simulator for large-scale multi-core

systems. Graphite runs off-the-shelf Linux applications by creat-

Host%Machines%

Target%Mul2core%

Application

core%

core%

core%

core%

core%

core%

core%

core%

core%

core%

core%

core%

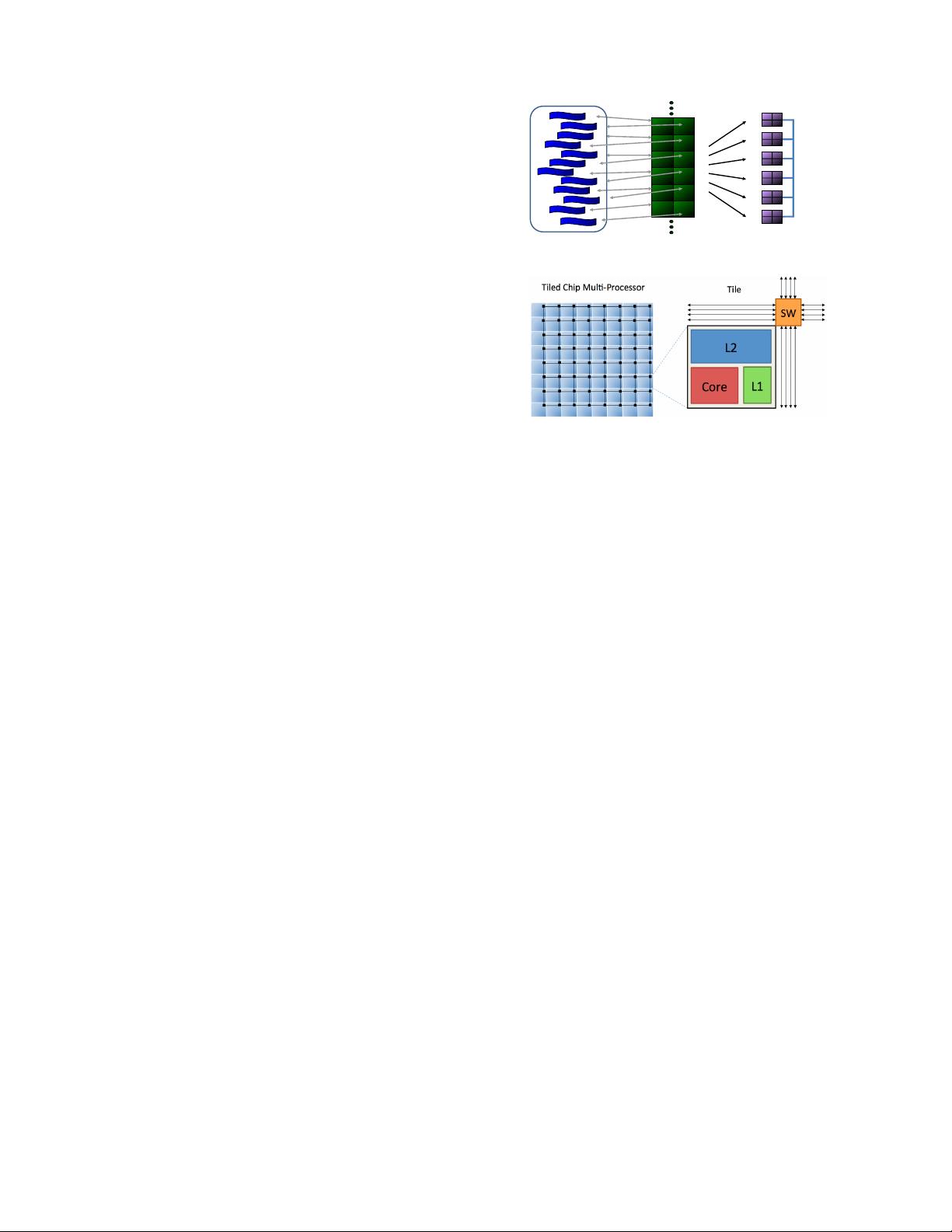

Figure 1: Graphite Simulator Infrastructure – Application threads are

mapped to simulated core threads deployed on multiple host machines.

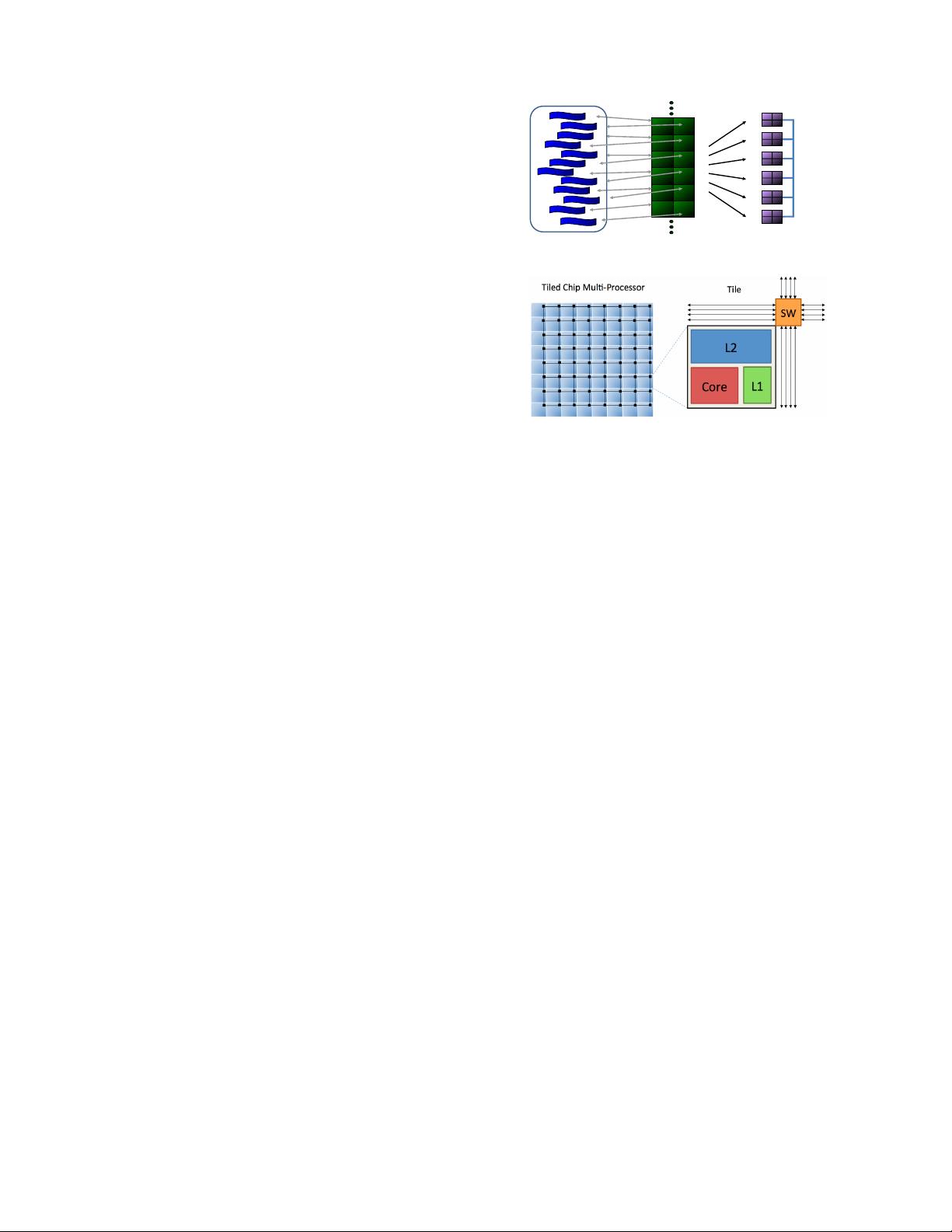

Figure 2: Target Architecture – Tiled chip multi-processor with 64 tiles

and a 2D-mesh network-on-chip. Each tile contains a processing core, L1

and L2 caches, and a network switch (SW).

ing a separate thread for each core in the architecture. As shown

in Fig. 1, each application thread is attached to a simulated core

thread that can then be mapped to different processes on separate

host machines. For additional performance, Graphite relaxes cy-

cle accuracy, using periodic synchronization mechanisms to model

instruction-level granularity. As with other similar CPU simulators,

it only executes the application and does not model the operating

system. For this paper, we deployed Graphite on a 22-node cluster,

each with dual-socket Intel Xeon E5-2670 and 64GB of DRAM.

The target architecture is a tiled multi-core CPU, where each tile

contains a low-power, in-order processing core, 32KB L1 instruc-

tion/data cache, a 512KB L2 cache slice, and a network router.

This is similar to other commercial CPUs, such as Tilera’s Tile64

(64 cores), Intel’s SCC (48 cores), and Intel’s Knights Landing (72

cores) [1]. Tiles are interconnected using a high-bandwidth, 2D-

mesh on-chip network, where each hop takes two cycles. Both the

tiles and network are clocked at 1GHz frequency. A schematic of

the architecture for a 64-core machine is depicted in Fig. 2.

We use a shared L2-cache configuration because it is the most

common last-level cache design for commercial multi-cores. In a

comparison experiment between shared and private L2-caches, we

observe that shared caches lead to significantly less memory traffic

and higher performance for OLTP workloads due to its increased

aggregate cache capacity (results not shown). Since L2 slices are

distributed among the different tiles, the simulated multi-core sys-

tem is a NUCA (Non-Uniform Cache Access) architecture, where

L2-cache latency increases with distance in the 2D-mesh.

3.2 DBMS

We implemented our own lightweight main memory DBMS based

on pthreads to run in Graphite. It executes as a single process with

the number of worker threads equal to the number of cores, where

each thread is mapped to a different core. All data in our DBMS is

stored in memory in a row-oriented manner. The system supports

basic hash table indexes and a pluggable lock manager that allows

us swap in the different implementations of the concurrency con-

trol schemes described in Section 2. It also allows the indexes and

lock manager to be partitioned (as in the case with the H-STORE

scheme) or run in a centralized mode.

211

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功