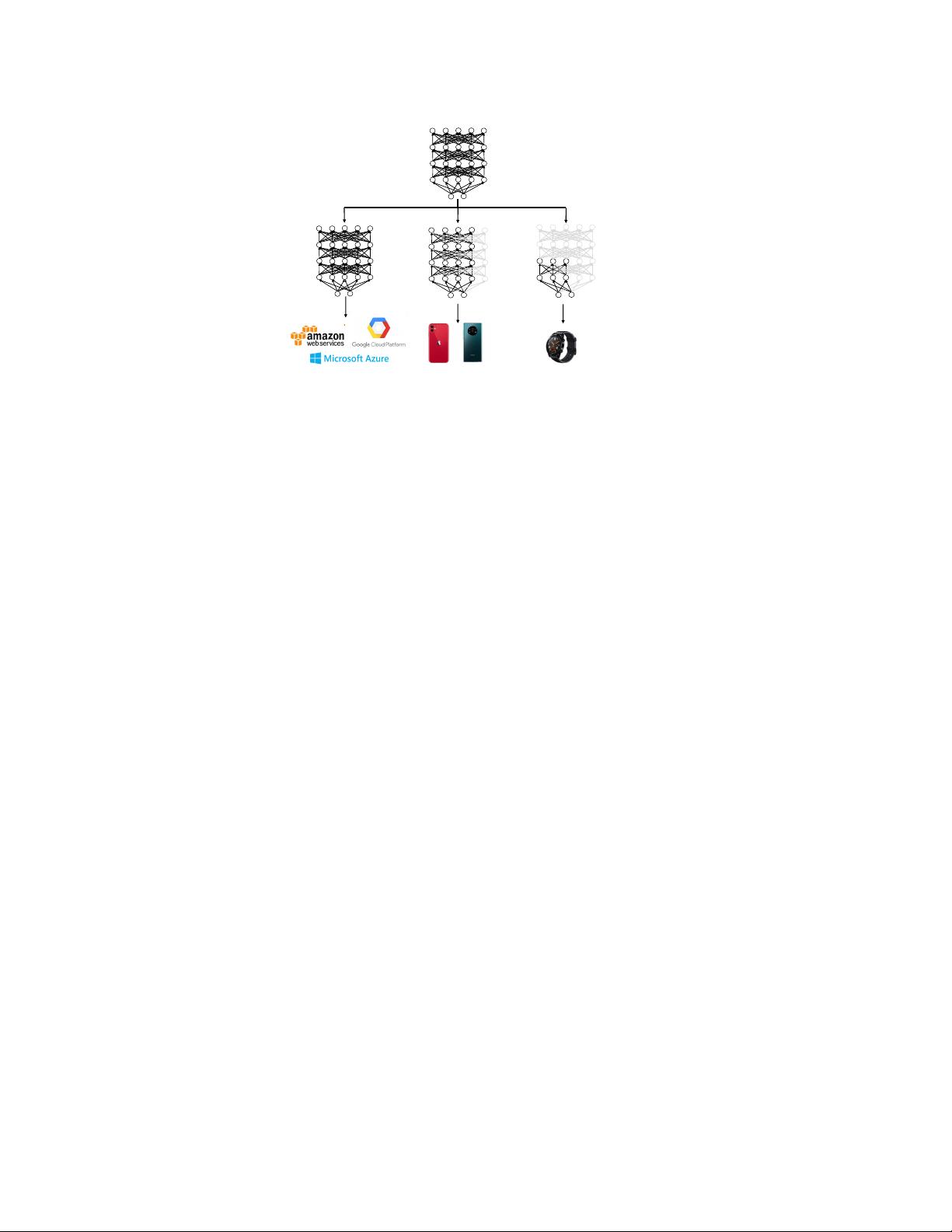

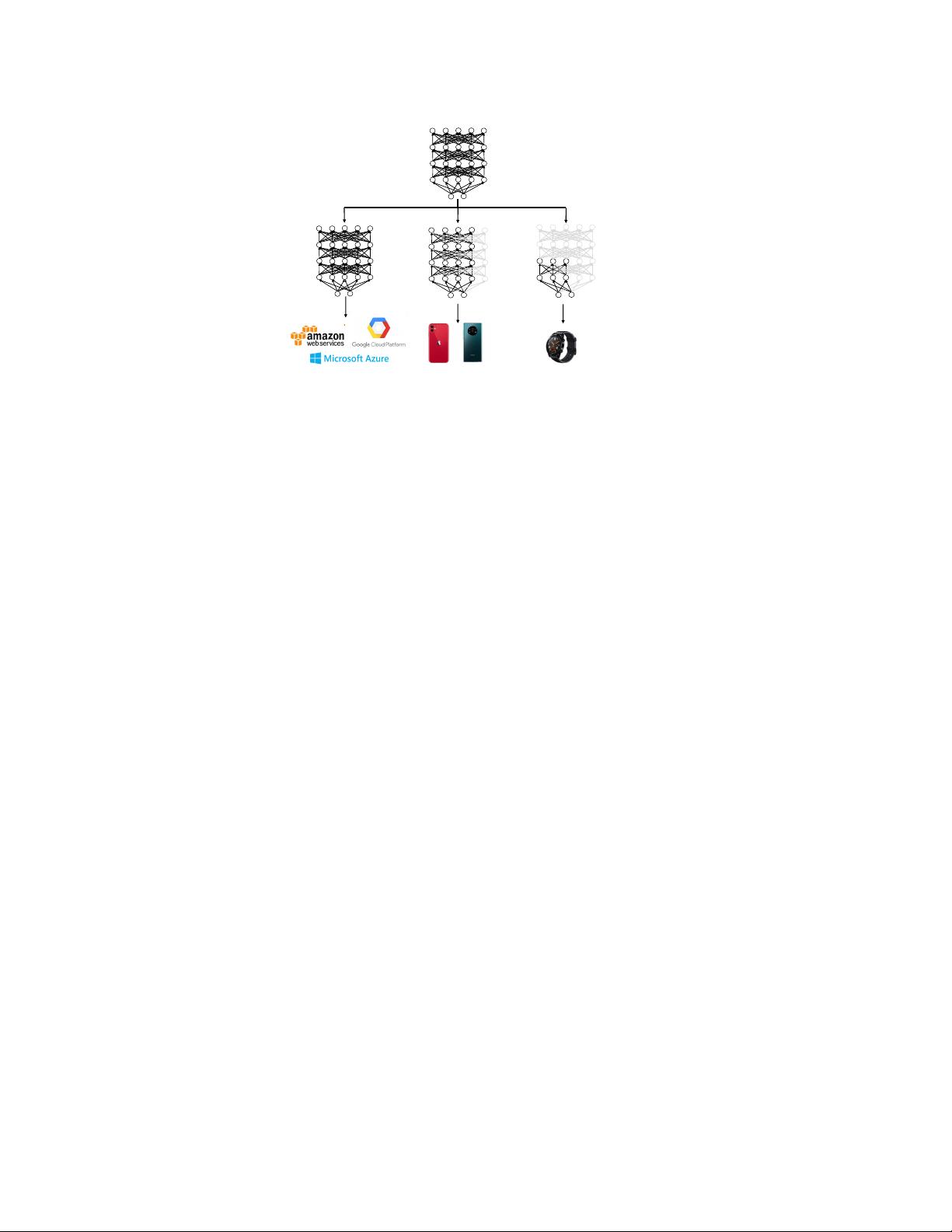

Figure 1: A network with adaptive width and depth. One single model can run at different depths and widths to satisfy various deployment requirements.

9], considering compression and acceleration only in the depth direction can be limiting. Recent studies show that the width direction of Transformer-based models also has high redundancy. For example, it is shown in [23, 17] that comparable accuracy can be maintained even when many attention heads are pruned.

3 Method

In this section, we elaborate on the training method of our DynaBERT model. The training process includes two stages. We first train a width-adaptive DynaBERT$_W$ in Section 3.1, and then train DynaBERT, which is adaptive in both width and depth, in Section 3.2.

3.1 Training DynaBERT$_W$ with Adaptive Width

Before describing the training process, we first need to define the width of the BERT model. Compared to CNNs stacked with regular convolutional layers, the BERT model stacked with Transformer layers is much more complicated. In each Transformer layer, the computation of the MHA contains the linear transformations and multiplications of the keys, queries, and values for multiple heads. Moreover, the MHA and the FFN in each Transformer layer perform transformations in different dimensions, so the width of a Transformer layer is not trivial to define.

3.1.1 Using Attention Heads and Intermediate Neurons in FFN to Adapt the Width

Following [17], we divide the computation of the MHA into the computations for each attention head as in (1). Thus the width of the MHA can be determined by the number of attention heads, and the width of the FFN by the number of neurons in the intermediate layer. We do not adapt the number of neurons in the embedding dimension because they are connected through skip connections across all Transformer layers and cannot be flexibly scaled for one particular Transformer layer. Therefore, for a Transformer layer, we adapt its width by varying the number of attention heads of the MHA and the number of neurons in the intermediate layer of the FFN.
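Why the number of heads is a natural width for the MHA can be illustrated with a small sketch. It assumes the standard formulation in which head outputs are concatenated and multiplied by one output projection, and checks that this equals the sum of per-head projections, each using its own block of rows of the projection matrix. The toy matrices and the names `matmul`, `add`, `head_outputs`, and `w_o` are illustrative assumptions, not taken from the paper.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a, b):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


# Two toy heads, each producing a (tokens=2, d_head=2) output (assumed values).
head_outputs = [
    [[1, 2], [3, 4]],
    [[5, 6], [7, 8]],
]
d_head = 2
# Output projection of shape (num_heads * d_head, d_model) = (4, 3) (assumed values).
w_o = [[1, 0, 2], [0, 1, 1], [2, 1, 0], [1, 1, 1]]

# Route A: concatenate the heads along the feature axis, then project once.
concat = [sum((h[t] for h in head_outputs), []) for t in range(2)]
projected = matmul(concat, w_o)

# Route B: project each head with its own row block of w_o, then sum.
per_head = [
    matmul(h, w_o[i * d_head:(i + 1) * d_head])
    for i, h in enumerate(head_outputs)
]
summed = per_head[0]
for contribution in per_head[1:]:
    summed = add(summed, contribution)

assert projected == summed
```

Because each head contributes an independent additive term, removing a head amounts to dropping its term, that is, slicing away its columns in the query, key, and value projections and its rows in the output projection.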

In each Transformer layer, when the width multiplier is $m_w$, the MHA retains the leftmost $\lfloor m_w N_H \rfloor$ attention heads, and the FFN intermediate layer retains the leftmost $\lfloor m_w d_{ff} \rfloor$ neurons. In this case, each Transformer layer is roughly compressed by the ratio $m_w$. Note that this is not strictly equal because layer normalization and biases in linear layers also have a small fraction of parameters. Different Transformer layers, or the attention heads and the neurons in the same layer, can also have different width multipliers. In this paper, for simplicity, we focus on using the same width multiplier for the attention heads and neurons in all Transformer layers.
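The retained widths and the resulting compression ratio can be worked through numerically. The sketch below is a minimal illustration that assumes BERT-base sizes (hidden size 768, 12 heads, intermediate size 3072) and a standard Transformer encoder layer layout with biased linear layers and two layer normalizations. These sizes and the helper names `retained_width` and `layer_params` are assumptions for illustration, not values stated in this section.

```python
import math


def retained_width(m_w, num_heads, d_ff):
    """Number of attention heads and FFN neurons kept at width multiplier m_w."""
    return math.floor(m_w * num_heads), math.floor(m_w * d_ff)


def layer_params(m_w, d_model=768, num_heads=12, d_ff=3072):
    """Parameter count of one Transformer layer at width multiplier m_w.

    Assumes the embedding dimension d_model is never scaled, so the output
    biases and the layer normalization parameters stay at full size.
    """
    d_head = d_model // num_heads
    heads, neurons = retained_width(m_w, num_heads, d_ff)
    d_attn = heads * d_head

    qkv = 3 * (d_model * d_attn + d_attn)      # query, key, value projections
    attn_out = d_attn * d_model + d_model      # output projection, full-size bias
    ffn_in = d_model * neurons + neurons       # first FFN linear layer
    ffn_out = neurons * d_model + d_model      # second FFN linear layer, full-size bias
    layer_norms = 2 * 2 * d_model              # two LayerNorms, scale and shift each
    return qkv + attn_out + ffn_in + ffn_out + layer_norms


full = layer_params(1.0)
for m_w in (0.25, 0.5, 0.75, 1.0):
    heads, neurons = retained_width(m_w, 12, 3072)
    ratio = layer_params(m_w) / full
    print(f"m_w={m_w}: heads={heads}, neurons={neurons}, ratio={ratio:.5f}")
```

Under these assumptions the ratio at $m_w = 0.5$ comes out slightly above 0.5, because the unscaled biases and layer normalization parameters do not shrink with the width multiplier.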
