
Visual Question Answering: A Survey of Methods and Datasets

Qi Wu, Damien Teney, Peng Wang, Chunhua Shen∗, Anthony Dick, Anton van den Hengel

e-mail: firstname.lastname@adelaide.edu.au

School of Computer Science, The University of Adelaide, SA 5005, Australia

Abstract

Visual Question Answering (VQA) is a challenging task that has received increasing attention from both the computer vision and the natural language processing communities. Given an image and a question in natural language, it requires reasoning over visual elements of the image and general knowledge to infer the correct answer. In the first part of this survey, we examine the state of the art by comparing modern approaches to the problem. We classify methods by the mechanism they use to connect the visual and textual modalities. In particular, we examine the common approach of combining convolutional and recurrent neural networks to map images and questions to a common feature space. We also discuss memory-augmented and modular architectures that interface with structured knowledge bases. In the second part of this survey, we review the datasets available for training and evaluating VQA systems. The various datasets contain questions at different levels of complexity, which require different capabilities and types of reasoning. We examine in depth the question/answer pairs from the Visual Genome project, and evaluate the relevance of the structured annotations of images with scene graphs for VQA. Finally, we discuss promising future directions for the field, in particular the connection to structured knowledge bases and the use of natural language processing models.

Keywords: Visual Question Answering, Natural Language Processing, Knowledge Bases, Recurrent Neural Networks

Contents

1 Introduction
2 Methods for VQA
2.1 Joint embedding approaches
2.2 Attention mechanisms
2.3 Compositional Models
2.3.1 Neural Module Networks
2.3.2 Dynamic Memory Networks
2.4 Models using external knowledge bases
3 Datasets and evaluation
3.1 Datasets of natural images
3.2 Datasets of clipart images
3.3 Knowledge base-enhanced datasets
3.4 Other datasets
4 Structured scene annotations for VQA
5 Discussion and future directions
6 Conclusion

∗ Corresponding author

Preprint submitted to Elsevier July 21, 2016

arXiv:1607.05910v1 [cs.CV] 20 Jul 2016

1. Introduction

Visual question answering is a task that was proposed to connect computer vision and natural language processing (NLP), to stimulate research, and to push the boundaries of both fields. On the one hand, computer vision studies methods for acquiring, processing, and understanding images. In short, its aim is to teach machines how to see. On the other hand, NLP is the field concerned with enabling interactions between computers and humans in natural language, i.e. teaching machines how to read, among other tasks. Both computer vision and NLP belong to the domain of artificial intelligence and they share similar methods rooted in machine learning. However, they have historically developed separately. Both fields have seen significant advances towards their respective goals in the past few decades, and the combined explosive growth of visual and textual data is pushing towards a marriage of efforts from both fields. For example, research in image captioning, i.e. automatic image description [15, 35, 54, 77, 93, 85], has produced powerful methods for jointly learning from image and text inputs to form higher-level representations. A successful approach is to combine convolutional neural networks (CNNs), trained on object recognition, with word embeddings, trained on large text corpora.

In the most common form of Visual Question Answering (VQA), the computer is presented with an image and a textual question about this image (see examples in Figures 3–5). It must then determine the correct answer, typically a few words or a short phrase. Variants include binary (yes/no) [3, 98] and multiple-choice settings [3, 100], in which candidate answers are proposed. A closely related task is to “fill in the blank” [95], where a statement describing the image must be completed with one or several missing words. These statements essentially amount to questions phrased in declarative form. A major distinction between VQA and other tasks in computer vision is that the question to be answered is not determined until run time. In traditional problems such as segmentation or object detection, the single question to be answered by an algorithm is predetermined and only the input image changes. In VQA, in contrast, the form that the question will take is unknown, as is the set of operations required to answer it. In this sense, it more closely reflects the challenge of general image understanding. VQA is related to the task of textual question answering, in which the answer is to be found in a specific textual narrative (i.e. reading comprehension) or in large knowledge bases (i.e. information retrieval). Textual QA has been studied for a long time in the NLP community, and VQA is its extension to additional visual supporting information. The added challenge is significant, as images are much higher dimensional, and typically noisier, than pure text. Moreover, images lack the structure and grammatical rules of language, and there is no direct equivalent to NLP tools such as syntactic parsers and regular expression matching. Finally, images capture more of the richness of the real world, whereas natural language already represents a higher level of abstraction. For example, compare the phrase ‘a red hat’ with the multitude of its representations that one can picture, many of which differ in styles that could not be described in a short sentence.

Visual question answering is a significantly more complex problem than image captioning, as it frequently requires information not present in the image. The type of this extra required information may range from common sense to encyclopedic knowledge about a specific element from the image. In this respect, VQA constitutes a truly AI-complete task [3], as it requires multimodal knowledge beyond a single subdomain. This justifies the increased interest in VQA, as it provides a proxy to evaluate our progress towards AI systems capable of advanced reasoning combined with deep language and image understanding. Note that image understanding could in principle be evaluated equally well through image captioning. In practice, however, VQA has the advantage of an easier evaluation metric: answers typically contain only a few words, whereas long ground-truth image captions are more difficult to compare with predicted ones. Although advanced evaluation metrics have been studied, this is still an open research problem [43, 26, 76].
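Because VQA answers are short, scoring can be reduced to string comparison. The sketch below illustrates this with plain exact-match accuracy after light normalization, and with the consensus score popularized by the VQA benchmark [3], where an answer counts as fully correct if at least three human annotators gave it. The normalization rules used here (lowercasing, removing punctuation and articles) are assumptions chosen for illustration; real evaluation code applies its own, more detailed rules.

```python
import string
from typing import List

ARTICLES = {"a", "an", "the"}

def normalize(answer: str) -> str:
    """Lowercase, strip punctuation and articles, collapse whitespace (illustrative rules)."""
    answer = answer.lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(w for w in answer.split() if w not in ARTICLES)

def exact_match_accuracy(predictions: List[str], references: List[str]) -> float:
    """Fraction of predicted answers equal to their single reference after normalization."""
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(predictions)

def consensus_accuracy(prediction: str, human_answers: List[str]) -> float:
    """VQA-style score: min(number of annotators who gave this answer / 3, 1)."""
    matches = sum(normalize(prediction) == normalize(h) for h in human_answers)
    return min(matches / 3.0, 1.0)

print(exact_match_accuracy(["Two", "red hat"], ["2", "a red hat"]))  # 0.5
print(consensus_accuracy("mountains", ["mountains", "Mountains.", "sky", "clouds"]))
```

The first call shows the limits of pure string matching: “Two” and “2” denote the same answer but do not match, which is why practical evaluation code typically adds further normalization, for example mapping number words to digits.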

One of the first integrations of vision and language is the “SHRDLU” system from 1972 [84], which allowed users to use language to instruct a computer to move various objects around in a “blocks world”. More recent attempts at creating conversational robotic agents [39, 9, 55, 64] are also grounded in the visual world. However, these works were often limited to specific domains and/or restricted language forms. In comparison, VQA specifically addresses free-form open-ended questions. The increasing interest in VQA is driven by the existence of mature techniques in both computer vision and NLP and the availability of relevant large-scale datasets. As a result, a large body of literature on VQA has appeared over the last few years. The aim of this survey is to give a comprehensive overview of the field, covering models and datasets, and to suggest promising future directions. To the best of our knowledge, this article is the first survey in the field of VQA.


In the first part of this survey (Section 2), we present a comprehensive review of VQA methods through four categories based on the nature of their main contribution. Because contributions are often incremental, most methods belong to several of these categories (see Table 2). First, the joint embedding approaches (Section 2.1) are motivated by the advances of deep neural networks in both computer vision and NLP. They use convolutional and recurrent neural networks (CNNs and RNNs) to learn embeddings of images and sentences in a common feature space. This allows one to subsequently feed them together to a classifier that predicts an answer [22, 52, 49]. Second, attention mechanisms (Section 2.2) improve on the above method by focusing on specific parts of the input (image and/or question). Attention in VQA [100, 90, 11, 32, 2, 92] was inspired by the success of similar techniques in the context of image captioning [91]. The main idea is to replace holistic (image-wide) features with spatial feature maps, and to allow interactions between the question and specific regions of these maps. Third, compositional models (Section 2.3) allow one to tailor the performed computations to each problem instance. For example, Andreas et al. [2] use a parser to decompose a given question, then build a neural network out of modules whose composition reflects the structure of the question. Fourth, knowledge base-enhanced approaches (Section 2.4) address the use of external data by querying structured knowledge bases. This allows retrieving information that is not present in the common visual datasets such as ImageNet [14] or COCO [45], which are only labeled with classes, bounding boxes, and/or captions. Information available from knowledge bases ranges from common sense to encyclopedic level, and can be accessed without needing to be available at training time [87, 78].
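The attention idea described above (replacing a single image-wide feature with a grid of region features, and letting the question decide which regions matter) can be sketched as a generic soft-attention step. This is a minimal illustration under simplifying assumptions: region features and the question vector already live in the same space, and relevance is scored by a plain dot product followed by a softmax. The cited methods use learned projections and their own scoring functions.

```python
import math
from typing import List, Tuple

Vector = List[float]

def softmax(scores: Vector) -> Vector:
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(regions: List[Vector], question: Vector) -> Tuple[Vector, Vector]:
    """Soft attention over a spatial feature map, guided by the question."""
    # Assumed scoring rule: dot product between each region feature and the question vector.
    scores = [sum(r * q for r, q in zip(region, question)) for region in regions]
    weights = softmax(scores)
    # The attended feature is the attention-weighted sum of region features;
    # it replaces the single holistic (image-wide) feature vector.
    attended = [sum(w * region[k] for w, region in zip(weights, regions))
                for k in range(len(question))]
    return weights, attended

# Three cells of a toy 2-d feature map and a question vector aligned with the first cell.
regions = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
weights, attended = attend(regions, [5.0, 0.0])
print([round(w, 3) for w in weights])  # [0.987, 0.007, 0.007]
```

Almost all of the weight goes to the region whose feature agrees with the question, so the attended vector is dominated by that region rather than by an average over the whole image.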

In the second part of this survey (Section 3), we examine datasets available for training and evaluating VQA systems. These datasets vary widely along three dimensions: (i) their size, i.e. the number of images, questions, and different concepts represented; (ii) the amount of required reasoning, e.g. whether the detection of a single object is sufficient or whether inference is required over multiple facts or concepts; and (iii) how much information beyond that present in the actual images is necessary, be it common sense or subject-specific information. Our review points out that existing datasets lean towards visual-level questions and require little external knowledge, with few exceptions [78, 79]. These characteristics reflect the difficulty that the current state of the art still has with simple visual questions, but they must be kept in mind when VQA is presented as an AI-complete evaluation proxy. We conclude that more varied and sophisticated datasets will eventually be required.

Another significant contribution of this survey is an in-depth analysis of the question/answer pairs provided in the Visual Genome dataset (Section 4). They constitute the largest VQA dataset available at the time of this writing, and, importantly, the dataset includes rich structured image annotations in the form of scene graphs [41]. We evaluate the relevance of these annotations for VQA by comparing the occurrence of concepts involved in the provided questions, answers, and image annotations. We find that only about 40% of the answers directly match elements in the scene graphs. We further show that this matching rate can be significantly increased by relating scene graphs to external knowledge bases. We conclude this paper in Section 5 by discussing the potential of better connection to such knowledge bases, together with better use of existing work from the field of NLP.

2. Methods for VQA

One of the first attempts at “open-world” visual question answering was proposed by Malinowski et al. [51]. They described a method combining semantic text parsing with image segmentation in a Bayesian formulation that samples from nearest neighbors in the training set. The method requires human-defined predicates, which are inevitably dataset-specific and difficult to scale. It is also very dependent on the accuracy of the image segmentation algorithm and of the estimated image depth information. Another early attempt at VQA by Tu et al. [74] was based on a joint parse graph from text and videos. In [23], Geman et al. proposed an automatic “query generator” that is trained on annotated images and then produces a sequence of binary questions from any given test image. A common characteristic of these early approaches is that they restrict questions to predefined forms. The remainder of this article focuses on modern approaches aimed at answering free-form open-ended questions. We present methods in four categories: joint embedding approaches, attention mechanisms, compositional models, and knowledge base-enhanced approaches. As summarized in Table 2, most methods combine multiple strategies and thus belong to several categories.

2.1 Joint embedding approaches

Motivation The concept of jointly embedding images and text was first explored for the task of image captioning [15, 35, 54, 77, 93, 85]. It was motivated by the success of deep learning methods in both computer vision and NLP, which allow one to learn representations in a common feature space. In comparison to the task of image captioning, this motive is further reinforced in VQA by the need to perform further reasoning over both modalities together. A representation in a common space allows learning interactions and performing inference over the question and the image contents. In practice, image representations are obtained with convolutional neural networks (CNNs) pre-trained on object recognition. Text representations are obtained with word embeddings pre-trained on large text corpora. Word embeddings map words to a space in which distances reflect semantic similarities [56, 61]. The embeddings of the individual words of a question are then typically fed to a recurrent neural network to capture syntactic patterns and handle variable-length sequences.

Joint Attention Compositional Knowledge Answer Image
Method embedding mechanism model base class. / gen. features
Neural-Image-QA [52] X generation GoogLeNet [71]
VIS+LSTM [63] X classification VGG-Net [68]
Multimodal QA [22] X generation GoogLeNet [71]
DPPnet [58] X classification VGG-Net [68]
MCB [21] X classification ResNet [25]
MCB-Att [21] X X classification ResNet [25]
MRN [38] X X classification ResNet [25]
Multimodal-CNN [49] X classification VGG-Net [68]
iBOWIMG [99] X classification GoogLeNet [71]
VQA team [3] X classification VGG-Net [68]
Bayesian [34] X classification ResNet [25]
DualNet [65] X classification VGG-Net [68] & ResNet [25]
MLP-AQI [31] X classification ResNet [25]
LSTM-Att [100] X X classification VGG-Net [68]
Com-Mem [32] X X generation VGG-Net [68]
QAM [11] X X classification VGG-Net [68]
SAN [92] X X classification GoogLeNet [71]
SMem [90] X X classification GoogLeNet [71]
Region-Sel [66] X X classification VGG-Net [68]
FDA [29] X X classification ResNet [25]
HieCoAtt [48] X X classification ResNet [25]
NMN [2] X X classification VGG-Net [68]
DMN+ [89] X X classification VGG-Net [68]
Joint-Loss [57] X X classification ResNet [25]
Attributes-LSTM [85] X X generation VGG-Net [68]
ACK [87] X X generation VGG-Net [68]
Ahab [78] X generation VGG-Net [68]
Facts-VQA [79] X generation VGG-Net [68]
Multimodal KB [101] X generation ZeilerNet [96]

Table 1: Overview of existing approaches to VQA, characterized by the use of a joint embedding of image and language features (Section 2.1), the use of an attention mechanism (Section 2.2), an explicitly compositional neural network architecture (Section 2.3), and the use of information from an external structured knowledge base (Section 2.4). We also note whether the output answer is obtained by classification over a predefined set of common words and short phrases, or generated, typically with a recurrent neural network. The last column indicates the type of convolutional network used to obtain image features.
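A minimal sketch of the joint embedding pipeline with a classification output: question word embeddings are pooled into a fixed-size vector, concatenated with a CNN image feature, and scored by a linear classifier over a predefined answer set. Averaging word vectors stands in for the recurrent encoder described above, in the spirit of the bag-of-words baseline [99] listed in Table 1. The embedding table, image feature, weights, and answer list are hypothetical placeholders, not values from any trained model.

```python
from typing import Dict, List

Vector = List[float]

# Hypothetical pre-trained word embeddings (3-d for readability; real ones have hundreds of dimensions).
WORD_EMBEDDINGS: Dict[str, Vector] = {
    "what": [0.1, 0.0, 0.2],
    "color": [0.9, 0.1, 0.0],
    "is": [0.0, 0.0, 0.1],
    "the": [0.0, 0.1, 0.0],
    "sky": [0.2, 0.8, 0.1],
}
EMBED_DIM = 3

def encode_question(question: str) -> Vector:
    """Average the embeddings of known words (a simple stand-in for a recurrent encoder)."""
    tokens = [w.strip("?.,!") for w in question.lower().split()]
    vectors = [WORD_EMBEDDINGS[t] for t in tokens if t in WORD_EMBEDDINGS]
    if not vectors:
        return [0.0] * EMBED_DIM
    return [sum(v[k] for v in vectors) / len(vectors) for k in range(EMBED_DIM)]

def joint_embedding(image_feature: Vector, question: str) -> Vector:
    """Concatenate the CNN image feature and the question encoding into one vector."""
    return image_feature + encode_question(question)

def classify(joint: Vector, weights: List[Vector], answers: List[str]) -> str:
    """Linear classifier over a predefined answer set: return the highest-scoring answer."""
    scores = [sum(w * x for w, x in zip(row, joint)) for row in weights]
    best = max(range(len(answers)), key=lambda i: scores[i])
    return answers[best]

image_feature = [0.0, 1.0]               # placeholder for a CNN feature vector
answers = ["blue", "green"]              # predefined answer vocabulary
weights = [[0.0, 1.0, 0.0, 1.0, 0.0],    # one row per answer, one column per joint dimension
           [1.0, 0.0, 0.0, 0.0, 1.0]]
print(classify(joint_embedding(image_feature, "What color is the sky?"), weights, answers))  # blue
```

Concatenation is only one way to combine the two modalities; the methods discussed below replace it with element-wise products, bilinear pooling, or residual fusion.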

Methods Malinowski et al. [52] propose an approach

named “Neural-Image-QA” with a Recurrent Neural

Network (RNN) implemented with Long Short-Term

Memory cells (LSTMs) (Figure 1). The motivation be-

hind RNNs is to handle inputs (questions) and outputs

(answers) of variable size. Image features are produced

by a CNN pre-trained for object recognition. Question

and image features are both fed together to a first “en-

coder” LSTM. It produces a feature vector of fixed-size

4

CNN

Joint embedding

Sentence

Top answers in a

predefined set

What's in the background ?

RNN

Classif.

A snow covered mountain range

mountains

sky

clouds

Variable-length sentence generation

RNN

CNN

What's in the background ?

RNN

Classif.

RNN

...

Region-specific features

Attention

weights

...

...

...

Attent.

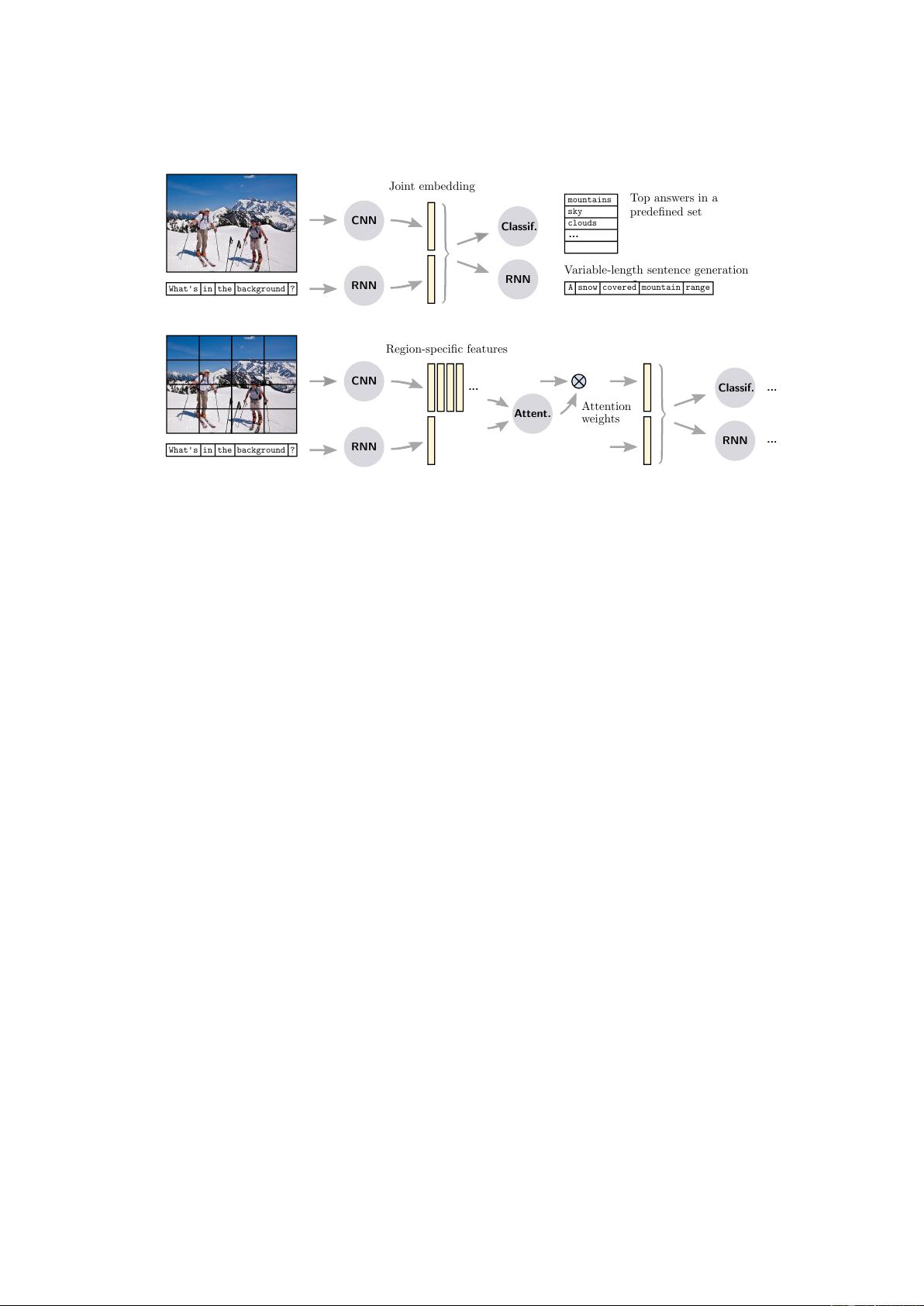

Figure 1: (Top) A common approach to VQA is to map both the input image and question to a common embedding space (Section 2.1). These

features are produced by deep convolutional and recurrent neural networks. They are combined in an output stage, which can take the form of a

classifier (e.g. a multilayer perceptron) to predict short answers from predefined set or a recurrent network (e.g. an LSTM) to produce variable-

length phrases. (Bottom) Attention mechanisms build up on this basic approach with a spatial selection of image features. Attention weights are

derived from both the image and the question and allow the output stage to focus on relevant parts of the image.

that is then passed to a second “decoder” LSTM. The

decoder produces variable-length answers, one word per

recurrent iteration. At each iteration, the last predicted

word is fed through the recurrent loop into the LSTM

until a special <END> symbol is predicted. Several vari-

ants of this approach were proposed. For example, the

“VIS+LSTM” of Ren et al. [63] directly feed the feature

vector produced by the encoder LSTM into a classifier

to produce single-word answers from a predefined vo-

cabulary. In other words, they formulate the answering

as a classification problem, whereas Malinowski et al.

[52] was treating it as a sequence generation procedure.
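The difference between the two output stages can be made concrete with a toy example. Here `decoder_step` is a hypothetical stand-in for one recurrent iteration of the decoder LSTM: given the previously predicted word, it returns a score per vocabulary word. Generation feeds the last predicted word back in until `<END>` is produced (greedy decoding, with a length cap as a safeguard), whereas classification picks one entry of a predefined answer set in a single step. The score tables are invented for illustration.

```python
from typing import Callable, Dict, List

START, END = "<START>", "<END>"

def greedy_generate(decoder_step: Callable[[str], Dict[str, float]], max_len: int = 10) -> List[str]:
    """Emit one word per recurrent iteration, feeding the last word back in, until <END>."""
    words: List[str] = []
    previous = START
    for _ in range(max_len):                  # length cap in case <END> is never predicted
        scores = decoder_step(previous)
        word = max(scores, key=scores.get)    # greedy choice of the highest-scoring word
        if word == END:
            break
        words.append(word)
        previous = word
    return words

def classify_answer(scores: Dict[str, float]) -> str:
    """Classification view: a single choice among a predefined set of answers."""
    return max(scores, key=scores.get)

# Hypothetical next-word scores standing in for an LSTM conditioned on image and question.
TOY_SCORES = {
    START: {"snow": 0.7, "sky": 0.2, END: 0.1},
    "snow": {"covered": 0.8, END: 0.2},
    "covered": {"mountains": 0.9, END: 0.1},
    "mountains": {END: 0.95, "sky": 0.05},
}

def toy_decoder_step(previous: str) -> Dict[str, float]:
    return TOY_SCORES.get(previous, {END: 1.0})

print(greedy_generate(toy_decoder_step))  # ['snow', 'covered', 'mountains']
print(classify_answer({"mountains": 0.6, "sky": 0.3, "clouds": 0.1}))  # mountains
```

A real decoder also carries its hidden state from one iteration to the next; in this toy version that state is folded into the lookup table.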

Ren et al. [63] propose other technical improvements with the “2-VIS+BLSTM” model. It uses two sources of image features as input, fed to the LSTM at the start and at the end of the question sentence. It also uses LSTMs that scan questions in both forward and backward directions. Those bidirectional LSTMs better capture relations between distant words in the question.
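The bidirectional idea can be sketched with a toy recurrence: one pass reads the question left to right, a second pass reads it right to left, and the two hidden states at each position are paired, so every position carries context from both ends of the sentence. A scalar tanh recurrence replaces the LSTM cells purely to keep the example short; the weights `W_IN` and `W_REC` and the 1-d word codes are hypothetical.

```python
import math
from typing import List, Tuple

W_IN, W_REC = 1.0, 0.5  # hypothetical input and recurrent weights (scalars for brevity)

def rnn_pass(inputs: List[float]) -> List[float]:
    """Simple recurrence h_t = tanh(W_IN * x_t + W_REC * h_{t-1}); returns every h_t."""
    h, states = 0.0, []
    for x in inputs:
        h = math.tanh(W_IN * x + W_REC * h)
        states.append(h)
    return states

def bidirectional_encode(inputs: List[float]) -> List[Tuple[float, float]]:
    """Pair the forward and backward hidden states at every position."""
    forward = rnn_pass(inputs)
    backward = rnn_pass(inputs[::-1])[::-1]  # run on the reversed sequence, then realign
    return list(zip(forward, backward))

states = bidirectional_encode([0.2, -0.1, 0.9])  # toy codes for a three-word question
print(len(states))  # 3
```

The backward state at the first position already depends on the last word, which a single forward pass could only provide at the end of the sequence.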

Gao et al. [22] propose a slightly different method named “Multimodal QA” (mQA). It employs LSTMs to encode the question and produce the answer, with two differences from [52]. First, whereas [52] used common shared weights between the encoder and decoder LSTMs, mQA learns distinct parameters and only shares the word embedding. This is motivated by potentially different properties (e.g. in terms of grammar) of questions and answers. Second, the CNN features used as image representations are not fed into the encoder prior to the question, but at every time step.
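The two differences can be illustrated with a scalar toy recurrence: the encoder and decoder have separate parameters but look words up in one shared embedding table, and the image term either enters the update at every step or is presented once before the first word. All names and values (`RecurrentParams`, `EMBEDDING`, `run_rnn`) are hypothetical; starting the decoder from the encoder's final state is an assumption made to keep the sketch short, not a description of mQA's exact wiring.

```python
import math
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class RecurrentParams:
    w_word: float   # weight on the current word embedding
    w_image: float  # weight on the image feature
    w_rec: float    # weight on the previous hidden state

# Shared by the question encoder and the answer decoder (hypothetical 1-d values).
EMBEDDING: Dict[str, float] = {"what": 0.3, "is": 0.1, "this": 0.2, "a": 0.05, "dog": 0.9}

# Distinct, separately learned parameters for encoder and decoder (placeholder values).
ENCODER = RecurrentParams(w_word=1.0, w_image=0.5, w_rec=0.8)
DECODER = RecurrentParams(w_word=0.7, w_image=0.3, w_rec=0.6)

def run_rnn(params: RecurrentParams, words: List[str], image: float,
            image_every_step: bool, h: float = 0.0) -> float:
    """Scalar tanh recurrence over the words; returns the final hidden state."""
    if not image_every_step:
        # Alternative: present the image once, before the first word.
        h = math.tanh(params.w_image * image + params.w_rec * h)
    for word in words:
        # mQA-style: the image feature enters the update at every time step.
        image_term = params.w_image * image if image_every_step else 0.0
        h = math.tanh(params.w_word * EMBEDDING[word] + image_term + params.w_rec * h)
    return h

question_state = run_rnn(ENCODER, ["what", "is", "this"], image=1.0, image_every_step=True)
answer_state = run_rnn(DECODER, ["a", "dog"], image=1.0, image_every_step=True, h=question_state)
print(question_state, answer_state)
```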

Noh et al. [58] tackle VQA by learning a CNN with a dynamic parameter layer (DPPnet) whose weights are determined adaptively based on the question. For the adaptive parameter prediction, they employ a separate parameter prediction network, which consists of gated recurrent units (GRUs, a variant of LSTMs) taking a question as input and producing candidate weights through a fully-connected layer at its output. This arrangement was shown to significantly improve answering accuracy compared to [52, 63]. One can note a similarity in spirit with the modular approaches of Section 2.3, in the sense that the question is used to tailor the main computations to each particular instance.
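The core of a dynamic parameter layer is that one layer's weight matrix is not a fixed learned parameter but the output of another network fed with the question. In the sketch below, a hypothetical linear parameter predictor maps a question encoding to the entries of a small weight matrix, which is then applied to the image feature. DPPnet itself encodes the question with GRUs and needs further engineering to keep the number of predicted weights manageable; none of that is reproduced here, and all sizes and values are placeholders.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]

def predict_parameters(question_code: Vector, predictor: Matrix, rows: int, cols: int) -> Matrix:
    """Hypothetical parameter-prediction network: a linear map from the question code
    to rows * cols weights, reshaped into a matrix."""
    flat = [sum(p * q for p, q in zip(pred_row, question_code)) for pred_row in predictor]
    if len(flat) != rows * cols:
        raise ValueError("predictor must produce rows * cols values")
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]

def dynamic_layer(image_feature: Vector, weights: Matrix) -> Vector:
    """Apply the question-specific weight matrix to the image feature."""
    return [sum(w * x for w, x in zip(row, image_feature)) for row in weights]

predictor = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]  # 2-d question code -> 2x2 weights
image = [3.0, 5.0]
for question_code in ([1.0, 0.0], [0.0, 1.0]):
    weights = predict_parameters(question_code, predictor, rows=2, cols=2)
    # Same image, different question: a different layer is applied.
    print(question_code, "->", dynamic_layer(image, weights))
```

With the first question code the predicted matrix is the identity, with the second it swaps the two image dimensions, showing how the question selects the computation performed on the image.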

Fukui et al. [21] propose a pooling method to form the joint embedding of visual and text features. They perform their “Multimodal Compact Bilinear pooling” (MCB) by randomly projecting the image and text features to a higher-dimensional space and then convolving the two vectors, which is computed efficiently as an element-wise multiplication in the Fourier domain. Kim et al. [38] use a multimodal residual learning framework (MRN) to learn the joint representation of images and language. Saito et al. [65] propose a “DualNet” which integrates two kinds of operations, namely element-wise summations and element-wise multiplications, to embed their visual and textual features. Similarly to [63, 58], they formulate the an-
