Cisco Data Center Infrastructure 2.5 Design Guide

OL-11565-01

Chapter 2 Data Center Multi-Tier Model Design

Data Center Core Layer

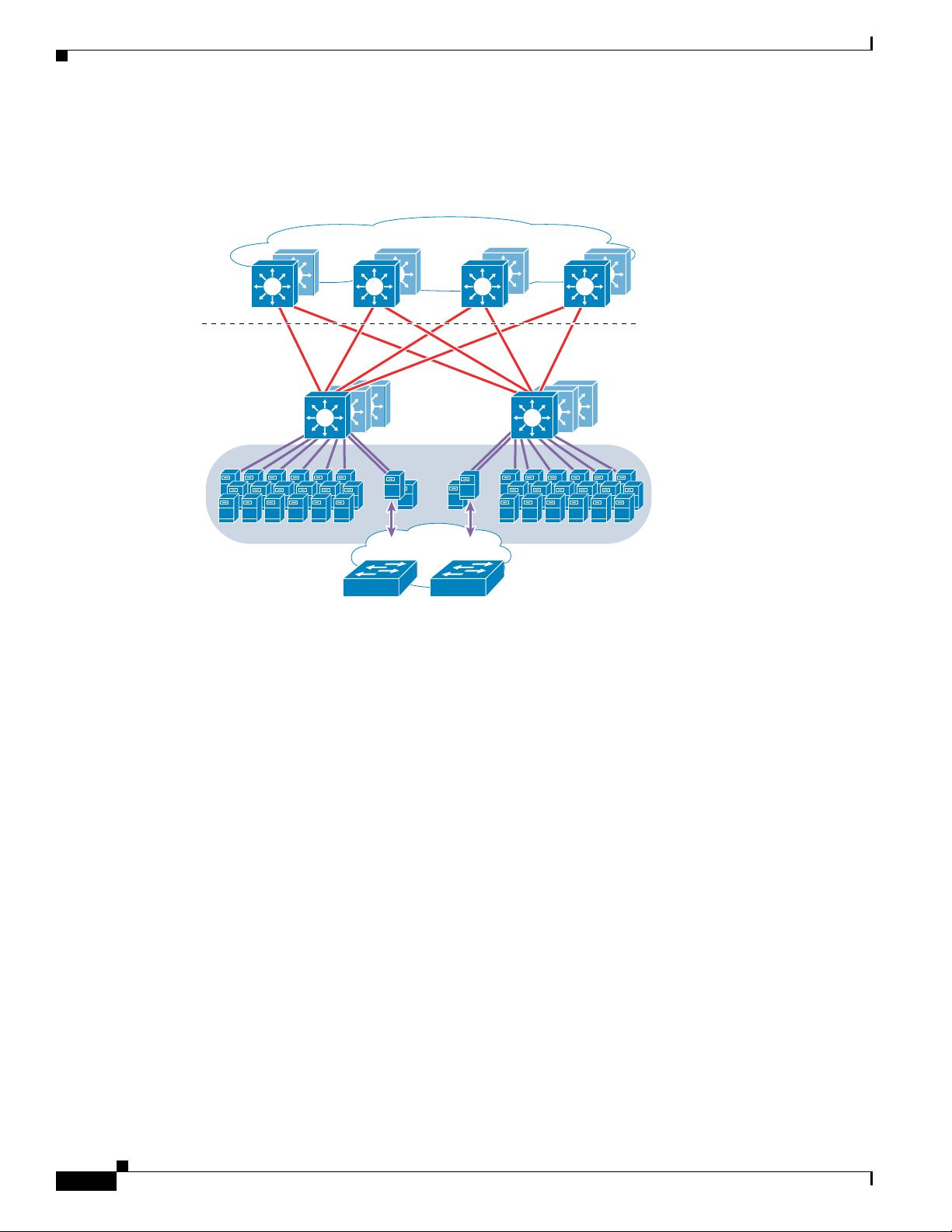

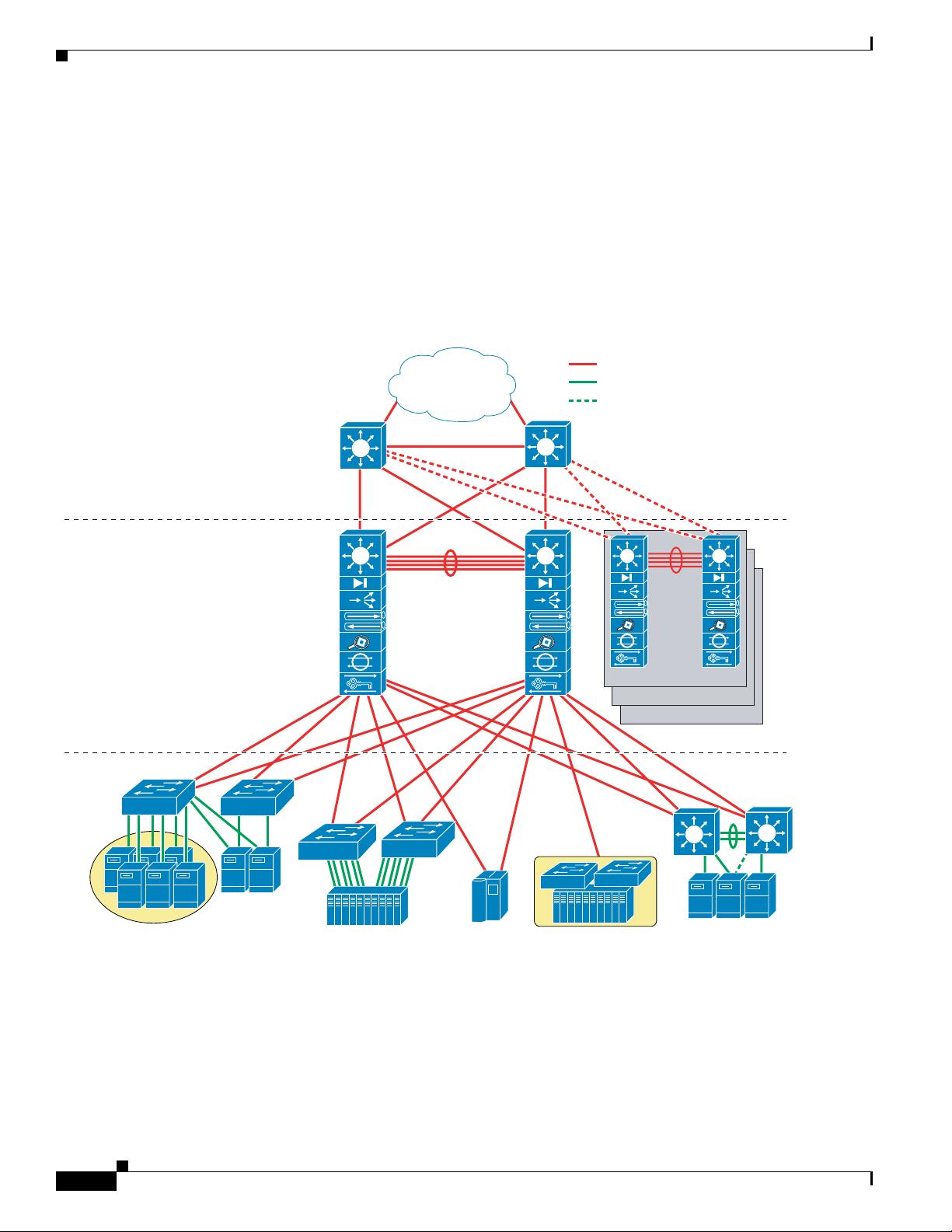

The data center core layer provides a fabric for high-speed packet switching between multiple aggregation modules. This layer serves as the gateway to the campus core, where other modules connect, including, for example, the extranet, WAN, and Internet edge. All links connecting the data center core are terminated at Layer 3 and typically use 10 GigE interfaces to support high throughput and performance and to meet oversubscription levels.
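
The oversubscription level referred to here can be estimated as the ratio of the bandwidth entering a layer from below to the bandwidth leaving it toward the core. The following is a minimal sketch of that arithmetic; the function name and the link counts in the example are illustrative assumptions, not values from this guide.

```python
def oversubscription_ratio(downlink_gbps, uplink_gbps):
    """Return total downstream bandwidth divided by total upstream bandwidth."""
    total_up = sum(uplink_gbps)
    if total_up <= 0:
        raise ValueError("at least one uplink with positive bandwidth is required")
    return sum(downlink_gbps) / total_up


# Hypothetical aggregation switch (assumption): eight 10 GigE links toward
# the access layer and two 10 GigE Layer 3 uplinks toward the core.
ratio = oversubscription_ratio([10] * 8, [10] * 2)
print(f"{ratio:.1f}:1")
```

A lower ratio means less contention on the uplinks. Adding core-facing 10 GigE links lowers the ratio at the cost of core port density.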

The data center core is distinct from the campus core layer, with a different purpose and responsibilities. A data center core is not necessarily required, but is recommended when multiple aggregation modules are used for scalability. When only a small number of aggregation modules are used, it might be appropriate to use the campus core for connecting the data center fabric.

When determining whether to implement a data center core, consider the following:

• Administrative domains and policies: Separate cores help isolate campus distribution layers and data center aggregation layers in terms of administration and policies, such as QoS, access lists, troubleshooting, and maintenance.

• 10 GigE port density: A single pair of core switches might not support the number of 10 GigE ports required to connect both the campus distribution layer switches and the data center aggregation layer switches.

• Future anticipation: The business impact of adding a separate data center core layer at a later date might make it worthwhile to implement it during the initial deployment.
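
The port density consideration above comes down to a simple count. The sketch below assumes that every campus distribution switch and every aggregation switch has the same number of links to each core switch, and that the two core switches are also linked to each other. The function name, parameters, and example numbers are hypothetical and serve only to illustrate the calculation.

```python
def ports_needed_per_core(distribution_switches, aggregation_switches,
                          links_per_switch=1, inter_core_links=1):
    """Count the 10 GigE ports one core switch needs in a shared core pair.

    Assumes each attached switch uses `links_per_switch` links to this
    core switch, plus `inter_core_links` links to the peer core switch.
    """
    attached = distribution_switches + aggregation_switches
    return attached * links_per_switch + inter_core_links


# Hypothetical example (assumption): 8 distribution switches, 8 aggregation
# switches, one link each, and two links between the core switches.
required = ports_needed_per_core(8, 8, links_per_switch=1, inter_core_links=2)
available = 16  # assumed number of free 10 GigE ports per core switch
print(required, "ports required;", "fits" if required <= available else "does not fit")
```

If the required count exceeds what one switch can offer, that is one indication that a separate data center core is worth considering.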

Recommended Platform and Modules

In a large data center, a single pair of data center core switches typically interconnects multiple aggregation modules using 10 GigE Layer 3 interfaces.

The recommended platform for the enterprise data center core layer is the Cisco Catalyst 6509 with the Sup720 processor module. The high switching rate, large switch fabric, and 10 GigE density make the Catalyst 6509 ideal for this layer. A large number of 10 GigE ports is required to support multiple aggregation modules. The Catalyst 6509 can support 10 GigE modules in all positions because each slot supports dual channels to the switch fabric (the Catalyst 6513 cannot support this). We do not recommend using non-fabric-attached (classic) modules in the core layer.
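
As a rough illustration of why slot capability matters for 10 GigE density, the sketch below multiplies the slots left after the supervisor by the ports on each module. It assumes a nine-slot chassis in which every non-supervisor slot can host a 10 GigE module. The ports-per-module figure is an assumed input, not a value stated in this guide.

```python
def max_10gige_ports(total_slots, supervisor_slots, ports_per_module):
    """Upper bound on 10 GigE ports when every free slot holds a 10 GigE module."""
    if supervisor_slots < 1 or supervisor_slots > total_slots:
        raise ValueError("supervisor_slots must be between 1 and total_slots")
    if ports_per_module < 0:
        raise ValueError("ports_per_module must not be negative")
    return (total_slots - supervisor_slots) * ports_per_module


# Assumptions: nine-slot chassis, one supervisor, four-port 10 GigE modules.
print(max_10gige_ports(total_slots=9, supervisor_slots=1, ports_per_module=4))
```

On a chassis where only some slots can host 10 GigE modules, `total_slots` would be replaced by the count of capable slots, which lowers the bound.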

Note: When all installed modules are fabric-attached CEF720 modules, the global switching mode is compact, which allows the system to operate at its highest performance level.
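
The condition in this note can be checked against a module inventory before deployment. The sketch below is a planning aid only: the `Module` record and the module labels are hypothetical, and the check encodes nothing beyond the rule stated above, namely that compact mode is expected when every module is fabric-attached.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    slot: int
    name: str              # free-form label (hypothetical)
    fabric_attached: bool  # False for classic (non-fabric-attached) modules


def classic_modules(modules):
    """Return the modules that are not fabric-attached."""
    return [m for m in modules if not m.fabric_attached]


def expect_compact_mode(modules):
    """True when every installed module is fabric-attached."""
    return not classic_modules(modules)


# Hypothetical inventory (assumption) with one classic module in slot 3.
inventory = [
    Module(1, "10 GigE module A", True),
    Module(2, "10 GigE module B", True),
    Module(3, "classic module", False),
]
print(expect_compact_mode(inventory), [m.slot for m in classic_modules(inventory)])
```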

The data center core is interconnected with both the campus core and the aggregation layer in a redundant fashion with Layer 3 10 GigE links. This provides a fully redundant architecture and prevents a single core node from being a single point of failure. It also permits the core nodes to be deployed with only a single supervisor module.
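
The redundancy claim can be illustrated by modeling the topology as a graph and checking that the aggregation layer still reaches the campus core when either data center core node fails. This is a minimal sketch; the node names and the exact set of links are assumptions chosen to represent a redundantly connected core pair, not a topology taken from this guide.

```python
from collections import deque


def reachable(links, start, goal, failed=frozenset()):
    """Breadth-first search over undirected links, skipping failed nodes."""
    adjacency = {}
    for a, b in links:
        if a in failed or b in failed:
            continue
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return True
        for neighbor in adjacency.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return False


# Assumed topology: one aggregation switch dual-homed to both data center
# core nodes, each of which connects to both campus core nodes.
links = [
    ("agg1", "dc-core1"), ("agg1", "dc-core2"),
    ("dc-core1", "campus-core1"), ("dc-core1", "campus-core2"),
    ("dc-core2", "campus-core1"), ("dc-core2", "campus-core2"),
    ("dc-core1", "dc-core2"),
]

survives_single_failure = all(
    reachable(links, "agg1", "campus-core1", failed={core})
    for core in ("dc-core1", "dc-core2")
)
print(survives_single_failure)
```

Because connectivity survives the loss of either core node, the loss of a lone supervisor in one node is covered by the other node, which is the reasoning behind deploying each core node with a single supervisor.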