3.1.1 Single thread optimization

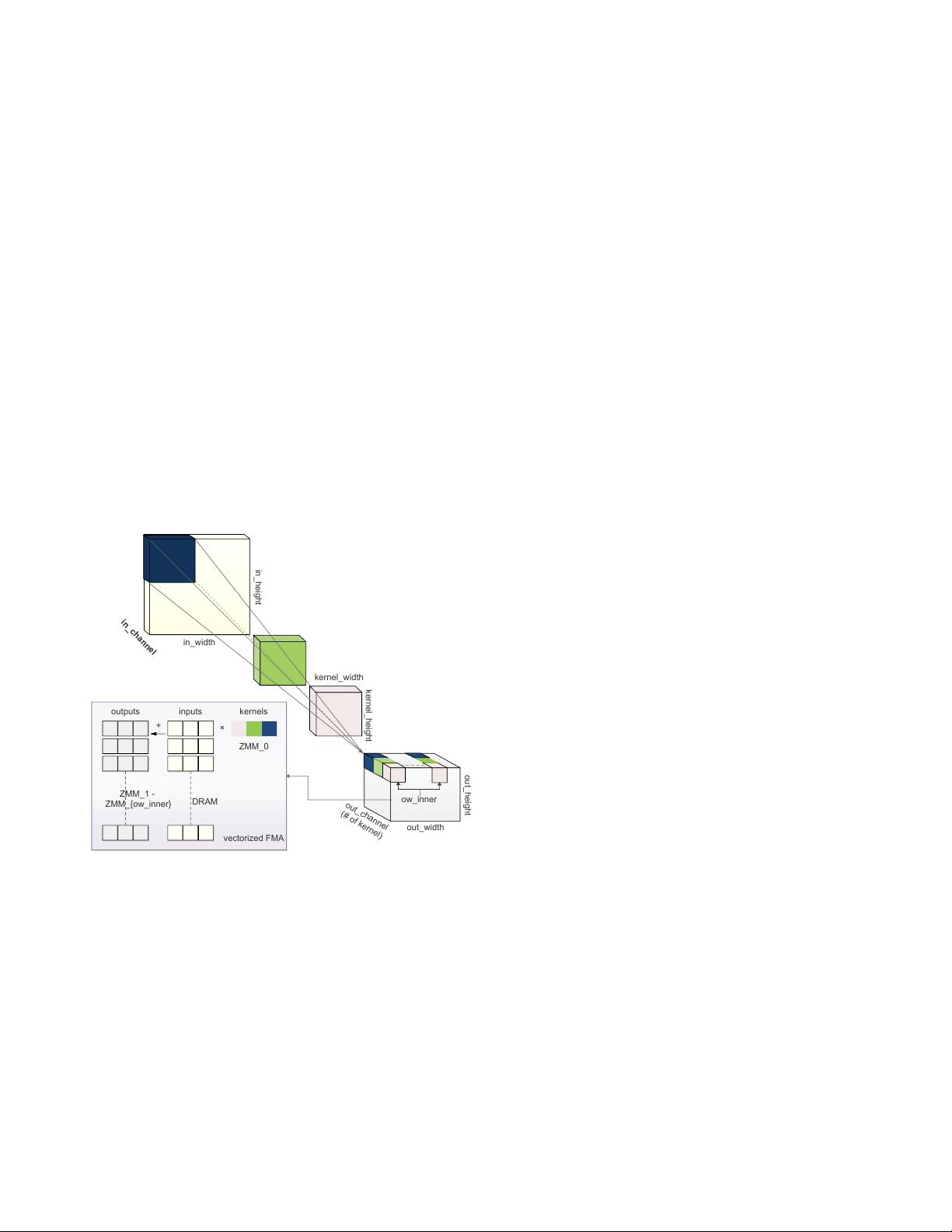

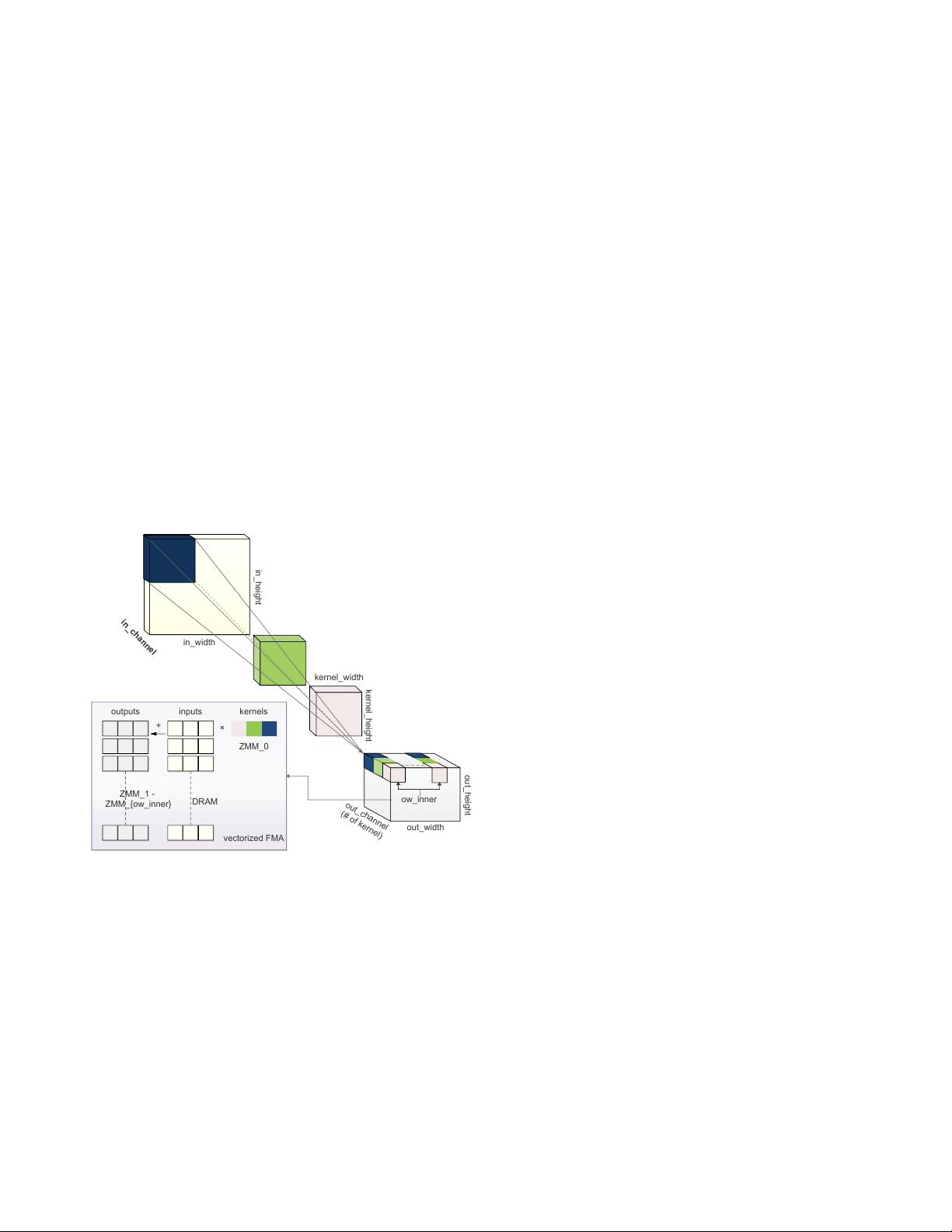

We started from optimizing CONV within one thread. CONV

is computationally-intensive which traverses its operands mul-

tiple times for computation. Therefore, it is critical to man-

age the layout of the data fed to the CONV to reduce the

memory access overhead. We first revisit the computation

of CONV to illustrate our memory management scheme. A

2D CONV in CNN takes a 3D feature map (height

×

width

×

channels) and a number of 3D convolution kernels (nor-

mally smaller height and width but the same number of chan-

nels) to convolve to output another 3D tensor. The calculation

is illustrated in Figure 1, which implies loops of 6 dimen-

sions: in_channel, kernel_height, kernel_width, out_channel,

out_height and out_width. Each kernel slides over the input

feature map along the height and width dimensions, does

element-wise product and accumulates the values to produce

the corresponding element in the output feature map, which

can naturally leverage FMA. The number of kernels forms

out_channel. Note that three of the dimensions (in_channel,

kernel_height and kernel_width) are reduction axes that can-

not be embarrassingly parallelized.

in_height

in_width

kernel_width

kernel_height

out_width

out_height

out_channel

(# of kernel)

in_channel

ow_inner

inputs kernels

ZMM_0

ZMM_1 -

ZMM_{ow_inner}

+

×

DRAM

outputs

vectorized FMA

Figure 1: The illustration of CONV and the efficient imple-

mentation in AVX-512 instructions as an example. There

are three kernels depicted in dark blue, green and light pink.

To do efficient FMA, multiple kernel values are packed into

one

ZMM

register and reused to multiply with different input

values and accumulate to output values in different

ZMM

registers.

We use the conventional notation NCHW to describe the

default data layout, which means the input and output are 4-D

tensors with batch size N, number of channels C, feature map

height H, feature map width W, where N is the outermost and

W is the innermost dimension of the data. The related layout

of kernel is KCRS, in which K, C, R, S stand for the output

channel, input channel, kernel height and kernel width.

Following the common practice [25, 42], we organized

the feature map layout as NCHW[x]c for better memory ac-

cess patterns, in which c is a split sub-dimension of chan-

nel C in super-dimension, and the number x indicates the

split size of the sub-dimension (i.e.

#channels = sizeo f (C)×

sizeo f (c)

, where

sizeo f (c) = x

). The output has the same

layout NCHW[y]c as the input, while the split factor can be

different. Correspondingly, the convolution kernel is orga-

nized in KCRS[x]c[y]k, in which c with split size

x

and k

with split size

y

are the sub-dimensions of input channel C

and output channel K, respectively. It is worth noting that a

significant amount of data transformation overhead needs to

be paid to get the desired layout.

In addition to the dimension reordering, for better uti-

lizing the latest vectorization instructions (e.g. AVX-512,

AVX2, NEON, etc.), we split out_width to ow_outer and

ow_inner using a factor reg_n and move the loop of ow_inner

inside for register blocking. For example, on a CPU fea-

tured AVX-512, we can utilize its 32 512-bit width registers

ZMM

0

− ZMM

31

[26] as follows. We maintain the loop hier-

archy to use one ZMM register to store the kernel data while

others storing the feature map. The kernel values stored in

one

ZMM

register (up to 512 bits, a.k.a, 16 output channels

in float32) are used to multiply with a number of input feature

map values continuously stored in the DRAM via AVX-512F

instructions [26], whose results are then accumulated to other

ZMM

registers storing the output values. Figure 1 illustrates

this idea. For other vectorized instructions, the same idea ap-

plies but the split factor of out_width (i.e. reg_n) may change.

Algorithm 1 summarizes our optimization of CONV in

single thread, which essentially is about 1) dimension order-

ing for friendly memory locality and 2) register blocking

for good vectorization instruction utilization, as in previous

works. However, unlike others, we made it a template in high-

level language (see supplementary material), in which the

block size (

x

,

y

), the number of utilized registers (reg_n), and

the loop-unroll strategy (

unroll_ker

) are easily configurable.

Consequently, the computing logic can be adjusted according

to different CPU architectures (cache size, registered vector

width, etc.) as well as different workloads (feature map size,

convolution kernel size, etc.). This is flexible and enables

graph-level optimization we will discuss later.

3.1.2 Thread-level parallelization

It is a common practice to partition CONV into disjoint pieces

to parallelize among multiple cores of a modern CPU. Kernel

libraries like Intel MKL-DNN usually uses off-the-shelf multi-

threading solution such as OpenMP. However, we observe

that the resulting scalability of the off-the-shelf parallelization

solution is not desirable (Section 4.2.4).

Therefore, we implemented a customized thread pool to

efficiently process this kind of embarrassing parallelization.

4

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功