
Dynamic Instance Normalization: An Innovative Method for Arbitrary Style Transfer

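"Dynamic Instance Normalization for Arbitrary Style Transfer" is a computer vision research paper published at AAAI 2020. It addresses image style transfer, the task of rendering a content image in the artistic style of another image, and aims to make arbitrary style transfer more flexible and efficient. Prior normalization-based methods stylize through affine transformations whose parameters are computed in a pre-defined, manually specified way. As a result, they rely on costly encoders shared between style and content encoding, which makes them cumbersome to deploy in resource-constrained settings such as mobile devices.

The paper proposes a new, generalized normalization module called Dynamic Instance Normalization (DIN). DIN combines instance normalization with a dynamic convolution, so that the style image is encoded into learnable convolution parameters that are then applied to the content features. The transformation is therefore no longer fixed in advance but adapts to each style image, which reduces the reliance on hand-designed parameters and improves flexibility and efficiency.

With DIN, a style transfer model can handle a wide range of styles while keeping the content encoder lightweight, which matters most in resource-limited environments such as mobile devices. The module may also motivate follow-up work on further optimizing model architectures, reducing computational cost, and achieving smoother, real-time style transfer in practical applications.

The paper's core contributions are the new normalization strategy and the dynamic parameter generation mechanism. Beyond improving artistic style transfer, these may open up possibilities in related areas such as image editing and real-time rendering for virtual and augmented reality, and they improve the scalability and practicality of AI-based style transfer in real applications.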


Dynamic Instance Normalization for Arbitrary Style Transfer

Yongcheng Jing¹, Xiao Liu², Yukang Ding², Xinchao Wang³, Errui Ding², Mingli Song¹∗, Shilei Wen²

¹Zhejiang University, ²Department of Computer Vision Technology (VIS), Baidu Inc., ³Stevens Institute of Technology

{ycjing, brooksong}@zju.edu.cn, {liuxiao12, dingyukang, dingerrui, wenshilei}@baidu.com, xinchao.wang@stevens.edu

Abstract

Prior normalization methods rely on affine transformations to produce arbitrary image style transfers, of which the parameters are computed in a pre-defined way. Such manually-defined nature eventually results in the high-cost and shared encoders for both style and content encoding, making style transfer systems cumbersome to be deployed in resource-constrained environments like on the mobile-terminal side. In this paper, we propose a new and generalized normalization module, termed as Dynamic Instance Normalization (DIN), that allows for flexible and more efficient arbitrary style transfers. Comprising an instance normalization and a dynamic convolution, DIN encodes a style image into learnable convolution parameters, upon which the content image is stylized. Unlike conventional methods that use shared complex encoders to encode content and style, the proposed DIN introduces a sophisticated style encoder, yet comes with a compact and lightweight content encoder for fast inference. Experimental results demonstrate that the proposed approach yields very encouraging results on challenging style patterns and, to our best knowledge, for the first time enables an arbitrary style transfer using MobileNet-based lightweight architecture, leading to a reduction factor of more than twenty in computational cost as compared to existing approaches. Furthermore, the proposed DIN provides flexible support for state-of-the-art convolutional operations, and thus triggers novel functionalities, such as uniform-stroke placement for non-natural images and automatic spatial-stroke control.
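To make the contrast in the abstract concrete, the following is a minimal, dependency-free sketch rather than the authors' implementation. It assumes feature maps are stored as a list of channels with flattened spatial positions, uses an AdaIN-style layer as the example of a "pre-defined" affine transformation, and stands in a hypothetical linear generator (`weight_net`, `bias_net`) for the style encoder that predicts the dynamic convolution parameters. All function names are illustrative.

```python
import math
from typing import List, Tuple

Feature = List[List[float]]  # C channels, each a flat list of H*W activations


def channel_stats(x: Feature, eps: float = 1e-5) -> Tuple[List[float], List[float]]:
    """Per-channel mean and eps-stabilised standard deviation."""
    means = [sum(ch) / len(ch) for ch in x]
    stds = [math.sqrt(sum((v - m) ** 2 for v in ch) / len(ch) + eps)
            for ch, m in zip(x, means)]
    return means, stds


def instance_norm(x: Feature) -> Feature:
    """Normalise every channel over its spatial positions."""
    means, stds = channel_stats(x)
    return [[(v - m) / s for v in ch] for ch, m, s in zip(x, means, stds)]


def adain(content: Feature, style: Feature) -> Feature:
    """Pre-defined affine parameters: scale and shift are the style std and mean."""
    mu_s, sigma_s = channel_stats(style)
    return [[s * v + m for v in ch]
            for ch, m, s in zip(instance_norm(content), mu_s, sigma_s)]


def dynamic_conv1x1(x: Feature, kernel: List[List[float]],
                    bias: List[float]) -> Feature:
    """Apply a 1x1 convolution (kernel is C_out x C_in) at every position."""
    positions = range(len(x[0]))
    return [[sum(w * x[i][p] for i, w in enumerate(row)) + b for p in positions]
            for row, b in zip(kernel, bias)]


def linear(rows: List[List[float]], vec: List[float]) -> List[float]:
    """Matrix-vector product used by the hypothetical parameter generator."""
    return [sum(r * v for r, v in zip(row, vec)) for row in rows]


def din(content: Feature, style: Feature,
        weight_net: List[List[float]], bias_net: List[List[float]]) -> Feature:
    """DIN-style layer: instance norm followed by a style-predicted convolution.

    Assumption: the style code is the per-channel style mean, and the
    generator is a single linear map; a real style encoder would be learned.
    """
    c = len(content)
    descriptor, _ = channel_stats(style)       # pooled style code
    flat = linear(weight_net, descriptor)      # C*C predicted kernel entries
    kernel = [flat[o * c:(o + 1) * c] for o in range(c)]
    bias = linear(bias_net, descriptor)        # C predicted biases
    return dynamic_conv1x1(instance_norm(content), kernel, bias)
```

In this sketch, calling `dynamic_conv1x1` with a diagonal kernel holding the style standard deviations and a bias holding the style means gives the same result as `adain`, which is one way to read the abstract's description of DIN as a generalized normalization module: the predicted kernel is free to mix channels instead of being restricted to a fixed per-channel scale and shift.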

Introduction

Image stylization has been a long-standing research topic.

It has been studied in the domain of computer graphics, or

more specifically, the area of Non-Photorealistic Render-

ing (NPR) (Gooch and Gooch 2001; Rosin and Collomosse

2012). In the field of computer vision, image stylization is

studied as a generalized problem of texture synthesis (Efros

and Leung 1999). Built upon the recent progress in visual

texture modelling (Gatys, Ecker, and Bethge 2015) and im-

age reconstruction (Mahendran and Vedaldi 2015), Gatys et

al. (Gatys, Ecker, and Bethge 2016) propose to exploit Con-

∗

Corresponding author

Copyright

c

2020, Association for the Advancement of Artificial

Intelligence (www.aaai.org). All rights reserved.

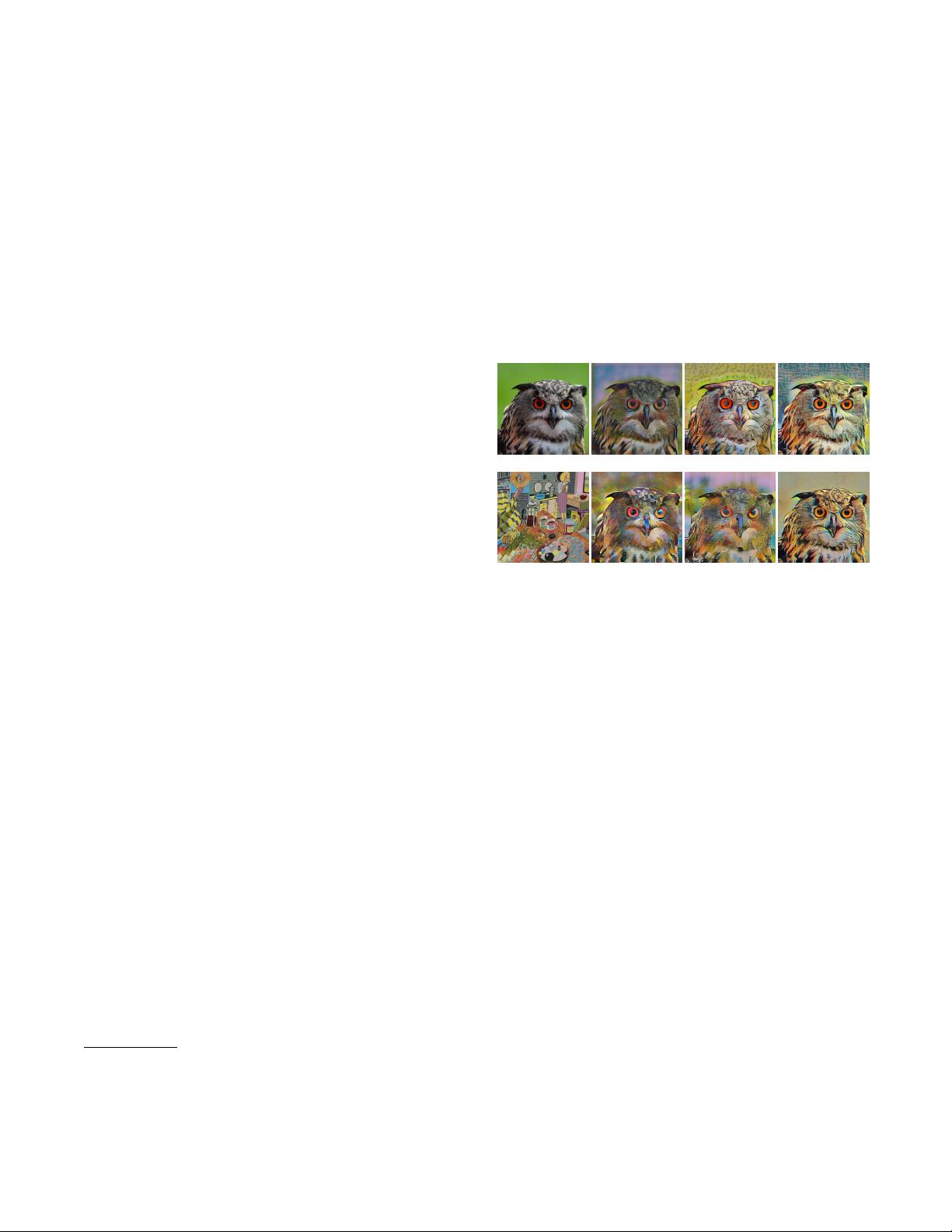

(a) Content

(b) Chen et al. (c) Huang et al.

(d) Ours (VGG)

(e) Style

(f) Li et al. (g) Sheng et al.

(h) Ours (MobileNet)

Figure 1: Existing ASPM methods either barely transfer

style to the target (Chen et al., Huang et al.), or produce

distorted style patterns (Li et al., Sheng et al.) while rely-

ing on high-cost encoders. By contrast, the proposed DIN

achieves superior performance using the same architecture

(Ours (VGG)), and for the first time endows a much smaller

lightweight network to transfer arbitrary styles (Ours (Mo-

bileNet)).

volutional Neural Networks (CNNs) to render a content im-

age in different styles, pioneering a new field called Neural

Style Transfer (NST) (Jing et al. 2019).

The inspiring work of Gatys et al. is, however, built upon an iterative image optimization in the pixel space, which turns out to be computationally expensive due to the online optimization. To address this efficiency issue, model-optimization-based NST algorithms are proposed, which optimize feed-forward models in an offline training manner. The earliest model-optimization-based NST algorithms, namely Per-Style-Per-Model (PSPM), train separate style-specific models for each particular style, and are therefore burdensome to be adopted for real-world applications (Johnson, Alahi, and Fei-Fei 2016; Ulyanov et al. 2016; Li and Wand 2016). To address this issue, Multiple-Style-Per-Model (MSPM) algorithms are proposed by incorporating multiple styles into one single model (Zhang and Dana 2017; Chen et al. 2017; Li et al. 2017b; Dumoulin, Shlens, and Kudlur 2017). Unfortunately, MSPM also suffers from

arXiv:1911.06953v1 [cs.CV] 16 Nov 2019
