HWU 2011 06-ch01-003-014-9780123859631 2011/9/8 19:06 Page 6 #4

6 CHAPTER 1 Large-Scale GPU Search

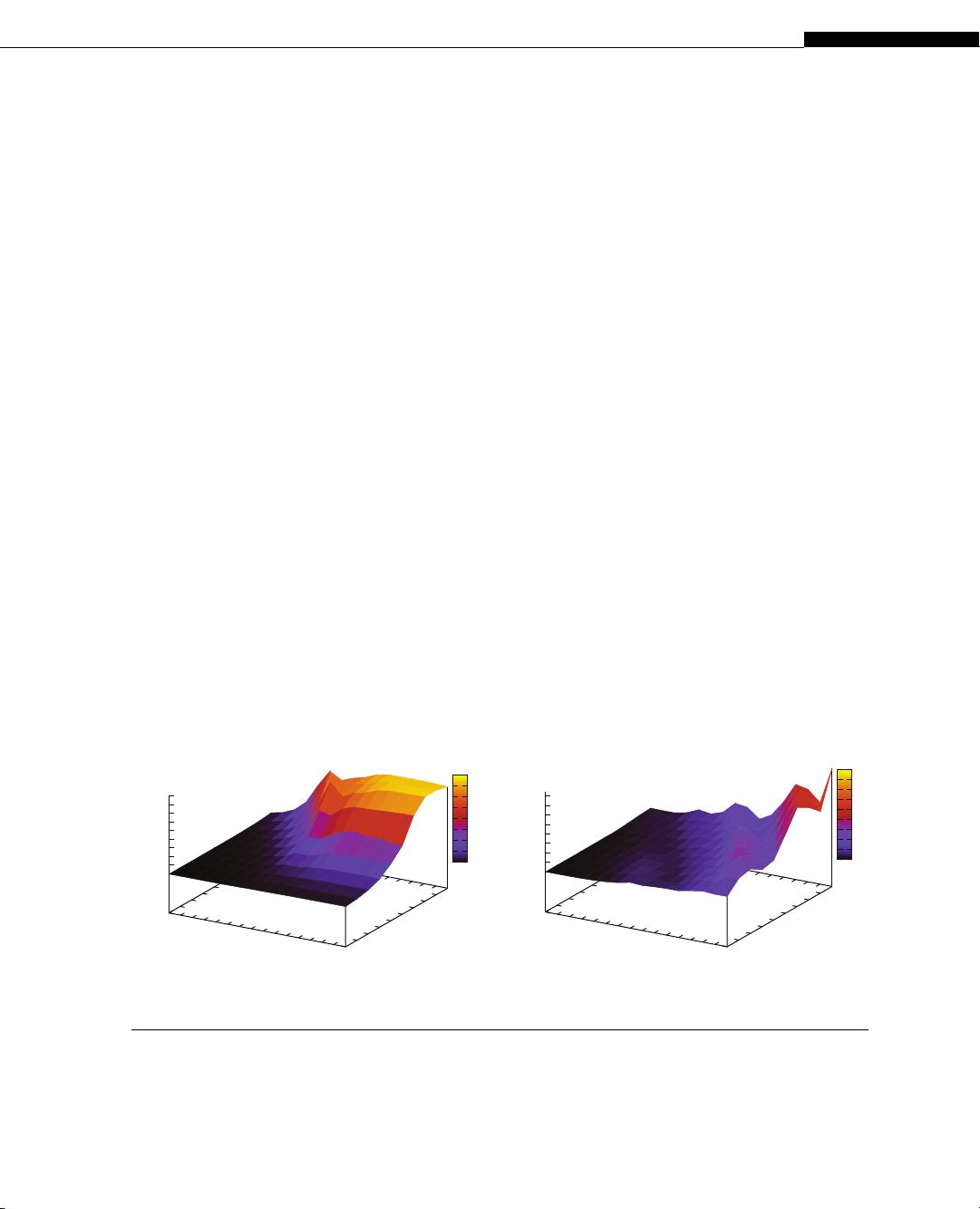

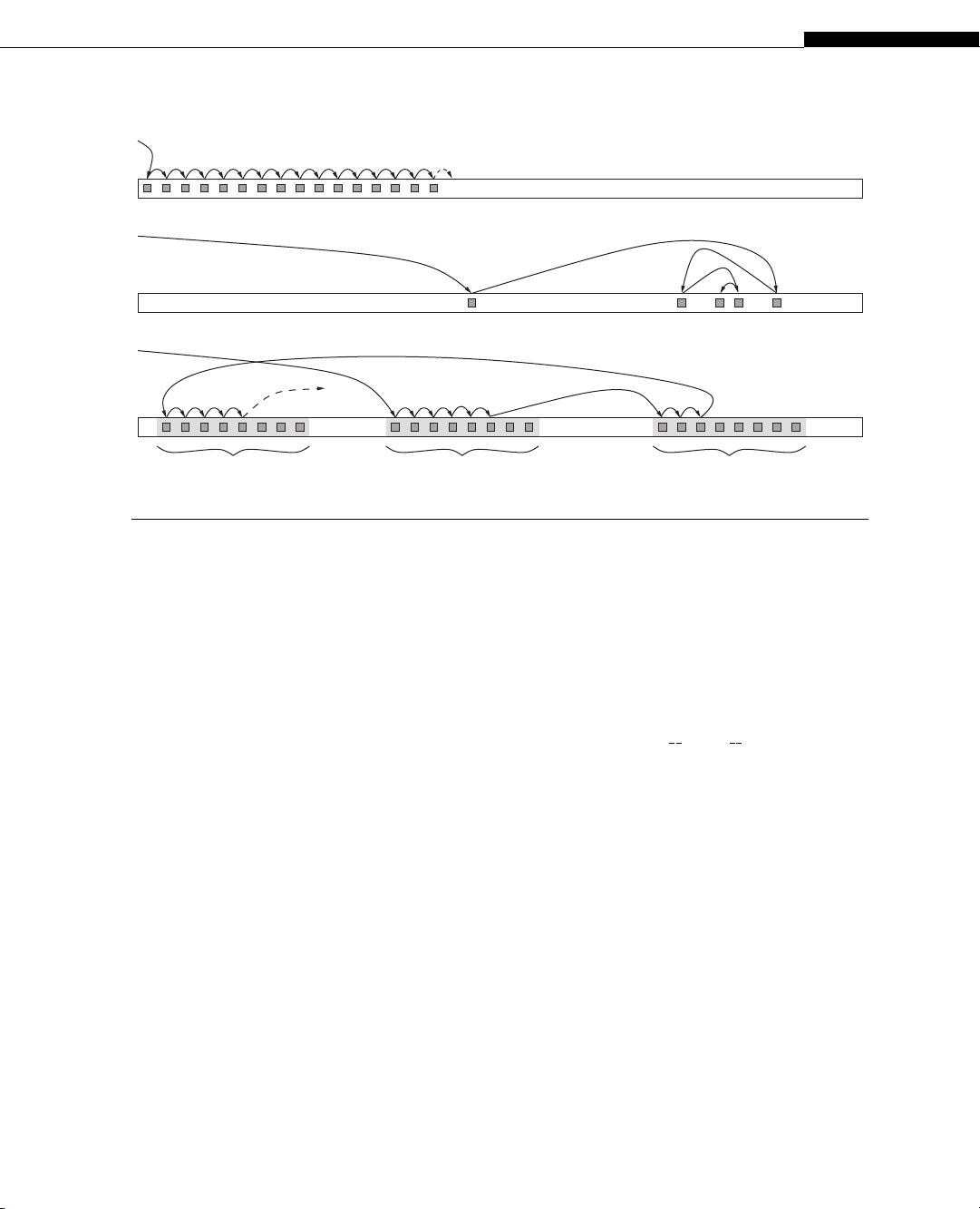

Returning to Little’s Law, we notice that it assumes that the full bandwidth be utilized, meaning,

that all 64 bytes transferred with each memory block are useful bytes actually requested by an applica-

tion, and not bytes that are transferred just because they belong to the same memory block. When any

amount of data is accessed, with a minimum of one single byte, the entire 64-byte block that the data

belongs to is actually transferred. To make sure that all bytes transferred are useful, it is necessary that

accesses are coalesced, i.e. requests from different threads are presented to the memory management

unit (MMU) in such a way that they can be packed into accesses that will use an entire 64-byte block. If,

for example, the MMU can only find 10 threads that read 10 4-byte words from the same block, 40 bytes

will actually be used and 24 will be discarded. It is clear that coalescing is extremely important to

achieve high memory utilization, and that it is much easier when the access pattern is regular and con-

tiguous. The experimental results in Figure 1.1b confirm that random-access memory bandwidth is sig-

nificantly lower than in the coalesced case. A more comprehensive explanation of memory architecture,

coalescing, and optimization techniques can be found in Nvidia’s CUDA Programming Guide [7].

1.3 SEARCHING LARGE DATA SETS

The problem of searching is not theoretically difficult in itself, but quickly searching a large data set

offers an exquisite example of how finding the right combination of algorithms and data structures for

a specific architecture can dramatically improve performance.

1.3.1 Data Structures

Searching for specific values in unsorted data leaves no other choice than scanning the entire data set.

The time to search an unsorted data set in memory is determined by sequential memory bandwidth,

because the access pattern is nothing else but sequential reads. Performance is identical to that of coa-

lesced reads (Figure 1.1a), which peak at about 150 GB/s on the GPU when using many thread blocks

and many threads per block. On the other hand, searching sorted data or indexes can be implemented

much more efficiently.

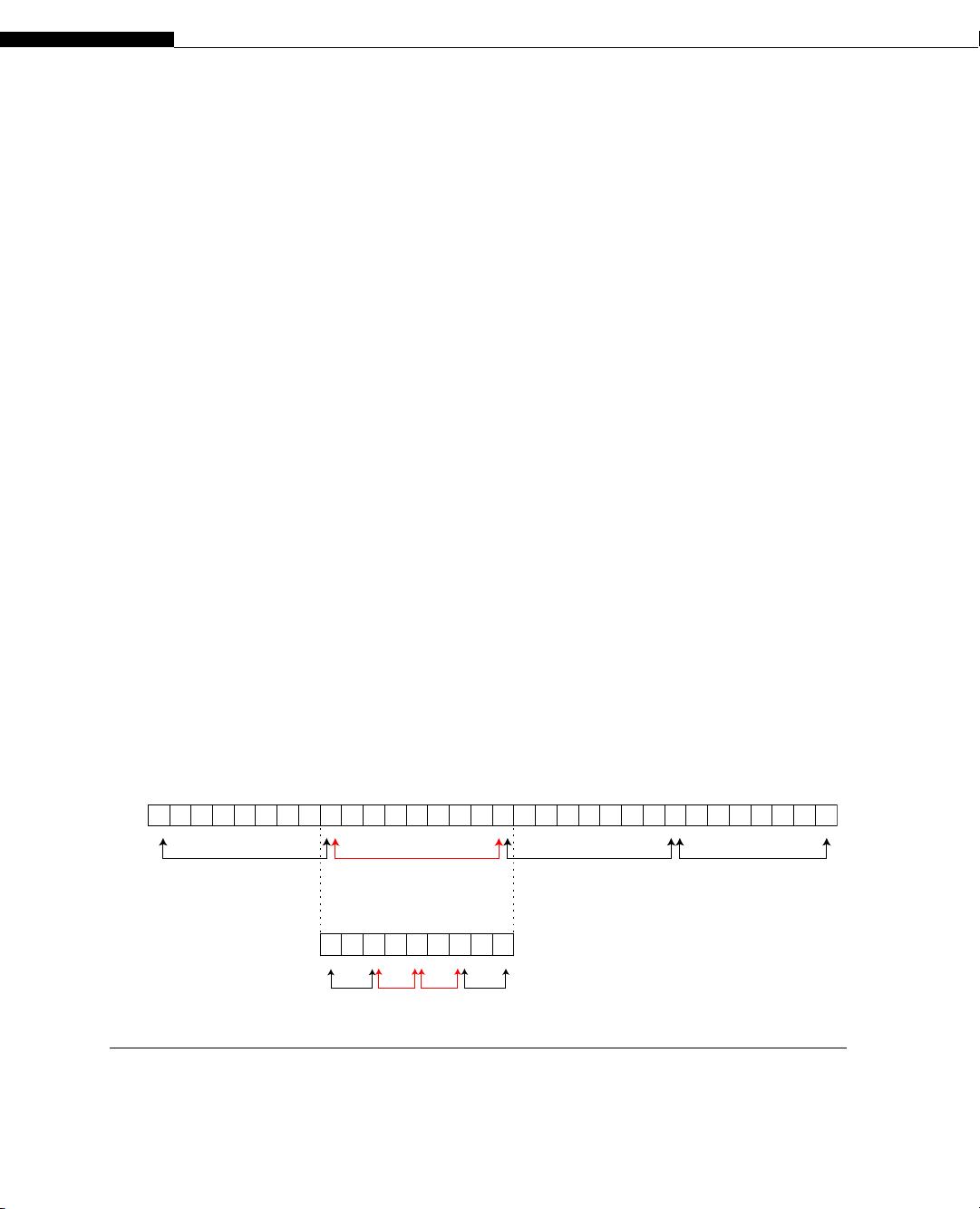

Databases and many other applications use indexes, stored as sorted lists or B-trees, to acceler-

ate searches. For example, searching a sorted list using binary search

1

requires O(log

2

(n)) memory

accesses as opposed to O(n) using linear search, for a data set of size n. However, the data access

pattern of binary search is not amenable to caching and prefetching, as each iteration’s mem-

ory access is data dependent and distant (Figure 1.2). Although each access incurs full memory

latency this approach is orders of magnitude faster on sorted data. For example, assuming a mem-

ory latency of 500 clock cycles, searching a 512 MB data set of 32-bit integers in the worst case takes

log

2

(128M) ∗ 500cc = 13, 500cc, as opposed to millions when scanning the entire data set. B-trees

2

1

Binary search compares the search key with the (pivot) element in the middle of a sorted data set. Based on whether the

search key is larger than, smaller than, or equal to the pivot element, the algorithm then searches the upper or lower half of

the data set, or returns the current location if the search key was found.

2

Searching a B-tree can be implemented comparing the search key with the elements in a node in ascending order, starting

at the root. When an element larger than the search key is found, it takes the corresponding branch to the child node, which

only contains elements in the same range as the search key, smaller than the current element and larger than the previous

one. When an element equals the search key its position is returned.

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功