Entropy 2017, 19, 424 6 of 33



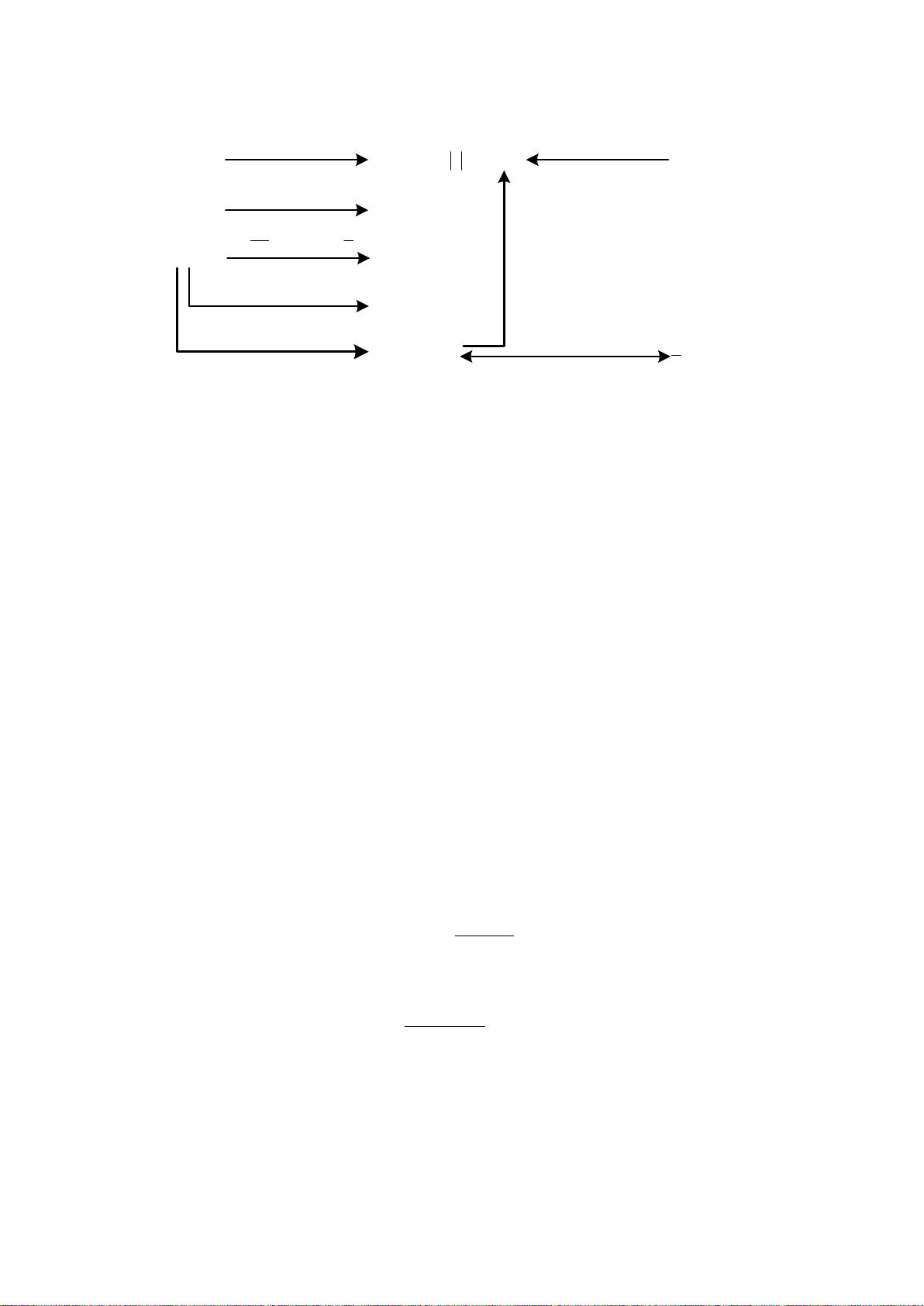

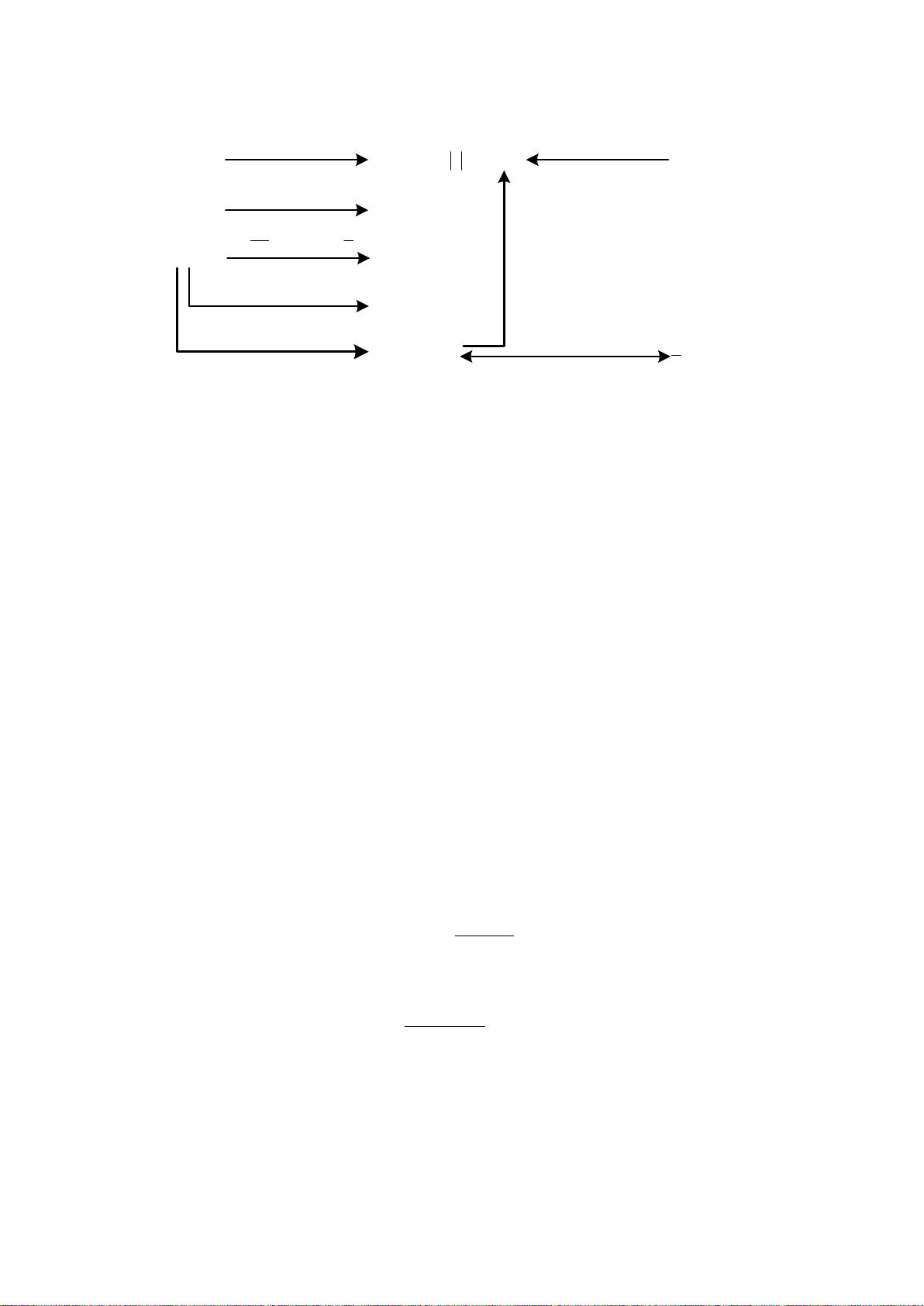

Figure 1. Relationships among several probability distributions.



2.2. Conjugate Priors

Let $x$ be a random vector with parameter vector $z$, and let $X = \{x_1, x_2, \ldots, x_N\}$ be a collection of $N$ observed samples. Any latent variables are also absorbed into $z$. For a given $z$, the conditional probability density/mass function of $x$ is denoted by $p(x|z)$. Thus, we can construct the likelihood function:

$$L(z|X) = p(X|z) = \prod_{i=1}^{N} p(x_i|z). \tag{6}$$

In variational Bayesian methods, the parameter vector $z$ is usually assumed to be stochastic. Here, the prior distribution of $z$ is expressed as $p(z)$.

To simplify Bayesian analysis, we hope that the posterior distribution $p(z|X)$ has the same functional form as the prior $p(z)$. In this case, the prior and the posterior are called conjugate distributions, and the prior is also called a conjugate prior for the likelihood function $L(z|X)$ [54,66]. In the following, we provide the three most commonly used examples of conjugate priors.

Example 3. Assume that the random variable $x$ obeys the Bernoulli distribution with parameter $\mu$. We have the likelihood function for $x$:

$$L(\mu|X) = \prod_{i=1}^{N} \mathrm{Bern}(x_i|\mu) = \prod_{i=1}^{N} \mu^{x_i}(1-\mu)^{1-x_i} = \mu^{\sum_{i=1}^{N} x_i}(1-\mu)^{N-\sum_{i=1}^{N} x_i} \tag{7}$$

where the observations $x_i \in \{0, 1\}$. In consideration of the form of $L(\mu|X)$, we stipulate the prior distribution of $\mu$ as the Beta distribution with parameters $a$ and $b$:

$$p(\mu) = \mathrm{Beta}(\mu|a, b) = \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\,\mu^{a-1}(1-\mu)^{b-1}. \tag{8}$$
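As a quick numerical check of Equations (7) and (8), the following minimal sketch evaluates the Bernoulli likelihood both as a product over samples and through the count $\sum_i x_i$, and evaluates the Beta density. The function names, the sample data, and the parameter values are illustrative assumptions, not taken from the paper; the density helper assumes $0 < \mu < 1$.

```python
import math

def bernoulli_likelihood_product(xs, mu):
    """Likelihood as a product of per-sample Bernoulli terms (middle form of Eq. (7))."""
    like = 1.0
    for x in xs:
        like *= mu ** x * (1.0 - mu) ** (1 - x)
    return like

def bernoulli_likelihood_counts(xs, mu):
    """Same likelihood written with s = sum(x_i) (last form of Eq. (7))."""
    s = sum(xs)
    n = len(xs)
    return mu ** s * (1.0 - mu) ** (n - s)

def beta_pdf(mu, a, b):
    """Beta density of Eq. (8); log-gamma keeps the normalizer stable. Assumes 0 < mu < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1.0) * math.log(mu) + (b - 1.0) * math.log(1.0 - mu))

# Hypothetical data and parameters, chosen only for illustration.
xs = [1, 0, 1, 1, 0, 1]
mu = 0.3
print(bernoulli_likelihood_product(xs, mu), bernoulli_likelihood_counts(xs, mu))
print(beta_pdf(mu, 2.0, 3.0))
```

The two likelihood functions agree up to floating-point rounding, which is why only the count of ones and the sample size $N$ are needed to evaluate $L(\mu|X)$.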

We then obtain the posterior distribution of $\mu$ via Bayes' rule:

$$p(\mu|X) = \frac{p(\mu)\,p(X|\mu)}{p(X)} \propto p(\mu)\,p(X|\mu). \tag{9}$$
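Equation (9) can also be applied numerically without any closed form. The sketch below multiplies the Beta prior by the Bernoulli likelihood on a grid of $\mu$ values and normalizes by a midpoint-rule estimate of $p(X)$. The grid size, function names, and data are assumptions made for illustration, and the approach is only a rough approximation (it is least accurate when $a < 1$ or $b < 1$, where the prior is unbounded near the endpoints).

```python
import math

def grid_posterior(xs, a, b, num_points=2001):
    """Approximate p(mu | X) on a grid by normalizing prior * likelihood, as in Eq. (9)."""
    s, n = sum(xs), len(xs)
    step = 1.0 / num_points
    # Midpoints of equal-width cells; they avoid log(0) at mu = 0 and mu = 1.
    grid = [(k + 0.5) * step for k in range(num_points)]
    log_unnorm = [
        (a - 1.0) * math.log(m) + (b - 1.0) * math.log(1.0 - m)  # log prior, constant dropped
        + s * math.log(m) + (n - s) * math.log(1.0 - m)          # log likelihood, Eq. (7)
        for m in grid
    ]
    shift = max(log_unnorm)  # subtract the maximum before exponentiating to avoid underflow
    unnorm = [math.exp(v - shift) for v in log_unnorm]
    evidence = sum(unnorm) * step  # plays the role of p(X), up to the dropped constants
    density = [u / evidence for u in unnorm]
    return grid, density

def grid_mean(grid, density):
    """Posterior mean of mu, estimated with the same midpoint rule."""
    step = grid[1] - grid[0]
    return sum(m * d for m, d in zip(grid, density)) * step

# Hypothetical data and prior parameters, chosen only for illustration.
grid, density = grid_posterior([1, 0, 1, 1, 0, 1], a=2.0, b=3.0)
print(grid_mean(grid, density))
```

Because the normalizing constant is computed from the grid itself, any factor that does not depend on $\mu$ can be dropped from the prior and the likelihood, which is exactly what the proportionality sign in Equation (9) expresses.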

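Because

$$\begin{aligned}
p(\mu)\,p(X|\mu) &= p(\mu)\,L(\mu|X) \\
&\propto \mu^{a-1}(1-\mu)^{b-1}\,\mu^{\sum_{i=1}^{N} x_i}(1-\mu)^{N-\sum_{i=1}^{N} x_i} \\
&\propto \mu^{a+\sum_{i=1}^{N} x_i-1}(1-\mu)^{b+N-\sum_{i=1}^{N} x_i-1}
\end{aligned} \tag{10}$$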