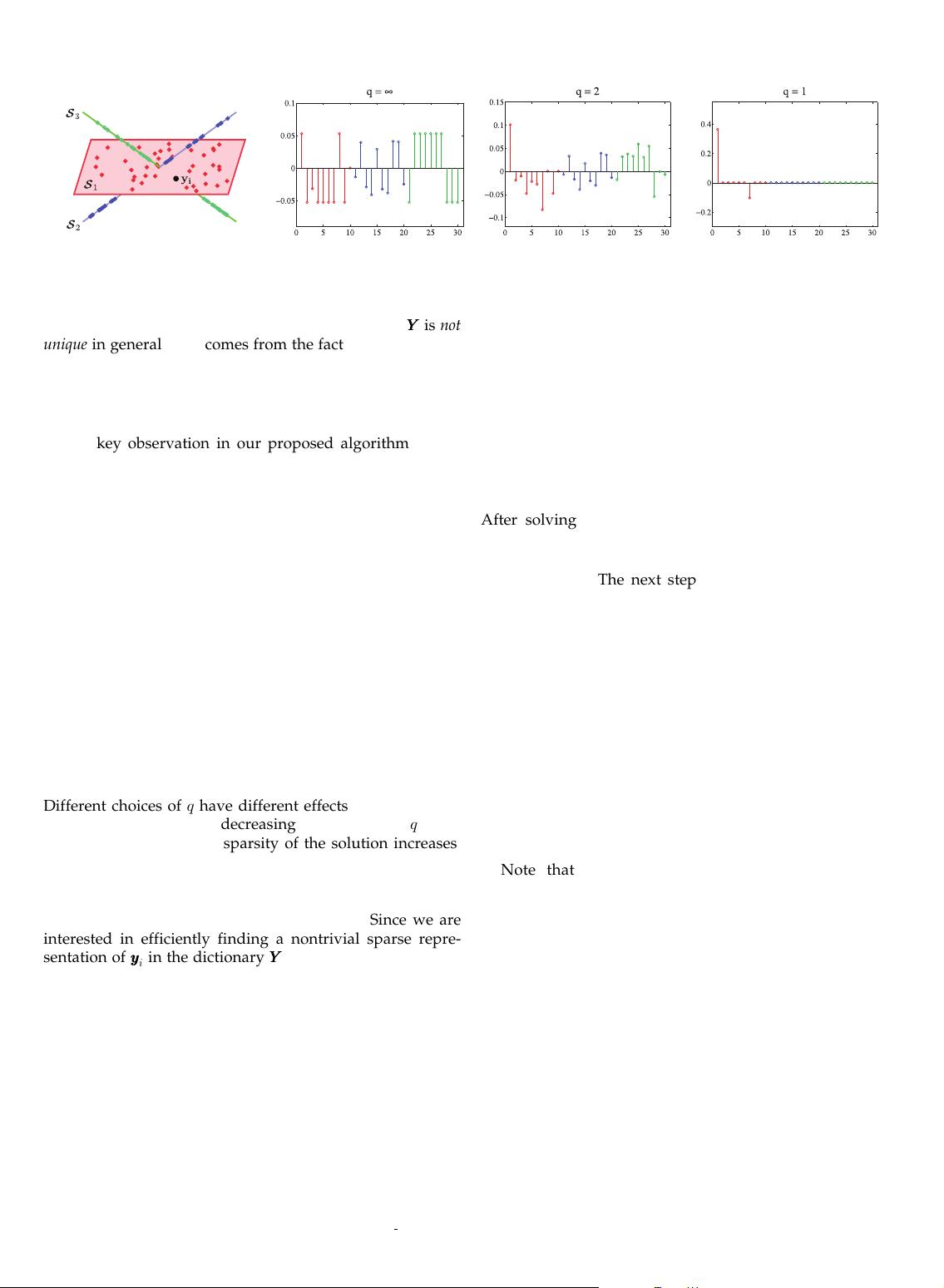

However, the representation of yyyy

i

in the dictionary YYYY is not

unique in general. This comes from the fact that the number

of data points in a subspace is often greater than its

dimension, i.e., N

‘

>d

‘

. As a result, each YYYY

‘

, and conse-

quently YYYY , has a nontrivial nullspace, giving rise to

infinitely many representations of each data point.

The key observation in our proposed algorithm is that

among all solutions of (2),

there exists a sparse solution, cccc

i

, whose nonzero entries correspond

to data points from the same subspace as yyyy

i

. We refer to such a

solution as a subspace-sparse representation.

More specifi cally, a data point yyyy

i

that lies in the

d

‘

-dimensional subspace S

‘

can be written as a linear

combination of d

‘

other points in general directions from S

‘

.

As a result, ideally, a sparse representation of a data point

finds points from the same subspace where the number of

the nonzero elements corresponds to the dimension of the

underlying subspace.

For a system of equations such as (2) with infinitely

many solutions, one can restrict the set of solutions by

minimizing an objective function such as the ‘

q

-norm of the

solution

1

as

minkcccc

i

k

q

s:t:yyyy

i

¼ YYYYcccc

i

;c

ii

¼ 0: ð3Þ

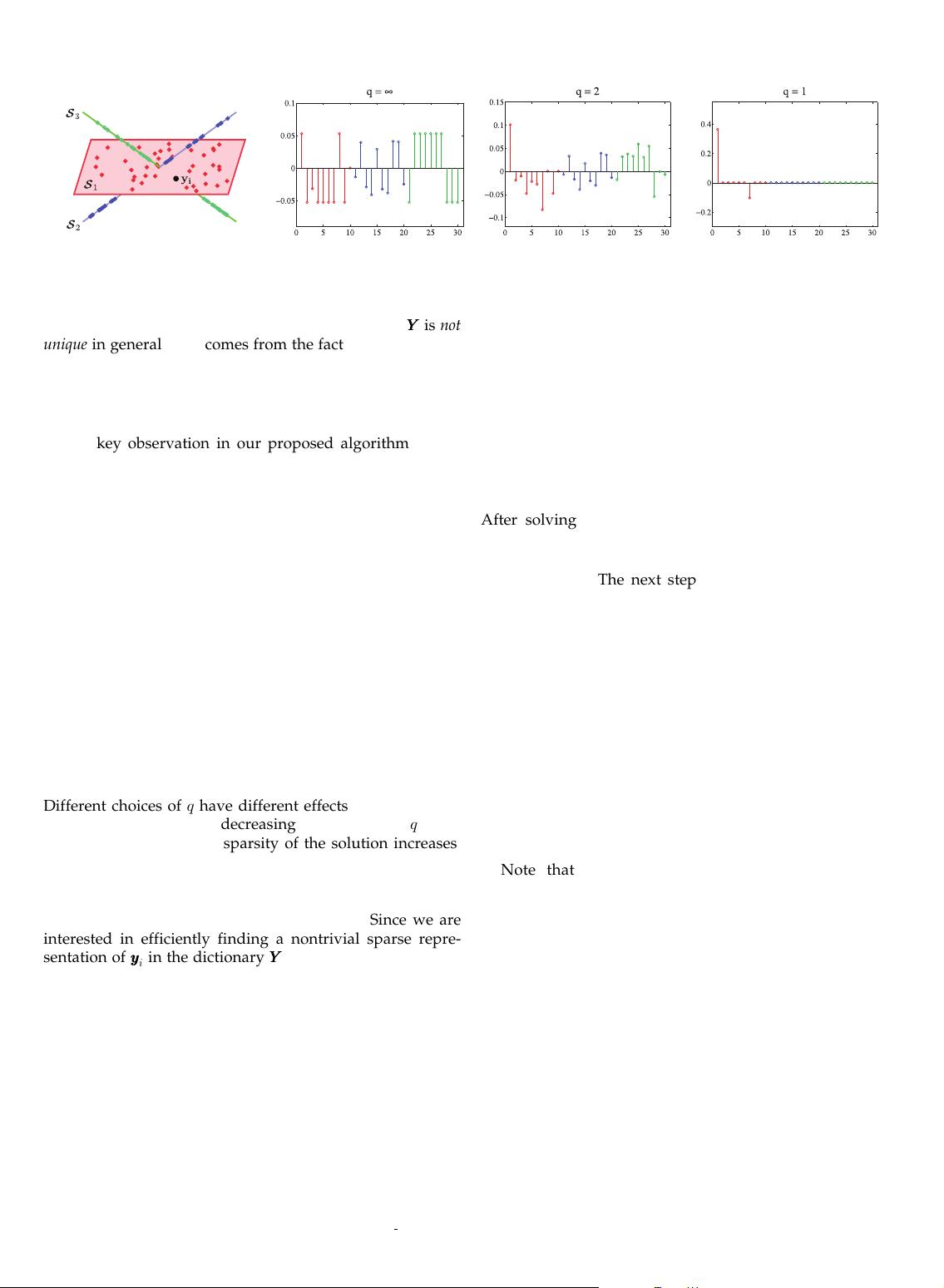

Different choices of q have different effects in the obtained

solution. Typically, by decreasing the value of q from

infinity toward zero, the sparsity of the solution increases,

as shown in Fig. 3. The extreme case of q ¼ 0 corresponds to

the general NP-hard problem [51] of finding the sparsest

representation of the given point, as the ‘

0

-norm counts the

number of nonzero elements of the solution. Since we are

interested in efficiently finding a nontrivial sparse repre-

sentation of yyyy

i

in the dictionary YYYY , we consider minimizing

the tightest convex relaxation of the ‘

0

-norm, i.e.,

minkcccc

i

k

1

s:t:yyyy

i

¼ YYYYcccc

i

;c

ii

¼ 0; ð4Þ

which can be solved efficiently using convex programming

tools [48], [49], [50] and is known to prefer sparse solutions

[29], [30], [31].

We can also rewrite the sparse optimization program (4)

for all data points i ¼ 1; ...;N in matrix form as

minkCCCCk

1

s:t:YYYY ¼ YYYYCCCC; diagðCCCCÞ¼00; ð5Þ

where CCCC ¼

4

½cccc

1

cccc

2

... cccc

N

2IR

NN

is the matrix whose

ith column corresponds to the sparse representation of

yyyy

i

, cccc

i

, and diagðCCCCÞ2IR

N

is the vector of the diagonal

elements of CCCC.

Ideally, the solution of (5) corresponds to subspace-

sparse representations of the data points, which we use next

to infer the clustering of the data. In Section 4, we study

conditions under which the convex optimization program

in (5) is guaranteed to recover a subspace-sparse represen-

tation of each data point.

2.2 Clustering Using Sparse Coefficients

After solving the proposed optimization program in (5),

we obtain a sparse representation for each data point whose

nonzero elements ideally correspond to points from the

same subspace. The next step of the algorithm is to infer

the segmentation of the data into different subspaces using

the sparse coefficients.

To address this problem, we build a weighted graph

G¼ðV; E;WWWWÞ, where V denotes the set of N nodes of the

graph corresponding to N data points and EVV

denotes the set of edges between nodes. WWWW 2 IR

NN

is a

symmetric nonnegative similarity matrix representing the

weights of the edges, i.e., node i is connected to node j by

an edge whose weight is equal to w

ij

. An ideal similarity

matrix WWWW , hence an ideal similarity graph G, is one in which

nodes that correspond to points from the same subspace are

connected to each other and there are no edges between

nodes that correspond to points in different subspaces.

Note that the sparse optimization program ideally

recovers to a subspace-sparse representation of each point,

i.e., a representation whose nonzero elements correspond to

points from the same subspace of the given data point. This

provides an immediate choice of the similarity matrix as

WWWW ¼jCCCCjþjCCCCj

>

. In other words, each node i connects itself

to a node j by an edge whose weight is equal to jc

ij

jþjc

ji

j.

The reason for the symmetrization is that, in general, a data

point yyyy

i

2S

‘

can write itself as a linear combination of some

points including yyyy

j

2S

‘

. However, yyyy

j

may not necessarily

choose yyyy

i

in its sparse representation. By this particular

choice of the weight, we make sure that nodes i and j get

connected to each other if either yyyy

i

or yyyy

j

is in the sparse

representation of the other.

2

2768 IEEE TRANSACTIONS ON PATTERN ANALYSIS AND MACHINE INTELLIGENCE, VOL. 35, NO. 11, NOVEMBER 2013

Fig. 3. Three subspaces in IR

3

with 10 data points in each subspace, ordered such that the fist and the last 10 points belong to S

1

and S

3

,

respectively. The solution of the ‘

q

-minimization program in (3) for yyyy

i

lying in S

1

for q ¼ 1; 2; 1 is shown. Note that as the value of q decreases, the

sparsity of the solution increases. For q ¼ 1, the solution corresponds to choosing two other points lying in S

1

.

1. The ‘

q

-norm of cccc

i

2 IR

N

is defined as kcccc

i

k

q

¼

4

ð

P

N

j¼1

jc

ij

j

q

Þ

1

q

.

2. To obtain a symmetric similarity matrix, one can directly impose the

constraint of CCCC ¼ CCCC

>

in the optimization program. However, this results in

increasing the complexity of the optimization program and, in practice,

does not perform better than the postsymmetrization of CCCC, as described

above. See also [52] for other processing approaches of the similarity matrix.

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功