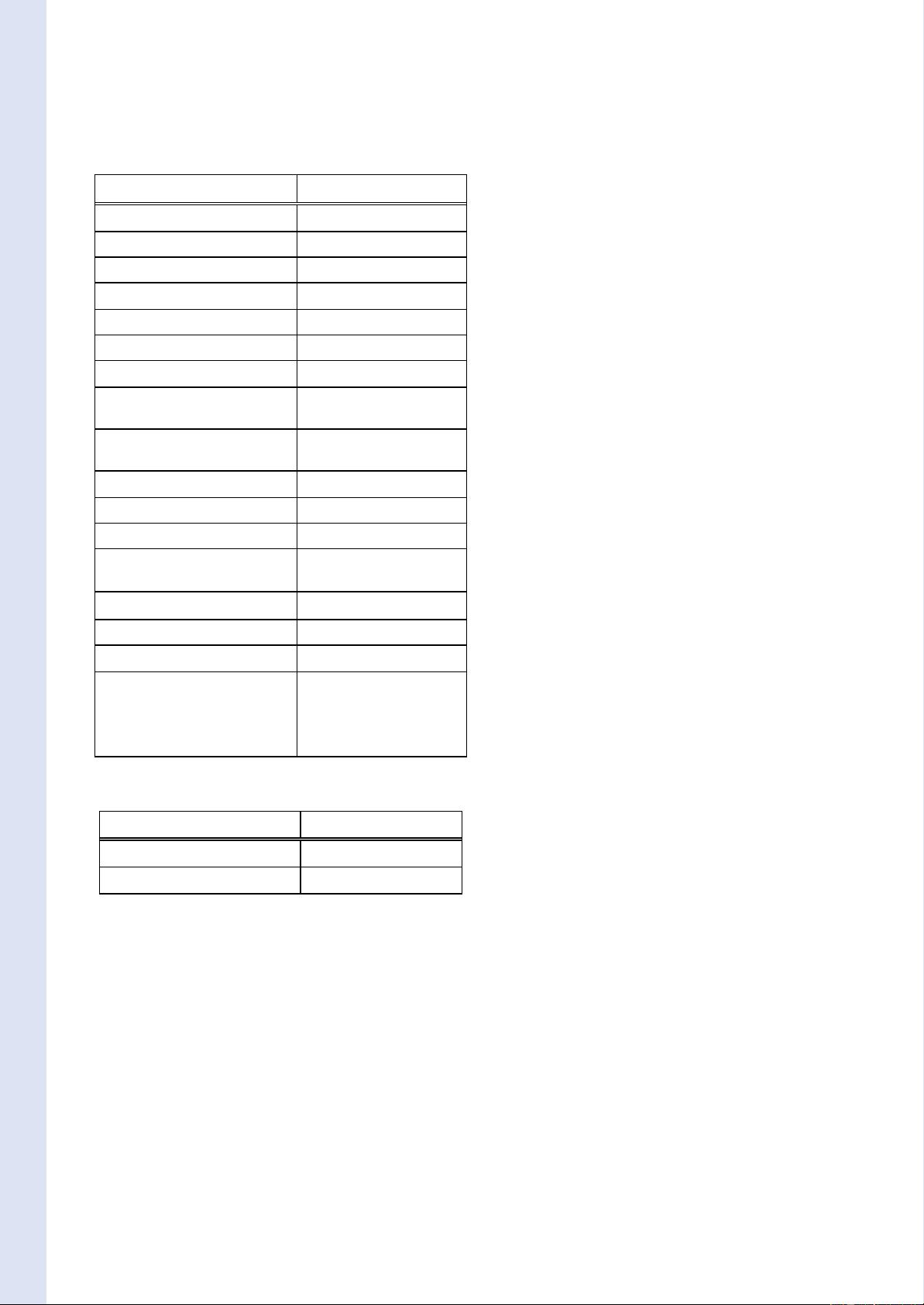

Table 1. Challenges and MOG Versions

| Critical Situations | References |
|---|---|
| Noise Image (NI) | [61-64] |
| Camera jitter (CJ) | [58, 62, 65, 66] |
| Camera Adjustments (CA): Auto Gain Control | [67] |
| Camera Adjustments (CA): Auto White Balance | [68] |
| Camera Adjustments (CA): Automatic Exposure Correction | [69] |
| Gradual Illumination Changes (TD) | [1, 24, 38, 63, 70-73, 74, 75] |
| Sudden Illumination Changes (LS) | [24, 61, 63, 65, 67, 68, 70, 71, 74-81] |
| Bootstrapping during initialization (B) | [59, 82, 83] |
| Bootstrapping during running (B) | [84-88] |
| Camouflage (C) | [42, 72, 73, 88-92] |
| Foreground Aperture (FA) | [93] |
| Moved background objects (MBO) | [60, 63, 70, 74, 75, 80, 85, 87, 88] |
| Inserted background objects (IBO) | [60, 63, 70, 74, 75, 85, 87, 88] |
| Multimodal background (MB) | [1, 61, 64, 84, 86, 90, 94-107] |
| Waking foreground object (WFO) | [74-75, 80, 85, 87, 88] |
| Sleeping foreground objects (SFO) | [1, 42, 60, 74, 75, 78, 79, 85, 87, 88, 108-114] |
| Shadows and highlights (S) | [61, 62, 68-70, 81, 101, 109, 115-123] |

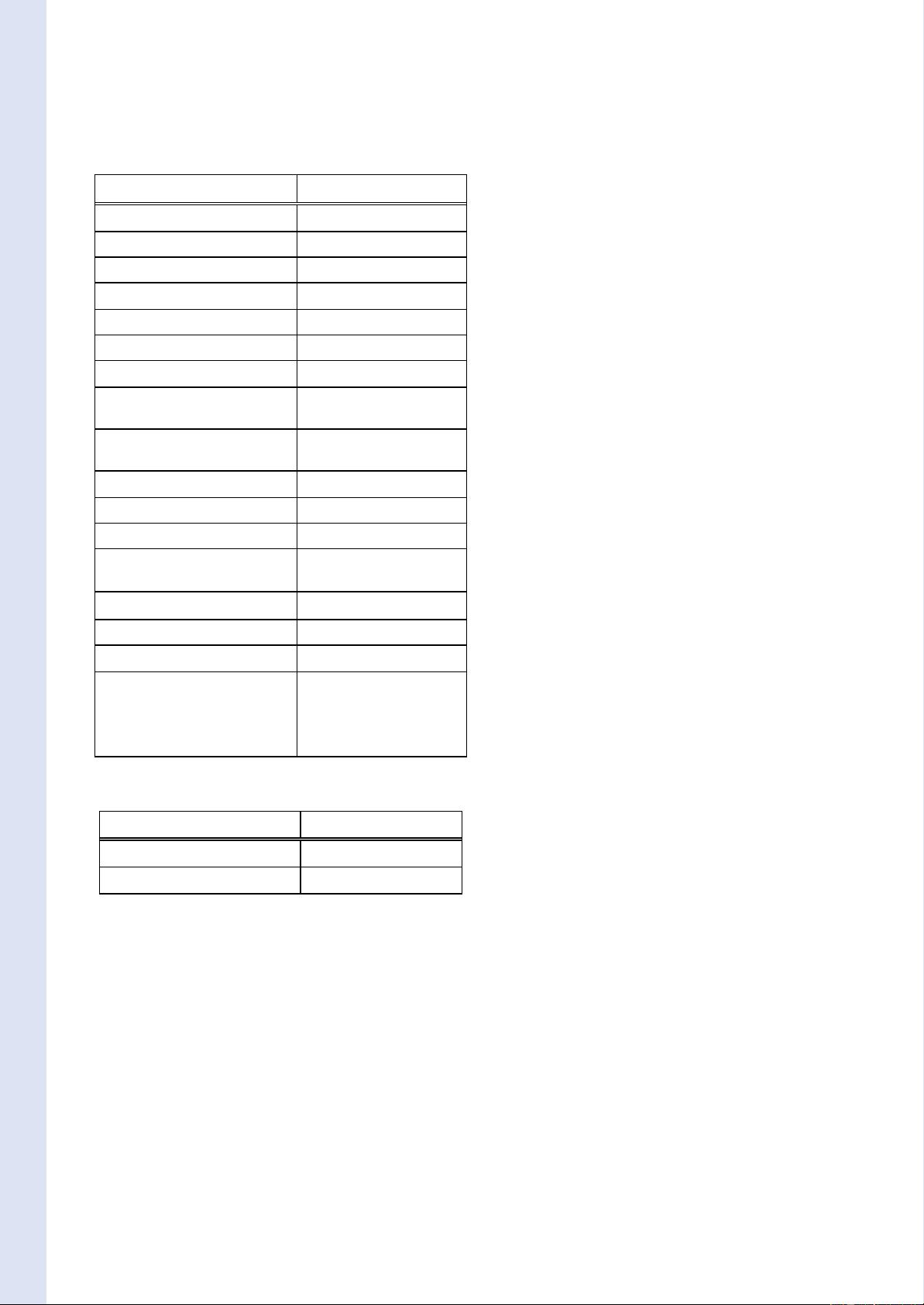

Table 2. Real-Time Constraints and MOG Versions

| Real-Time Constraints | References |
|---|---|
| Computation Time (CT) | [24, 43, 92, 124-131] |
| Memory Requirement (MR) | [127, 128] |

time. To solve this problem, Zivkovic [94] proposes an online algorithm that estimates the parameters of the MOG and simultaneously selects the number of Gaussians using a Dirichlet prior. As a consequence, K is dynamically adapted to the multimodality of each pixel. In the same vein, Cheng et al. [95] propose a stochastic approximation procedure that recursively estimates the parameters of the MOG and obtains the asymptotically optimal number of Gaussians. Another approach, proposed by Shimada et al. [96], consists of a dynamic control of the number of Gaussians, which changes automatically at each pixel: the number of Gaussians increases when pixel values change often and, conversely, when pixel values remain constant for a while, some Gaussians are eliminated or integrated. Tan et al. [97] propose a modified online EM procedure to construct an adaptive MOG in which the number K adaptively reflects the complexity of the pattern at the pixel. Carminati et al. [98] estimate the optimal number K of Gaussians for each pixel on a training set using an ISODATA algorithm. This method is less adaptive than the others because K is not updated after the training period.
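To make the idea of a per-pixel, dynamically controlled number of Gaussians concrete, here is a minimal sketch for a single grey-level pixel. It is not the exact rule of any of the cited methods: the function name `adapt_components`, the thresholds (`match_sigma`, `merge_dist`, `min_weight`) and the initial weight and variance are all assumptions chosen for illustration. A component is added when no existing Gaussian explains the new value, low-weight components are eliminated, and components with close means are integrated by moment matching.

```python
from dataclasses import dataclass


@dataclass
class Gaussian:
    weight: float
    mean: float
    var: float


def adapt_components(components, x, k_max=5, match_sigma=2.5,
                     merge_dist=5.0, min_weight=0.01,
                     init_weight=0.05, init_var=36.0):
    """One illustrative step of per-pixel control of the number of Gaussians.

    All thresholds are hypothetical defaults, not values from the literature.
    """
    components = list(components)  # do not modify the caller's list

    # 1. Add a component if no existing Gaussian explains the new value x.
    matched = any((x - g.mean) ** 2 <= (match_sigma ** 2) * g.var
                  for g in components)
    if not matched and len(components) < k_max:
        components.append(Gaussian(init_weight, float(x), init_var))

    # 2. Eliminate components whose weight has become negligible.
    components = [g for g in components if g.weight >= min_weight]

    # 3. Integrate (merge) components whose means are close to each other.
    merged = []
    for g in sorted(components, key=lambda c: c.mean):
        if merged and abs(g.mean - merged[-1].mean) < merge_dist:
            h = merged[-1]
            w = h.weight + g.weight
            mean = (h.weight * h.mean + g.weight * g.mean) / w
            # Moment-matched variance of the merged pair.
            var = (h.weight * (h.var + (h.mean - mean) ** 2)
                   + g.weight * (g.var + (g.mean - mean) ** 2)) / w
            merged[-1] = Gaussian(w, mean, var)
        else:
            merged.append(g)

    # 4. Renormalize so that the weights sum to one.
    total = sum(g.weight for g in merged)
    return [Gaussian(g.weight / total, g.mean, g.var) for g in merged]
```

With this sketch, a pixel whose value keeps changing accumulates components up to `k_max`, while a stable pixel ends up with a single Gaussian once redundant components have been merged or dropped.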

3.2. Initialization of the Weight, the Mean and the Variance

Stauffer and Grimson [1] initialized the weight, the mean and the variance of each Gaussian using a K-means algorithm, which requires a training sequence without foreground objects. This initialization scheme has been improved as follows:

By using another algorithm for the initialization: Pavlidis et al. [99] show that an EM algorithm [51] is a superior initialization method that provides fast learning and exceptional stability to the foreground detection. This is especially true when initialization happens during challenging weather conditions such as fast-moving clouds, or in other cases of multimodal background (MB). The disadvantage is that the EM algorithm is computationally intensive. Building on this, Lee [84] proposes an approximation of the EM algorithm that avoids unnecessary computation and storage. His results on both synthetic data and surveillance videos show better learning efficiency and robustness in the case of (B) and (MB) than the algorithms used by Friedman and Russell [50], Stauffer and Grimson [1], and Bowden et al. [132].
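As a point of reference for the baseline that these methods refine, the following is a minimal sketch of a K-means initialization for one grey-level pixel, given its values over a foreground-free training sequence. The function name `kmeans_init`, the deterministic seeding, and the variance floor `min_var` are assumptions made for this illustration; the original implementation may differ in all of these details. An EM-based initialization could start from the parameters returned here and refine them.

```python
def kmeans_init(samples, k=3, iters=20, min_var=4.0):
    """Initialise (weight, mean, variance) of up to k Gaussians for one pixel.

    `samples` holds the pixel's intensities over the training sequence.
    The seeding scheme and `min_var` are illustrative assumptions.
    """
    xs = sorted(float(s) for s in samples)
    n = len(xs)

    # Deterministic seeding: spread the initial centres over the sorted data.
    centres = [xs[(2 * j + 1) * n // (2 * k)] for j in range(k)]

    for _ in range(iters):
        # Assignment step: each sample goes to its nearest centre.
        clusters = [[] for _ in range(k)]
        for x in xs:
            j = min(range(k), key=lambda c: abs(x - centres[c]))
            clusters[j].append(x)
        # Update step: each centre moves to the mean of its cluster.
        new = [sum(c) / len(c) if c else centres[j]
               for j, c in enumerate(clusters)]
        if new == centres:
            break
        centres = new

    params = []
    for c in clusters:
        if not c:
            continue  # an empty cluster yields no Gaussian
        mean = sum(c) / len(c)
        var = max(sum((x - mean) ** 2 for x in c) / len(c), min_var)
        params.append((len(c) / n, mean, var))  # weight = cluster proportion
    return params
```

The weight of each Gaussian is simply the fraction of training samples assigned to its cluster, so the returned weights sum to one.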

By allowing the presence of foreground objects in the training sequence: Following the assumption that background pixels appear in the image sequence with the maximum frequency, Zhang et al. [60] propose a background reconstruction algorithm to initialize the MOG even when foreground objects are present in the scene. Another approach, proposed by Amintoosi et al. [82], consists of a QR-decomposition-based algorithm. To be more robust when large parts of the background are occluded by moving objects and parts of the background are never seen, Lepisk [83] proposes to use optical flow to reason about whether the background has been seen or not. This method is more robust in the case of bootstrapping (B).
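The maximum-frequency assumption mentioned above can be illustrated with a short sketch: for each pixel, the most frequently observed intensity range over the training frames is taken as background. This is only an illustration of the assumption, not the reconstruction algorithm of any cited paper; the function name `reconstruct_background` and the histogram bin width `bin_width` are hypothetical.

```python
from collections import Counter


def reconstruct_background(frames, bin_width=8):
    """Estimate a background image as the per-pixel most frequent intensity.

    `frames` is a list of grey-level images given as nested lists of
    integers (frames[t][row][col]). `bin_width` is an illustrative choice.
    """
    height, width = len(frames[0]), len(frames[0][0])
    background = [[0.0] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            values = [f[r][c] for f in frames]
            # Histogram of the pixel's values over time.
            bins = Counter(v // bin_width for v in values)
            best_bin, _ = bins.most_common(1)[0]
            # Background estimate: mean of the samples in the dominant bin.
            members = [v for v in values if v // bin_width == best_bin]
            background[r][c] = sum(members) / len(members)
    return background
```

A pixel that is briefly covered by a moving object still receives its background value, as long as the background is visible in more frames than any single foreground intensity range. The resulting image could then seed the mean of the dominant Gaussian at each pixel.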

3.3. Maintenance of the Weight, the Mean and the Variance

Stauffer and Grimson [1] updated the weight, the mean and the variance of each Gaussian with an IIR filter, using a constant learning rate $\alpha$ for the weight update and a learning rate $\rho$ for the mean and variance update. This maintenance scheme has been optimized in the literature in three different ways:
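Before turning to these improvements, the baseline IIR update can be sketched for a single grey-level pixel as follows. This is a simplified illustration under stated assumptions: the two learning rates are called `alpha` (weights) and `rho` (mean and variance) and are passed as fixed parameters, the matching threshold `match_sigma` is an assumed default, and the replacement of the least probable Gaussian when no component matches is omitted.

```python
def iir_update(weights, means, variances, x,
               alpha=0.01, rho=0.05, match_sigma=2.5):
    """One recursive (first-order IIR) update of a 1-D per-pixel mixture.

    Returns new lists (weights, means, variances); inputs are not modified.
    Parameter names and defaults are assumptions made for this sketch.
    """
    # Find the first Gaussian within match_sigma standard deviations of x.
    matched = None
    for k, (m, v) in enumerate(zip(means, variances)):
        if (x - m) ** 2 <= (match_sigma ** 2) * v:
            matched = k
            break

    # Weights: the matched component is pulled towards 1, the others to 0.
    new_w = [(1 - alpha) * w + alpha * (1.0 if k == matched else 0.0)
             for k, w in enumerate(weights)]

    # Mean and variance: only the matched component is updated.
    new_m, new_v = list(means), list(variances)
    if matched is not None:
        new_m[matched] = (1 - rho) * means[matched] + rho * x
        new_v[matched] = ((1 - rho) * variances[matched]
                          + rho * (x - new_m[matched]) ** 2)
    return new_w, new_m, new_v
```

Because both rates are constants, the model adapts at the same speed in every situation, which is the limitation the following approaches address.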

(1) Maintenance Rules: The update of the parameters in Stauffer and Grimson [1] is made using an IIR filter, as shown in Equation (6). The disadvantage is that the learning rate $\alpha$ must be chosen using a training sequence and is then fixed for the whole sequence. To improve the robustness and sensitivity to gradual illumination changes (TD), Han and Lin [38] update the MOG via adaptive Kalman filtering. The main interest is that the proposed Kalman filter adjusts its gain depending on the normalized

hal-00338206, version 1 - 12 Nov 2008
