Why use concurrency?

sets of responsibilities: not only does it have to read the data from the disk, decode the

images and sound, and send them to the graphics and sound hardware in a timely

fashion so the

DVD

plays without glitches, but it must also take input from the user,

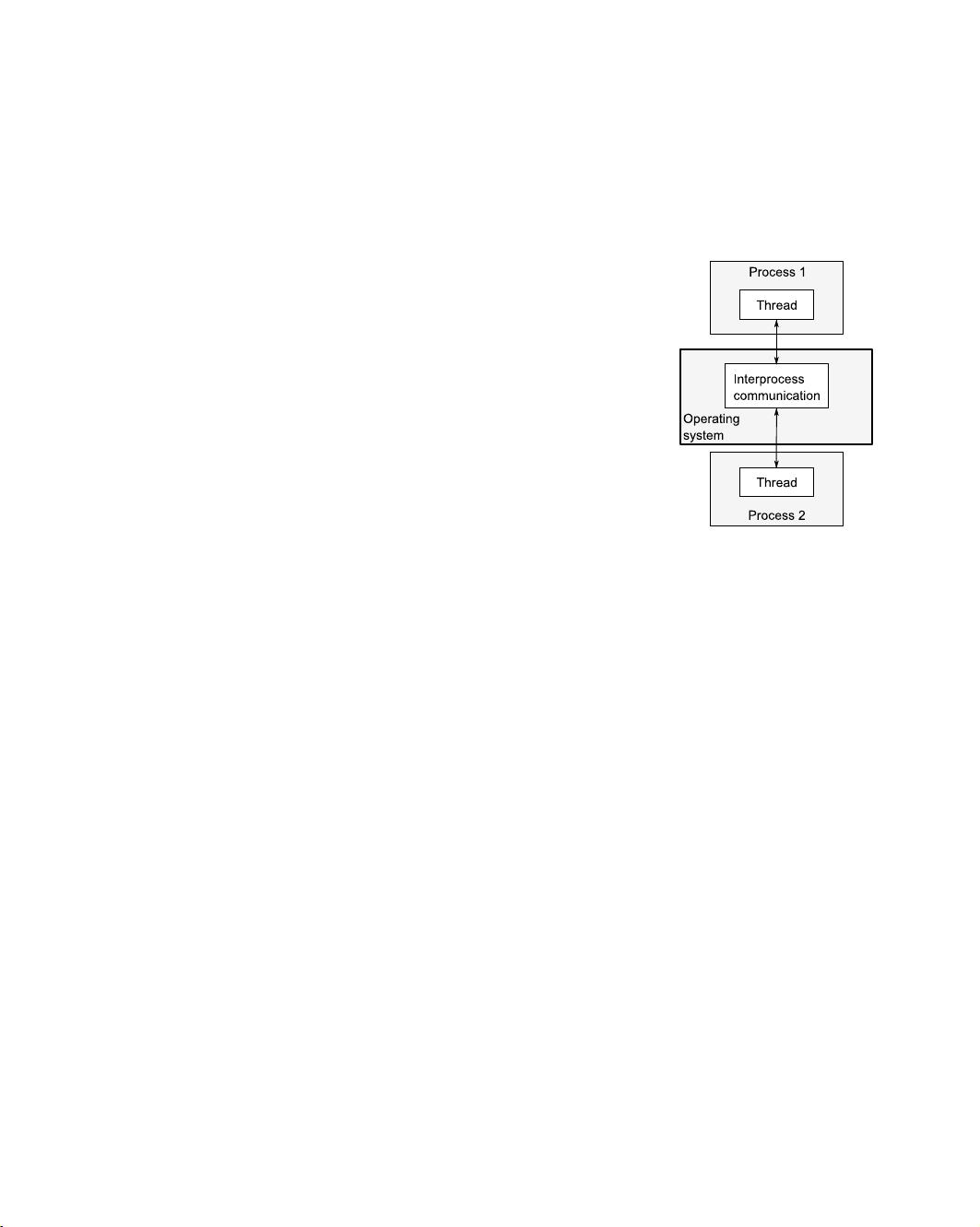

such as when the user clicks Pause or Return To Menu, or even Quit. In a single

thread, the application has to check for user input at regular intervals during the play-

back, thus conflating the

DVD

playback code with the user interface code. By using

multithreading to separate these concerns, the user interface code and

DVD

playback

code no longer have to be so closely intertwined; one thread can handle the user

interface and another the

DVD

playback. There will have to be interaction between

them, such as when the user clicks Pause, but now these interactions are directly

related to the task at hand.
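The separation described above might be sketched as follows. This is a minimal illustration, not a real player: `PlayerControls`, `playback_loop`, and `run_player_demo` are hypothetical names, the "decoding" is a counter increment, and the user's clicks are simulated by the calling thread. The point is only that the two threads interact through a small, clearly identified set of flags.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <functional>
#include <thread>

// Hypothetical shared state: the only place the two threads interact.
struct PlayerControls {
    std::atomic<bool> paused{false};
    std::atomic<bool> quit{false};
};

// Playback thread: stands in for "read, decode, send to the hardware".
// It knows nothing about the user interface beyond the two flags.
void playback_loop(PlayerControls& controls, std::atomic<int>& frames_played) {
    while (!controls.quit.load()) {
        if (!controls.paused.load()) {
            ++frames_played;  // placeholder for decoding and presenting one frame
        }
        std::this_thread::sleep_for(std::chrono::milliseconds(1));
    }
}

// User interface side: in a real application these flag changes would be
// driven by input events rather than hard-coded here.
int run_player_demo() {
    PlayerControls controls;
    std::atomic<int> frames_played{0};
    std::thread playback(playback_loop, std::ref(controls), std::ref(frames_played));

    // Let playback make some progress before the simulated user acts.
    while (frames_played.load() < 3) {
        std::this_thread::yield();
    }
    controls.paused = true;  // the user clicks Pause
    std::this_thread::sleep_for(std::chrono::milliseconds(5));
    controls.quit = true;    // the user clicks Quit
    playback.join();

    assert(frames_played.load() >= 3);
    return frames_played.load();
}
```

Calling `run_player_demo()` from `main` starts the playback thread, pauses it, and shuts it down. Atomic flags are one simple choice for this kind of signalling; a message queue or condition variable would be equally valid under the same design.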

This gives the illusion of responsiveness, because the user interface thread can typically respond immediately to a user request, even if the response is simply to display a busy cursor or Please Wait message while the request is conveyed to the thread doing the work. Similarly, separate threads are often used to run tasks that must run continuously in the background, such as monitoring the filesystem for changes in a desktop search application. Using threads in this way generally makes the logic in each thread much simpler, because the interactions between them can be limited to clearly identifiable points, rather than having to intersperse the logic of the different tasks.
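As a rough sketch of the Please Wait pattern, the example below hands a request to another thread with `std::async` and lets the calling thread keep responding while it waits. The names `slow_request` and `handle_request_responsively` are hypothetical, and the 50 ms sleep merely stands in for real work.

```cpp
#include <cassert>
#include <chrono>
#include <future>
#include <iostream>
#include <thread>

// Hypothetical long-running request; the sleep stands in for real work.
int slow_request() {
    std::this_thread::sleep_for(std::chrono::milliseconds(50));
    return 42;
}

// Runs on the "user interface" thread: conveys the request to another
// thread, then stays free to give feedback until the result is ready.
int handle_request_responsively() {
    // std::launch::async forces the task onto its own thread.
    std::future<int> result = std::async(std::launch::async, slow_request);
    assert(result.valid());

    while (result.wait_for(std::chrono::milliseconds(10)) !=
           std::future_status::ready) {
        std::cout << "Please wait...\n";  // a real UI would also process events here
    }
    return result.get();
}
```

The only interaction between the two threads is the future itself, which is the kind of clearly identifiable point the paragraph above describes.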

In this case, the number of threads is independent of the number of CPU cores available, because the division into threads is based on the conceptual design rather than an attempt to increase throughput.

1.2.2 Using concurrency for performance

Multiprocessor systems have existed for decades, but until recently they were mostly found only in supercomputers, mainframes, and large server systems. But chip manufacturers have increasingly been favoring multicore designs with 2, 4, 16, or more processors on a single chip over better performance with a single core. Consequently, multicore desktop computers, and even multicore embedded devices, are now increasingly prevalent. The increased computing power of these machines comes not from running a single task faster but from running multiple tasks in parallel. In the past, programmers have been able to sit back and watch their programs get faster with each new generation of processors, without any effort on their part. But now, as Herb Sutter put it, “The free lunch is over.”¹ If software is to take advantage of this increased computing power, it must be designed to run multiple tasks concurrently. Programmers must therefore take heed, and those who have hitherto ignored concurrency must now look to add it to their toolbox.

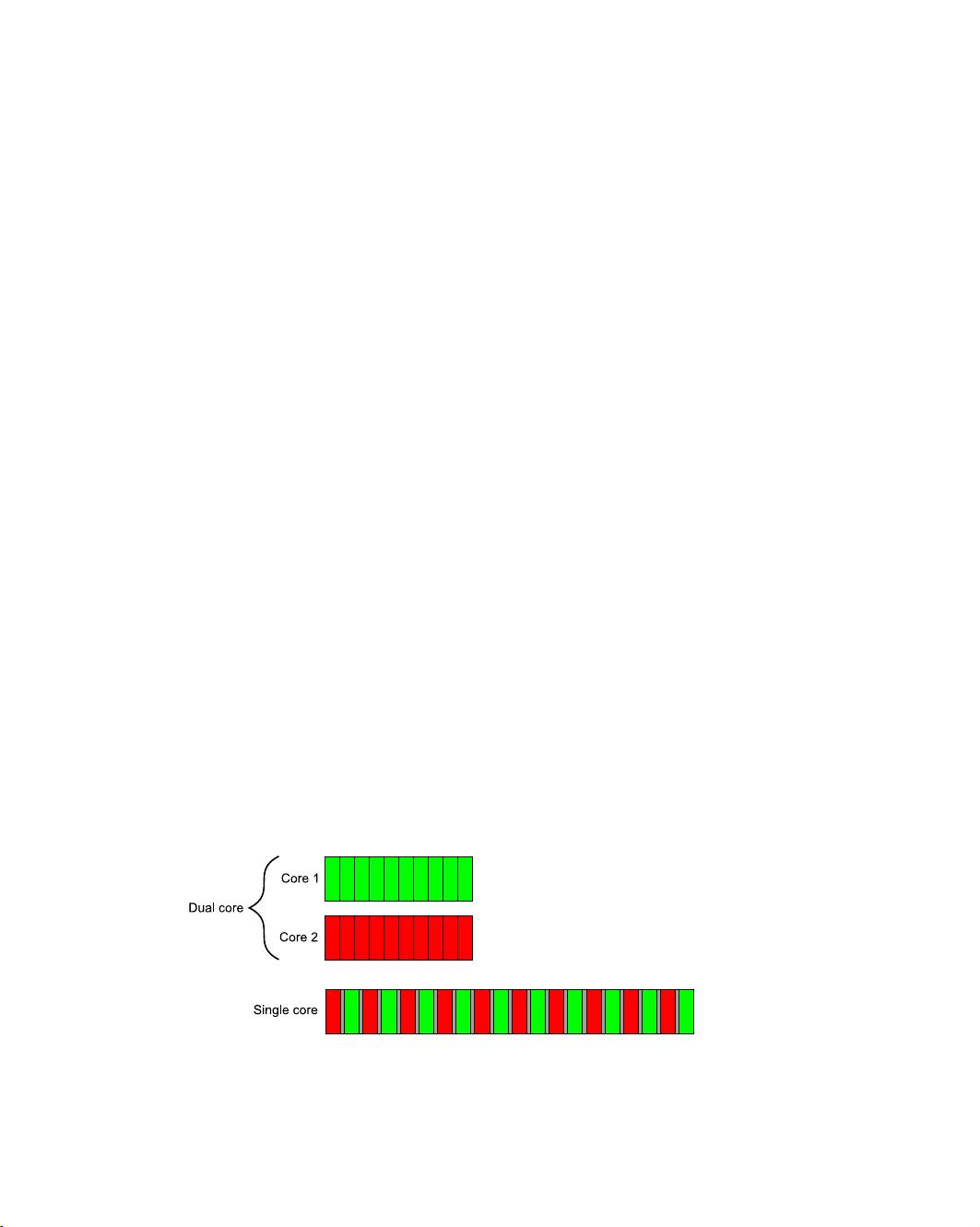

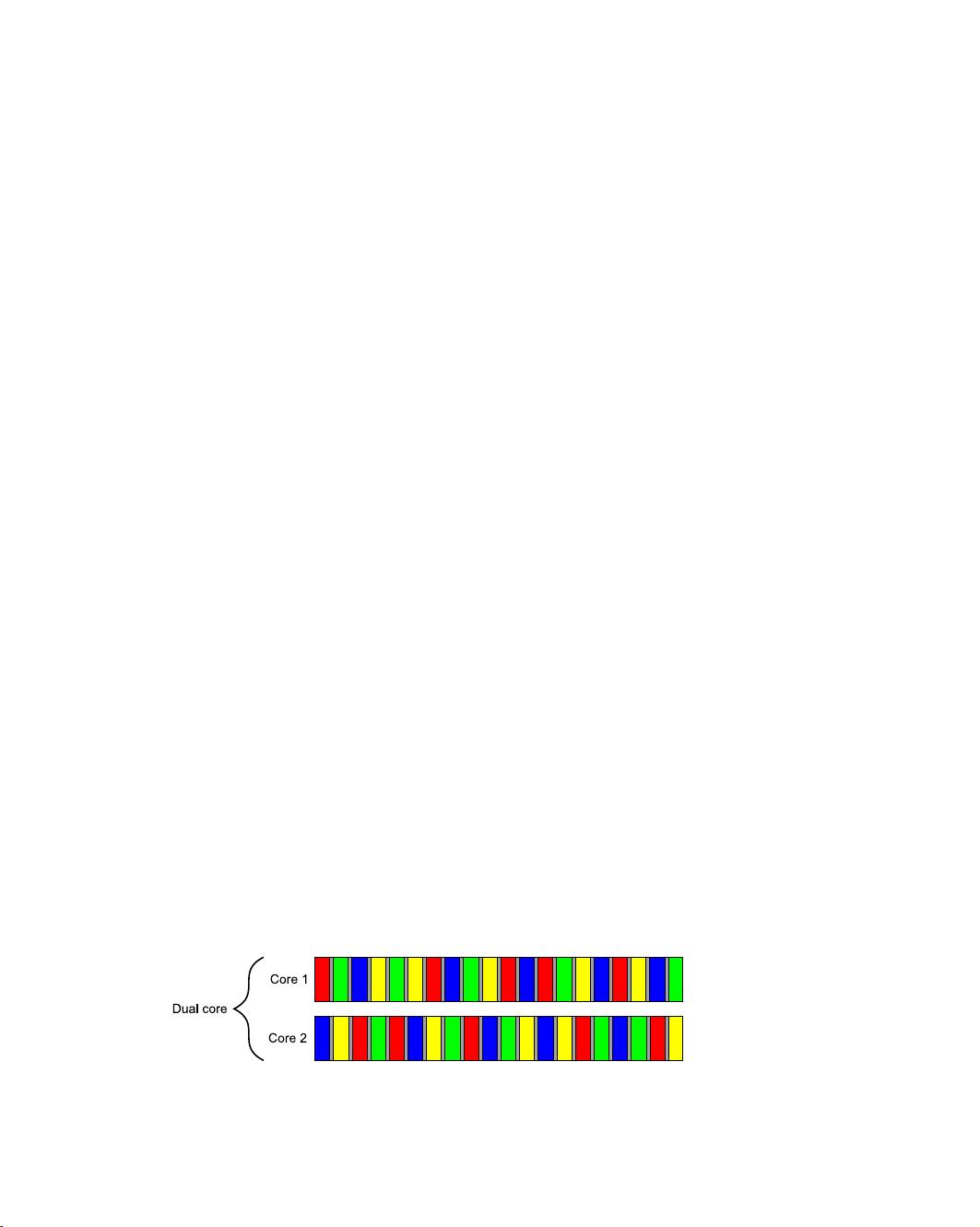

There are two ways to use concurrency for performance. The first, and most obvious, is to divide a single task into parts and run each in parallel, thus reducing the total runtime. This is task parallelism. Although this sounds straightforward, it can be

¹ “The Free Lunch Is Over: A Fundamental Turn Toward Concurrency in Software,” Herb Sutter, Dr. Dobb’s Journal, 30(3), March 2005. http://www.gotw.ca/publications/concurrency-ddj.htm.
