
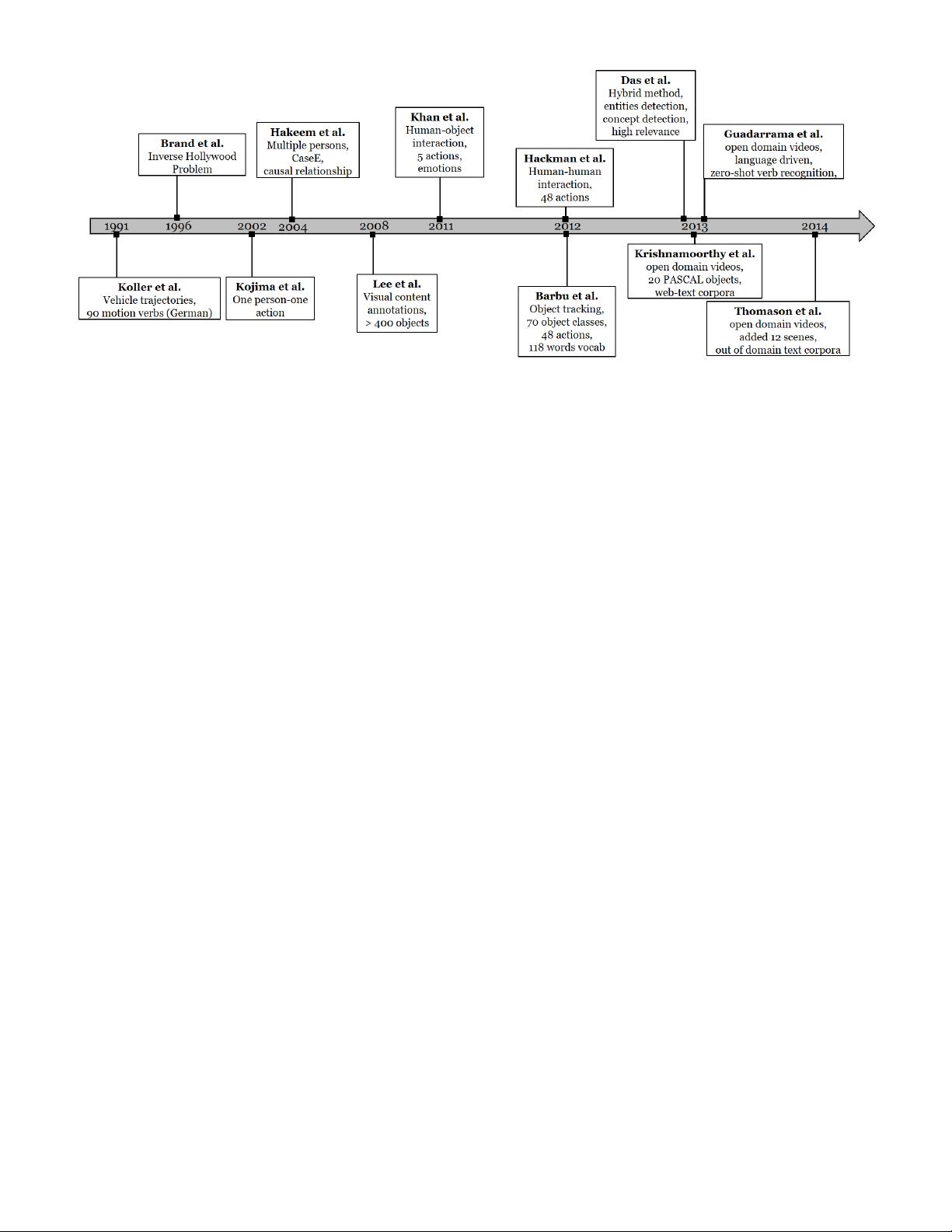

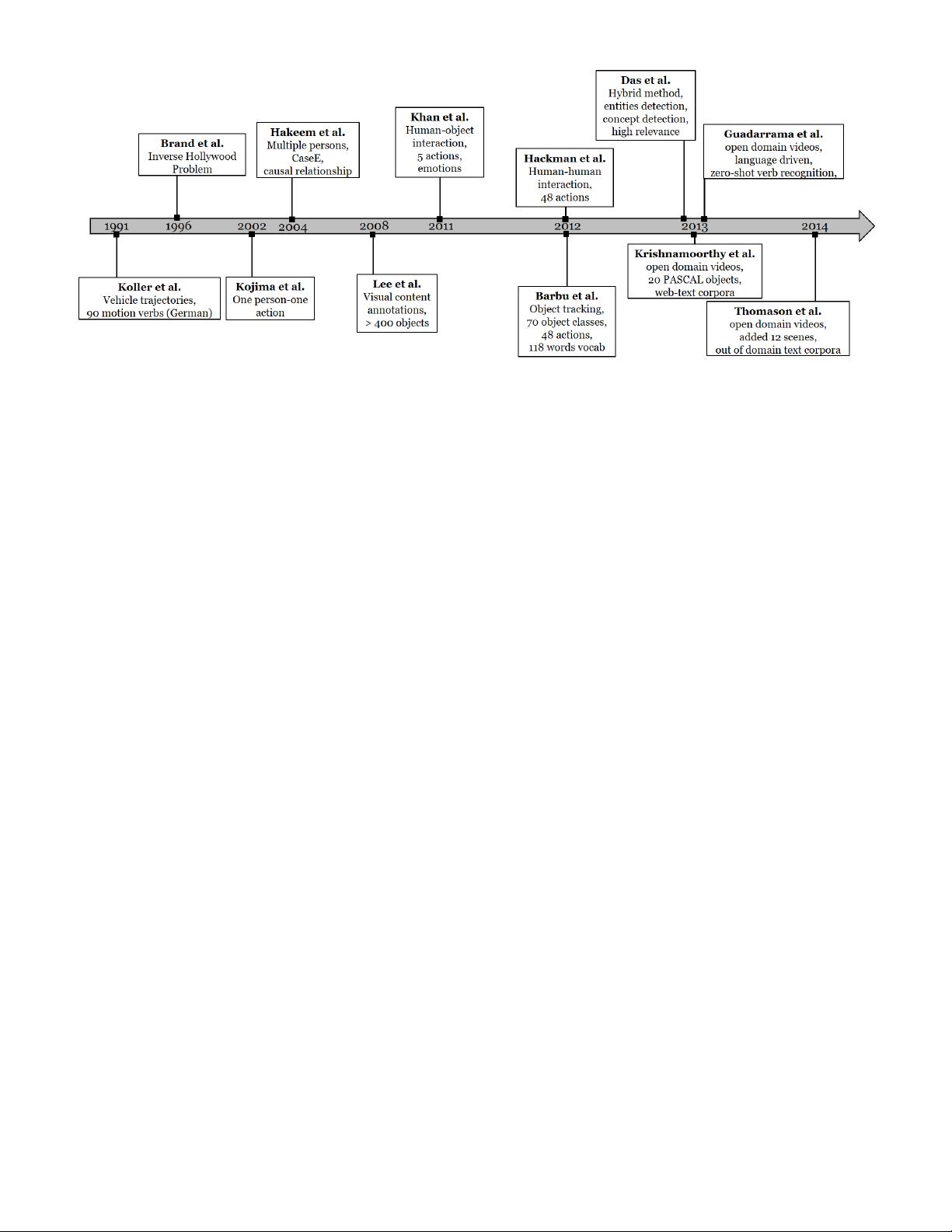

Fig. 5: Evolution of classical methods over time. In general, the focus of these methods moved from subjects (humans) to actions and objects, and then to open-domain videos containing all three SVO categories.

stochastic attribute image grammar (SAIG) [192] is employed to produce a visual vocabulary, i.e., a list of the visual entities present in the frame along with their relationships. This output is then fed into an “event inference engine”, which extracts semantic and contextual information about visual events, along with their relationships. Video Event Markup Language (VEML) [111] is used to represent the semantic information. In the final stage, head-driven phrase structure grammar (HPSG) [122] is used to generate a text description from the semantic representation. Compared to Kojima et al. [84], grammar-based methods can infer and annotate a wider range of scenes and events. Ten streams of urban traffic and maritime scenes covering a period of 120 minutes and containing more than 400 moving objects are used for evaluation. Detected events include “entering the scene, moving, stopping, turning, approaching traffic intersection, watercraft approaching maritime markers and land areas and scenarios where one object follows the other” [94]. Recall and precision are used to evaluate the detected events against manually labeled ground truth. Because the motion direction is poorly estimated from a small number of perspective views, their method does not perform well on “turning” events.
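
The evaluation described above can be illustrated with a minimal sketch of event-level precision and recall. The source does not say how a detected event is matched to the ground truth, so the frame-interval representation, the greedy one-to-one matching, and the 0.5 temporal-overlap threshold below are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Sequence, Set, Tuple


@dataclass(frozen=True)
class Event:
    label: str  # event type, e.g. "stopping" or "turning"
    start: int  # first frame of the event (inclusive)
    end: int    # last frame of the event (inclusive)


def temporal_iou(a: Event, b: Event) -> float:
    """Intersection-over-union of two inclusive frame intervals."""
    inter = min(a.end, b.end) - max(a.start, b.start) + 1
    if inter <= 0:
        return 0.0
    union = (a.end - a.start + 1) + (b.end - b.start + 1) - inter
    return inter / union


def precision_recall(detected: Sequence[Event], ground_truth: Sequence[Event],
                     iou_threshold: float = 0.5) -> Tuple[float, float]:
    """Assumed matching rule: a detection is a true positive if it has the same
    label as a not-yet-matched ground-truth event and overlaps it enough."""
    matched: Set[int] = set()
    true_positives = 0
    for det in detected:
        for i, gt in enumerate(ground_truth):
            if i in matched or gt.label != det.label:
                continue
            if temporal_iou(det, gt) >= iou_threshold:
                matched.add(i)
                true_positives += 1
                break
    precision = true_positives / len(detected) if detected else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


ground_truth = [Event("stopping", 0, 9), Event("turning", 20, 29)]
detected = [Event("stopping", 1, 10), Event("turning", 40, 49)]
print(precision_recall(detected, ground_truth))  # (0.5, 0.5)
```

Here the “turning” detection is placed at the wrong time, so it counts as a false positive and the true “turning” event as a miss, mirroring the kind of failure reported for that event class.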

Hanckmann et al. [68] proposed a method to automatically describe events involving multiple actions (7 on average) performed by one or more individuals. Unlike Khan et al. [78], they take human-human interactions into account in addition to human-object interactions. Forty-eight bag-of-features action detectors [29] are used to detect and classify the actions in a video. The description generator then describes the verbs that relate the actions to the scene entities: it finds the appropriate actors among the objects or persons and connects them to the appropriate verbs. In contrast to Khan et al. [78], who assume that the subject is always a person, Hanckmann et al. [68] generalize subjects to include vehicles as well. Furthermore, the set of human actions is much richer: compared to the five verbs in Khan et al. [78], they use 48 verbs capturing a diverse range of actions such as approach, arrive, bounce, carry, and catch.
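
As a toy illustration of how a description generator can bind actors to verbs, including vehicles as subjects, the sketch below fills subject and object slots from per-verb frames. `Entity`, `VERB_FRAMES`, the entity kinds, and the highest-confidence selection rule are hypothetical choices for this example, not the method of Hanckmann et al. [68].

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional, Sequence


@dataclass(frozen=True)
class Entity:
    name: str     # noun used in the sentence, e.g. "man" or "car"
    kind: str     # hypothetical coarse type: "person", "vehicle" or "object"
    score: float  # detector confidence


PERSON_OR_VEHICLE = frozenset({"person", "vehicle"})

# Hypothetical verb frames: (third-person form, allowed subject kinds,
# allowed object kinds, or None for intransitive verbs).
VERB_FRAMES = {
    "approach": ("approaches", PERSON_OR_VEHICLE, frozenset({"person", "vehicle", "object"})),
    "arrive": ("arrives", PERSON_OR_VEHICLE, None),
    "carry": ("carries", frozenset({"person"}), frozenset({"object"})),
    "catch": ("catches", frozenset({"person"}), frozenset({"object"})),
}


def best_entity(entities: Sequence[Entity], kinds: FrozenSet[str],
                exclude: Optional[Entity] = None) -> Optional[Entity]:
    """Most confident entity of an allowed kind, skipping `exclude`."""
    candidates = [e for e in entities if e.kind in kinds and e is not exclude]
    return max(candidates, key=lambda e: e.score, default=None)


def describe(verb: str, entities: Sequence[Entity]) -> Optional[str]:
    """Bind actors to a detected verb and render a simple SVO sentence."""
    verb_3sg, subject_kinds, object_kinds = VERB_FRAMES[verb]
    subject = best_entity(entities, subject_kinds)
    if subject is None:
        return None
    if object_kinds is None:
        return f"The {subject.name} {verb_3sg}."
    obj = best_entity(entities, object_kinds, exclude=subject)
    if obj is None:
        return None
    return f"The {subject.name} {verb_3sg} the {obj.name}."


scene = [Entity("man", "person", 0.9), Entity("ball", "object", 0.8)]
traffic = [Entity("car", "vehicle", 0.7), Entity("man", "person", 0.6)]
print(describe("catch", scene))       # The man catches the ball.
print(describe("approach", traffic))  # The car approaches the man.
```

Restricting subject kinds per verb is what lets "approach" take a vehicle as its subject while "catch" still requires a person.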

Barbu et al. [24] generated sentence descriptions for short videos of highly constrained domains, with 70 object classes, 48 action classes, and a vocabulary of 118 words. They rendered a detected object and action as a noun and a verb, respectively. Adjectives are used for the object properties and prepositions for their spatial relationships. Their approach comprises three steps. First, object detection [53] is carried out on each frame, limited to 12 detections per frame to avoid over-detection. Second, object tracking [144], [154] is performed to increase precision. Third, dynamic programming is used to choose the optimal set of detections. Verb labels corresponding to the actions in the videos are then produced using Hidden Markov Models (HMMs). Once the verb is obtained, all tracks are merged to generate template-based sentences that comply with grammar rules.
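
The detection-selection step can be sketched as Viterbi-style dynamic programming over per-frame detections: each frame keeps at most its 12 highest-scoring detections, and one detection per frame is chosen to maximize the total detector score plus a temporal-coherence bonus. The coherence term used here (IoU of boxes in consecutive frames), the `coherence_weight` parameter, and all function names are assumptions for illustration; the source does not specify the scoring function, and the HMM verb recognition and sentence templates are not shown.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)
Detection = Tuple[Box, float]            # (box, detector score)


def top_k(detections: Sequence[Detection], k: int = 12) -> List[Detection]:
    """Keep only the k highest-scoring detections in a frame."""
    return sorted(detections, key=lambda d: d[1], reverse=True)[:k]


def iou(a: Box, b: Box) -> float:
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def select_track(frames: Sequence[Sequence[Detection]],
                 coherence_weight: float = 1.0) -> List[int]:
    """Choose one detection index per frame maximizing the sum of detector
    scores plus weighted frame-to-frame IoU. Assumes no frame is empty."""
    best = [[score for _, score in frames[0]]]       # best[t][j]: best path score ending at j
    back: List[List[int]] = [[-1] * len(frames[0])]  # back-pointers to frame t - 1
    for t in range(1, len(frames)):
        row, ptr = [], []
        for box, score in frames[t]:
            transitions = [best[t - 1][i] + coherence_weight * iou(prev_box, box)
                           for i, (prev_box, _) in enumerate(frames[t - 1])]
            i_best = max(range(len(transitions)), key=transitions.__getitem__)
            row.append(transitions[i_best] + score)
            ptr.append(i_best)
        best.append(row)
        back.append(ptr)
    # Backtrack from the highest-scoring detection in the last frame.
    j = max(range(len(best[-1])), key=best[-1].__getitem__)
    path = [j]
    for t in range(len(frames) - 1, 0, -1):
        j = back[t][j]
        path.append(j)
    return path[::-1]


frames = [
    [((0, 0, 10, 10), 0.9), ((50, 50, 60, 60), 0.8)],
    [((1, 1, 11, 11), 0.5), ((80, 80, 90, 90), 0.7)],  # 0.7: distant, spurious box
    [((2, 2, 12, 12), 0.9)],
]
capped = [top_k(f) for f in frames]
path = select_track(capped)
print([capped[t][j][0] for t, j in enumerate(path)])
# [(0, 0, 10, 10), (1, 1, 11, 11), (2, 2, 12, 12)]
```

With the coherence term, the higher-scoring but spatially distant box in the second frame is rejected in favor of the box that continues the track; with `coherence_weight=0.0` the selection falls back to per-frame scores and picks the spurious box instead.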

Despite the reasonably accurate lingual descriptions generated for videos in constrained environments, the aforementioned methods have trouble scaling to the larger number of objects and actions found in open-domain and large video corpora. To incorporate all the relevant concepts, these methods require customized detectors for each entity. Furthermore, the texts generated by the methods of that time were mostly lists of keywords put together using grammars and templates, without any semantic verification. To address this lack of semantic verification, Das et al. [41] proposed a hybrid method that produces content of higher relevance than simple keyword annotation methods, borrowing ideas from image captioning techniques. This hybrid model consists of three hierarchical steps. First, in a bottom-up approach, keywords are predicted using low-level video features: a proposal distribution over the vocabulary of the training set is found using multimodal latent topic models, and then the most probable subjects, objects, and verbs are selected using grammar rules and part-of-speech (POS) tagging. Second, in a top-down approach, a set of concepts is detected and stitched together, and a tripartite graph template is used to convert the stitched concepts into a natural language description. Finally, for semantic verification, they produced a ranked set of natural language sentences by comparing the predicted keywords with the