
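Deep Learning, by Ian Goodfellow, Yoshua Bengio, and Aaron Courville

"Deep Learning, co-authored by Ian Goodfellow, Yoshua Bengio, and Aaron Courville and published by MIT Press, is a classic text in the field of deep learning. It gives a comprehensive introduction to the foundational theory, techniques, and applications of deep learning, and is an important reference for researchers and practitioners."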

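Deep Learning offers a wealth of material, ranging from fundamentals to recent advances. The content is organized into several parts. It opens with an introductory chapter that explains the background and importance of deep learning and identifies the book's intended readership.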

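1. Introduction: This part gives readers a first look at deep learning, including its historical trends and why deep learning has become so important in today's computing environment. The authors highlight its wide use in image recognition, natural language processing, speech recognition, and other areas.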

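2. Applied Math and Machine Learning Basics: This part explains, in accessible terms, the basic mathematical concepts that deep learning requires, such as linear algebra. The book covers in detail: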

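- Scalars, vectors, matrices, and tensors.

- Multiplication of matrices and vectors, and the rules that govern it.

- The roles of identity matrices and inverse matrices.

- Linear dependence and span, which are essential for understanding the linear transformations in neural networks.

- Norms, which measure the size of a vector or matrix and are used in optimization algorithms.

- Special kinds of matrices and vectors, such as orthogonal matrices and diagonal matrices.

- Eigendecomposition, which underlies many machine learning techniques, such as principal components analysis.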

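These fundamentals are essential for understanding and implementing deep learning models, and they lay a solid theoretical foundation for the later chapters.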
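As a rough illustration of the linear algebra objects listed above, here is a minimal sketch in plain Python. Nested lists standing in for vectors and matrices, the helper names (`matvec`, `l2_norm`, `inverse_2x2`), and the numeric values are all assumptions made for this example; they are not taken from the book.

```python
import math

# Nested lists stand in for the objects listed above; real projects would
# normally use an array library instead. All values are illustrative.
scalar = 2.0
vector = [3.0, 4.0]
matrix = [[2.0, 0.0],
          [0.0, 0.5]]      # a diagonal matrix
identity = [[1.0, 0.0],
            [0.0, 1.0]]

def matvec(a, x):
    """Matrix-vector product: each output entry is a row of `a` dotted with `x`."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in a]

def l2_norm(x):
    """Euclidean (L2) norm, one common measure of a vector's size."""
    return math.sqrt(sum(x_i * x_i for x_i in x))

def inverse_2x2(a):
    """Inverse of a 2x2 matrix via the closed-form adjugate formula."""
    (p, q), (r, s) = a
    det = p * s - q * r
    if det == 0:
        raise ValueError("matrix is singular and has no inverse")
    return [[s / det, -q / det], [-r / det, p / det]]

transformed = matvec(matrix, vector)                  # [6.0, 2.0]
restored = matvec(inverse_2x2(matrix), transformed)   # undoes the transformation
unchanged = matvec(identity, vector)                  # identity leaves x as it was

# For a diagonal matrix, each standard basis vector is an eigenvector and the
# matching diagonal entry is its eigenvalue: A e1 = 2.0 * e1.
eigen_check = matvec(matrix, [1.0, 0.0])              # [2.0, 0.0]
```

The inverse recovers the original vector, and the identity matrix leaves it untouched, which is the sense in which the two are described as playing special roles.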

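Beyond these foundations, the book discusses in detail how neural networks are built, trained, and optimized, including the back-propagation algorithm, loss functions, activation functions, optimization algorithms (such as gradient descent and its variants), and regularization strategies. It also covers applications of deep learning in areas such as computer vision, natural language processing, and speech recognition, along with more advanced topics such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), generative adversarial networks (GANs), and attention mechanisms.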

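Deep Learning is an authoritative work in the field, valuable to beginners and experienced researchers alike. By reading it closely, readers can better grasp the core principles of deep learning and apply them to practical problems.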

Chapter 1: Introduction

Inventors have long dreamed of creating machines that think. This desire dates back to at least the time of ancient Greece. The mythical figures Pygmalion, Daedalus, and Hephaestus may all be interpreted as legendary inventors, and Galatea, Talos, and Pandora may all be regarded as artificial life (Ovid and Martin, 2004; Sparkes, 1996; Tandy, 1997).

When programmable computers were first conceived, people wondered whether such machines might become intelligent, over a hundred years before one was built (Lovelace, 1842). Today, artificial intelligence (AI) is a thriving field with many practical applications and active research topics. We look to intelligent software to automate routine labor, understand speech or images, make diagnoses in medicine and support basic scientific research.

In the early days of artificial intelligence, the field rapidly tackled and solved problems that are intellectually difficult for human beings but relatively straightforward for computers: problems that can be described by a list of formal, mathematical rules. The true challenge to artificial intelligence proved to be solving the tasks that are easy for people to perform but hard for people to describe formally: problems that we solve intuitively, that feel automatic, like recognizing spoken words or faces in images.

This book is about a solution to these more intuitive problems. This solution is to allow computers to learn from experience and understand the world in terms of a hierarchy of concepts, with each concept defined in terms of its relation to simpler concepts. By gathering knowledge from experience, this approach avoids the need for human operators to formally specify all of the knowledge that the computer needs. The hierarchy of concepts allows the computer to learn complicated concepts by building them out of simpler ones. If we draw a graph showing how these concepts are built on top of each other, the graph is deep, with many layers. For this reason, we call this approach to AI deep learning.

Many of the early successes of AI took place in relatively sterile and formal environments and did not require computers to have much knowledge about the world. For example, IBM’s Deep Blue chess-playing system defeated world champion Garry Kasparov in 1997 (Hsu, 2002). Chess is of course a very simple world, containing only sixty-four locations and thirty-two pieces that can move in only rigidly circumscribed ways. Devising a successful chess strategy is a tremendous accomplishment, but the challenge is not due to the difficulty of describing the set of chess pieces and allowable moves to the computer. Chess can be completely described by a very brief list of completely formal rules, easily provided ahead of time by the programmer.

Ironically, abstract and formal tasks that are among the most difficult mental undertakings for a human being are among the easiest for a computer. Computers have long been able to defeat even the best human chess player, but are only recently matching some of the abilities of average human beings to recognize objects or speech. A person’s everyday life requires an immense amount of knowledge about the world. Much of this knowledge is subjective and intuitive, and therefore difficult to articulate in a formal way. Computers need to capture this same knowledge in order to behave in an intelligent way. One of the key challenges in artificial intelligence is how to get this informal knowledge into a computer.

Several artificial intelligence projects have sought to hard-code knowledge about the world in formal languages. A computer can reason about statements in these formal languages automatically using logical inference rules. This is known as the knowledge base approach to artificial intelligence. None of these projects has led to a major success. One of the most famous such projects is Cyc (Lenat and Guha, 1989). Cyc is an inference engine and a database of statements in a language called CycL. These statements are entered by a staff of human supervisors. It is an unwieldy process. People struggle to devise formal rules with enough complexity to accurately describe the world. For example, Cyc failed to understand a story about a person named Fred shaving in the morning (Linde, 1992). Its inference engine detected an inconsistency in the story: it knew that people do not have electrical parts, but because Fred was holding an electric razor, it believed the entity “FredWhileShaving” contained electrical parts. It therefore asked whether Fred was still a person while he was shaving.

The difficulties faced by systems relying on hard-coded knowledge suggest that AI systems need the ability to acquire their own knowledge, by extracting patterns from raw data. This capability is known as machine learning. The introduction of machine learning allowed computers to tackle problems involving knowledge of the real world and make decisions that appear subjective. A simple machine learning algorithm called logistic regression can determine whether to recommend cesarean delivery (Mor-Yosef et al., 1990). A simple machine learning algorithm called naive Bayes can separate legitimate e-mail from spam e-mail.

The performance of these simple machine learning algorithms depends heavily on the representation of the data they are given. For example, when logistic regression is used to recommend cesarean delivery, the AI system does not examine the patient directly. Instead, the doctor tells the system several pieces of relevant information, such as the presence or absence of a uterine scar. Each piece of information included in the representation of the patient is known as a feature. Logistic regression learns how each of these features of the patient correlates with various outcomes. However, it cannot influence the way that the features are defined in any way. If logistic regression was given an MRI scan of the patient, rather than the doctor’s formalized report, it would not be able to make useful predictions. Individual pixels in an MRI scan have negligible correlation with any complications that might occur during delivery.
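To make the role of features concrete, the following is a minimal sketch of how a logistic regression model turns a feature vector into a probability. The single binary feature, the weight, and the bias are hypothetical, hand-picked numbers chosen only for illustration; they are not clinical values and are not learned from data.

```python
import math

def sigmoid(z):
    """Squash any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_probability(features, weights, bias):
    """Logistic regression: a weighted sum of the features passed through a sigmoid."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)

# Hypothetical numbers for illustration only (not clinical values).
# features[0] is 1.0 if a uterine scar is reported, otherwise 0.0.
weights, bias = [1.5], -1.0
p_with_scar = predict_probability([1.0], weights, bias)
p_without_scar = predict_probability([0.0], weights, bias)
```

The model only ever sees the numbers in `features`. It can adjust how much weight each one receives, but it has no way to redefine what the features are, which is the limitation the paragraph above describes.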

This dependence on representations is a general phenomenon that appears

throughout computer science and even daily life. In computer science, opera-

tions such as searching a collection of data can proceed exponentially faster if

the collection is structured and indexed intelligently. People can easily perform

arithmetic on Arabic numerals, but find arithmetic on Roman numerals much

more time-consuming. It is not surprising that the choice of representation has an

enormous effect on the performance of machine learning algorithms. For a simple

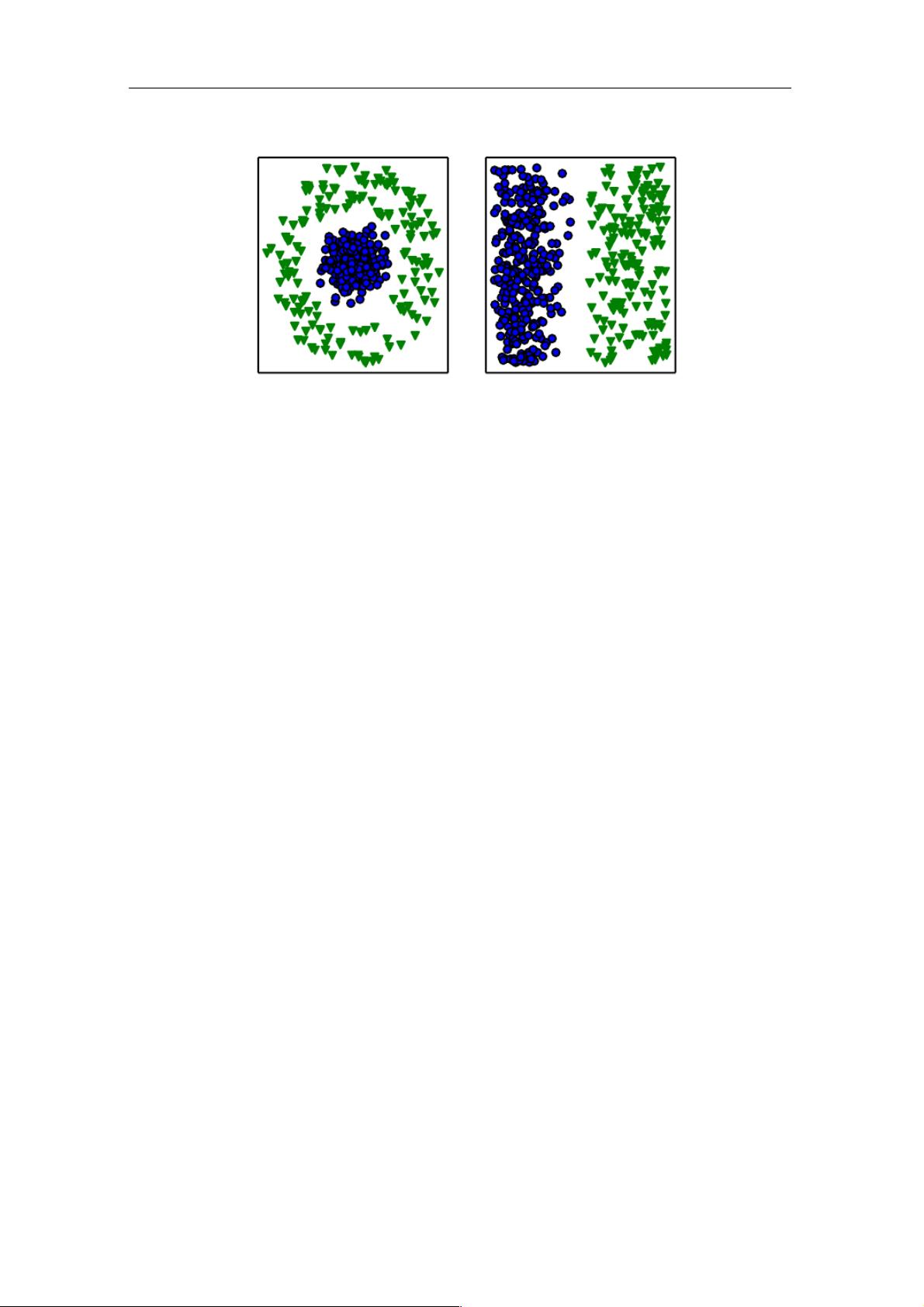

visual example, see figure .1.1
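The remark about searching a structured collection can be sketched with a comparison between scanning an unordered list and binary search over a sorted one. The function names and the data set are assumptions made for this example.

```python
def linear_search(items, target):
    """Scan an unstructured list; returns (found, number of elements examined)."""
    for steps, item in enumerate(items, start=1):
        if item == target:
            return True, steps
    return False, len(items)

def binary_search(sorted_items, target):
    """Halve the search interval on every probe; returns (found, number of probes)."""
    lo, hi, steps = 0, len(sorted_items), 0
    while lo < hi:
        mid = (lo + hi) // 2
        steps += 1
        if sorted_items[mid] == target:
            return True, steps
        if sorted_items[mid] < target:
            lo = mid + 1
        else:
            hi = mid
    return False, steps

# Illustrative data: one million sorted integers, searching for the last one.
data = list(range(1_000_000))
found_linear, linear_steps = linear_search(data, 999_999)
found_binary, binary_steps = binary_search(data, 999_999)
```

The same data, represented as a sorted sequence, needs at most about log2(n) probes instead of up to n element checks. Nothing about the values changed, only how they are organized.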

Many artificial intelligence tasks can be solved by designing the right set of features to extract for that task, then providing these features to a simple machine learning algorithm. For example, a useful feature for speaker identification from sound is an estimate of the size of the speaker’s vocal tract. It therefore gives a strong clue as to whether the speaker is a man, woman, or child.

However, for many tasks, it is difficult to know what features should be extracted. For example, suppose that we would like to write a program to detect cars in photographs. We know that cars have wheels, so we might like to use the presence of a wheel as a feature. Unfortunately, it is difficult to describe exactly what a wheel looks like in terms of pixel values. A wheel has a simple geometric shape but its image may be complicated by shadows falling on the wheel, the sun glaring off the metal parts of the wheel, the fender of the car or an object in the foreground obscuring part of the wheel, and so on.


Figure 1.1: Example of different representations: suppose we want to separate two categories of data by drawing a line between them in a scatterplot. In the plot on the left, we represent some data using Cartesian coordinates, and the task is impossible. In the plot on the right, we represent the data with polar coordinates and the task becomes simple to solve with a vertical line. Figure produced in collaboration with David Warde-Farley.
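The idea behind Figure 1.1 can be sketched in a few lines. The two rings of points below are hypothetical data invented for this example, and the sketch assumes that the radius r is plotted on the horizontal axis of the polar plot, so that a vertical line corresponds to a threshold on r.

```python
import math

def to_polar(x, y):
    """Convert Cartesian (x, y) to polar (r, theta)."""
    return math.hypot(x, y), math.atan2(y, x)

# Hypothetical data: class 0 lies on a circle of radius 1, class 1 on radius 3.
angles = [k * math.pi / 4 for k in range(8)]
inner = [(1.0 * math.cos(a), 1.0 * math.sin(a)) for a in angles]
outer = [(3.0 * math.cos(a), 3.0 * math.sin(a)) for a in angles]

def classify_by_radius(point, threshold=2.0):
    """One threshold on r: a straight vertical line in an (r, theta) plot."""
    r, _theta = to_polar(*point)
    return 1 if r > threshold else 0

predictions_inner = [classify_by_radius(p) for p in inner]   # all class 0
predictions_outer = [classify_by_radius(p) for p in outer]   # all class 1
```

In Cartesian coordinates the inner ring sits inside the outer ring, so no single straight line can separate the two classes. After the change of representation, one threshold on r is enough.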

One solution to this problem is to use machine learning to discover not only the mapping from representation to output but also the representation itself. This approach is known as representation learning. Learned representations often result in much better performance than can be obtained with hand-designed representations. They also allow AI systems to rapidly adapt to new tasks, with minimal human intervention. A representation learning algorithm can discover a good set of features for a simple task in minutes, or a complex task in hours to months. Manually designing features for a complex task requires a great deal of human time and effort; it can take decades for an entire community of researchers.

The quintessential example of a representation learning algorithm is the autoencoder. An autoencoder is the combination of an encoder function that converts the input data into a different representation, and a decoder function that converts the new representation back into the original format. Autoencoders are trained to preserve as much information as possible when an input is run through the encoder and then the decoder, but are also trained to make the new representation have various nice properties. Different kinds of autoencoders aim to achieve different kinds of properties.
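A minimal sketch of the encoder/decoder idea follows, assuming the simplest possible setting: a linear encoder that compresses a 2-D input to one number, a linear decoder that expands it back, and plain gradient descent on the squared reconstruction error. The toy data, initial weights, learning rate, and step count are all assumptions chosen for this example, and the "nice property" here is simply that the code is lower-dimensional than the input.

```python
def encode(x, w):
    """Encoder: compress a 2-D input to a single code value."""
    return w[0] * x[0] + w[1] * x[1]

def decode(h, v):
    """Decoder: map the 1-D code back to the 2-D input space."""
    return [h * v[0], h * v[1]]

def reconstruction_loss(data, w, v):
    """Mean squared reconstruction error over the data set."""
    total = 0.0
    for x in data:
        x_hat = decode(encode(x, w), v)
        total += (x_hat[0] - x[0]) ** 2 + (x_hat[1] - x[1]) ** 2
    return total / len(data)

def train(data, w, v, lr=0.05, steps=2000):
    """Batch gradient descent on the reconstruction loss."""
    w, v = list(w), list(v)
    n = len(data)
    for _ in range(steps):
        grad_w, grad_v = [0.0, 0.0], [0.0, 0.0]
        for x in data:
            h = encode(x, w)
            x_hat = decode(h, v)
            err = [x_hat[0] - x[0], x_hat[1] - x[1]]
            # Gradient of this sample's loss with respect to the code h,
            # obtained by the chain rule through the decoder.
            dh = 2.0 * (err[0] * v[0] + err[1] * v[1])
            for i in range(2):
                grad_v[i] += 2.0 * err[i] * h / n
                grad_w[i] += dh * x[i] / n
        for i in range(2):
            w[i] -= lr * grad_w[i]
            v[i] -= lr * grad_v[i]
    return w, v

# Hypothetical toy data: 2-D points that all lie on the line y = 2x,
# so a single number per point is enough to describe them.
data = [[t, 2.0 * t] for t in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w0, v0 = [0.5, 0.5], [0.5, 0.5]
loss_before = reconstruction_loss(data, w0, v0)
w, v = train(data, w0, v0)
loss_after = reconstruction_loss(data, w, v)
```

Because the toy data has only one underlying degree of freedom, the trained encoder and decoder can reconstruct it almost perfectly from a one-number code. Real autoencoders use nonlinear functions and add further constraints on the code.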

When designing features or algorithms for learning features, our goal is usually to separate the factors of variation that explain the observed data. In this context, we use the word “factors” simply to refer to separate sources of influence; the factors are usually not combined by multiplication. Such factors are often not quantities that are directly observed. Instead, they may exist either as unobserved objects or unobserved forces in the physical world that affect observable quantities. They may also exist as constructs in the human mind that provide useful simplifying explanations or inferred causes of the observed data. They can be thought of as concepts or abstractions that help us make sense of the rich variability in the data. When analyzing a speech recording, the factors of variation include the speaker’s age, their sex, their accent and the words that they are speaking. When analyzing an image of a car, the factors of variation include the position of the car, its color, and the angle and brightness of the sun.

A major source of difficulty in many real-world artificial intelligence applications is that many of the factors of variation influence every single piece of data we are able to observe. The individual pixels in an image of a red car might be very close to black at night. The shape of the car’s silhouette depends on the viewing angle. Most applications require us to disentangle the factors of variation and discard the ones that we do not care about.

Of course, it can be very difficult to extract such high-level, abstract features from raw data. Many of these factors of variation, such as a speaker’s accent, can be identified only using sophisticated, nearly human-level understanding of the data. When it is nearly as difficult to obtain a representation as to solve the original problem, representation learning does not, at first glance, seem to help us.

Deep learning solves this central problem in representation learning by introducing representations that are expressed in terms of other, simpler representations. Deep learning allows the computer to build complex concepts out of simpler concepts. Figure 1.2 shows how a deep learning system can represent the concept of an image of a person by combining simpler concepts, such as corners and contours, which are in turn defined in terms of edges.

The quintessential example of a deep learning model is the feedforward deep network or multilayer perceptron (MLP). A multilayer perceptron is just a mathematical function mapping some set of input values to output values. The function is formed by composing many simpler functions. We can think of each application of a different mathematical function as providing a new representation of the input.
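The view of an MLP as a composition of simpler functions can be sketched as follows. The helper names (`affine`, `relu`, `compose`) are assumptions made for this example, and the weights are hand-picked rather than learned; they happen to make the network compute the XOR of two binary inputs.

```python
def affine(weights, biases):
    """Return a function computing W x + b for fixed W and b."""
    def layer(x):
        return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b
                for row, b in zip(weights, biases)]
    return layer

def relu(x):
    """Elementwise rectified linear unit."""
    return [max(0.0, x_i) for x_i in x]

def compose(*functions):
    """Chain functions and keep every intermediate representation."""
    def composed(x):
        representations = [x]
        for f in functions:
            representations.append(f(representations[-1]))
        return representations
    return composed

# Hand-picked (not learned) weights for a two-layer network that computes XOR.
mlp = compose(
    affine([[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0]),  # hidden pre-activation
    relu,                                           # hidden representation
    affine([[1.0, -2.0]], [0.0]),                   # output
)

inputs = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
outputs = [mlp(x)[-1][0] for x in inputs]
hidden_for_11 = mlp([1.0, 1.0])[2]   # the representation after the ReLU
```

Each entry in the list returned by `mlp` is one representation of the same input. The raw inputs cannot be separated into XOR classes by a single linear function, but the hidden representation after the ReLU can.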

The idea of learning the right representation for the data provides one perspective on deep learning. Another perspective on deep learning is that depth allows the computer to learn a multi-step computer program. Each layer of the representation can be thought of as the state of the computer’s memory after executing another set of instructions in parallel. Networks with greater depth can execute more instructions in sequence. Sequential instructions offer great power because later
