90 B. Sun et al. / Knowledge-Based Systems 102 (2016) 87–1 02

Input : Training data set: D

tr

= { (x

1

, y

1

) , . . . , (x

N

, y

N

) } , where

x

i

∈ R

d

(d > 1) , y

i

∈ Y = {−1 , 1 } , i = 1 , 2 , . . . , N; Base

classifier learning algorithm: Learn ; Ensemble size:

T; Maximum iteration time: I .

Output : Ensemble classifier: f

(T

)

=

T

t=1

α

t

g

t

, where T

is

the actual number of generated base classifiers.

• Init ializat ion

1 Weights of training examples: w

1

i

= 1 /N, i = 1 , 2 , . . . , N;

t = 0 ; iter = 0 .

• Iteration pro cess

2 while (t < T ) and (iter < I) do

3 t = t + 1 ; iter = iter + 1 ;

4 Train a base classifier g

t

on the training set D

tr

with

weight distribution { w

iter

i

}

N

i =1

: g

t

= Learn (D

tr

, { w

iter

i

}

N

i =1

) ;

5 Apply classifier g

t

to classify training set D

tr

;

6 Use the noise-detection function NDF to determine the

noise label φ

iter

(x

i

) of training example (x

i

, y

i

) ,

i = 1 , 2 , . . . , N;

7 Calculate the weighted training error of g

t

:

err

t

=

y

i

g

t

(x

i

) φ

iter

(x

i

)= −1

w

iter

i

;

8 Compute the weight of classifier g

t

: α

t

=

1

2

ln (

1 −err

t

err

t

) ;

9 if err

t

≥ 0 . 5 or err

t

= 0 then

10 Generate uniform weight distribution: w

iter+1

i

= 1 /N,

i = 1 , 2 , . . . , N;

11 Re-train the tth classifier in the next iteration:

t = t − 1 ;

12 break;

13 end

14 Update the weights of training examples:

w

iter+1

i

= w

iter

i

exp

− α

t

y

i

g

t

(x

i

) φ

iter

(x

i

)

, i = 1 , 2 , . . . , N;

15 Normalize the weights of training examples:

w

iter+1

i

=

w

iter+1

i

N

j=1

w

iter+1

j

, i = 1 , 2 , . . . , N;

16 end

Algorithm 1: The main steps of the ND_AdaBoost algorithm.

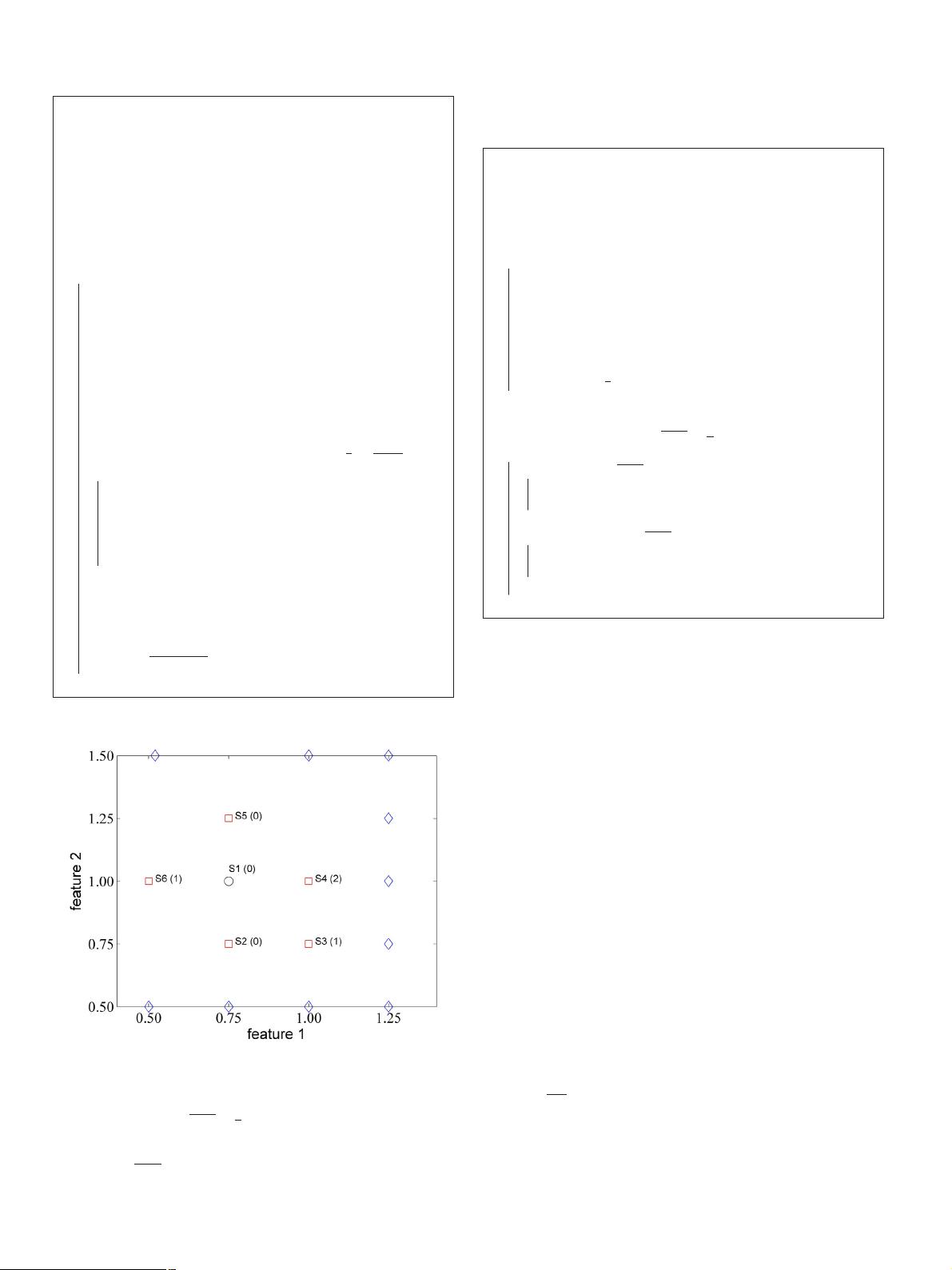

Fig. 1. A simple instance illustrating a training example’s neighbors.

examples is obtained: μ

iter

=

1

N

N

i =1

μ

iter

(x

i

, y

i

) . Finally, we deter-

mine the noise label of each example. For the i th (i = 1 , 2 , . . . , N)

example ( x

i

, y

i

), if its probability is greater than the average value:

μ

iter

(x

i

, y

i

) > μ

iter

, it is considered as a mislabeled noise and its

noise label is set to −1 : φ

iter

(x

i

) = −1 ; otherwise, it is considered

as a non-noisy example, i.e., φ

iter

(x

i

) = 1 . The general procedure of

the NDF is formally demonstrated in Algorithm 2

Input : Training data set: D

tr

= { (x

1

, y

1

) , . . . , (x

N

, y

N

) };

Classification

results of classifier g

t

on training set D

tr

:

{ g

t

(x

i

) }

N

i =1

; Size of neighborhood: k .

Output : The detected noise labels of all the training

examples in the iterth iteration: { φ

iter

(x

i

) }

N

i =1

.

• Determination pro cess

1 for each example (x

i

, y

i

) in D

tr

do

2 Find its k nearest neighbors in D

tr

: neighbors (x

i

, y

i

) =

{ (x

ij

, y

ij

) }

k

j=1

, where ij ∈ { 1 , 2 , . . . , N} , ij = i , and the

Euclidean distance is used as the distance metric;

3 Calculate the probability of example (x

i

, y

i

) being a

mislabeled noise, i.e., the classification error rate of

classifier g

t

in its k nearest neighbors:

μ

iter

(x

i

, y

i

) =

1

k

k

j=1

I(g

t

(x

ij

) = y

ij

) ;

4 end

5 Calculate the average value over the obtained probabilities of

all the training examples: μ

iter

=

1

N

N

i =1

μ

iter

(x

i

, y

i

) ;

6 for each example (x

i

, y

i

) in D

tr

do

7 if μ

iter

(x

i

, y

i

) > μ

iter

then

8 Its noise label is set to −1: φ

iter

(x

i

) = −1 , put it into

the noisy example set NS: NS = NS ∪ { (x

i

, y

i

) } ;

9 end

10 else if μ

iter

(x

i

, y

i

) ≤ μ

iter

then

11 Its noise label is set to 1: φ

iter

(x

i

) = 1 , put it into the

non-noisy example set N N S: N N S = N N S ∪ { (x

i

, y

i

) } ;

12 end

13 end

Algorithm 2: The procedure of the noise-detection function

NDF .

3.2. Multi-class AdaBoost algorithm SAMME

Although there already exist many multi-class AdaBoost algo-

rithms, such as SAMME [21] , AdaBoost.Cost [27] , AdaBoost.M1 [28] ,

AdaBoost.MO [29] , AdaBoost.MH [30] , AdaBoost.MR [30] , Ad-

aBoost.HM [31] , etc., we choose Zhu et al.’s multi-class AdaBoost

algorithm SAMME (Stagewise Additive Modeling using a M ulti-

class Exponential loss function) [21] as the foundation of our

algorithm. This is due to that SAMME is a very effective and

popular multi-class AdaBoost algorithm (has been cited 309 times

since 2009) and can be directly applied to the multi-class classi-

fication case without reducing it to multiple two-class problems.

In this section, we first introduce the multi-class exponential loss

function of SAMME, then give its main steps.

3.2.1. Multi-class exponential loss function

In the multi-class classification scenario, the class label y

i

of

example ( x

i

, y

i

) in training set D

tr

= { (x

1

, y

1

) , . . . , (x

N

, y

N

) } ( x

i

∈

R

d

, y

i

∈ Y = { 1 , 2 , . . . , K} , i = 1 , 2 , . . . , N, K ≥ 3 is the number of

distinct class labels) is encoded by a K dimensional vector: Y

i

=

(Y

i 1

, Y

i 2

, . . . , Y

iK

) , where each element in Y

i

is defined as follows:

Y

ij

=

1 if y

i

= j,

−

1

K−1

if y

i

= j.

(1)

It is easy to see that the K elements in Y

i

satisfy: Y

i 1

+ Y

i 2

+ ···+

Y

iK

= 0 .

Definition 1 (The multi-class exponential loss function) . Using the

above encoding strategy, the multi-class exponential loss function