Supervised Hashing with Soft Constraints

Cong Leng, Jian Cheng, Jiaxiang Wu, Xi Zhang, Hanqing Lu

National Laboratory of Pattern Recognition

Institute of Automation, Chinese Academy of Sciences

Beijing, China

{cong.leng, jcheng, jiaxiang.wu, zhangxi, luhq}@nlpr.ia.ac.cn

ABSTRACT

Due to the ability to preserve semantic similarity in Ham-

ming space, supervised hashing has been extensively studied

recently. Most existing approaches encourage two dissimilar

samples to have maximum Hamming distance. This may

lead to an unexpected consequence that two unnecessarily

similar samples would have the same code if they are both

dissimilar with another sample. Besides, in existing method-

s, all labeled pairs are treated with equal importance with-

out considering the semantic gap, which is not conducive to

thoroughly leverage the supervised information. We present

a general framework for supervised hashing to address the

above two limitations. We do not toughly require a dissim-

ilar pair to have maximum Hamming distance. Instead, a

soft constraint which can be viewed as a regularization to

avoid over-fitting is utilized. Moreover, we impose differen-

t weights to different training pairs, and these weights can

be automatically adjusted in the learning process. Experi-

ments on two benchmarks show that the proposed method

can easily outperform other state-of-the-art methods.

Categories and Subject Descriptors

H.3.3 [Information Systems]: Information Search and Re-

trieval

Keywords

Supervised Hashing; Soft Constraints; Weights; Bo osting

1. INTRODUCTION

Hashing based approximate nearest neighbor (ANN) search

methods have attracted much attention recently. Hashing

methods map the two nearby points in the original space

to close binary codes in a compact Hamming space. This

enables very fast searching since Hamming distance can b e

efficiently calculated with XOR operation in modern CPU.

According to whether supervised information is utilized or

not in the training process, hashing methods can be divided

Permission to make digital or hard copies of all or part of this work for personal or

classroom use is granted without fee provided that copies are not made or distributed

for profit or commercial advantage and that copies bear this notice and the full cita-

tion on the first page. Copyrights for components of this work owned by others than

ACM must be honored. Abstracting with credit is permitted. To copy otherwise, or re-

publish, to post on servers or to redistribute to lists, requires prior specific permission

and/or a fee. Request permissions from permissions@acm.org.

CIKM’14, November 3–7, 2014, Shanghai, China.

Copyright 2014 ACM 978-1-4503-2598-1/14/11 ...$15.00.

http://dx.doi.org/10.1145/2661829.2661937.

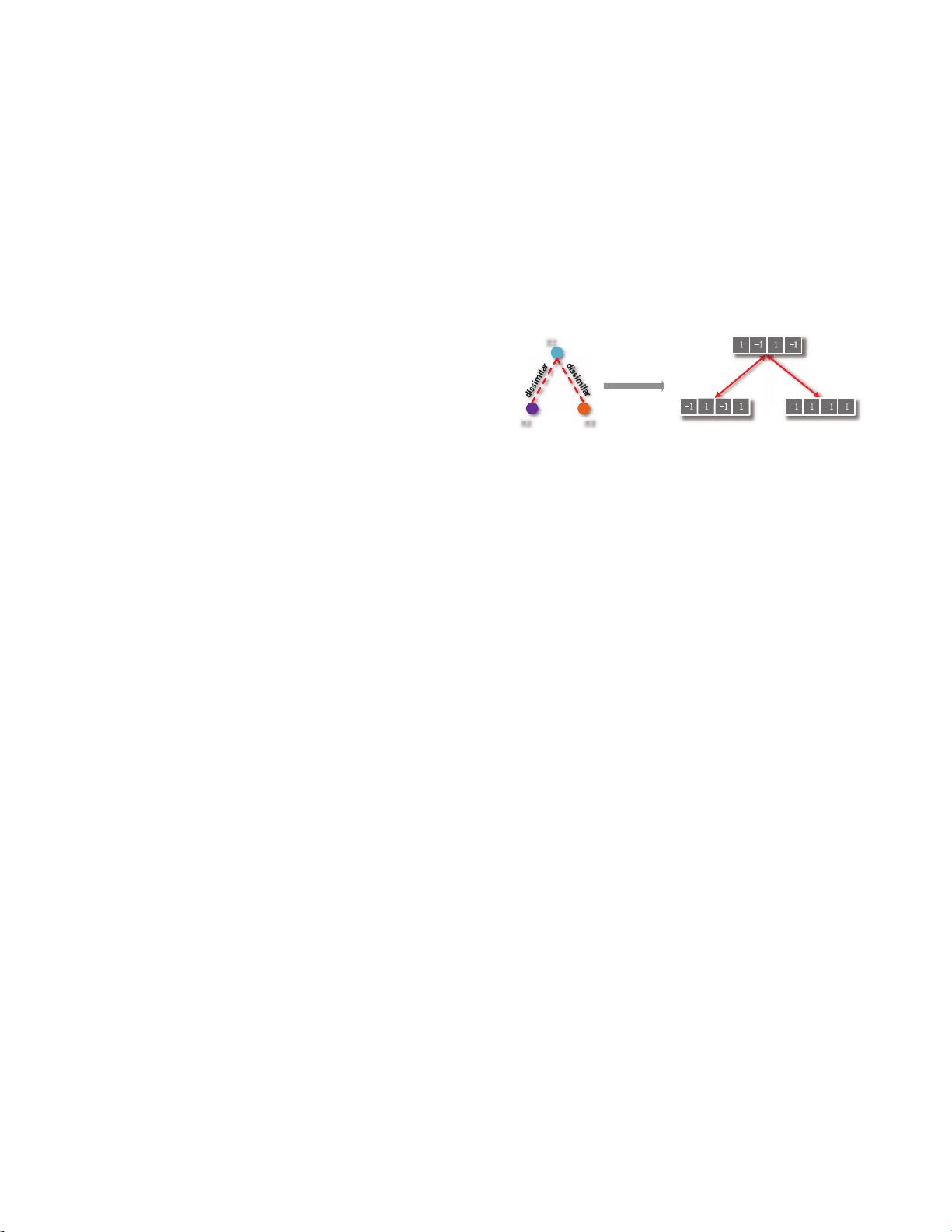

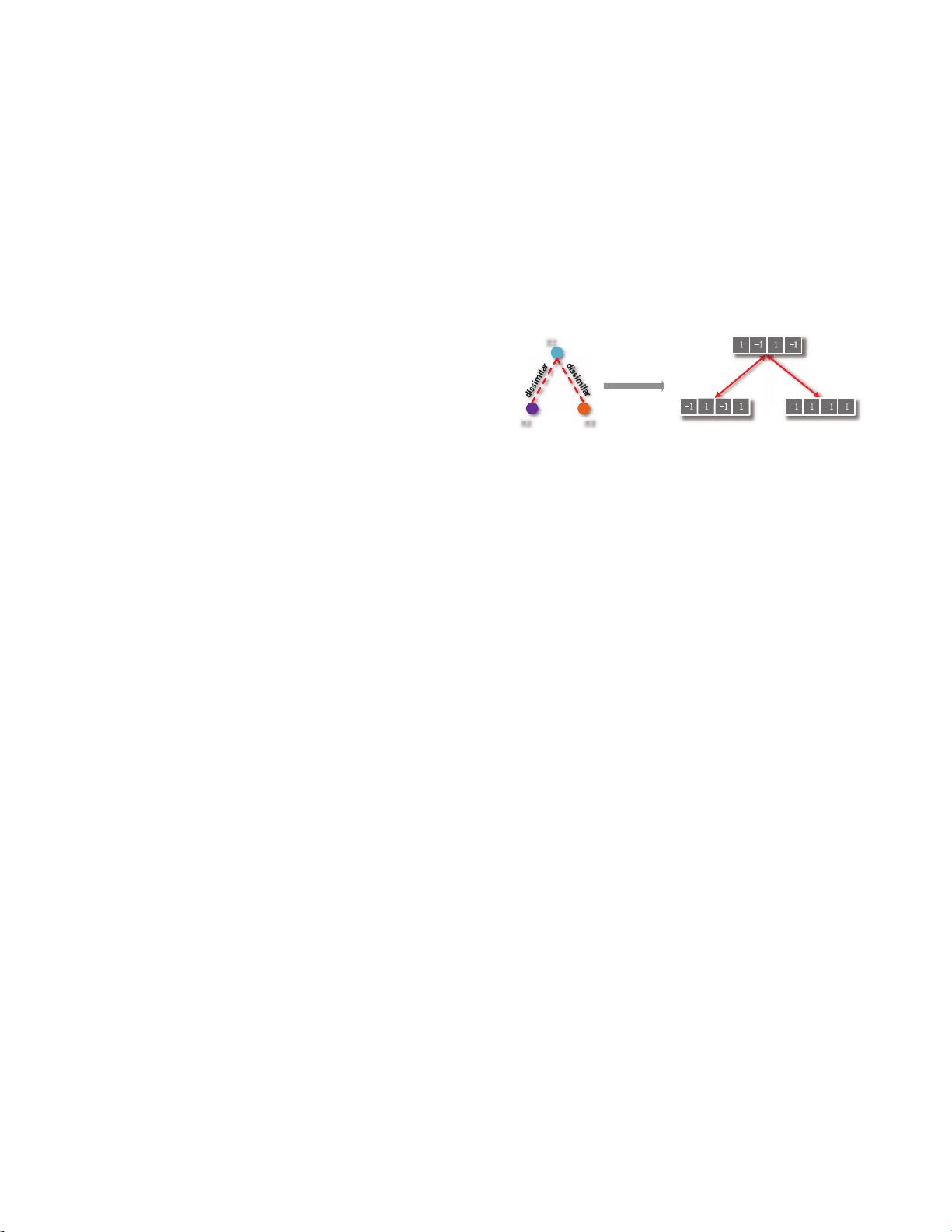

X1

X2 X3

(a) The labeled data

Hashing with

hard constraints

code of X1

code of X2 code of X3

max dis.: 4max dis.: 4

(b) Optimized hashing code

Figure 1: Paradox in traditional supervised Hashing

methods. Two unnecessarily similar data x

2

and x

3

will have the same code.

into unsupervised and supervised categories. In the unsu-

pervised setting, hashing methods such as Locality Sensitive

Hashing (LSH) [1] and Iterative Quantization (ITQ) [2] at-

tempt to preserve the data similarity defined in Euclidean

space, e.g., l

2

distance. However, this is not sufficient for

various practical applications such as image retrieval, where

semantically similar neighbors are preferred.

In order to construct efficient hash functions that pre-

serve the semantic similarity, supervised hashing methods

[3, 6, 5, 4, 7] have been extensively studied. The super-

vised information here is typically based on some pairwise

constraints, i.e., “A and B is similar” or “A and B is dis-

similar”, which is analogous to the “must link” and “cannot

link” constraints in metric learning [8]. Some representative

supervised hashing methods include Binary Reconstruction

Embedding (BRE) [3], Kernel Supervised Hashing (KSH) [5]

and Two Step Hashing (TSH) [4]. These sup ervised meth-

ods can be formally formulated with following objective [4]:

min

Φ

∑

(x

i

,x

j

)∈L

L (Φ(x

i

), Φ(x

j

); y

ij

) (1)

where Φ(x) ∈ {−1, 1}

r

is the r bits code of x. L(·) is a loss

function that measures how well the codes match the ground

truth y

ij

. Different algorithms corresponds to different loss

functions, for example, l

2

loss for BRE and KSH. Although

promising performance has been shown from these methods,

some limitations exist in them.

Inspired by metric learning, all these supervised meth-

ods attempt to learn codes whose Hamming distances are

minimized on similar pairs and simultaneously maximized

on dissimilar pairs. This principle is widely used in metric

learning and proved to be effective. However, metric learn-

ing executes in continuous real number space while hashing

executes in discrete Hamming space. Importantly, although

it makes sense in metric learning, we argue that maximiz-