three baseline methods (which use only one information source

or use a naive combination of the two sources) on real tick-by-

tick data.

The rest of this paper is organized as follows. Section 2 reviews

the literature on traditional approaches for stock market predic-

tion, and the formulation of MKL and its applications. Section 3

presents our proposed system and describes the design of

the experiments. Section 4 presents the experimental results and discussion. Section 5 gives our conclusions and directions for future work.

2. Related work and background

2.1. Traditional approaches

Some useful observations have been made in the finance domain. Ederington and Lee [8] observe that the standard deviation of five-minute returns always increases sharply on days when a government announcement on a financially relevant policy is released at 8:30 am. Tetlock [44] analyzes the content of the “Abreast of the Market” column in the Wall Street Journal, and finds that pessimistic words predict low stock returns. Tetlock et al. [45] analyze the tone of firm-specific news and find that it can predict firm-specific future earnings and returns.

Analysis of news articles has also been reported in the computer science literature. Following the approach of text mining on news

articles, Seo et al. [39] build a multi-agent system for intelligent

portfolio management, which can assess the risk associated with

companies by analyzing news articles. Yu et al. [52] propose a

four-stage Support Vector Machine (SVM) based on the multi-

agent ensemble learning approach for credit risk evaluation. Fung

et al. [13] classify news articles into different categories and

predict the directional impact of newly released news articles.

The AZFinText system, built by Schumaker and Chen [37], makes

not only directional prediction but also quantified estimation of

prices.

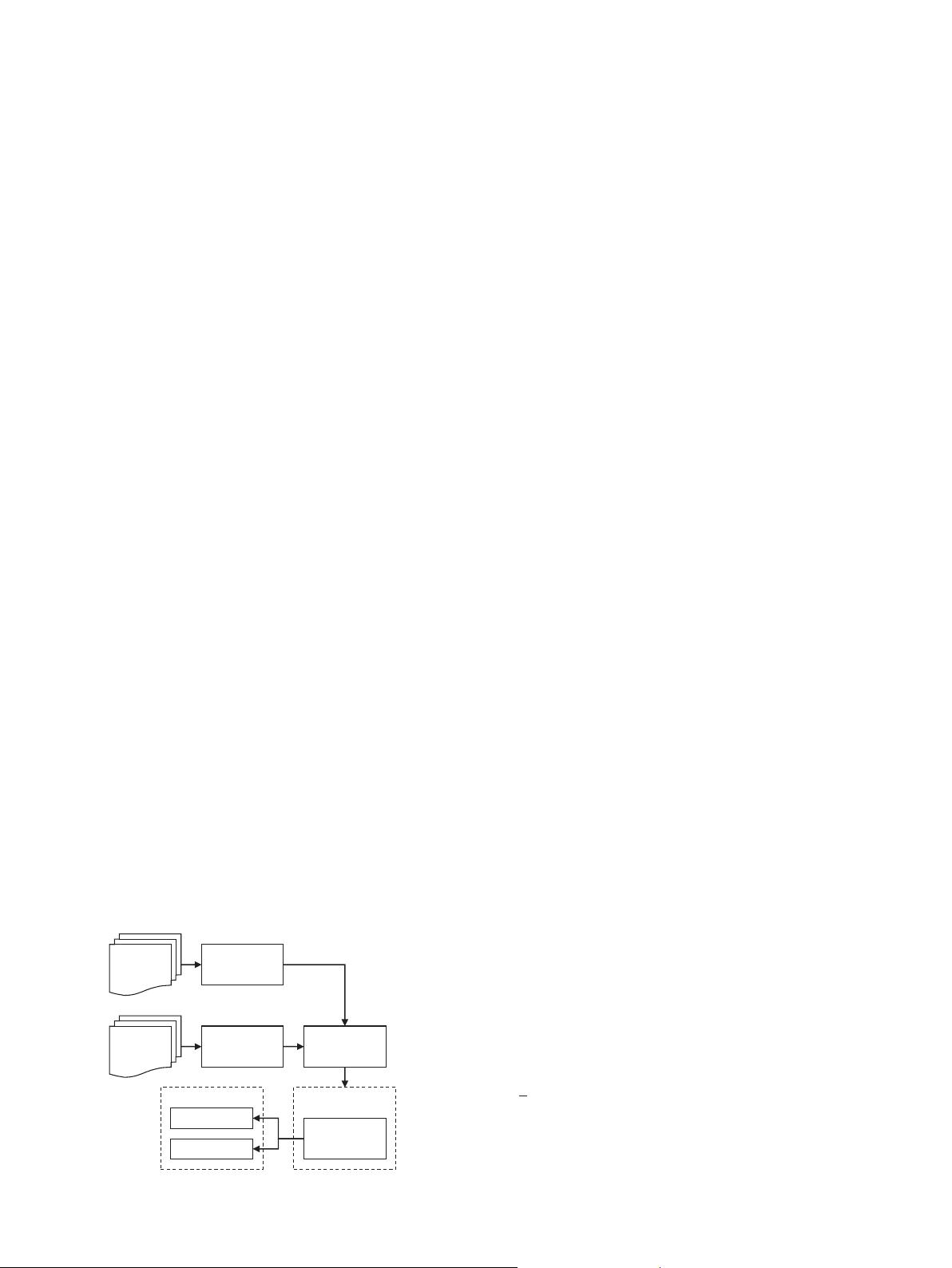

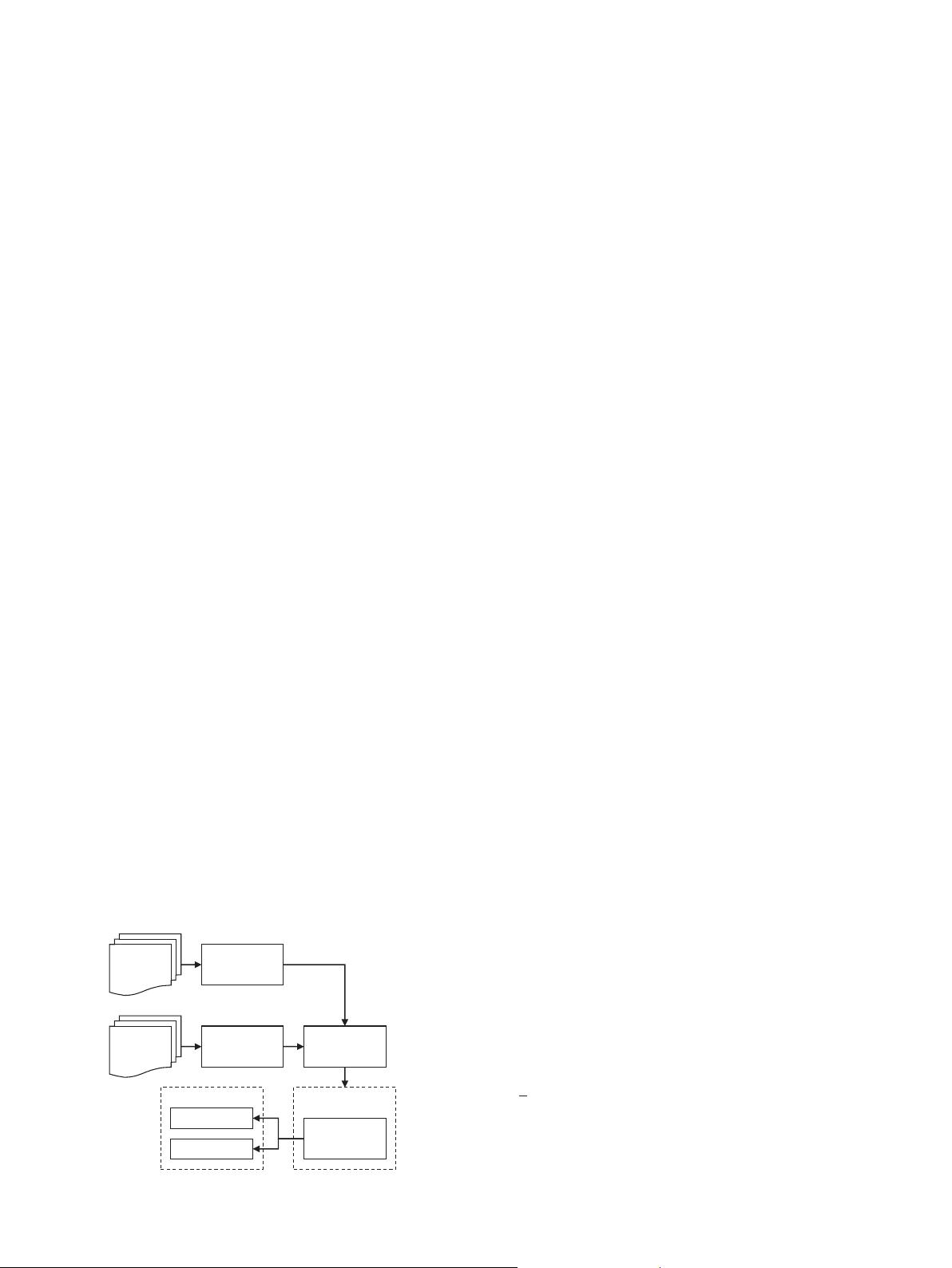

As illustrated in Fig. 2, the processing pipeline of the approaches that use the news information source can be summarized as follows:

1. Representation of news articles. A piece of news is usually

represented as a term vector by using the Vector Space Model

after the removal of stop words and feature selection [11,21,25,

26,46,48]. Sentiment analysis is occasionally employed to

analyze news at a semantic level [15,24,30,31].

2. Labeling. Each piece of news is then assigned a label. In a classification model, pieces of news are sorted by their time stamps and labeled with nominal values, such as positive/neutral/negative. In regression approaches, news is simply labeled with a continuous value, such as daily stock price return. In this paper, we use short-term price return for intra-day prediction.

3. Model training and evaluation. Machine learning models are employed in this step. Besides evaluating models with standard metrics, such as Precision, Recall, and Mean Square Error (MSE), some researchers conduct preliminary simulations [12,36], in which strategies trade stocks in a virtual market using historical real data or data generated by simulated agents. Return rate is the measure of trading performance.

However, how to design a functional trading strategy that could

make full use of the predictions of a model (not strategies that

simply buy-and-hold) is beyond the scope of this paper.
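The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation of any cited system: the stop-word list, the toy headlines and prices, the 0.3% labeling threshold, and all function names are hypothetical, and the model-training part of step 3 is omitted so that only the evaluation metrics are shown.

```python
from collections import Counter

# Hypothetical stop-word list; real systems use a much larger one.
STOP_WORDS = {"a", "an", "and", "in", "of", "on", "the", "to"}


def tokenize(text):
    """Lowercase the text, strip surrounding punctuation, drop stop words."""
    words = (w.strip(".,;:!?\"'()").lower() for w in text.split())
    return [w for w in words if w and w not in STOP_WORDS]


def build_vocabulary(documents):
    """Assign a column index to every distinct term (sorted for determinism)."""
    terms = sorted({term for doc in documents for term in tokenize(doc)})
    return {term: index for index, term in enumerate(terms)}


def term_vector(text, vocabulary):
    """Step 1: represent one article as a raw term-frequency vector."""
    counts = Counter(tokenize(text))
    return [counts[term] for term in vocabulary]


def price_return(price_at_release, price_after):
    """Relative price change over the (assumed) prediction horizon."""
    return (price_after - price_at_release) / price_at_release


def nominal_label(ret, threshold=0.003):
    """Step 2 (classification): map a return to positive/neutral/negative.

    The 0.3% threshold is an illustrative assumption.
    """
    if ret > threshold:
        return "positive"
    if ret < -threshold:
        return "negative"
    return "neutral"


def precision_recall(y_true, y_pred, target):
    """Step 3: precision and recall for one target class."""
    tp = sum(t == target and p == target for t, p in zip(y_true, y_pred))
    predicted = sum(p == target for p in y_pred)
    actual = sum(t == target for t in y_true)
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


def mean_squared_error(y_true, y_pred):
    """Step 3: MSE for regression-style (continuous) labels."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy data: (headline, price at release, price a short time later).
news = [
    ("Profit rises on strong sales", 10.0, 10.1),
    ("Shares fall on profit warning", 20.0, 19.8),
    ("Board meeting scheduled", 5.0, 5.0),
]
vocabulary = build_vocabulary(headline for headline, _, _ in news)
features = [term_vector(headline, vocabulary) for headline, _, _ in news]
returns = [price_return(p0, p1) for _, p0, p1 in news]
labels = [nominal_label(r) for r in returns]
print(labels)
```

In a regression setting, `returns` would serve directly as the continuous labels, and `mean_squared_error` would replace the precision and recall computation.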

Besides the work on news, there are also many published

papers on mining signals from stock prices. Guo et al. [17] focus on

the architecture of the neural network and develop a sparsely connected network model, which achieves better performance than traditional neural networks on three data sets (Microata, Finance Data and Telecommunication Data). To forecast changes in a long-term index, Hung and Lin [20] develop

an intuitionistic fuzzy least-squares support vector machine with

genetic algorithms (IFLS-SVRGAs). Lin et al. [29] recently apply

FLS-SVR-GA to forecast the seasonal revenue of a company. Gestel

et al. [47] use a Bayesian evidence framework and apply it to least-

squares support vector machine regression for price and volatility

prediction. Tay et al. [42,43] and Cao et al. [3] modify the SVM

objective function and make C an adaptive parameter for non-

stationary time series. For predicting index prices, Kim [23]

concludes that the performance of SVM is better than that of

back-propagation neural networks. Applying SVM to predict S&P

500 daily prices, Cao et al. [4,5] also find that SVM performs better in terms of normalized mean square error and mean absolute error. Huang et al. [19] predict the price directional movement of the NIKKEI 225 index using SVM. After

comparing SVM with linear discriminant analysis, quadratic dis-

criminant analysis, and back-propagation neural networks, they

reach the same conclusion. To summarize, the steps of the approaches in this category are: (1) Preprocessing raw prices. To make historical prices more indicative, absolute price levels are sometimes translated into price indicators. (2) Pattern classification. Patterns of prices are then classified (or regressed)

by models into predetermined categories (or estimated values).
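As a rough illustration of the preprocessing step, the sketch below turns a series of absolute price levels into scale-free indicators. The choice of indicators (rate of change and relative distance from a moving average), the window and lag values, and the function names are assumptions made for this example; the cited papers each use their own indicator sets.

```python
def simple_returns(prices):
    """One-step relative price changes."""
    return [(b - a) / a for a, b in zip(prices, prices[1:])]


def moving_average(prices, window):
    """Trailing moving average; the first value covers prices[0:window]."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        sum(prices[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(prices))
    ]


def indicator_features(prices, window=3, lag=2):
    """Build one (rate_of_change, bias) pair per usable time step.

    rate_of_change: relative change against the price `lag` steps earlier.
    bias: relative distance of the price from its trailing moving average.
    Both are independent of the absolute price level.
    """
    start = max(window - 1, lag)  # first index with enough history
    rows = []
    for t in range(start, len(prices)):
        ma = sum(prices[t - window + 1 : t + 1]) / window
        roc = (prices[t] - prices[t - lag]) / prices[t - lag]
        bias = (prices[t] - ma) / ma
        rows.append((roc, bias))
    return rows


# Toy price series (illustrative values only).
prices = [10.0, 11.0, 12.0, 11.0, 10.0]
for roc, bias in indicator_features(prices):
    print(f"rate of change = {roc:+.4f}, bias = {bias:+.4f}")
```

Each resulting row can then be passed to a classifier or regressor as the pattern to be classified in step (2).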

Note that previous work made use of only one type of information source, i.e., either financial news or historical prices. In contrast, our proposed approach uses both information sources, which are integrated for market prediction by MKL.

2.2. Multiple kernel learning

To give a deeper understanding of the problem and to allow the derived decision function to be interpreted in a simple way, this section describes the MKL formulation used in our system.

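The objective function of a single kernel SVR is

$$
\min_{w,b}\ \frac{1}{2}\langle w, w\rangle + C\sum_{i=1}^{N}\left(\xi_i + \xi_i^{*}\right), \quad \text{s.t.}\quad \left(\langle w, \phi(x_i)\rangle + b\right) - y_i \le \varepsilon + \xi_i, \quad y_i - \left(\langle w, \phi(x_i)\rangle + b\right) \le \varepsilon + \xi_i^{*}, \quad \xi_i,\ \xi_i^{*} \ge 0,\quad i = 1, \ldots, N, \tag{3}
$$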

where $N$ is the number of training instances, and $C$ is a penalty term which balances between training error and model complexity. $\xi_i^{(*)}$ ($\xi_i^{(*)}$ refer to $\xi_i$ and $\xi_i^{*}$) are slack variables, where $\xi_i$ means

Fig. 2. Architecture of traditional approaches. (Diagram blocks: news articles and historical prices; textual processing and time series processing; news labeling; model training by classification or regression; evaluation by accuracy and closeness.)

X. Li et al. / Neurocomputing 142 (2014) 228–238