Cogn Comput

events when modeling each event. This may lead to high computation cost, especially for long sequences. Moreover, extensively modeling all the previous events may also introduce redundancy and noise. Ma et al. [15] proposed a two-step attention mechanism to capture both target-level and sentence-level attention for sentiment analysis; still, all words in the sentence are considered in their model.
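The cost argument can be made concrete with a simple count. The sketch below compares the number of attention scores computed when every event attends to all earlier events (as in implicit dependence–based RTPP models) with the number needed when each event attends to at most k relevant predecessors. The cap k and both function names are hypothetical, chosen for illustration only; they are not part of any cited model.

```python
def full_history_pairs(n: int) -> int:
    """Attention scores needed when every event attends to all earlier events."""
    # Event i (1-indexed) has i - 1 predecessors: the sum is n(n - 1) / 2, quadratic in n.
    return n * (n - 1) // 2


def restricted_pairs(n: int, k: int) -> int:
    """Scores needed when every event attends to at most k earlier events.

    k is a hypothetical cap on the number of "relevant" predecessors.
    """
    return sum(min(i - 1, k) for i in range(1, n + 1))


if __name__ == "__main__":
    for n in (100, 1_000, 10_000):
        print(f"n={n}: full={full_history_pairs(n)}, k=5 -> {restricted_pairs(n, 5)}")
```

For n = 100 the full-history count is 4,950 scores versus at most 485 with k = 5, and the gap widens quadratically as sequences grow.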

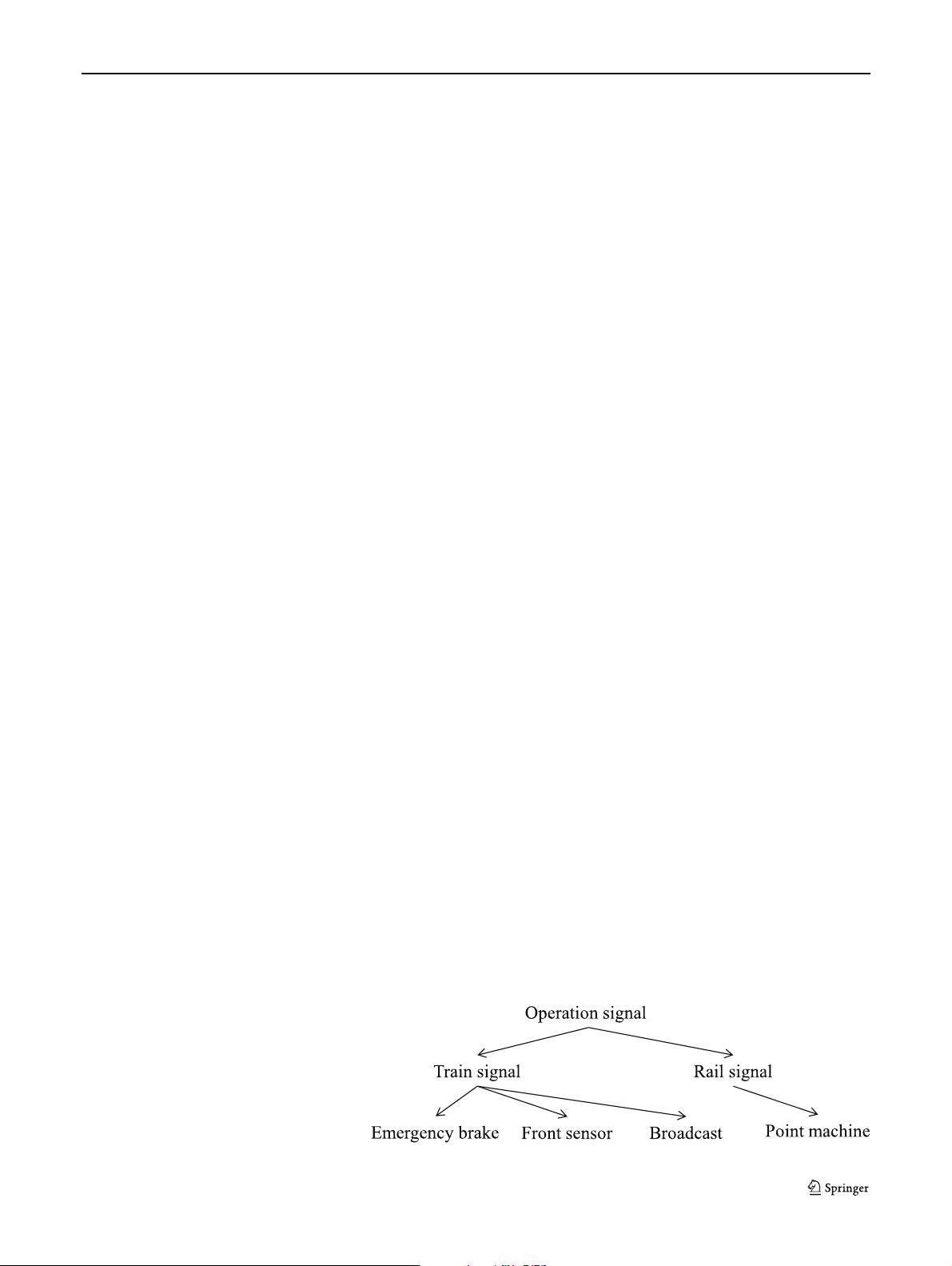

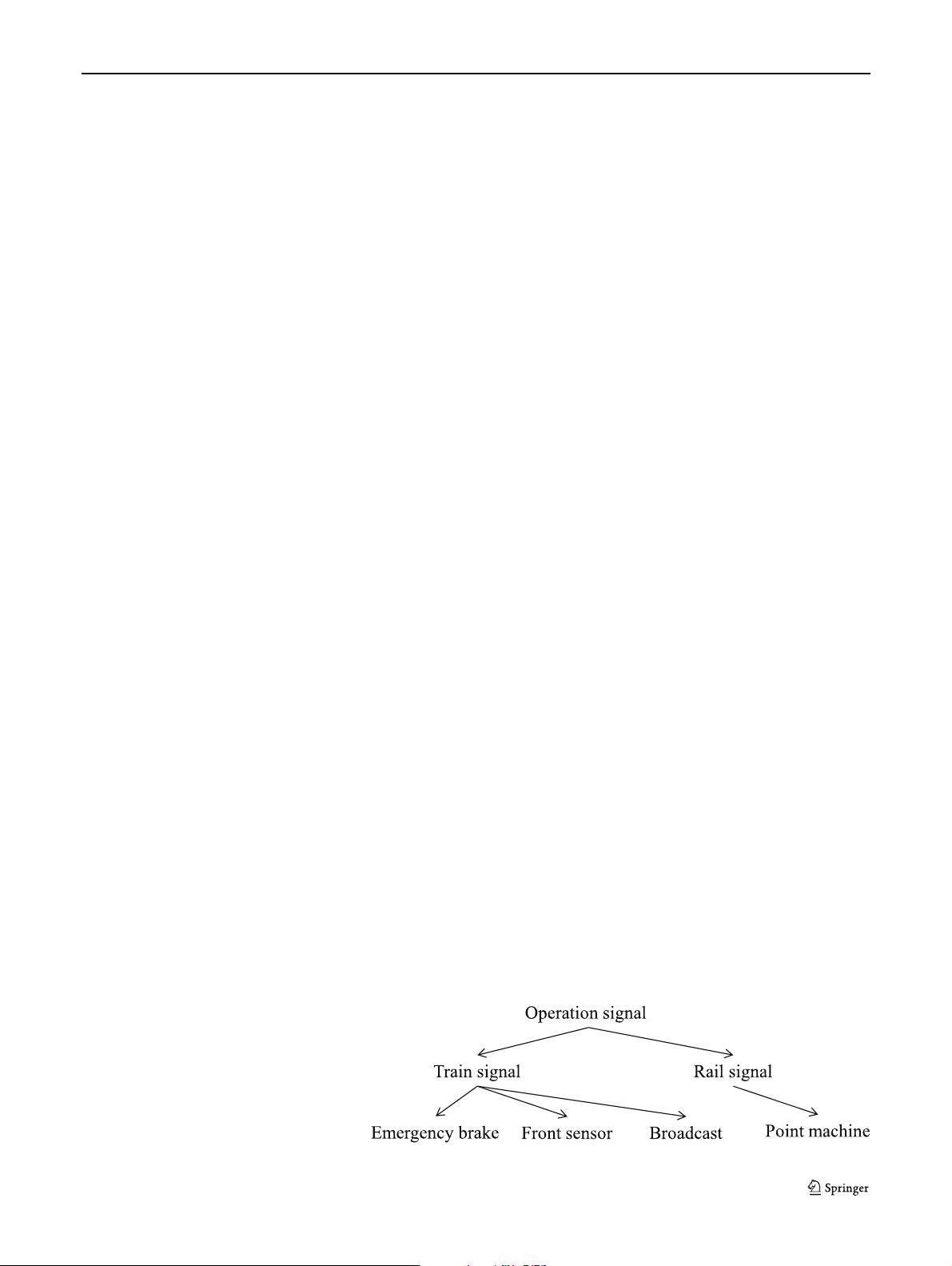

Secondly, temporal dependence–based RTPP models neglect the explicit ontology dependencies that may exist among the events in a sequence. Take the ATS log as an example: its events (i.e., system alarms) naturally form a tree ontology. Figure 3 shows a toy example. These explicit dependencies not only provide additional domain knowledge about the event sequences, but also help remove unnecessary implicit dependency modeling in an RTPP model like CYAN-RNN. For example, “train broadcast error” should not be considered when modeling “point machine error” because, as the ontology dependency structure indicates, their dependency is very weak. In Fig. 2c, $h_5$ now depends on the aggregation of $\{h_1, h_2, h_3\}$ but not on $h_4$, because $e_4$ and $e_5$ have no direct explicit dependence.
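A minimal sketch of the aggregation in Fig. 2c, assuming mean pooling as the aggregation operator (the model introduced below replaces uniform weights with learned attention). The function name, the zero-vector fallback, and the toy hidden states are hypothetical; the point is only that $h_5$ draws on the states of $e_1$, $e_2$, $e_3$ and skips $e_4$, whereas a purely temporal RNN would use $h_4$.

```python
import numpy as np


def aggregate_dependent_states(hidden: np.ndarray, deps: list) -> np.ndarray:
    """Mean-pool the hidden states of earlier events with explicit dependence.

    hidden: (num_events, d) array whose row j holds h_{j+1}.
    deps:   0-based row indices of the explicitly dependent earlier events.
    """
    if not deps:
        # Assumption: with no explicit dependency, return a zero context vector.
        return np.zeros(hidden.shape[1])
    return hidden[deps].mean(axis=0)


# Toy hidden states h_1..h_4 with d = 3; the values are arbitrary.
H = np.arange(12, dtype=float).reshape(4, 3)
context_e5 = aggregate_dependent_states(H, [0, 1, 2])  # {h_1, h_2, h_3}, not h_4
temporal_e5 = H[3]                                     # a temporal-only RNN would use h_4
```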

Some existing models (e.g., Topo-LSTM [4]) utilize explicit dependence for event prediction. However, they do not employ a TPP framework and thus cannot predict the time of the next event. Moreover, they model dependencies with only a single relation type, whereas in reality relations can be of multiple types. For instance, the relations of event dependencies in the ATS log can be parent (e.g., train broadcast in Fig. 3), child (e.g., front sensor–train), or sibling (e.g., front sensor–emergency brake). To the best of our knowledge, none of the existing RTPP models has studied the problem of modeling event sequences with explicit ontology dependencies, let alone settings with multi-relation dependencies.
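The three relation types can be read off a tree ontology. The sketch below assumes that an earlier event is a parent (or child) of the current event when its type is the parent (or a child) of the current type, and a sibling when the two types share a parent; repeated occurrences of the same parent type are one way two events such as $e_1$ and $e_2$ could both be parents. The ontology `PARENT`, its type labels, and both functions are hypothetical and do not reproduce the ATS tree of Fig. 3.

```python
from typing import Dict, List, Optional

# Hypothetical toy ontology: event type -> parent type (None marks the root).
PARENT: Dict[str, Optional[str]] = {
    "root": None,
    "A": "root",
    "B": "root",
    "A1": "A",
    "A2": "A",
}


def relation(prev: str, cur: str, parent: Dict[str, Optional[str]]) -> Optional[str]:
    """Relation of an earlier event type `prev` to the current event type `cur`."""
    if parent.get(cur) == prev:
        return "parent"   # prev is the parent type of cur
    if parent.get(prev) == cur:
        return "child"    # prev is a child type of cur
    if prev != cur and parent.get(prev) is not None and parent.get(prev) == parent.get(cur):
        return "sibling"  # different types under the same parent
    return None           # no direct explicit dependence (includes same-type repeats)


def group_history(types: List[str], i: int,
                  parent: Dict[str, Optional[str]]) -> Dict[str, List[int]]:
    """Group the 0-based indices of events before position i by their relation to event i."""
    groups: Dict[str, List[int]] = {"parent": [], "child": [], "sibling": []}
    for j in range(i):
        rel = relation(types[j], types[i], parent)
        if rel is not None:
            groups[rel].append(j)
    return groups


# e_1..e_5 mirroring Fig. 2c: two parents, one sibling, and an unrelated e_4.
print(group_history(["A", "A", "A2", "B", "A1"], 4, PARENT))
# {'parent': [0, 1], 'child': [], 'sibling': [2]}
```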

In view of these limitations, we design a novel multi-relation structure RNN with a hierarchical attention mechanism to embed the historical event sequence together with its explicit ontology dependencies. We then propose a multi-relation structure RNN–based recurrent marked temporal point process model (MRS-RMTPP), which conditions its density function on the learned event sequence embedding to predict the marker and time of the next event. As illustrated in Fig. 2d, to predict $(e_7, t_7)$, we learn a hidden state at every time stamp. For each event, we leverage the embeddings of all “relevant” events that happened before it. We consider both temporal dependence ($h_4 \rightarrow h_5$) and ontology dependencies with multiple relation types (parent: $h_1 \rightarrow h_5$, $h_2 \rightarrow h_5$; sibling: $h_3 \rightarrow h_5$). Note that the impacts of parent events and sibling events may differ. Moreover, even $e_1$ and $e_2$, which are both parent events, may influence the occurrence of $e_5$ to different degrees. Inspired by this, we design a hierarchical attention mechanism that automatically learns the impacts of different relations (e.g., $\alpha_p$, $\alpha_s$, and $\alpha_t$ for the relations parent, sibling, and temporal predecessor in Fig. 2d) as well as the impacts of different events within one relation (e.g., $\alpha_1$ and $\alpha_2$ for the relation parent in Fig. 2d).

The advantages of MRS-RMTPP are two-fold. Firstly, our model utilizes explicit ontology dependencies to capture relations among historical events, which makes it more expressive than temporal dependence–based RTPP models and more efficient than implicit dependence–based RTPP models. Secondly, our model exploits different types of relations in the ontology dependencies, and the proposed hierarchical attention mechanism can capture impacts at different levels (i.e., from different relations and from different events within one relation).
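The two levels of attention can be sketched as follows: event-level weights are computed within each relation group and used to summarize it, then relation-level weights combine the group summaries. This illustrates the idea under stated assumptions and is not the paper's formulation: scores are plain dot products with a query vector standing in for the current event's embedding, with no learned projections, and the relation names simply mirror Fig. 2d.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    z = np.exp(x - x.max())  # subtract the max for numerical stability
    return z / z.sum()


def hierarchical_attention(query: np.ndarray, groups: dict):
    """Two-level attention over relation groups (dot-product scoring is an assumption).

    query:  (d,) embedding of the current event.
    groups: relation name -> (k_r, d) array of hidden states in that relation.
    Returns the context vector, relation-level weights, and event-level weights.
    """
    names = [r for r, h in groups.items() if len(h) > 0]
    if not names:
        return np.zeros_like(query), {}, {}
    summaries, event_w = [], {}
    for r in names:
        h = groups[r]
        a = softmax(h @ query)      # event level, e.g. alpha_1, alpha_2 within "parent"
        event_w[r] = a
        summaries.append(a @ h)     # weighted summary of relation r
    S = np.stack(summaries)         # (R, d) matrix of relation summaries
    beta = softmax(S @ query)       # relation level, e.g. alpha_p, alpha_s, alpha_t
    return beta @ S, dict(zip(names, beta)), event_w


groups = {
    "parent": np.array([[1.0, 0.0], [3.0, 0.0]]),  # h_1, h_2 (toy values)
    "sibling": np.array([[0.0, 2.0]]),             # h_3
    "temporal": np.array([[0.0, 4.0]]),            # h_4
}
ctx, rel_w, ev_w = hierarchical_attention(np.zeros(2), groups)
```

With a zero query every weight is uniform, so the parent group averages its two states and the three relation summaries are weighted equally; a learned scoring function would instead allow, for example, $\alpha_1 \neq \alpha_2$.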

We summarize our major contributions as follows.

– We design a novel multi-relation structure RNN, which embeds event sequences together with their event markers, event times, and the explicit ontology dependencies among events.

– We propose a hierarchical attention mechanism to distinguish the impacts of historical events within each relation as well as the impacts of different relations.

– We propose an MRS-RMTPP model whose density function is conditioned on the designed multi-relation structure RNN to predict the marker and time of the next event.

– Our evaluation results on three real-world datasets show that our model outperforms contemporary baselines by 3.8 to 24.4% in event marker prediction and by 1.9 to 38.6% in event time prediction.
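The contributions refer to a density function conditioned on the structure-RNN embedding. As a sketch, assuming MRS-RMTPP keeps the conditional intensity of the original RMTPP (Du et al., KDD 2016), $\lambda^*(t) = \exp(v^\top h_j + w(t - t_j) + b)$, and only changes how the history embedding $h_j$ is computed, the intensity, the closed-form density, and a numerical estimate of the expected next inter-event time can be written as below. Here `hv` stands for the scalar $v^\top h_j$; all function names are hypothetical.

```python
import math


def rmtpp_intensity(dt: float, hv: float, w: float, b: float) -> float:
    """lambda*(t) = exp(v^T h_j + w (t - t_j) + b), with hv = v^T h_j and dt = t - t_j."""
    return math.exp(hv + w * dt + b)


def rmtpp_density(dt: float, hv: float, w: float, b: float) -> float:
    """f*(t) = lambda*(t) * exp(-integral_0^dt lambda*(s) ds), closed form for w != 0."""
    base = hv + b
    return math.exp(base + w * dt + (math.exp(base) - math.exp(base + w * dt)) / w)


def expected_next_gap(hv: float, w: float, b: float,
                      horizon: float = 50.0, steps: int = 100_000) -> float:
    """Approximate E[t_{j+1} - t_j] = integral_0^inf s f*(s) ds with the trapezoid rule.

    Assumes w > 0 (so the density vanishes within the horizon) and w * horizon
    small enough that math.exp does not overflow.
    """
    ds = horizon / steps
    total = 0.0
    for k in range(steps + 1):
        s = k * ds
        weight = 0.5 if k in (0, steps) else 1.0  # trapezoid end-point weights
        total += weight * s * rmtpp_density(s, hv, w, b)
    return total * ds
```

The closed form follows from $\int_0^{\Delta} \exp(v^\top h_j + ws + b)\,ds = \frac{1}{w}\left(e^{v^\top h_j + w\Delta + b} - e^{v^\top h_j + b}\right)$, which is why the density needs $w \neq 0$.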

Related Work

Before introducing our method, we review representative studies in two relevant fields: structure RNNs and temporal point processes.

Fig. 3 An example of ontology dependency structure in ATS log