use of HTM to construct concurrent search tree structures.

Here, we use an HTM-based B+Tree from DBX [32], an

in-memory database, as an example. A B+Tree is a B-

Tree in which internal nodes only store keys, and only

leaves are associated with values [3]. The HTM-B+Tree

adopts HTM regions to protect operations of the B+Tree

such as get, put, and delete. This design was later adopted

and shown to be effective in other distributed in-memory

databases [10, 34]. Since most HTM-B+Tree operations

share the major process of accessing B+Tree, here we use

the put operation as an example to illustrate the access

algorithm of HTM-B+Tree in Algorithm 1, which comprises

the following steps. For brevity, we omit the structural

changes and rebalance operations.

(1) Traversing the internal nodes (Lines 6-8). In this

stage, the request traverses tree edges from the root to the

target leaf node; (2) Traversing the leaf nodes (Lines 10-15).

The request first detects if there are duplicate keys in the

target leaf. If so, the put operation changes into an update;

otherwise it will insert a new record; (3) Propagating splits

upwards (Lines 17-19). For a put operation, if the target

leaf node is already full, then insertion triggers splits, and

propagates the split upwards until encountering an internal

node with empty slots.

The three stages are included in a monolithic HTM region

marked by xbegin and xend primitives; such a coarse-grained

HTM region eliminates the complexity of maintaining fine-

grained locks and makes it easy to reason about correctness.

As a result, it was shown to have much better performance

compared to a state-of-the-art B+Tree (i.e., Masstree [20])

under low to modest contention [32].

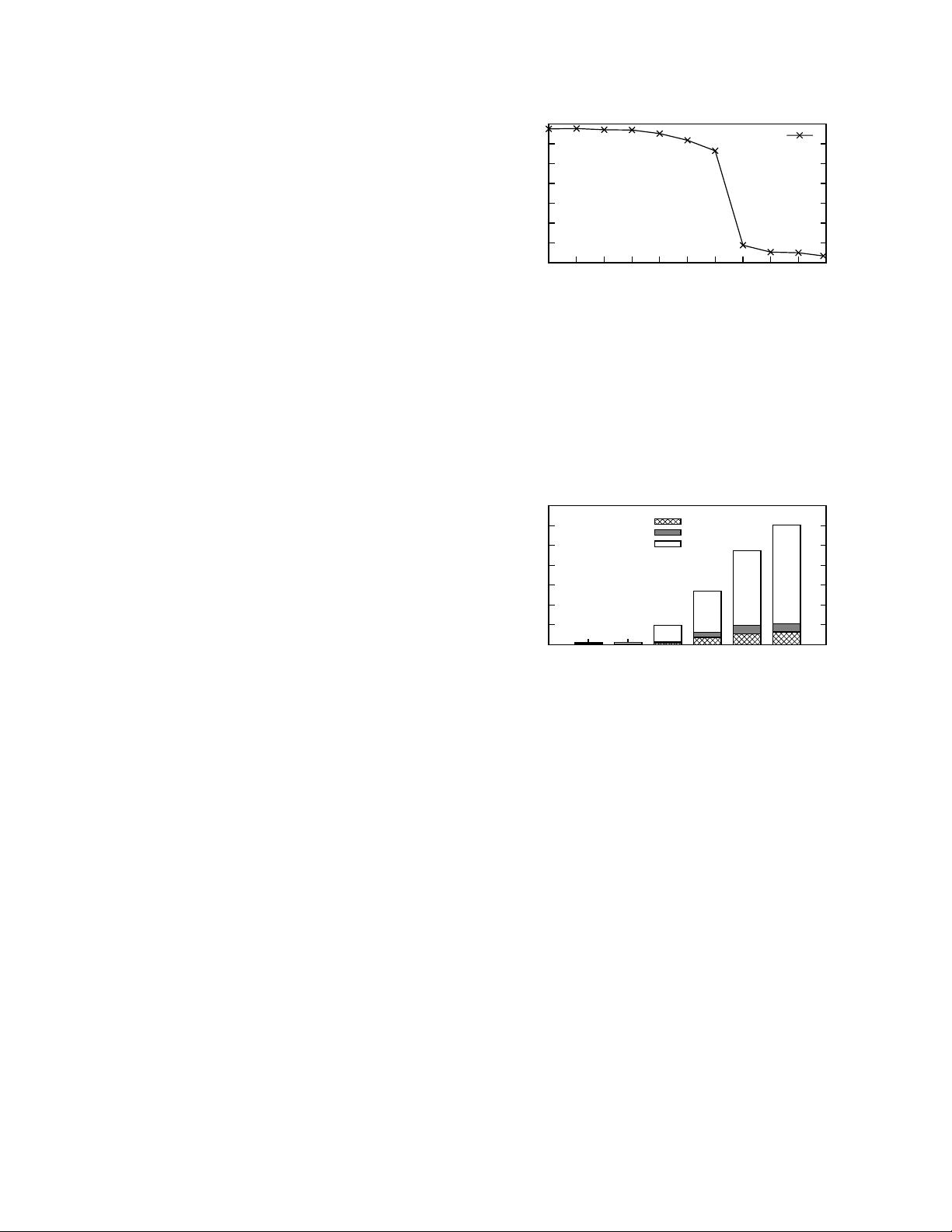

2.3 Issues under High Contention

While the HTM-based concurrent tree structure has high

performance under low and modest contention, its perfor-

mance may collapse under high contention. To illustrate

this, we evaluate the throughput of HTM-based B+Tree

using the YCSB benchmark with the Zipfian input distribu-

tion [17, 25]. We adjust the skew coefficient ✓ in the Zipfian

distribution to simulate different levels of contention. We

test it on a 20-core platform with Intel’s TSX [12] support.

All the performance results are collected using 16 threads

(a few cores are reserved for controlling threads). Threads

are distributed equally on two sockets (detailed experimental

setup in section 5.1).

As shown in Figure 1, with low contention rate (i.e.,

skew coefficient ✓<0.6), the HTM-based B+Tree achieves

high and stable performance. However, when the contention

rate increases (e.g., ✓>0.6), performance of an HTM-

based B+Tree drops sharply. When ✓ = 0.9, the performance

decreases to lower than 3 million ops/s. To understand the

reasons behind the performance collapse, we collect the

number of HTM aborts. Since adding performance counters

to each HTM region severely hinders the overall throughput,

here we set performance counters in every 10 operations, so

0

5

10

15

20

25

30

35

0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1

Throughput (Million ops/sec)

Skew Coefficient (θ)

HTM-B+Tree

Figure 1: Performance under different contention rates.

that the performance with HTM counters deviates little from

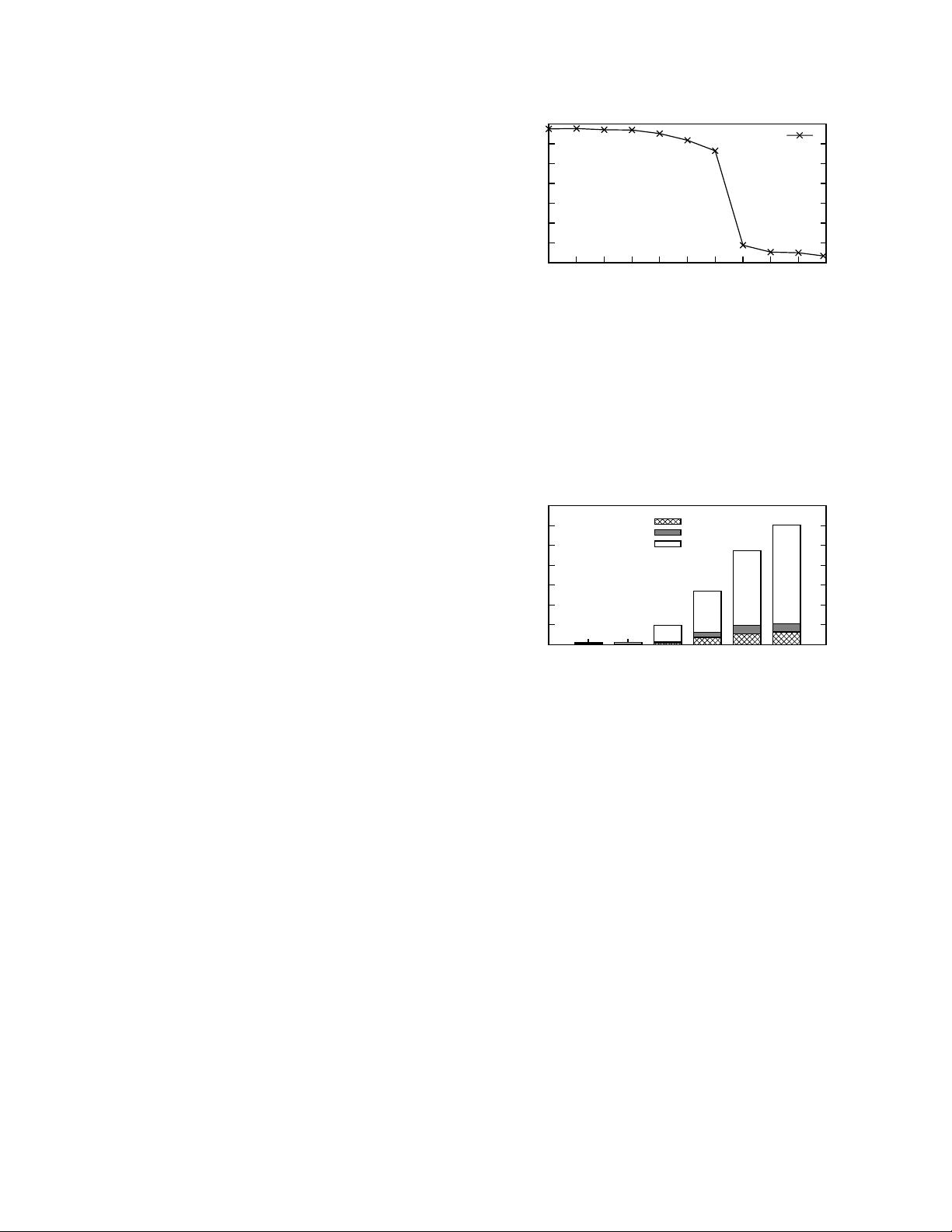

that without counters. As shown in Figure 2, the HTM abort

rate increases sharply with the contention rate; the HTM

abort rate for ✓ = 0.9 is around 47X higher than that for

✓ = 0.5. The collected CPU cycles also show that frequent

HTM aborts and retries waste more than 94% of the total

CPU cycles when ✓ = 0.9.

0

10

20

30

40

50

60

70

0.5 0.6 0.7 0.8 0.9 0.99

HTM Aborts per Operation

Skew Coefficient (θ)

Same Record

Meta-Data

Different Records

Figure 2: HTM aborts incurred by different reasons.

To understand the underlying reasons for collapsed per-

formance under high contention, we perform a detailed

analysis and uncover three main sources of aborts.

• High retry cost due to monolithic transactions. While

using a monolithic transaction for a critical section

provides consistency with trivial effort, it also causes

increased abort rates. Even worse, a retry from a leaf

node would waste a lot of useful work, causing high retry

cost. Our analysis finds that the distribution of conflicts is

non-uniform in the B+Tree: more than 90% of conflicts

occur in the leaf level. In this case, a conflict in leaf nodes

will abort the entire tree traversal from root to leaf, even

though there is no conflict in internal nodes.

• False conflicts. False Conflicts are conflicts incurred

by requests accessing different records. False conflicts

stem from two major reasons. The first one is cache line

sharing of consecutive records. B+Tree arranges keys

stored in a node in a consecutive manner to provide an

ordered store. However, such data layout causes severe

conflicts under high contention. Since HTM detects

conflicts at cache line granularity, concurrently accessing

data in the same cache line would result in increased

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功